IGP Power Consumption - 780G, GF8200, and G35

by Gary Key on April 18, 2008 2:00 AM EST - Posted in CPUs

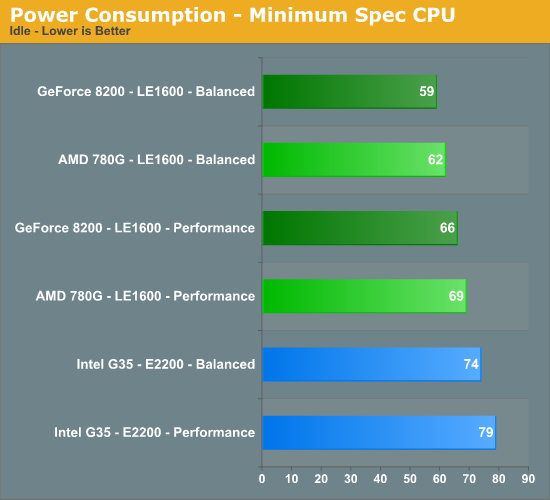

Idle Tests

We enabled the power management capabilities of each chipset in the BIOS and set our voltage selections to auto except for memory. We set memory to 1.90V to ensure stability at our timings. The boards would default to 1.80V or 1.85V, but we found 1.90V necessary for absolute stability in our configurations at the rated 4-4-4-12 timings.

On the two AMD boards, this resulted in almost identical settings. The only exception was chipset voltages, and even those differed only slightly. Overall, each of the CPUs hovered around 1.250V, and all power management options functioned perfectly on these particular boards. We then set Vista to use either the Performance or Balanced profile, depending on test requirements.

We typically run our machines with the Balanced profile. Switching to the Power Savings profile reduced power draw by 1W to 5W, depending on the CPU and application tested. For processor power management, the Balanced and Power Savings profiles both set the minimum processor state to 5%. The maximum is 100% for Balanced and 50% for Power Savings. The Performance profile sets both values to 100%, which is why power requirements increase even when power management is enabled in the BIOS.
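The processor power management limits above can be summarized in a small table. The sketch below uses a simplified model, which is our assumption and not a description of how Vista's power manager is implemented: the CPU's performance state follows demand but is clamped between the profile's minimum and maximum. The profile names and percentages come from the text. `PROFILES` and `effective_processor_state` are hypothetical names used only for illustration.

```python
# Simplified model (assumption, for illustration only): the processor state
# tracks demand but is clamped to the active profile's min/max limits.
PROFILES = {
    # profile name: (minimum processor state %, maximum processor state %)
    "Power Savings": (5, 50),
    "Balanced": (5, 100),
    "Performance": (100, 100),
}


def effective_processor_state(profile: str, demand_percent: float) -> float:
    """Clamp the requested CPU performance level to the profile's limits."""
    low, high = PROFILES[profile]
    return max(low, min(high, demand_percent))


if __name__ == "__main__":
    # With near-zero demand, Balanced and Power Savings drop to 5%,
    # while Performance keeps the CPU at 100%.
    for name in PROFILES:
        print(f"{name}: idle -> {effective_processor_state(name, 0)}%, "
              f"full load -> {effective_processor_state(name, 100)}%")
```

Under this model, the Performance profile's 100% floor explains the higher power draw even at idle.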

The results surprised us; more accurately, they floored us. The same company that brings you global warming friendly chipsets like the 680i/780i has suddenly turned over a new leaf, or at least saved the tree it came from. Using the Power Savings profile in our minimum spec configuration, the GeForce 8200 beats the AMD 780G and Intel G35 platforms by 3W and 15W, respectively. To be fair to Intel, we are comparing a single-core AMD processor to a dual-core processor. However, these are the minimum CPUs we would use. (4/19/08 Update - Minimum Spec chart is correct now)

Frankly, the AMD LE1600 is on the verge of not being an acceptable processor for HD playback. The LE1600 passed all of our tests, but menu operation was slow when we chose movie options. CPU utilization also reached the upper 90% range on some of the more demanding titles, even with the 780G or GF8200 providing hardware offload.

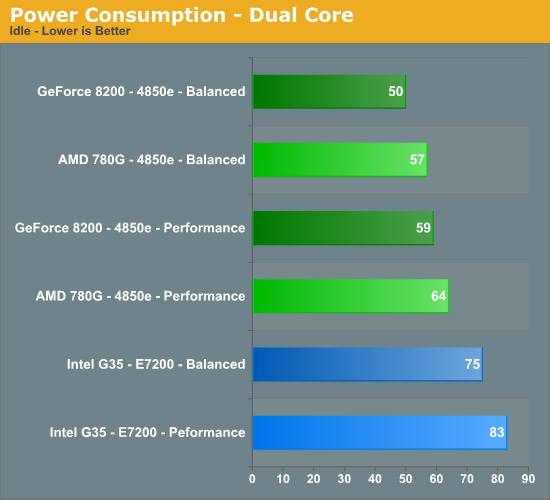

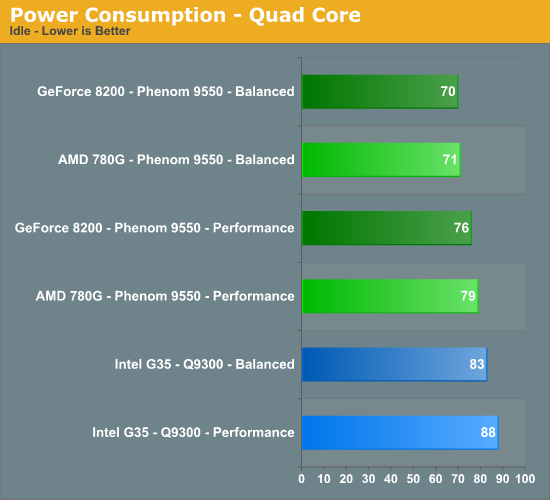

The pattern changes slightly with the dual-core setup, where the GF8200 holds a 7W advantage over the 780G and a 25W advantage over the G35. Our quad-core results are almost even, with only a 1W difference between the Biostar GeForce 8200 board and the Gigabyte 780G setup. The GeForce 8200/Phenom 9550 combo comes in with a 13W advantage over the Q9300 on the ASUS G35 board.
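To put these idle gaps in perspective, the sketch below converts a constant wattage difference into a yearly electricity cost. It assumes the gap holds around the clock (24/7 at idle), which real usage will not match exactly. It uses electricity prices of $0.10/kWh and $0.30/kWh, the two figures that come up in the reader discussion. The wattage gaps come from the charts above. `annual_cost` is a hypothetical helper name.

```python
HOURS_PER_YEAR = 24 * 365  # continuous 24/7 operation: 8,760 hours


def annual_cost(delta_watts: float, price_per_kwh: float) -> float:
    """Dollar cost per year of a constant power difference running 24/7."""
    kwh_per_year = delta_watts * HOURS_PER_YEAR / 1000.0
    return kwh_per_year * price_per_kwh


if __name__ == "__main__":
    # Idle advantages of the GeForce 8200 platform reported above
    # (assumed constant all year, which is a simplification).
    gaps = {
        "minimum spec vs 780G": 3,
        "minimum spec vs G35": 15,
        "dual-core vs 780G": 7,
        "dual-core vs G35": 25,
        "quad-core vs G35 (Q9300)": 13,
    }
    for label, watts in gaps.items():
        print(f"{label}: {watts}W -> "
              f"${annual_cost(watts, 0.10):.2f}/yr at $0.10/kWh, "
              f"${annual_cost(watts, 0.30):.2f}/yr at $0.30/kWh")
```

The arithmetic is linear. Each watt of constant difference is 8.76 kWh per year, so the dual-core 25W gap works out to about $21.90 per year at $0.10/kWh.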

44 Comments


Darth Farter - Saturday, April 19, 2008

Awesome. Over here, where it's US$0.30/kWh, you can see it will start to make a difference. The only thing I would like to see is undervolting, though like overclocking, your mileage will vary. I'm running a G1 Brisbane at 2GHz with a 0.975V vcore on a 690G as a 24/7 download/internet box. I wonder what it costs me per month.

JarredWalton - Saturday, April 19, 2008

Our earlier calculations used $0.10/kWh. Tripling the cost of energy means you're looking at savings of up to $30 per year for a 10W difference in 24/7 use. If you're running a 100W PC 24/7 for a whole year, that PC would cost $262.80 at $0.30/kWh or $87.60 at $0.10/kWh.

royalcrown - Saturday, April 19, 2008

Also, what is going on with the fried MOSFETs? We never did get that weekend update ;)

royalcrown - Saturday, April 19, 2008

Why don't you have ANAND buy you guys some meters and list the actual wattage used by the systems in EVERY GFX card or PROCESSOR review? This NEEDING of at LEAST a 550 watt PSU is BS for those of us who will never use dual cards. I just calculated that my new system should draw about 280 watts at FULL load with an 8800 GT, so a 400 watt supply with 450 peak is fine for me. I read that Nvidia claims 125 watts on their page, and the real draw is a lot less when they use the meter.

I for one am sick of these companies pushing monster PSUs when they AREN'T needed in every case. Sites like AnandTech should give us the scoop instead of plastering ads for 1200 watt PSUs and not telling readers that we may not even need 550.

Zaranthos - Saturday, April 19, 2008

That's a fact. I'm so sick of seeing insanely large power supplies shoved down people's throats. I keep upgrading my computer, and my 300W power supply keeps running it just fine. Judging by most of the reviews/ads/propaganda, you'd think that wasn't even possible. I'd like to see tests showing what the minimum power supply requirements are.

JarredWalton - Saturday, April 19, 2008

You mean like our PSU reviews, where we repeatedly state that the only way you can even come close to needing a 1000W PSU is heavy overclocking of your quad-core CPU (i.e. water or phase-change cooling) combined with 3-way or 4-way GPUs?

Most PSUs reach maximum efficiency around the 50% load mark, but even at 30% load the good PSUs are above 83% efficiency. Couple that with the fact that a 600W PSU delivering 150W is generally quieter than a 300W PSU delivering the same wattage, and there are reasons to buy higher-spec PSUs. The biggest reason to buy a higher-spec PSU, of course, is that it's very difficult to find good quality PSUs rated under 400W. (Seasonic and Seasonic-built PSUs are about the only options.)

All of that overlooks the fact that *testing* with a highly rated 520W PSU is not the same as saying that PSU is required. What's important is consistency, and here we are using the same PSU for all tests. It should deliver 80-85% efficiency across the tested power range, and that variation is well within the margin of error. If we dropped to a 300W Seasonic, power draw might change slightly, but proportionately the results should be nearly identical to what we see in this article.
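The load and efficiency arithmetic behind this argument fits in a short sketch. Wall (AC) draw is the DC load divided by the PSU's efficiency. If we assume the efficiency is the same at both loads, the ratio between two systems' wall readings equals the ratio of their DC loads, even though the absolute gap scales with efficiency. The 150W load, the 300W/600W ratings and the 80-85% efficiency range come from the comment above. The 100W/110W loads are illustrative assumptions, and the function names are hypothetical.

```python
def load_fraction(dc_load_watts: float, psu_rating_watts: float) -> float:
    """Fraction of the PSU's rated output actually being delivered."""
    return dc_load_watts / psu_rating_watts


def wall_draw(dc_load_watts: float, efficiency: float) -> float:
    """AC power measured at the wall for a DC load at a given efficiency."""
    return dc_load_watts / efficiency


if __name__ == "__main__":
    # The same 150W load is 25% of a 600W unit but 50% of a 300W unit.
    print(f"600W unit: {load_fraction(150, 600):.0%} load")
    print(f"300W unit: {load_fraction(150, 300):.0%} load")

    # Two hypothetical DC loads 10W apart, measured through two efficiencies
    # (assumed constant across both loads for each PSU).
    for eff in (0.80, 0.85):
        a, b = wall_draw(100, eff), wall_draw(110, eff)
        print(f"{eff:.0%} efficient: {a:.1f}W vs {b:.1f}W, "
              f"gap {b - a:.1f}W, ratio {b / a:.3f}")
```

The absolute gap shrinks from 12.5W to about 11.8W as efficiency rises, but the ratio stays at 1.100. This is why swapping PSUs should shift the numbers without changing the ranking.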

Perhaps Gary can chime in here with some comments; I know that he sent me an initial configuration table for this article on Thursday and then changed the PSU and case later that night. The original PSU was a Seasonic unit, so perhaps he ran into some difficulties. Again, not that it really makes a difference.

Wirmish - Saturday, April 19, 2008

Flight Simulator X test: nVidia vs. AMD -> 0W to 3W, or ~2%.

OK... nVidia wins by 2%.

And "watt" about the FPS during these benches?

Does the nVidia 8200 have 2% lower FPS than the AMD 780G?

And if the 780G is faster, can you underclock it, or overclock the 8200?

Try it... just to compare the consumption at the same performance level.

Esben - Saturday, April 19, 2008

Thanks for shedding light on the current IGP situation. It's great to see Nvidia is still competitive in the IGP business, consumption-wise. Now we eagerly await the performance numbers.

Please keep writing about IGPs and power consumption. I'd find it very interesting if you wrote an article about maximizing performance per watt, and how far you can push IGP performance.

An IGP system fits most people's needs, so the interest is definitely there.

jacito - Friday, April 18, 2008

The article is very well written, and this is going to sound rather stupid, but what does IGP stand for?

JarredWalton - Friday, April 18, 2008

IGP = Integrated Graphics Processor