More Single Card Dual GPU Madness: NVIDIA's Flagship 9800 GX2

by Derek Wilson on March 18, 2008 9:00 AM EST - Posted in GPUs

The 9800 GX2 Inside, Out and Quad

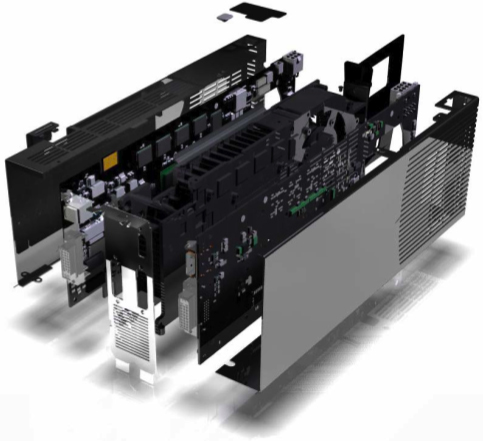

The most noticeable thing about the card is the fact that it looks like a single PCB with a dual slot HSF solution. Appearances are quite deceiving though, as on further inspection, it is clear that there are really two PCBs hidden inside the black box that is the 9800 GX2. This is quite unlike the 3870 X2, which puts two GPUs on the same PCB, but it isn't quite the same as the 7950 GX2 either.

The special sauce on this card is the fact that the cooling solution is sandwiched between the GPUs. Having the GPUs actually face each other is definitely interesting, as it helps make the look of the solution quite a bit more polished than the 7950 GX2 (and let's face it, for $600+ you expect the thing to at least look like it has some value).

NVIDIA also opted not to put both display outputs on one PCB as they did with their previous design. The word on why: it is easier for layout and cooling. This adds an unexpected twist in that the DVI connectors are oriented in opposite directions. Not really a plus or a minus, but it's just a bit different. Moving from ISA to PCI was a bit awkward with everything turned upside down, and now we've got one of each on the same piece of hardware.

On the inside, the GPUs are connected via PCIe 1.0 lanes in spite of the fact that the GPUs support PCIe 2.0. This is likely another case where cost-benefit analysis led the way and upgrading to PCIe 2.0 didn't offer any real benefit.
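
To put rough numbers on the PCIe 1.0 versus 2.0 question, here is a back-of-the-envelope sketch in Python. The per-lane signaling rates (2.5 GT/s and 5 GT/s) and the 8b/10b encoding overhead are properties of the PCIe 1.0 and 2.0 standards; the x16 link width is purely our assumption for illustration and not a confirmed detail of how the two GPUs on this card are wired together.

```python
# Raw signaling rate per lane, in megatransfers per second.
SIGNALING_MT_S = {"1.0": 2500, "2.0": 5000}

# ASSUMPTION: link width chosen only for illustration; the actual
# width of the GPU-to-GPU link on the 9800 GX2 is not stated here.
ASSUMED_LANES = 16


def lane_bandwidth_mb_s(generation: str) -> int:
    """Usable bandwidth of one lane in one direction, in MB/s."""
    raw_mbit = SIGNALING_MT_S[generation]
    # 8b/10b encoding: only 8 of every 10 bits on the wire carry data.
    payload_mbit = raw_mbit * 8 // 10
    # Convert megabits to megabytes.
    return payload_mbit // 8


def link_bandwidth_mb_s(generation: str, lanes: int) -> int:
    """Usable one-direction bandwidth of a link with the given lane count."""
    return lane_bandwidth_mb_s(generation) * lanes


for gen in ("1.0", "2.0"):
    total = link_bandwidth_mb_s(gen, ASSUMED_LANES)
    print(f"PCIe {gen} x{ASSUMED_LANES}: {total} MB/s per direction")
```

The generational step is a straight doubling per lane, so whether it matters comes down to how much traffic actually crosses the link between the GPUs, which this sketch makes no attempt to model.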

Because the 9800 GX2 is G9x based, it also features all the PureVideo enhancements contained in the 9600 GT and the 8800 GT. We've already talked about these features, but the short list is the inclusion of some dynamic image enhancement techniques (dynamic contrast and color enhancement), and the ability to hardware accelerate the decode of multiple video streams in order to assist in playing movies with picture in picture features.

We will definitely test these features out, but this card is certainly not aimed at the HTPC user. For now we'll focus on the purpose of this card: gaming performance. Although, it is worth mentioning that the cards coming out at launch are likely to all be reference design based, and thus they will all include an internal SPDIF connector and an HDMI output. Let's hope that next time NVIDIA puts these features on cards that really need them.

Which brings us to Quad SLI. Yes, the beast has reared its ugly head once again. And this time around, under Vista (Windows XP is still limited by a 3 frame render ahead), Quad SLI will be able to implement a 4 frame AFR mode for some blazing fast speed in certain games. Unfortunately, we can't bring you numbers today, but when we can we will absolutely pit it against AMD's CrossFireX. We do expect to see similarities with CrossFireX in that it won't scale quite as well when we move from 3 to 4 GPUs.
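
For readers unfamiliar with alternate frame rendering (AFR), the idea can be sketched in a few lines of Python. This is a deliberately simplified model and not NVIDIA's driver logic: frames are handed out round-robin, and we assume the number of GPUs that can be kept busy is capped by how many frames may be queued ahead. The function names are hypothetical and exist only for this illustration.

```python
def afr_schedule(num_frames: int, num_gpus: int) -> list[int]:
    """Return the GPU index that renders each frame under round-robin AFR."""
    return [frame % num_gpus for frame in range(num_frames)]


def usable_gpus(render_ahead_limit: int, num_gpus: int) -> int:
    """ASSUMPTION: each busy GPU needs its own frame in flight, so the
    render-ahead limit caps how many GPUs AFR can keep working at once."""
    return min(render_ahead_limit, num_gpus)


# Four GPUs, eight frames: each GPU renders every fourth frame.
print(afr_schedule(8, 4))

# With a 3 frame render-ahead limit, only three of four GPUs stay busy
# under this simplified model.
print(usable_gpus(3, 4))
```

Under this model a 3 frame limit leaves the fourth GPU idle in pure AFR, which is consistent with 4 frame AFR needing Vista.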

Once again, we are fortunate to have access to an Intel D5400XS board on which we can compare SLI to CrossFire on the same platform. While 4-way solutions are novel, they certainly are not for everyone, especially when the pair of cards costs between $1200 and $1300. But we are certainly interested in discovering just how much worse price / performance gets when you plug two 9800 GX2 cards into the same box.

It is also important to note that these cards come with hefty power requirements and using a PCIe 2.0 power supply is a must. Unlike the AMD solutions, it is not possible to run the 9800 GX2 with a 6-pin PCIe power connector in the 8-pin PCIe 2.0 socket. NVIDIA recommends a 580W PSU with PCIe 2.0 support for a system with a single 9800 GX2. For Quad, they recommend 850W+ PSUs.

NVIDIA notes that some PSU makers have built their connectors a little out of spec so the fit is tight. They say that some card makers or PSU vendors will be offering adapters but that future power supply revisions should meet the specifications better.

As this is a power hungry beast, NVIDIA is including its HybridPower support for 9800 GX2 when paired with a motherboard that features NVIDIA integrated graphics. This will allow normal usage of the system to run on relatively low power by turning off the 9800 GX2 (or both if you have Quad set up), and should save quite a bit on your power bill. We don't have a platform to test the power savings in our graphics lab right now, but it should be interesting to see just how big an impact this has.

50 Comments


archerprimemd - Tuesday, April 1, 2008 - link

i apologize for the noob question, just didn't know where to look for the info:

dual gpu single card or single gpu dual cards (meaning, in SLI)

which is better?

also, isn't having the 2 gpus in one card sort of like doing an SLI?

Tephlon - Friday, April 4, 2008 - link

"dual gpu single card or single gpu dual cards (meaning, in SLI) which is better?"

To be honest, this seems to vary based on timing and pricing.

For instance, back in the 7 series, I bought two 7800GT's and SLI'd them. About a week later, the 7950GX2 became available. It offered similar (if not better in some cases) performance than the two 7800gt's, so I returned the gt's and got the GX2.

But at the same time... The 7800 Ultra's were available... and two of those in SLI were better than the GX2... but for nearly twice the money.

Again, this might vary generation to generation, so YMMV.

"also, isn't having the 2 gpus in one card sort of like doing an SLI?"

the short answer is yes. I actually posted about this in more detail just a few pages ago, so for more on the subject see http://www.anandtech.com/video/showdoc.aspx?i=3266...

Tephlon - Friday, April 4, 2008 - link

oops, sorry. I meant to say see my post at http://www.anandtech.com/video/showdoc.aspx?i=3266...

It's about halfway down the comments page. or you can just search 'Tephlon'

SlingXShot - Wednesday, March 26, 2008 - link

You know 3dfx tried this SLI madness, and put 16 chips on one board...and you know they failed...these products are not attractive to standard joe and the only people who care are the ones who install new computer for a living. It is not good practice putting 4 video cards together. People want new design, etc.

Ravensong - Friday, March 21, 2008 - link

Ok, here's what I don't get and I hope someone can clarify this for me. In the article "ATI Radeon HD 3870 X2: 2 GPUs 1 Card, A Return to the High End" the CoD4 benchmarks running at 1920x1200 HQ settings, 0x AA/16x AF give a result of 107.3 fps yet this article's benchmark shows a result of 53.8 for 1920x1200. When I saw this I yelled out like Lil Jon "WHAAT??" How did the frames drop this much? Perhaps the new 8.3 drivers are wrecking performance? This seems to be the case with every benchmark other than Crysis, which received a minor increase from the 8.3 drivers. I'm not a fanboy for ATI/AMD by any means but I hardly see these scores as fair when just a few video articles ago this thing was doing well and then all of a sudden it has piss poor performance when the GX2 launches. Reading this site on a daily basis I figured that the weird drop in performance would have been noted?? Not sure if anyone else noticed this but I surely did right away. I know I've had nothing but headaches atm with 8.3 and trying to get the 4 3850's I bought running in crossfire X. Thankfully that's just my secondary rig, if it had been my main I may have smashed it into pieces by now :D

TheDudeLasse - Friday, March 21, 2008 - link

It's gotta be Catalyst 8.3

The scores im getting with 8.2 are 70% better.

Ravensong - Friday, March 21, 2008 - link

Definitely, no other explanation as to why the scores are so horrid compared to only a month and a half ago when the original benches debuted. I wish all the sites using 8.3 would correct this injustice!! lol... Hardocp went as far as saying "The Radeon HD 3870 X2 gets utterly and properly owned, this is true “pwnage” on the highest level." ... just wow. :D

Ravensong - Saturday, March 22, 2008 - link

Any comments on this dilemma Sir Wilson?? (referring to the author) :D

TheDudeLasse - Friday, March 21, 2008 - link

I think you may have had some driver issues with the 3870X2.

Im running a q6600@3.4 and 3870x2.

I've been running the same benchmarks as you describe and the results are completely different.

For instance, Call of Duty benchmark results vary by over 70%

I ran the same benchmark "We start FRAPS as soon as the screen clears in the helicopter and we stop it right as the captain grabs his head gear."

Example

1920x1200 4xAA and 16AF Your result 42.3 fps average

My result 76.056fps average

That's an over 75% improvement to your score.

What's the jig? screwed up catalyst drivers or what?

7Enigma - Thursday, March 20, 2008 - link

Derek,

I see you have not answered the requests regarding why 8800GT and 8800GTS SLI was not included in these benchmarks. I can understand if you were not allowed to due to some Nvidia NDA, and why you might not be able to talk about it.

If you could please reply with a :) if this is the case, we would be greatly appreciative. Otherwise it looks like there is a gaping hole in this review.

Thank you.