Intel's 45nm Dual-Core E8500: The Best Just Got Better

by Kris Boughton on March 5, 2008 3:00 AM EST - Posted in CPUs

The Truth About Processor "Degradation"

Degradation - the process by which a CPU loses the ability to maintain an equivalent overclock, often sustainable through the use of increased core voltage levels - is usually regarded as a form of ongoing failure. This is much like saying your life is nothing more than your continual march towards death. While some might find this analogy rather poignant philosophically speaking, technically speaking it's a horrible way of modeling the life-cycle of a CPU. Consider this: silicon quality is often measured as a CPU's ability to reach and maintain a desired stable switching frequency all while requiring no more than the maximum specified process voltage (plus margin). If the voltage required to reach those speeds is a function of the CPU's remaining useful life, then why would each processor come with the same three-year warranty?

The answer is quite simple really. Each processor, regardless of silicon quality, is capable of sustained error-free operation while functioning within the bounds of the specified environmental tolerances (temperature, voltage, etc.), for a period of no less than the warranted lifetime when no more performance is demanded of it than its rated frequency will allow. In other words, rather than limit the useful lifetime of each processor, and to allow for a consistent warranty policy, processors are binned based on the highest achievable speed while applying no more than the process's maximum allowable voltage. When we get right down to it, this is the key to overclocking - running CPUs in excess of their rated specifications regardless of reliability guidelines.
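The binning rule described above can be sketched in a few lines. This is only an illustration: the bin frequencies, the voltage ceiling, the per-die test voltages, and the helper name `assign_bin` are all hypothetical and are not taken from any Intel documentation.

```python
# Hypothetical bin frequencies (GHz) and a hypothetical voltage ceiling (V).
# None of these figures come from Intel documentation.
SPEED_BINS_GHZ = [2.66, 3.00, 3.16]
V_MAX = 1.25


def assign_bin(min_stable_voltage, v_max=V_MAX):
    """Return the highest bin frequency the part sustains at or below v_max.

    min_stable_voltage maps frequency (GHz) to the lowest voltage (V) at which
    the part tested stable. Returns None if no bin qualifies.
    """
    passing = [f for f in SPEED_BINS_GHZ
               if f in min_stable_voltage and min_stable_voltage[f] <= v_max]
    return max(passing) if passing else None


# Two made-up dies: the better silicon reaches the top bin within the ceiling.
good_die = {2.66: 1.05, 3.00: 1.12, 3.16: 1.20}
weak_die = {2.66: 1.15, 3.00: 1.24, 3.16: 1.31}

print(assign_bin(good_die))  # 3.16
print(assign_bin(weak_die))  # 3.0
```

Both parts would ship with the same warranty because each is rated only for the speed it reaches within the voltage ceiling. Overclocking amounts to asking the weaker die for the top bin anyway.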

As soon as you concede that overclocking by definition reduces the useful lifetime of any CPU, it becomes easier to justify its more extreme application. It also goes a long way to understanding why Intel has a strict "no overclocking" policy when it comes to retaining the product warranty. Too many people believe overclocking is "safe" as long as they don't increase their processor core voltage - not true. Frequency increases drive higher load temperatures, which in turn reduce useful life. Conversely, better cooling may be a sound investment for those who want longer, trouble-free operation, as it should provide more positive margin for an extended period of time.

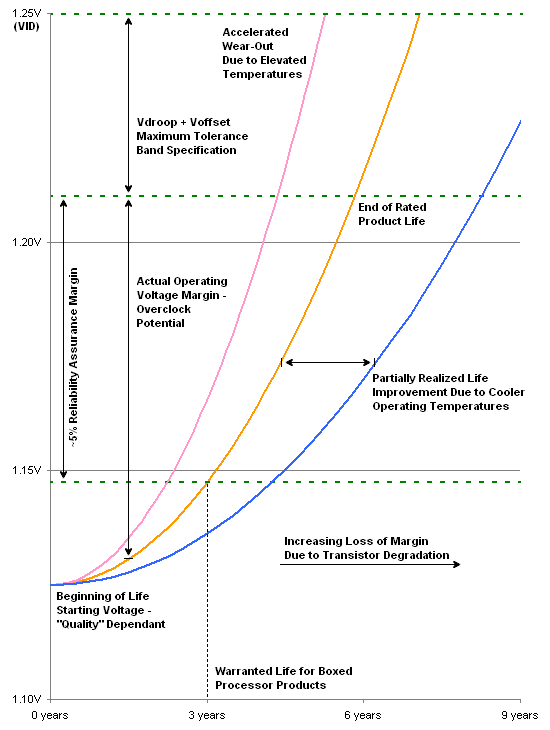

The graph above shows three curves. The middle line models the minimum required voltage needed for a processor to continuously run at 100% load for the period shown along the x-axis. During this time, the processor is subjected to its specified maximum core voltage and is never overclocked. Additionally, all of the worst-case considerations come together and our E8500 operates at its absolute maximum sustained Tcase temperature of 72.4°C. Three years later, we would expect the CPU to have "degraded" to the point where slightly more core voltage is needed for stable operation - as shown above, a little less than 1.15V, up from 1.125V.

Including Vdroop and Voffset, an average 45nm dual-core processor with a VID of 1.25000 should see a final load voltage of about 1.21V. Shown as the dashed green line near the middle of the graph, this represents the actual CPU supply voltage (Vcore). Keep in mind that the trend line represents the minimum voltage required for continued stable operation, so as long as it stays below the actual supply voltage line (middle green line) the CPU will function properly. The lower green line is approximately 5% below the actual supply voltage, and represents an example of an offset that might be used to ensure a positive voltage margin is maintained.
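The arithmetic behind the two green lines is easy to check. The sketch below uses only figures quoted in the text (1.21V load Vcore, the roughly 5% offset, and the 1.125V a new part requires); the function names are our own and purely illustrative.

```python
V_SUPPLY = 1.21          # load Vcore from the text, in volts
GUARD_BAND = 0.05        # the "approximately 5%" offset from the text
V_REQUIRED_NEW = 1.125   # minimum stable voltage for a new part, per the graph


def guard_band_voltage(v_supply, guard_band):
    """Voltage of the lower green line: supply voltage minus the offset."""
    return v_supply * (1.0 - guard_band)


def margin(v_supply, v_required):
    """Positive result means the part is stable at this supply voltage."""
    return v_supply - v_required


print(round(guard_band_voltage(V_SUPPLY, GUARD_BAND), 4))  # 1.1495
print(round(margin(V_SUPPLY, V_REQUIRED_NEW), 4))          # 0.085
```

The lower green line works out to about 1.15V, which matches the "a little less than 1.15V" the middle curve reaches at the three-year mark.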

The intersection point of the middle line (minimum required voltage) and the middle green line (actual supply voltage) predicts the point in time when the CPU should "fail," although an increase in supply voltage should allow for longer operation. Also, note how the middle line passes through the lower green line, representing the desired margin to stability at the three-year point, marking the end of warranty. The red line demonstrates the effect running the processor above the maximum thermal specification has on rated product lifetime - we can see the accelerated degradation caused by the higher operating temperatures. The blue line is an example of how lowering the average CPU temperature can lead to increased product longevity.
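To get a feel for where these intersection points might fall, here is a rough sketch that assumes the required voltage rises in a straight line. That linearity is our assumption, not something the graph guarantees, and the `rate_scale` multiplier standing in for hotter or cooler operation is entirely hypothetical.

```python
# Figures taken from the text: a new part needs about 1.125 V, the load Vcore
# is about 1.21 V, and the guard-band line sits roughly 5% below Vcore.
V_REQUIRED_NEW = 1.125
V_SUPPLY = 1.21
V_GUARD = V_SUPPLY * 0.95   # about 1.15 V, reached at end of warranty
WARRANTY_YEARS = 3.0


def years_until(v_target, rate_scale=1.0):
    """Years until the required voltage reaches v_target.

    ASSUMPTION: the required voltage rises linearly with time; the real curve
    need not be linear. rate_scale is a hypothetical multiplier: above 1 for a
    hotter part (red line), below 1 for a cooler one (blue line).
    """
    slope = rate_scale * (V_GUARD - V_REQUIRED_NEW) / WARRANTY_YEARS  # V/year
    return (v_target - V_REQUIRED_NEW) / slope


print(round(years_until(V_GUARD), 1))        # 3.0 by construction
print(round(years_until(V_SUPPLY), 1))       # margin fully consumed
print(round(years_until(V_SUPPLY, 2.0), 1))  # hypothetical hotter part
print(round(years_until(V_SUPPLY, 0.5), 1))  # hypothetical cooler part
```

Under this straight-line assumption the margin at stock settings lasts a little over ten years. Doubling the degradation rate halves that figure, and halving the rate doubles it. Treat the numbers as an illustration of the trend only, not as a lifetime prediction.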

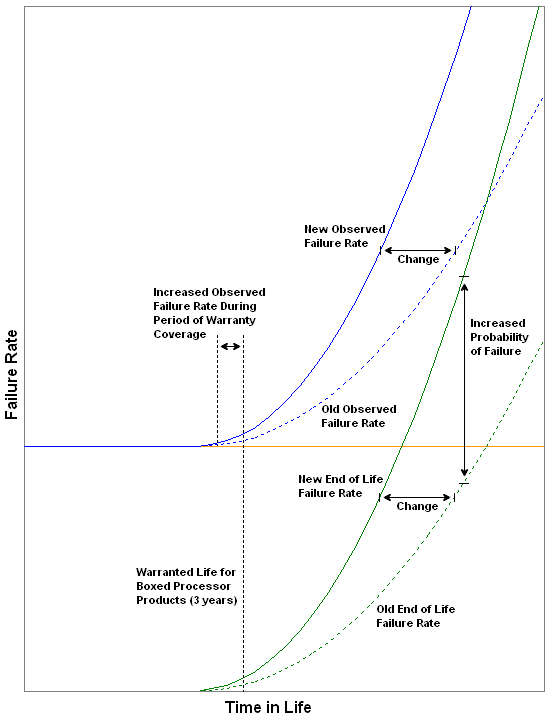

Because end-of-life failures are usually caused by a loss of positive voltage margin (excessive wear/degradation), we can establish a very real correlation between the increased/decreased probability of these types of failures and the operating environment experienced by the processor(s) in question. Here we see the effect a harsher operating environment has on observed failure rate due to the new end-of-life failure rate curve. Once a CPU has been run outside of prescribed operating limits, a failure near the end of warranty can no longer be positively attributed to any known cause. Furthermore, because Intel is unable to distinguish the failure type in each individual warranty case when overclocking or improper use is suspected, its policy prohibits overclocking of any kind if warranty coverage is desired.

So what does all of this mean? So far we have learned that of the three basic failure types, failures due to degradation (i.e. wearing out) are in most cases directly influenced by the means and manner in which the processor is operated. Clearly, the user plays a considerable role in the creation and maintenance of a suitable operating environment. This includes the use of high-quality cooling solutions and pastes, the liberal use of fans to provide adequate case ventilation, and finally proper climate control of the surrounding areas. We have also learned that Intel has established easy-to-follow guidelines when it comes to ensuring the longevity of your investment.

Those who choose to ignore these recommendations and/or exceed any specification do so at their own peril. This is not meant to insinuate that doing so will necessarily cause immediate, irreparable damage or product failure. Rather, every decision made during the course of overclocking has a real and measurable "consequence." For some, there may be little reason to worry, as concern for product life may not be a priority. Others may take precautions to accommodate the higher voltages, such as water-cooling or phase-change cooling. In any case, the underlying principles are the same - overclocking is never without risk. And just like life, taking calculated risks can sometimes be the right choice.

45 Comments

TheJian - Thursday, March 6, 2008 - link

http://www.newegg.com/Product/Product.aspx?Item=N8...

You can buy a Radeon 3850 and triple your 6800 performance (assuming it's a GT with an ultra it would be just under triple). Check tomshardware.com and compare cards. You'd probably end up closer to double performance because of a weaker cpu, but still once you saw your fps limit due to cpu you can crank the hell out of the card for better looks in the game. $225 vs probably $650-700 for a new board+cpu+memory+vidcard+probably PSU to handle it all. If you have socket 939 you can still get a dual core Opty144 for $97 on pricewatch :) Overclock the crap out of it you might hit 2.6-2.8 and its a dual core. So around $325 for a lot longer life and easy changes. It will continue to get better as dual core games become dominant. While I would always tell someone to spend the extra money on the Intel currently (jeez, the OC'ing is amazing..run at default until slow then bump it up a ghz/core, that's awesome), if you're on a budget a dual core opty and a 3850 looks pretty good at less than half the cost and both are easy to change out. Just a chip and a card. That's like a 15 minute upgrade. Just a thought, in case you didn't know they had an excellent AGP card out there for you. :)

mmntech - Wednesday, March 5, 2008 - link

I'm in the same boat with the X2 3800+. Anyway, when it comes to dual vs quad, the same rules apply back when the debate was single versus dual. Very few games support quad core but a quad will be more future proof and give better multitasking. The ultimate question is how much you want to spend, how long you intend to keep the processor, and what the future road maps for games and CPU tech are within that period.

I'm a long time AMD/nVidia man but I'm liking what Intel and ATI are putting out. I'm definitely considering these Wolfdales, especially that sub $200 one. I'm going to wait for the prices and benchmarks for the triple core Phenoms though before I begin planning an upgrade.

Margalus - Wednesday, March 5, 2008 - link

the current state of affairs generally point to the higher clocked dual core. Very few games can take advantage of 4 cores, so the more speed you get the better.

Spacecomber - Wednesday, March 5, 2008 - link

This has been mentioned in a couple of articles, now, that what these processors will run at with no more than 1.45v core voltage applied is what really matters for most people buying one of these 45nm chips. So, it begs the question, what are the results at this voltage?

While the section on processor failure was somewhat interesting, I think that it should have been a separate article.

retrospooty - Wednesday, March 5, 2008 - link

"these processors will run (safely) at with no more than 1.45v core voltage applied is what really matters for most people buying one of these 45nm chips. So, it begs the question, what are the results at this voltage"

Very good point. Since these CPU's are deemed safe up to 1.45 volt, lets see how far they clock at 1.45 volts. 4.5 ghz at 1.6 volts is nice for a suicide run, but lets see it at 1.45.

Spoelie - Wednesday, March 5, 2008 - link

This reads like an excerpt of a press release:

"We could argue that when it came to winning the admiration and approval of overclockers, enthusiasts, and power users alike, no other single common product change could have garnered the same overwhelming success."

Except that it was not. It was a knee-jerk reaction to the K8 release way back in 2003. It was too expensive to matter to anyone except for the filthy rich. The FX around that time was more successful. In recent years they just polished the concept a bit, but gaining admiration and overwhelming success because of it?? I think not. The Conroe architecture was the catalyst, not some expensive niche product.

"Our love affair with the quad-core began not too long ago, starting with the release of Intel's QX6700 Extreme Edition processor. Ever since then Intel has been aggressive in their campaign to promote these processors to users that demand unrivaled performance and the absolute maximum amount of jaw-dropping, raw processing power possible from a single-socket desktop solution. Quickly following their 2.66GHz quad-core offering was the QX6800 processor, a revolutionary release in its own right in that it marked the first time users could purchase a processor with four cores that operated at the same frequency as the current top dual-core bin - at the time the 2.93GHz X6800."

Speed bump revolutionary? Oh well ;)

No beef with the rest of the article, those two paragraphs just stand out as being overly enthousiastic, more so than informative.

MaulSidious - Wednesday, March 5, 2008 - link

this articles a bit late isn't it? seeing as they been out for quite a while now.

MrModulator - Wednesday, March 5, 2008 - link

Well it's being updated from time to time. I think it is relevant since Cubase 4 is still the latest version used of cubase and the performance is the same today. What is important with this is that they measure up two equally clocked processors where the difference is in the number of cores. Yes, the quad is better at higher latencys but it loses the advantage at lower latencys and even gets beaten by the dual-core.

More of a reminder of the limitations of current day quadcores in some situations. This will probably change when Nehalem is introduced with its on-die memory controller, a higher FSB and faster DD3 memory.

adiposity - Wednesday, March 5, 2008 - link

Uh, what? I think he's saying these processors were on the shelves over a month ago. This article is acting like they are just about to come out!

-Dan

MrModulator - Wednesday, March 5, 2008 - link

Yeah, you talk about games and maximum cpufrequency on dual core is important, but there are other areas that are much more interesting. Performance for sequencers where you make music (in DAW-based computeres) is seldom mentioned. It is very important to be able to cram out every ounce of performance in real-time with a lot of software synthesizers and effects using a low latency setting(not memory latency but the delay from when you press a key on the synt until it is procesed in the computer and put out from the soundcard for example).

Here's an interesting benchmark:

http://www.adkproaudio.com/benchmarks.cfm

(Sorry, using the linking button didn't work, you have to copy the link manually)

If you scroll down to the Cubase4/Nuendo3 Test you can compare the QX6850(4 core) with the E6850 (2 core). They both run at 3 GHz. Look at what happens when the latency is lowered. Yes the dualcore actually beats the quadcore, even though these applications use all cores available. The reason could be that all 4 cores compete for the fsb and memory access when the latency is really low. Very interesting indeed, as DAW is an area in much more for cpu than gaming...