Intel's 45nm Dual-Core E8500: The Best Just Got Better

by Kris Boughton on March 5, 2008 3:00 AM EST - Posted in CPUs

The Truth About Processor "Degradation"

Degradation - the process by which a CPU loses the ability to maintain an equivalent overclock, often sustainable through the use of increased core voltage levels - is usually regarded as a form of ongoing failure. This is much like saying your life is nothing more than your continual march towards death. While some might find this analogy rather poignant philosophically speaking, technically speaking it's a horrible way of modeling the life-cycle of a CPU. Consider this: silicon quality is often measured as a CPU's ability to reach and maintain a desired stable switching frequency all while requiring no more than the maximum specified process voltage (plus margin). If the voltage required to reach those speeds is a function of the CPU's remaining useful life, then why would each processor come with the same three-year warranty?

The answer is quite simple really. Each processor, regardless of silicon quality, is capable of sustained error-free operation while functioning within the bounds of the specified environmental tolerances (temperature, voltage, etc.), for a period of no less than the warranted lifetime when no more performance is demanded of it than its rated frequency will allow. In other words, rather than limit the useful lifetime of each processor, and to allow for a consistent warranty policy, processors are binned based on the highest achievable speed while applying no more than the process's maximum allowable voltage. When we get right down to it, this is the key to overclocking - running CPUs in excess of their rated specifications regardless of reliability guidelines.

As soon as you concede that overclocking by definition reduces the useful lifetime of any CPU, it becomes easier to justify its more extreme application. It also goes a long way to understanding why Intel has a strict "no overclocking" policy when it comes to retaining the product warranty. Too many people believe overclocking is "safe" as long as they don't increase their processor core voltage - not true. Frequency increases drive higher load temperatures, which reduces useful life. Conversely, better cooling may be a sound investment for those that are looking for longer, unfailing operation as this should provide more positive margin for an extended period of time.
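The link between frequency, voltage, and heat can be made concrete with the usual first-order approximation for CMOS switching power, which scales with frequency and with the square of voltage. The sketch below is only illustrative: the function name is made up for this example, leakage power is ignored, and the 4.0GHz target and the 1.35V figure are assumed values, not measurements.

```python
def dynamic_power_ratio(f_new_ghz, f_old_ghz, v_new=1.0, v_old=1.0):
    """Relative switching power under the first-order model P ~ C * V^2 * f.

    Capacitance is assumed unchanged, and leakage power is ignored.
    """
    return (f_new_ghz / f_old_ghz) * (v_new / v_old) ** 2


# The E8500 is rated at 3.16GHz; the 4.0GHz target is an assumed overclock.
same_voltage = dynamic_power_ratio(4.0, 3.16)
# Assumed voltage bump from 1.21V to 1.35V (illustrative values only).
raised_voltage = dynamic_power_ratio(4.0, 3.16, v_new=1.35, v_old=1.21)

print(f"Same voltage:   {same_voltage:.2f}x switching power")
print(f"Raised voltage: {raised_voltage:.2f}x switching power")
```

Even with core voltage untouched, this simple model predicts roughly a quarter more switching power to dissipate, which is why a frequency-only overclock still raises load temperatures unless cooling improves.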

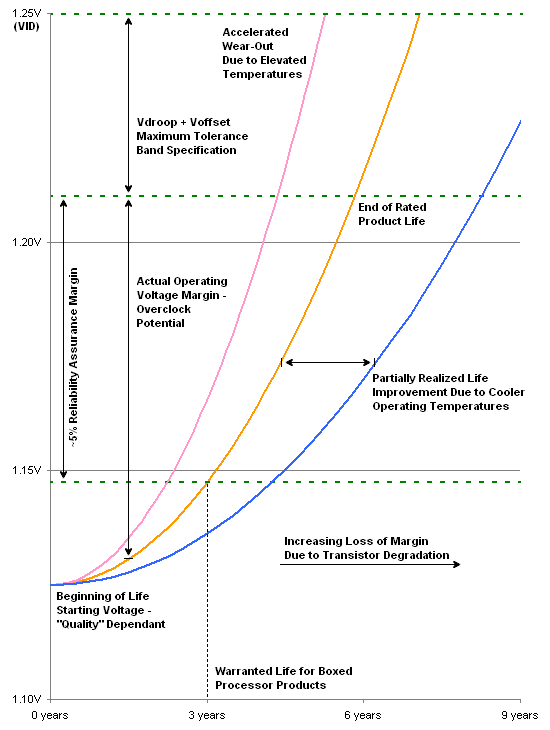

The graph above shows three curves. The middle line models the minimum required voltage needed for a processor to continuously run at 100% load for the period shown along the x-axis. During this time, the processor is subjected to its specified maximum core voltage and is never overclocked. Additionally, all of the worst-case considerations come together and our E8500 operates at its absolute maximum sustained Tcase temperature of 72.4ºC. Three years later, we would expect the CPU to have "degraded" to the point where slightly more core voltage is needed for stable operation - as shown above, a little less than 1.15V, up from 1.125V.

Including Vdroop and Voffset, an average 45nm dual-core processor with a VID of 1.25000 should see a final load voltage of about 1.21V. Shown as the dashed green line near the middle of the graph, this represents the actual CPU supply voltage (Vcore). Keep in mind that the trend line represents the minimum voltage required for continued stable operation, so as long as it stays below the actual supply voltage line (middle green line) the CPU will function properly. The lower green line is approximately 5% below the actual supply voltage, and represents an example of an offset that might be used to ensure a positive voltage margin is maintained.

The intersection point of the middle line (minimum required voltage) and the middle green line (actual supply voltage) predicts the point in time when the CPU should "fail," although an increase in supply voltage should allow for longer operation. Also, note how the middle line passes through the lower green line, representing the desired margin to stability at the three-year point, marking the end of warranty. The red line demonstrates the effect running the processor above the maximum thermal specification has on rated product lifetime - we can see the accelerated degradation caused by the higher operating temperatures. The blue line is an example of how lowering the average CPU temperature can lead to increased product longevity.
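To make the geometry of the graph concrete, here is a rough sketch that treats the minimum-required-voltage trend as a straight line. That linearity is an assumption made purely for illustration (the real curve's shape is not given), as are all the function and constant names. The voltages come from the text: 1.125V at the start, a 1.21V supply, and a margin line 5% below it that the trend crosses at the three-year mark.

```python
V_REQUIRED_START = 1.125   # minimum stable voltage when new (from the text)
V_SUPPLY = 1.21            # actual load voltage after Vdroop and Voffset
MARGIN_FRACTION = 0.05     # lower green line sits about 5% below supply
WARRANTY_YEARS = 3.0


def margin_voltage(v_supply=V_SUPPLY, margin=MARGIN_FRACTION):
    """Voltage of the lower green line (supply minus the safety margin)."""
    return v_supply * (1.0 - margin)


def baseline_slope():
    """Volts per year, ASSUMING a straight line that reaches the margin
    line exactly at the end of warranty."""
    return (margin_voltage() - V_REQUIRED_START) / WARRANTY_YEARS


def years_until_failure(wear_multiplier=1.0):
    """Years until the required voltage meets the supply voltage.

    wear_multiplier is a hypothetical knob: >1 mimics the hotter red line,
    <1 mimics the cooler blue line.
    """
    slope = baseline_slope() * wear_multiplier
    return (V_SUPPLY - V_REQUIRED_START) / slope


print(f"Margin line: {margin_voltage():.4f}V")
for label, mult in [("in spec", 1.0), ("hotter", 2.0), ("cooler", 0.5)]:
    print(f"{label:8s} -> fails after about {years_until_failure(mult):.1f} years")
```

Under these assumptions the margin line works out to about 1.15V, in line with the "a little less than 1.15V" read off the graph. The failure times the sketch prints depend entirely on the straight-line assumption and the made-up multipliers, so treat them as a feel for the trend rather than a prediction.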

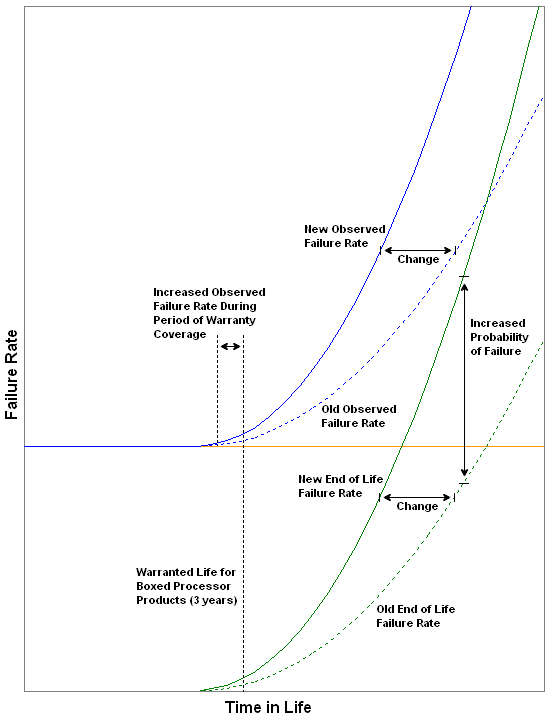

Because end of life failures are usually caused by a loss of positive voltage margin (excessive wear/degradation) we can establish a very real correlation between the increased/decreased probability of these types of failures and the operating environment experienced by the processor(s) in question. Here we see the effect a harsher operating environment has on observed failure rate due to the new end of life failure rate curve. By running the CPU outside of prescribed operating limits, we are no longer able to positively attribute any failure near the end of warranty to any known cause. Furthermore, because Intel is unable to make a distinction in failure type for each individual case of warranty failure when overclocking or improper use is suspected, policy is established which prohibits overclocking of any kind if warranty coverage is desired.
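One common way reliability engineers relate temperature to wear-out rate is the Arrhenius acceleration factor. The article does not say which model Intel uses, so the sketch below is only an assumption-laden illustration: the 0.7eV activation energy is a placeholder value, the function name is invented here, and only the 72.4ºC Tcase figure comes from the text.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333e-5  # Boltzmann constant in eV/K
T_SPEC_C = 72.4                   # E8500 maximum Tcase from the text


def arrhenius_acceleration(temp_c, ref_temp_c=T_SPEC_C, activation_ev=0.7):
    """How much faster wear proceeds at temp_c than at ref_temp_c.

    activation_ev is an ASSUMED placeholder; real values depend on the
    failure mechanism and are not given in the article.
    """
    t_ref = ref_temp_c + 273.15
    t_new = temp_c + 273.15
    exponent = (activation_ev / BOLTZMANN_EV_PER_K) * (1.0 / t_ref - 1.0 / t_new)
    return math.exp(exponent)


for temp in (62.4, 72.4, 82.4):
    factor = arrhenius_acceleration(temp)
    print(f"{temp:.1f}C: wear rate x{factor:.2f} relative to the spec limit")
```

With these placeholder numbers, running 10ºC above the thermal limit nearly doubles the modeled wear rate, while running 10ºC below it cuts the rate substantially. The exact factors shift with the assumed activation energy, so only the direction of the effect should be taken from this sketch.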

So what does all of this mean? So far we have learned that of the three basic failure types, failures due to degradation (i.e. wearing out) are in most cases directly influenced by the means and manner in which the processor is operated. Clearly, the user plays a considerable role in the creation and maintenance of a suitable operating environment. This includes the use of high-quality cooling solutions and pastes, the liberal use of fans to provide adequate case ventilation, and finally proper climate control of the surrounding areas. We have also learned that Intel has established easy to follow guidelines when it comes to ensuring the longevity of your investment.

Those that choose to ignore these recommendations and/or exceed any specification do so at their own peril. This is not meant to insinuate that doing so will necessarily cause immediate, irreparable damage or product failure. Rather, every decision made during the course of overclocking has a real and measurable "consequence." For some, there may be little reason to worry as concern for product life may not be a priority. On the other hand, perhaps precautions will be taken in order to accommodate the higher voltages like the use of water-cooling or phase-change cooling. In any case, the underlying principles are the same - overclocking is never without risk. And just like life, taking calculated risks can sometimes be the right choice.

45 Comments


TheJian - Thursday, March 6, 2008 - link

Agreed. I haven't had a cpu that hasn't been heavily overclocked since like 1992 or so. All of these chips clear back to a 486 100mhz ran for others for years after I sold them. My sister is still running my Athlon 1700+ in one machine. It's on all day, she doesn't even use standby...LOL (except for the monitor). It's like 6yrs old. Probably older than that. Still running fine. I think it runs at 1400mhz if memory serves (but it's a 1700+, you know amd's number scheme). Every time I upgrade I sell a used chip at like 1/2 off to a customer/relative and they all run for years. I usually don't keep them for more than 1yr but they run well past the 3yr warranty for everyone else, and I drive them hard while I have them. I'd venture to guess that most of Intel/AMD chips would last 5+ years on avg. The processes are just that good. After a few years in a large company with 600+ pc's I realized they just don't die. We sent them to schools (4-5yr upgrade schedule) before they died or sold them to employees for $50-100 as full pc's! I think I saw 3 cpu deaths in 2.5yrs and they were dead weeks after purchase (p4's...presshots..LOL). Don't even get me started on the hard drive deaths though...ROFL. 40+/yr. Those damn Dell SFF's get hot and kill drives quick (not to mention the stinking plastic, smells burnt all day). You're lucky to get to warranty on those. I digress...

mindless1 - Wednesday, March 5, 2008 - link

Yes, the author is completely wrong about overclocking. Overclocking (within sane bounds like not letting the processor get hotter than you'd want even if it weren't overclocked), INCREASES the usable lifespan, not decreases.

The author has obviously not had much experience overclocking, for example there are still plenty of Celeron 300MHz processors that ran at 450+MHz for almost ten years then were retired for being beyond their "usable" lifespan, just slow by then modern standards. Same for Coppermine P3 and Celeron, Athlon XP, take your pick, there are almost never examples of a processor that fails prematurely that had run stable for a couple years, unless it was due to some external influence like the heatsink falling off or motherboard power circuit failure.

Overclocking really isn't a gamble - unless you don't use common sense. 2-3 years is a lifespan you'd get if you were doing something extreme, not a modest voltage increase using a heatsink that keeps it cool enough.

I suggest the article page about "The Truth About Processor Degradation" should just be deleted, it's not just misleading but mostly incorrect. Here's the core of the problem:

"As soon as you concede that overclocking by definition reduces the useful lifetime of any CPU, it becomes easier to justify its more extreme application."

Absolutely backwards. Overclocking does not by definition nor by any other nonsensical standard, reduce the useful lifetime of CPUs. It increases the useful lifetime by providing more performance so that processor remains at the required performance levels (which escalate) for a longer period, then eventually is retired before failing in most cases. It is wrong to think that if a processor would last 18 years without overclocking and 12 with modest overclocking, that this suddenly means "it becomes easier to justify its more extreme application." It means you can do something sanely and have zero problems or use random guesses and do something "extreme" and then you will find a problem. Author is completely backwards.

"Too many people believe overclocking is "safe" as long as they don't increase their processor core voltage - not true."

There is no evidence of this. Show us even one processor that failed from increase in clock speed within its default voltage and within its otherwise viable lifespan.

"Frequency increases drive higher load temperatures, which reduces useful life. "

Wrong. While it is true that a higher frequency will increase temps, it is not true that a higher temp (so long as it's not excessive) will cause the processor to fail within its "useful life". On the contrary you have extended the useful life by increasing the performance. Millions upon millions of overclockers know this, a moderate overclock (or even a lot, providing the vcore isn't increased significantly) has no effect, it's always some other portion of the system that fails first from old age, generally motherboard or PSU. It might be fair to say that overclocking, through use of more current, is more likely to reduce the viable lifespan of the motherboard or PSU, or actually both long before the processor would fail.

Intel doesn't warranty overclocking because it is definitely possible to make a mistake through ignorance or ineptitude, and because their price model is based on speed/performance. It is not based upon evidence that experienced overclockers using good judgement will end up with a processor that failed within 8 years, let alone 3!

It also goes a long way to understanding why Intel has a strict "no overclocking" policy when it comes to retaining the product warranty. Too many people believe overclocking is "safe" as long as they don't increase their processor core voltage - not true. Frequency increases drive higher load temperatures, which reduces useful life.

TheJian - Thursday, March 6, 2008 - link

AMD has recently proved this, and even Intel to some extent with P4's. AMD's recent chips have been near max, with almost no overclocking room (same for quite a few models of P4's) and they lived long lives. Proving you can run at almost max at default voltages with no worries.

Where does the author get his data? Just as you said. Prove it. I think Intel is tired of us overclocking the crap out of their great cores. With AMD not providing ANY competition they end up with all chips being able to hit 4ghz but having to mark them at 3.16ghz. What do we do? Overclock to near max and that pisses them off. :) Make a few phone calls to some people and tell them write a "10 minutes of overclocking and your cpu blows up" article or you won't get our next engineering samples to test :) Maybe I'm wrong but it's sure suspicious. Recommending anything with Intel IGP for HTPC applications is mighty suspicious also. Yes, I read tech-report too. Also the same is on toms hardware! Check out this FIRST SENTENCE of their 3/4/08 article on 780 chipset from AMD:

"With today's introduction of its new 780G chipset, AMD is finally enabling users to build an HTPC or multimedia computer for HDTV, HD-DVD or Blu-ray playback that doesn't require an add-in graphics card. (AMD already included HDCP support and an HDMI interface in its predecessor chipset, the 690G.) The northbridge chip of the new 780G chipset also features an integrated Radeon HD3200 graphics unit that can decode any current high-definition video codec. As a result, CPU load is decreased to such a degree that even a humble AMD Sempron 3200+ is sufficient for HD video playback. Also, while Intel's chipsets get more power-hungry with every generation, AMD's newest design was designed with the goal of reducing power consumption."

http://www.tomshardware.com/2008/03/04/amd_780g_ch...

OK, so for the first time we can build an HTPC without an add-in graphics card. Translation - IT can't be done on Intel! Ok, even a LOWLY SEMPRON 3200+ cuts it with this chipset! Translation - No need for an Intel Core2 2.66ghz-2.83ghz dual core! No need for a dual core at all. Before this chipset it took an A64 6400 DUAL CORE (on AMD's old 690G chipset and that chipset smokes Intel's IGP) and still was choppy. Now they say a 1.8ghz SINGLE CORE SEMPRON only shows 63% cpu utilization WITHOUT choppiness on the 780G! On top of that it will save you money while running. Even the chipset is the BEST EVER in power use. They openly tell you how BAD Intel's chipsets are at 90nm. But Anandtech wants us to buy this crap? BLU-RAY finally hit its limit on a 1.6ghz SEMPRON at tomshardware. They hit 100% cpu in a few spots. I hope Anandtech's 780G chipset review sets this record straight. They'd better say you should AVOID INTEL like the plague or something is FISHY in HTPC/Intel/Anandtech world.

Don't get me wrong, Intel has had the greatest chips for a year now, I'm personally waiting on the E8400 so I can hand down my E4300 to my dad (which runs at 3.2ghz with ease). But call a spade a spade man. Intel sucks in HTPC. SERIOUSLY SUCKS after early this week!

Quiet1 - Wednesday, March 5, 2008 - link

Kris exposes his personal preferences when he writes... "While there is no doubt that the E8500 will excel when subjected to even the most intense processing loads, underclocked and undervolted it's hard to find a better suited Home Theater PC (HTPC) processor. For this reason alone we predict the E8200 (2.66GHz) and E8300 (2.83GHz) processors will become some of the most popular choices ever when it comes to building your next HTPC."

But what are you going to plug that CPU in to??? An Intel motherboard with Intel integrated graphics? Look at the full picture and you'll see that if you're building an HTPC, the CPU just has to be decent enough to get the job done... the really important thing is your IG performance on your chipset.

The Tech Report: “AMD's 780G chipset / Integrated graphics all grown up”

http://www.techreport.com/articles.x/14261/1

“The first thing to take away from these results is just how completely the 780G's integrated graphics core outclasses the G35 Express. Settings that deliver reasonably playable framerates on the 780G reduce the G35 to little more than an embarrassing slideshow.”

"Between our integrated graphics platforms, the 780G exhibits much lower CPU utilization than the G35 Express. More importantly, the AMD chipset's playback was buttery smooth throughout. The same can't be said for the G35 Express, whose playback of MPEG2 and AVC movies was choppy enough to be unwatchable."

sprockkets - Thursday, March 6, 2008 - link

That's the problem with Intel's platform, at least without an add in card. I thought the new nVidia chipset would change all that, then I found out they are only using a single channel of ram, how retarded is that?

Then, for now, having the ability to run the add in card for games but then shut it down afterwards when you do not need it is sweet. I would wait though for the 8200 chipset since I know it will be easier to get working in Linux but may go still for the 780G for Windows Vista.

HilbertSpace - Wednesday, March 5, 2008 - link

I read that article too, and thought the same thing.

Atreus21 - Wednesday, March 5, 2008 - link

I wish to hell Intel would quit using those penises for marketing their architecture shrinks. Every time I try and read it I'm like, "Ah!"

One would think they're trying to say something.

Atreus21 - Wednesday, March 5, 2008 - link

I mean, the least they could do is not make it flesh colored.

frombauer - Wednesday, March 5, 2008 - link

I'll finally upgrade my x2 3800+ (@2.5GHz) very soon. Question is, for gaming mostly, will a high clocked dual core suffice, or will a lower clocked quad be faster as games become more multi-threaded?

7Enigma - Thursday, March 6, 2008 - link

I think it really depends on how long you plan on keeping the new system. Since your current rig is a couple years old, you fall into the category of 90% of us. We don't throw out the mobo and cpu every time a new chip comes out, we wait out a couple generations and then rebuild. I'm running at home right now on an A64 3200+ (OC'd to 2.5GHz) so I don't even have dual-core right now.

My plan is that even though the duals offer potentially better gaming performance right now (gpu obviously still being the caveat), since I only rebuild every 3-4 years I need something to be more futureproof than someone who upgrades every year. It would be great to say I'll get a fast dual-core today and next year get a quad, but 4 out of 5 times the upgrade would require a new mobo anyway so I'd rather wait another month or two, get a 45nm quad and the 9800 when it comes out.

My biggest disappointment with my last build was NOT jumping on the "new" slot and instead getting an AGP mobo. That is what has really hampered my gaming the last year or so. Once the main manufacturers stopped producing AGP gfx cards my upgrade path stopped cold. If I could go back to Jan 05 I would have spent the extra $50-100 on a mobo supporting PCI-E, which would have allowed me to upgrade past my current 6800GT and keep on gaming. Right now I have a box of games I've never played (gifts from Christmas) because my system can't even load them.

So in short, the duals are right NOW the better buy for gaming, but I'd hedge my bets and splurge on a 45nm quad when they come out. In all honesty unless you play RTS games heavily, or we have some crazy change of mindset by game producers (not likely) the gpu will continue to be the bottleneck at anything above 17-19" LCD resolutions. I actually just got a really nice 19" LCD this past Christmas to replace my aging 19" CRT and I did it for a very good reason. All it takes is to see a game like Crysis and realize that we may not be ready yet for the resolutions that 20/22/24" displays run at, unless we have the cash to burn on top of the line components and upgrade at a much more frequent rate.

2cents.