Real World DirectX 10 Performance: It Ain't Pretty

by Derek Wilson on July 5, 2007 9:00 AM EST - Posted in GPUs

Company of Heroes

While Company of Heroes was first out of the gate with a DirectX 10 version, Relic didn't simply recompile their DX9 code for DX10: Company of Heroes was planned for DX10 from the start, before there was any hardware available to test with. We are told that it's quite difficult to develop a game when working only from the API specification. Apparently Relic was very aggressive in their use of DX10-specific features and had to scale back their effort to better fit the hardware that actually ended up hitting the street.

Although Microsoft requires support for specific features before a part can be certified as DX10 compliant, it does not require any minimum level of performance for those features. This certainly made it hard for early adopters to produce workable code before the hardware arrived, as developers had no idea which features would run fastest and most efficiently.

In the end, many of the DX10-specific features in CoH had to be rewritten in a way that could have been implemented on DX9 as well. That's not to say that DX10-exclusive features aren't there (the game does use geometry shaders in new effects); it's just that doing things much as they are done today offers better performance and more consistency across hardware platforms. Let's take a look at some of what the DX10 version adds.

The lighting model has been upgraded to be fully per-pixel, with softer shadows and more of them. All lights can cast shadows, making night scenes more detailed than in the DX9 version. These shadows are created by generating cube maps on the fly from each light source, using a combination of instancing and geometry shading.
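The article doesn't describe Relic's shadow code beyond cube maps built with instancing and geometry shading. As a rough illustration of the general Direct3D 10 technique only, here is a Python sketch (standing in for shader code) of single-pass cube-map rendering: a geometry shader receives each triangle once and emits six copies, one per cube face, each transformed into that face's light-space view and tagged with the face it should be rasterized into (in HLSL, the SV_RenderTargetArrayIndex output). The helper names, the per-face look/up vectors, and the use of Python are assumptions, not Relic's implementation; a common variant uses instancing instead, drawing six instances and using the instance ID to pick the face.

```python
# Hypothetical sketch of single-pass cube-map shadow rendering, written in
# Python to stand in for shader code. Not Relic's implementation.

# Direct3D cube-map face order: +X, -X, +Y, -Y, +Z, -Z. Each entry is the
# (look direction, up vector) pair commonly used for that face's camera
# in left-handed Direct3D samples (an assumption for this sketch).
CUBE_FACES = [
    ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0)),   # +X
    ((-1.0, 0.0, 0.0), (0.0, 1.0, 0.0)),  # -X
    ((0.0, 1.0, 0.0), (0.0, 0.0, -1.0)),  # +Y
    ((0.0, -1.0, 0.0), (0.0, 0.0, 1.0)),  # -Y
    ((0.0, 0.0, 1.0), (0.0, 1.0, 0.0)),   # +Z
    ((0.0, 0.0, -1.0), (0.0, 1.0, 0.0)),  # -Z
]


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def look_at_lh(eye, forward, up):
    """Left-handed look-at: returns a function mapping world -> view space.
    Assumes forward and up are unit length and perpendicular."""
    z_axis = forward
    x_axis = cross(up, z_axis)
    y_axis = cross(z_axis, x_axis)

    def to_view(p):
        d = sub(p, eye)
        return (dot(d, x_axis), dot(d, y_axis), dot(d, z_axis))

    return to_view


def face_for_direction(d):
    """Index of the cube face a light-to-point direction falls on:
    the axis with the largest magnitude, positive or negative side."""
    axis = max(range(3), key=lambda i: abs(d[i]))
    return 2 * axis + (0 if d[axis] > 0 else 1)


def amplify_triangle(triangle, light_pos):
    """Mimics one geometry shader invocation: emit the same triangle six
    times, once per face, tagged with the face index (the render target
    array slice). Clipping to each face's frustum is left to the rasterizer."""
    views = [look_at_lh(light_pos, fwd, up) for fwd, up in CUBE_FACES]
    return [(face, [views[face](v) for v in triangle]) for face in range(6)]
```

Under this approach each shadow caster is submitted once per light instead of once per face, which is the CPU-side saving geometry shaders were meant to offer; the GPU still has to process six copies of every triangle.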

Company of Heroes DirectX 9

Company of Heroes DirectX 10

There is more debris and grass around levels to add detail to the terrain. Rather than textures, actual geometry is used (through instancing and geometry shaders) to create procedurally generated "litter" such as rocks and short grass.
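One common way to handle procedural "litter" like this is a seeded random scatter drawn with instancing: each rock or grass mesh is submitted once, together with a buffer of per-copy transforms. The Python sketch below shows that general idea under stated assumptions; the LitterInstance type, the tile-based seeding, and every density and scale value are hypothetical and not taken from Company of Heroes.

```python
import random
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical sketch: scatter "litter" (rocks, grass tufts) over a terrain
# tile and group it so each mesh type can go out as one instanced draw call.
# All names, densities, and ranges are illustrative assumptions.


@dataclass(frozen=True)
class LitterInstance:
    mesh: str           # which shared mesh to draw ("rock" or "grass")
    x: float            # world-space position on the terrain plane
    z: float
    yaw_degrees: float  # random rotation so copies don't look identical
    scale: float


def tile_seed(base_seed, tile_x, tile_z):
    """Mix tile coordinates into one integer seed so a tile always
    regenerates the same litter without storing it anywhere."""
    return (base_seed * 73856093) ^ (tile_x * 19349663) ^ (tile_z * 83492791)


def scatter_litter(tile_x, tile_z, tile_size=16.0, density=0.5,
                   grass_ratio=0.75, base_seed=1234):
    rng = random.Random(tile_seed(base_seed, tile_x, tile_z))
    count = int(tile_size * tile_size * density)
    instances = []
    for _ in range(count):
        mesh = "grass" if rng.random() < grass_ratio else "rock"
        instances.append(LitterInstance(
            mesh=mesh,
            x=tile_x * tile_size + rng.uniform(0.0, tile_size),
            z=tile_z * tile_size + rng.uniform(0.0, tile_size),
            yaw_degrees=rng.uniform(0.0, 360.0),
            scale=rng.uniform(0.7, 1.3),
        ))
    return instances


def build_instance_buffers(instances):
    """Group per-instance data by mesh: one buffer, and so one instanced
    draw call, per mesh type instead of one draw call per object."""
    buffers = defaultdict(list)
    for inst in instances:
        buffers[inst.mesh].append((inst.x, inst.z, inst.yaw_degrees, inst.scale))
    return dict(buffers)
```

With the default parameters a 16x16 tile gets 128 pieces of litter, but they cost at most two draw calls, which is why instancing makes real geometry affordable where a texture would otherwise be used.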

Triple buffering is enabled by default, but has been disabled (along with vsync) for our tests.
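Disabling vsync (and with it triple buffering) matters for benchmarking because vsync ties what is displayed to the monitor's refresh rate. The simplified steady-state model below assumes every frame takes the same time to render and a 60 Hz display; it is an illustration, not a measurement. With double buffering and vsync, a GPU that needs 20 ms per frame is shown at 30 fps rather than 50 fps, while idealized triple buffering recovers the 50 fps but still caps at the refresh rate, so neither mode reports raw performance.

```python
import math

# Simplified model (an assumption, not measured data): the frame rate you
# actually see when every frame takes render_ms milliseconds to draw.


def fps_no_vsync(render_ms):
    """Raw throughput: a new frame is shown as soon as it's finished."""
    return 1000.0 / render_ms


def fps_vsync_double_buffered(render_ms, refresh_hz=60):
    """With two buffers and vsync, a frame that misses a refresh is held
    for a whole extra interval, so the rate snaps to refresh_hz / n."""
    interval_ms = 1000.0 / refresh_hz
    return refresh_hz / math.ceil(render_ms / interval_ms)


def fps_vsync_triple_buffered(render_ms, refresh_hz=60):
    """Idealized triple buffering: a spare back buffer lets the GPU keep
    rendering, so the rate is capped by the refresh but not quantized."""
    return min(refresh_hz, fps_no_vsync(render_ms))
```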

We discovered that our cards with 256MB of RAM or less had trouble running with 4xAA under DirectX 10. Apparently this is a known issue with CoH on 32-bit Vista, which runs out of addressable memory. Relic says the solution is to switch to the 64-bit version of the OS, which we have not yet had time to test.
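For a sense of why 4xAA adds memory pressure, here is back-of-the-envelope arithmetic for multisampled render targets. It assumes 4-byte color and 4-byte depth/stencil stored per sample with no compression, and counts only the main color and depth buffers (no resolve buffer, textures, or shadow cube maps). The article doesn't say which allocations exhaust the address space; for reference, a 32-bit Windows process gets 2 GB of user-mode virtual address space by default.

```python
# Back-of-the-envelope arithmetic (assumed formats, no compression) for the
# video memory taken by multisampled render targets.


def msaa_target_bytes(width, height, samples, color_bytes=4, depth_bytes=4):
    """Bytes for one multisampled color buffer plus its depth/stencil buffer.
    Assumes 32-bit color (e.g. RGBA8) and 32-bit depth/stencil (e.g. D24S8),
    each stored once per sample."""
    return width * height * samples * (color_bytes + depth_bytes)


MIB = 1024 * 1024

for w, h in [(1280, 1024), (1920, 1200)]:
    no_aa = msaa_target_bytes(w, h, 1)
    four_x = msaa_target_bytes(w, h, 4)
    print(f"{w}x{h}: {no_aa / MIB:.1f} MiB without AA, "
          f"{four_x / MIB:.1f} MiB with 4xAA")
```

This prints 10.0 MiB versus 40.0 MiB at 1280x1024 and 17.6 MiB versus 70.3 MiB at 1920x1200: under these assumptions 4xAA quadruples the footprint of the main render targets.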

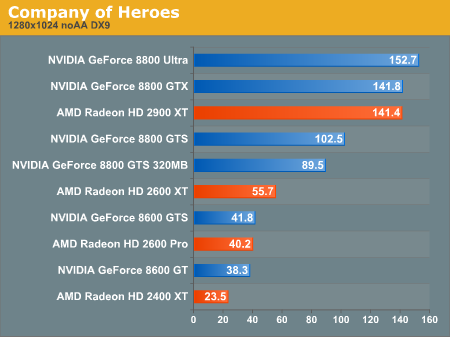

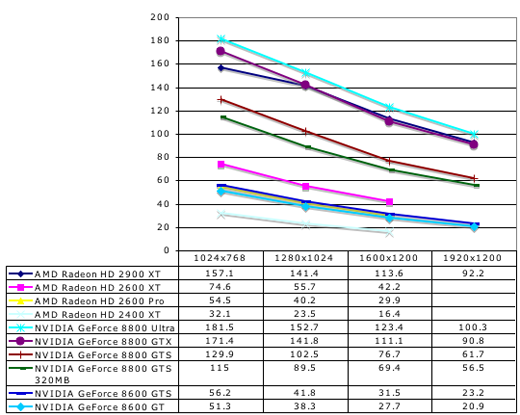

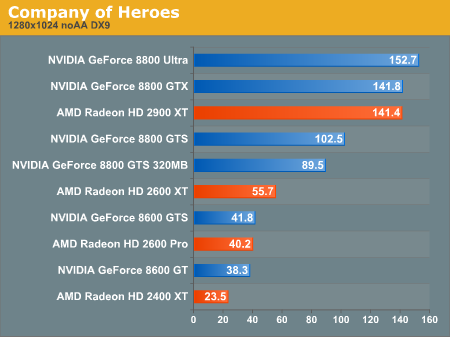

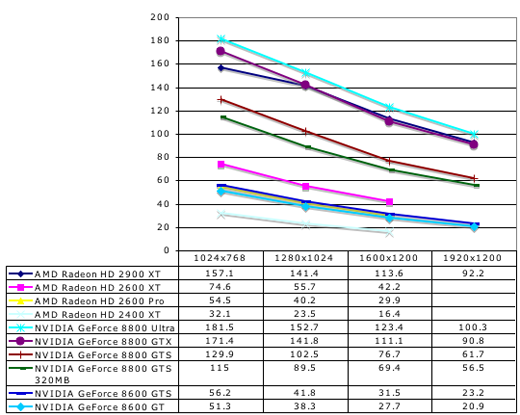

DirectX 9 Tests

Company of Heroes DX9 Performance

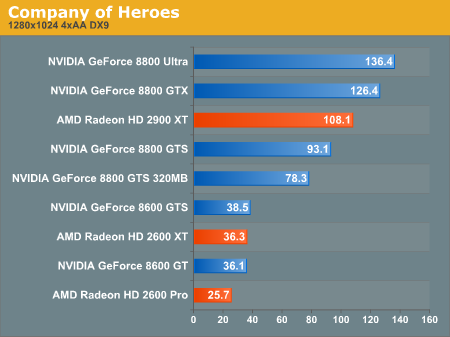

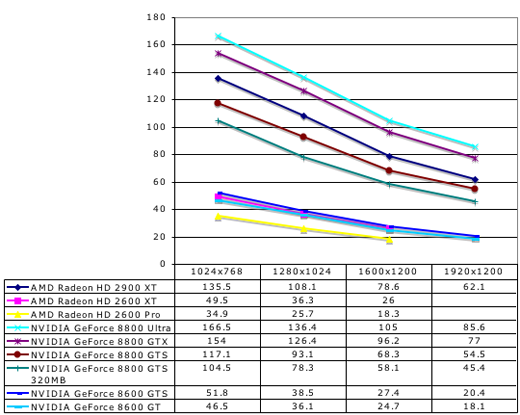

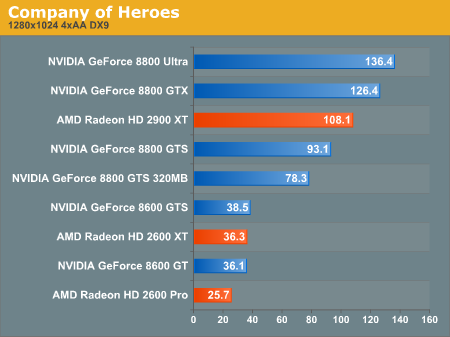

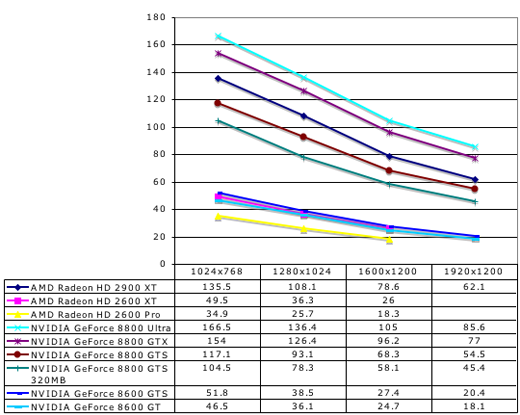

Company of Heroes 4xAA DX9 Performance

Under DX9, the Radeon HD 2900 XT performs quite well in Company of Heroes, keeping up with the 8800 GTX. Despite taking a slightly heavier hit from enabling 4xAA, the 2900 XT still manages to beat its 8800 GTS competition. But the story changes when we move to DX10.

DirectX 10 Tests

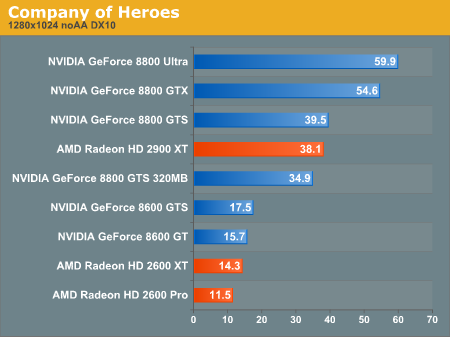

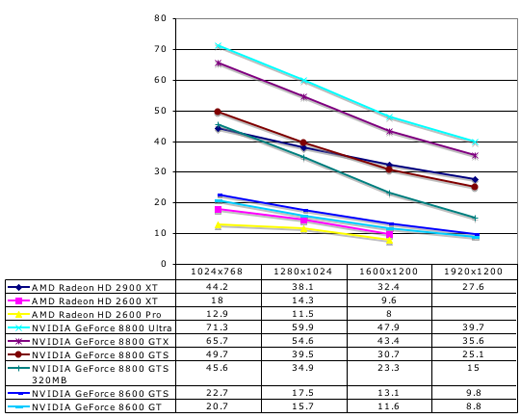

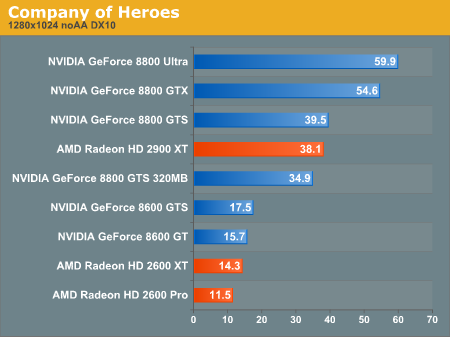

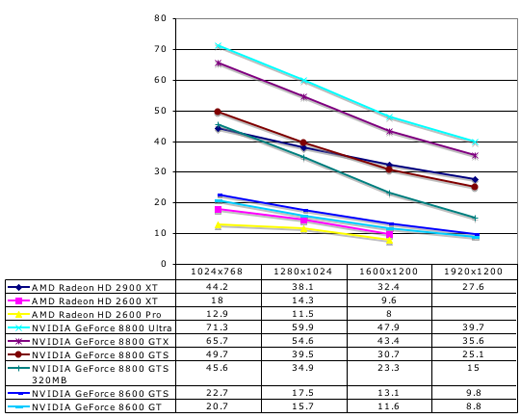

Company of Heroes DX10 Performance

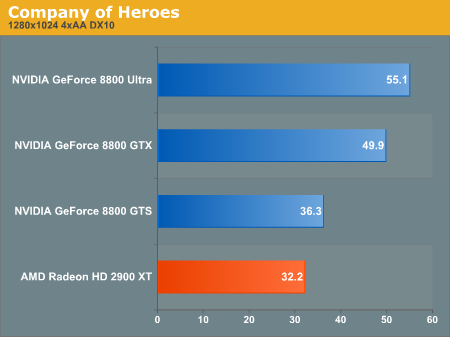

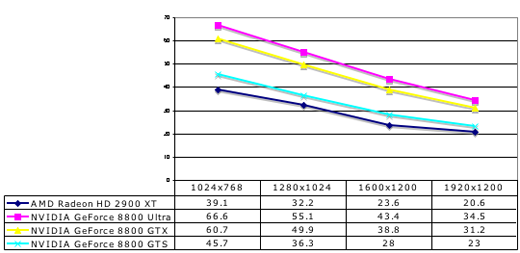

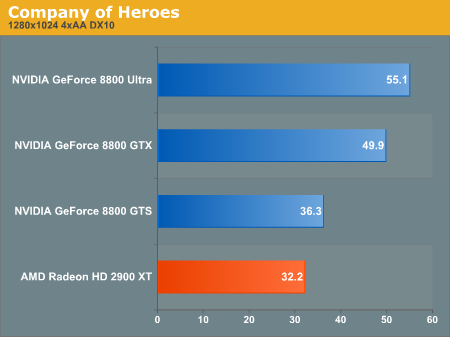

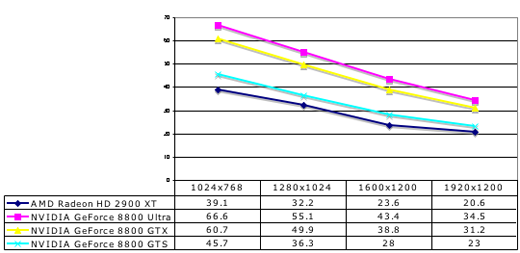

Company of Heroes 4xAA DX10 Performance

When running with all the DX10 features enabled, the HD 2900 XT falls to just below the performance of the GeForce 8800 GTS. Once again, the low-end NVIDIA and AMD cards are unable to run at playable framerates under DX10, though the NVIDIA cards do lead AMD.

Enabling 4xAA further hurts the 2900 XT relative to the rest of the pack. We will try to stick with Windows Vista x64 in the future in order to run numbers with this game on hardware with less RAM.

59 Comments

titan7 - Wednesday, July 11, 2007

CoH also got 59.9fps on the GTX Ultra. Today's d3d10 games can run at full frame rates on today's hardware. Just ensure you're using the latest drivers and you have the most expensive card money can buy ;)

BigDDesign - Thursday, July 5, 2007

I'm with Derek here. I liked your article. We need much more powerful hardware and time for DX10, Drivers, Developers & Vista to get it together. A couple of years from now, all should be good with DX10. Not any sooner methinks.

WaltC - Thursday, July 5, 2007

This article, I thought, was extremely poor for several reasons:

(1) DX10 in terms of developer support presently is just about exactly where DX9 was when Microsoft first released it. At the time, people were swearing up & down that DX8.1 was "great" and wondering what all of the fuss about DX9 really meant. Then we saw the protracted "shader model wars" in which nVidia kept defending pre-SM2.0 modes while ATi's 9700 Pro pushed nVidia all the way back to the drawing boards, as its SM2.0 support, specific to DX9, created both image quality and performance that it took nVidia a couple of years to catch.

AnandTech did indeed mention almost in passing that DX10 was still early yet, and that much would undoubtedly improve dramatically in the coming months, but I think that unfortunately AT created the impression that DX10 and DX9 were exactly *alike* except for the fact that DX10 framerates were about half as fast on average as DX9 framerates. A cardinal sin of omission, no doubt about it, because...

(2) DX10 is primarily if not exclusively about improvements in Image Quality. It is *not* about maintaining DX9-levels of IQ while outperforming DX9. It is about creating DX10 levels of Image Quality--period. AnandTech does not seem to understand this at all.

(3) First lesson in Image Quality analysis that even newbies can readily understand is this: if the performance is not where you want it, but the IQ is where you want it, then you do the following to improve performance *without* sacrificing Image Quality (This is a lesson that AnandTech truly seems to have completely forgotten):

Instead of talking about how sorry the performance was in DX10 titles (those very few early attempts that AT looked at), AT should have seen what AT could have done to increase performance while maintaining DX10-levels of Image Quality. That is, AT should have *lowered* test resolutions and raised the level of FSAA employed to get the best balance of Image quality and performance. AnandTech did not even try to do this--which in my view is inexcusable and fairly unforgivable. It is a very bad mistake. I'm sorry--but many, many people, including me, do not use 1280x1024 *exclusively* while playing 3d games. My DX9 resolution of choice is 1152x864, for instance.

The point to be made about DX10 is *not* frame rates locked in at 1280x1024. Sorry AT--you really screwed the pooch on this one. The whole point of DX10 is *better image quality* which everyone who ever graduated from the 3d-school-of-hard-knocks is *supposed* to know!

So, just what does a bunch of *bar charts* detailing absolutely nothing except frame rates tell us about DX10? Not much, if anything at all. Gee, it does tell us that with the reduced image quality that DX9 is capable of providing contrasted with DX10, that DX9 runs faster in terms of frames per second on DX10 hardware! Gosh, who might ever have guessed....<sarcasm>

IMO, the fact that DX10 software even early on is running slower than DX9 on DX10-compliant hardware tells *me* nothing except that DX10 is demanding a lot more work out of the hardware than DX9, which means that we can expect the Image Quality of DX10 to be much better than DX9. These early games that AT tested with are merely the tip of the iceberg of what is to come. AnandTech really blew this one.

Jeff7181 - Tuesday, September 11, 2007

"DX10 is *not* frame rates locked in at 1280x1024"

Too bad you didn't say this earlier in your rant, I could have saved myself a few minutes and stopped reading sooner.

Thanks for the laugh though... imagine... someone who thinks 1280x1024 is a frame rate telling AnandTech they screwed the pooch when publishing this article. LOL

titan7 - Thursday, July 12, 2007

This article was awesome. Too bad reality doesn't match your expectations or you'd like it too.

1) You have no idea what the "shader war" was about. There isn't even a single parallel to be drawn if you *correctly* look back.

2) d3d10 is nothing about image quality. No hardware on the market or rumoured to be coming out next generation can handle running full-length SM2.0b shaders! And forget about even 3.0! Or even 2.0a that the GeForceFX supported for that matter. d3d10 is about one thing: making d3d on the PC more like a console. That means lower CPU (not GPU!) overhead (more performance in CPU limited situations) and making lives easier for developers.

3) AT has CoH at 1024x768, 1280x1024, 1600x1200, and 1920x1200 (common native LCD resolutions). These days everybody has bigger monitors and playing below 1024x768 is pretty rare. You can extrapolate your 1152x864 resolution from that.

What this shows us is if you increase the image quality (see the screen shots) today's cards don't have enough power to run at full frame rate, with the exception of the 8800 GTX Ultra. AT did a great job showing the world that with simple bar graphs and everything. It's too bad you were too angry to realize that is all it was trying to show.

strikeback03 - Friday, July 6, 2007

umm, they tested Company of Heroes and Call of Juarez at 1024x768, and Lost Planet at 800x600, and some of the cheaper cards could still not maintain playable frame rates. How low on the resolution do you want them to go?

jay401 - Friday, July 6, 2007

I'm not sure how raising FSAA is going to improve performance?

Nor how removing DX10 visuals but lowering screen res will "maintain DX10 level of visuals" either?

anandtech02148 - Thursday, July 5, 2007

Excellent, sincere analysis of the current hardware situation. It led me to some afterthoughts:

- Maybe a $600 PS3 isn't so bad after all.

- What am I going to do with this 8800 GTX and the lack of PC games? It's quite a dry season compared to consoles.

- For those of you who have held out longer than I have, an 8800 GTS or 2900 XT is a decent investment if you have a 1920x1200 monitor to go with it.

kilkennycat - Thursday, July 5, 2007

... if you presently have a DX9 system with acceptable performance. Until the NEXT generation of DX10 hardware is released.

Anybody who goes out and buys a current Dx10 card ( or even worse, dual cards ) just because "the Dx10 games are coming" ( and "I want bragging-rights") has a lot more money than sense. Buying a 8600 or 2600 for acceptable HD-decoding in your HTPC (or your mid-range PC with a weak CPU) is the only purchasing action with the current Dx10 offerings that makes total sense. All of the upcoming Dx10-capable game-titles for 2007 will have excellent Dx9/SM3 graphics. The lack of Dx10 hardware will smother "bragging-rights" but will have zero effect on playability.

nVidia has been developing the successor family to the G80-series GPU for almost a year now and the first graphics cards from this new generation are expected by the end of 2007. I would not be at all surprised if the first card out of the chute in the new family will immediately fill the cost-space between 8600GTX and 8800GTS, but with DX9 and Dx10 performance far superior to the 8800GTS. No doubt the GPU will also be 65nm, since the manufacturing/yield cost of the huge 80nm G80 die is the immovable stumbling-block to dropping the price of the 8800GTS.

KeithTalent - Thursday, July 5, 2007

That's fine if you game at resolutions below 1680x1050, but some of us game at higher resolutions, and most high-end DX9 cards were struggling mightily to play the latest DX9 games (Oblivion, Supreme Commander, STALKER, etc...).

These new generation cards are not only about DX10, they are also about improved performance (exponentially improved performance actually) over that last generation, and some of us actually need that power.

What you said is all well and good for you if you are still gaming at 800x600 or whatever, but I like my resolution a little higher thank you very much.

KT