ATI Radeon HD 2900 XT: Calling a Spade a Spade

by Derek Wilson on May 14, 2007 12:04 PM EST - Posted in GPUs

General Image Quality

Beyond antialiasing, there are quite a number of factors that go into making real-time 3D look good. Real-time graphics are an optimization problem, and the balance between performance and quality is very important. There is no single "right" way to do graphics, and AMD and NVIDIA must listen carefully to developers and consumers to deliver what they believe is the sweet spot between doing things fast and doing things accurately.

NVIDIA currently offers much more customizable image quality. Users are able to turn different optimizations on and off as they see fit. AMD really only offers a couple of specific settings that affect image quality, while most of their optimizations are handled on a per-game basis by the ominous feature known as Catalyst A.I. The options we have are disabled, standard and advanced. This doesn't really tell us what is going on behind the scenes, but we leave this setting on standard for all of our tests, as this is the default setting and most users will leave it alone.

Aside from optimizations, texture filtering plays a large role in image quality when high levels of filtering are called for. It's trivial to point sample or bilinear filter, and no one skimps on these duties, but when we get to trilinear and anisotropic filtering the number of texture samples we need and the number of calculations we must perform per pixel go up very quickly. In order to mitigate the cost of these operations, both AMD and NVIDIA attempt to apply high levels of filtering where they are needed and not-so-high levels of filtering where it won't matter that much. Of course there is much debate over where to draw the lines here, and NVIDIA and AMD choose different paths.
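To put rough numbers on how quickly the sample count grows, here is a minimal sketch that assumes a textbook filtering model: 4 texels per bilinear tap, two mip levels for trilinear, and up to N trilinear probes for Nx anisotropic filtering. The function name `texel_fetches` is made up for illustration, and this is not a description of how R600 or G80 actually schedule their texture units. Real hardware varies the number of probes per pixel, which is exactly the optimization discussed above.

```python
def texel_fetches(mode: str, max_aniso: int = 1) -> int:
    """Nominal worst-case texel reads per pixel under a textbook model.

    This is an illustrative assumption, not vendor-documented behavior.
    """
    bilinear = 4  # a 2x2 texel footprint from a single mip level
    if mode == "point":
        return 1
    if mode == "bilinear":
        return bilinear
    if mode == "trilinear":
        # one bilinear tap in each of two adjacent mip levels
        return 2 * bilinear
    if mode == "anisotropic":
        # up to max_aniso trilinear probes along the axis of anisotropy
        return max_aniso * 2 * bilinear
    raise ValueError(f"unknown filter mode: {mode}")


if __name__ == "__main__":
    for mode, aniso in [("point", 1), ("bilinear", 1), ("trilinear", 1),
                        ("anisotropic", 8), ("anisotropic", 16)]:
        print(mode, aniso, texel_fetches(mode, aniso))
```

Under these assumptions, 16x anisotropic filtering with trilinear can touch up to 128 texels per pixel versus 4 for bilinear, which is why neither vendor applies the maximum everywhere.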

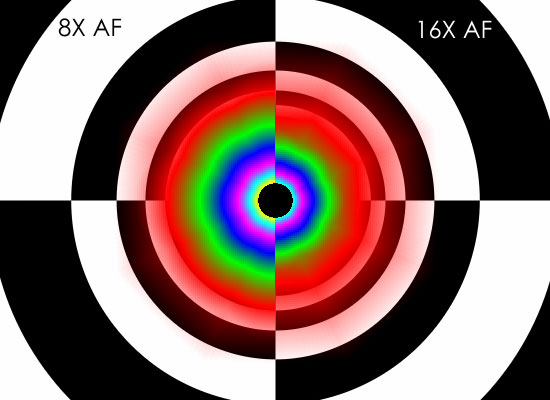

To investigate texture filtering quality, we have employed the trusty D3D AF-Tester. This long-lived application enables us to look at one texture with different colored mipmap levels to see how hardware handles filtering them under different settings. Thankfully, we don't have to talk about angle-dependent anisotropic filtering (which is actually a contradiction in terms anyway). AMD and NVIDIA both finally do good quality anisotropic filtering that results in higher-resolution textures being used more of the time where possible. Take a look at these images to see how the different hardware stacks up.

[Image comparison: D3D AF-Tester tunnel, 8x/16x AF, on G80, R5xx, and R6xx]

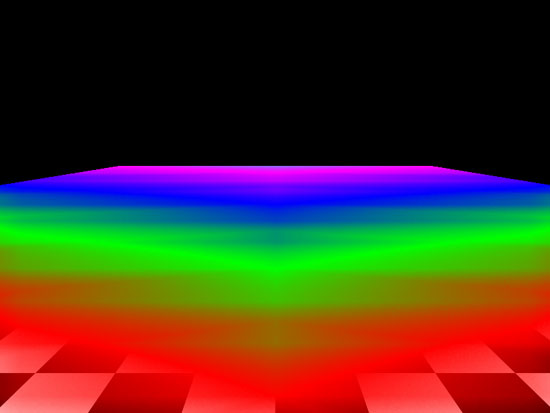

It still looks like NVIDIA is doing slightly more angle-independent filtering. In practice, it will be very difficult to tell the difference between an image rendered on AMD hardware and one rendered on NVIDIA hardware. We can also see that AMD has slightly tweaked their AF technique to eliminate some of the odd transitions we noticed on R5xx hardware. This comes through a little better if we look at a flat plane:

[Image comparison: D3D AF-Tester flat plane, 8x AF, on R5xx and R6xx]
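As a rough illustration of why higher AF levels keep higher-resolution mipmap levels in view in tests like these, the sketch below follows the commonly published anisotropic LOD model (the one described in the OpenGL `EXT_texture_filter_anisotropic` extension). The function name `mip_lod` and its parameters are made up for illustration, and actual G80, R5xx and R6xx hardware may well deviate from this formula.

```python
import math


def mip_lod(p_max: float, p_min: float, max_aniso: int = 1) -> float:
    """Mip level of detail for a pixel footprint (0 = full-resolution level).

    p_max, p_min: footprint length in texels along its major and minor axes.
    max_aniso:    maximum anisotropy setting (1 means isotropic filtering).
    Illustrative model only; not taken from any vendor's documentation.
    """
    if p_max <= 1.0:
        return 0.0  # magnification: always the base level
    p_min = max(p_min, 1e-9)  # guard against division by zero
    # number of probes taken along the major axis, capped by the AF setting
    probes = max(1, min(math.ceil(p_max / p_min), max_aniso))
    # each probe covers a shorter stretch, so a sharper mip level is usable
    return max(0.0, math.log2(p_max / probes))


if __name__ == "__main__":
    # a steeply angled surface: footprint of 16 x 1 texels
    for aniso in (1, 2, 4, 8, 16):
        print(f"{aniso:2d}x AF -> mip level {mip_lod(16.0, 1.0, aniso)}")
```

With a 16 x 1 texel footprint, the model selects mip level 4 with no AF, level 1 at 8x, and the base level at 16x. In the tester, that shows up as the colored bands being pushed further into the distance.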

We did happen to notice at least one image quality issue not related to texture filtering on AMD hardware. The problem turns up in Rainbow Six: Vegas in the form of very bad banding where we should see HDR lighting. We didn't notice this problem on G80, as we can see from our comparison.


We also noticed a small issue with Oblivion at one point where the Oblivion gate shader would bleed through other objects, but this was not reproducible and we couldn't get a screenshot of it. This means it could be a game-related issue rather than a hardware or driver problem. We'll keep our eyes peeled.

Overall IQ of the current DX10 hardware available is quite good, but we will continue to dig further into the matter to make sure that everything stays that way. We're also waiting for DX10 games before we can determine if there are other differences, but hopefully that won't be the case as DX10 has a single set of requirements.

86 Comments


johnsonx - Monday, May 14, 2007 - link

and to which are you going to admit to?

What was that old saying about glass houses and throwing stones? Shouldn't throw them in one? Definitely shouldn't throw them if you ARE one!

Puddleglum - Monday, May 14, 2007 - link

You mean, while it does compete performance-wise?

johnsonx - Monday, May 14, 2007 - link

No, I'm pretty sure they mean DOESN'T. That is, the card can't compete with a GTX, yet still uses more power.

INTC - Monday, May 14, 2007 - link

Chadder007 - Monday, May 14, 2007 - link

When will we have the 2600's out in review?? That's the card I'm waiting for.

TA152H - Monday, May 14, 2007 - link

Derek,

I like the fact you weren't mincing your words, except for a little on the last page, but I'll give you a perspective of why it might be a little better than some people will think.

There are some of us, and I am one, that will never buy NVIDIA. I bought one, had nothing but trouble with it, and have been buying ATI for 20 years. ATI has been around for so long, there is brand loyalty, and as long as they come out with something that is competent, we'll consider it against their other products without respect to NVIDIA. I'd rather give up the performance to work with something I'm a lot more comfortable with.

The power though is damning, I agree with you 100% on this. Any idea if these beasts are being made by AMD now, or still whoever ATI contracted out? AMD is typically really poor in their first iteration of a product on a process technology, but tend to improve quite a bit in succeeding ones. I wonder how much they'll push this product initially. It might be they just get it out to have it out, and the next one will be what is really a worthwhile product. That only makes sense, of course, if AMD is now manufacturing this product. I hope they are, they surely don't need to make any more of their processors that aren't selling well.

One last thing I noticed is the 2400 Pro had no fan! It had a heatsink from Hell, but that will still make this a really attractive product for a growing market segment. Any chance of you guys doing a review on the best fanless cards?

DerekWilson - Wednesday, May 16, 2007 - link

TSMC is manufacturing the R600 GPUs, not AMD.

AnnonymousCoward - Tuesday, May 15, 2007 - link

"I bought one, had nothing but trouble with it, and have been buying ATI for 20 years."

That made me laugh. If one bad experience was all it took to stop you from using a computer component, you'd be left with a PS/2 keyboard at best.

"...to work with something I'm a lot more comfortable with."

Are you more comfortable having 4:3 resolutions stretched on a widescreen? Maybe you're also more comfortable with having crappier performance than nvidia has offered for the last 6 months and counting? This kind of brand loyalty is silly.

MadBoris - Monday, May 14, 2007 - link

As far as your brand loyalty, ATI doesn't exist anymore. Furthermore AMD executives will got the staff so you can't call it the same.

Secondly, Nvidia has been a stellar company providing stellar products. Everyone has some ups and downs. Unfortunately with the hardware and drivers this is ATI's (er AMD's) downs.

This card should do ok in comparison to the GTS, especially as drivers mature. Some reviews show it doing better than GTS640 in most tests, so I am not sure where or how discrepancies are coming about. Maybe hardware compatibility, maybe settings.

rADo2 - Monday, May 14, 2007 - link

Many NVIDIA 8600GT/GTS cards do not have a fan, are available on the market now, and are (probably; different league) much more powerful than 2400 ;) But as you are a fanboy, you are not interested, right?