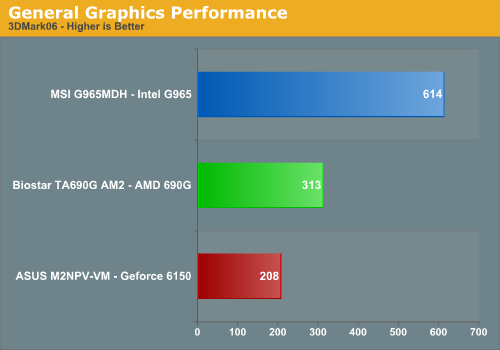

Synthetic Graphics Performance

The 3DMark series of benchmarks developed and provided by Futuremark are among the most widely used tools for benchmark reporting and comparisons. Although the benchmarks are very useful for providing apples-to-apples comparisons across a broad array of GPU and CPU configurations, they are not a substitute for actual application and gaming benchmarks. In this sense we consider the 3DMark benchmarks to be purely synthetic in nature but still valuable for providing consistent measurements of performance.

In our first test, the combination of the Intel Core 2 Duo and G965 makes for a great showing against the AM2 offerings. Okay, so we're being a bit sarcastic in that announcement, as we consider these results to be anything but great. The Intel platform had no issues running the full 3DMark series, but our AMD platforms could not complete the Shader Model 3.0 (SM3.0) tests. However, they exceeded the Intel platform's scores in both the SM2.0 and CPU tests. While the Intel platform passed the SM3.0 tests, this means little in actual game performance, where the G965 failed to properly run games with SM3.0 capability.

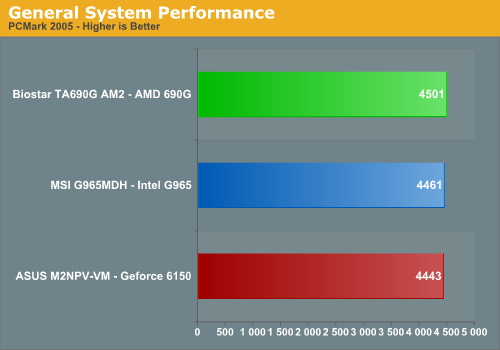

General System Performance

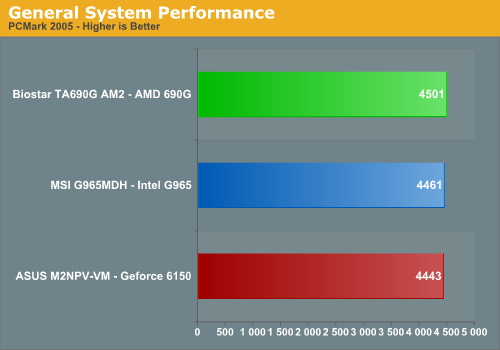

The PCMark05 benchmark developed and provided by Futuremark was designed for determining overall system performance for the typical home computing user. This tool provides both system-level and component-level benchmarking results utilizing subsets of real-world applications or programs. This benchmark is useful for providing comparative results across a broad array of graphics, CPU, hard disk, and memory configurations, along with multithreading results. In this sense we consider the PCMark benchmark to be both synthetic and real-world in nature, and it provides consistency in our benchmark results.

The margins are closer in the PCMark05 results, with the 690G platform showing a minor advantage over the G965 and 6150 platforms. Although this benchmark is designed around actual application usage, we will see if these results mirror our application testing.

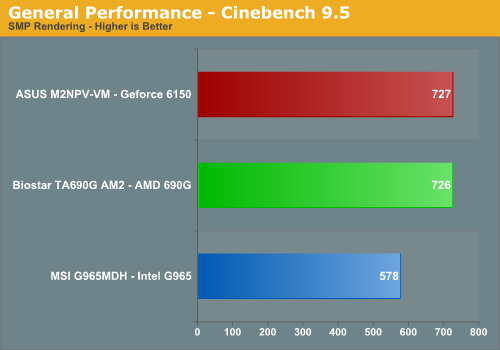

Rendering Performance

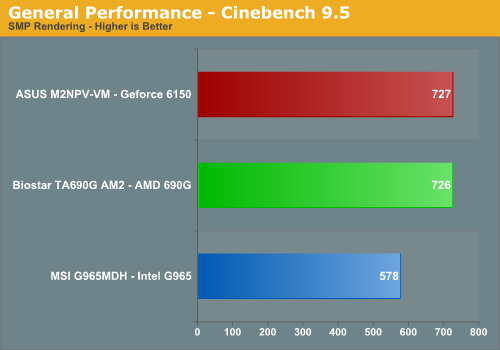

We are using the Cinebench 9.5 benchmark as it tends to heavily stress the CPU subsystem while performing graphics modeling and rendering. Cinebench 9.5 features two different benchmarks, with one test utilizing a single core and the second test showcasing the power of multiple cores in rendering the benchmark image. We utilize the standard multi-core benchmark demo and default settings.

The AM2 processors have always enjoyed an advantage in this test, and the results continue to show the AM2 platform being dominant in this benchmark with a 26% advantage. The two AM2 platforms essentially tie each other, indicating that CPU throughput is equal on either solution.
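
For readers who want to derive a relative-advantage figure like the 26% quoted above from their own benchmark runs, the arithmetic can be sketched as follows. This is only an illustration: the function name `percent_advantage` and the sample scores are placeholders we made up, not values taken from the charts in this review.

```python
def percent_advantage(score_a: float, score_b: float) -> float:
    """Return how much higher score_a is than score_b, as a percentage of score_b."""
    if score_b <= 0:
        raise ValueError("baseline score must be positive")
    return (score_a - score_b) / score_b * 100.0


# Hypothetical placeholder scores, NOT measured results from this article.
am2_score = 630.0
intel_score = 500.0

print(f"{percent_advantage(am2_score, intel_score):.0f}% advantage")
```

A relative figure is worth reading alongside the absolute scores, since a large percentage can correspond to a small absolute gap when both scores are low.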

70 Comments

goinginstyle - Tuesday, March 6, 2007

I think most of the people missed the comments or observations in the article. The article was geared to proving or disproving the capabilities of the 690G and, in a way, the competing platforms. It was obvious to me the office crowd was not being addressed in this article and it was the home audience that the tests were geared towards. I think the separation between the two was correct.

The first computer I bought from Gateway was an IGP unit that claimed it would run everything and anything. It did not and pissed me off. After doing some homework I realized where I went wrong and would never again buy an IGP box unless the video and memory are upgraded, even if it is not for gaming. I have several friends who bought computers for their kids when World of WarCraft came out and bitched non-stop at work because their new Dell or HP would not run the game. At least the author had the balls to state what many of us think. The article was fair and thorough in my opinion, although I was hoping to see some 1080p screen shots. Hint, hint.

Final Hamlet - Tuesday, March 6, 2007

Too bad one can't edit one's comments...

My point (besides correcting a mistake) is that I think this test is gravely imbalanced... you are testing, as you have said yourself, an office chipset. Then why do you do it with an overpowered CPU?

Office PCs in small businesses go after price, and where is the difference in using a mail program between a Core 2 Duo for $1000 and the smallest and cheapest AMD offering for less than $100?

Gary Key - Tuesday, March 6, 2007

We were not testing an office chipset. We are testing chipsets marketed as an all-in-one solution for the home, home/office, multimedia, HTPC, and casual gaming crowd. The office chipsets are the Q965/963 and 690V solutions. The G965 and 690G are not targeted at office workers and were not tested as such. Our goal was to test these boards in the environment and with the applications they are marketed to run.

JarredWalton - Tuesday, March 6, 2007

We mentioned this above, but basically we were looking to keep platform costs equal. Sure, the X2 3800+ is half as expensive and about 30% slower than the 5200+. But since the Intel side was going to get an E6300 (that's what we had available), the use of a low-end AMD X2 would have skewed results in the other direction. We could have used an X2 4800+ to keep costs closer, but that's an odd CPU choice as well, as we would recommend spending the extra $15 to get the 5200+.

The intent was not to do a strict CPU-to-CPU comparison, as we've done that plenty (as recently as the X2 6000+ launch: http://www.anandtech.com/cpuchipsets/showdoc.aspx?...). We wanted to look at platforms and keep them relatively equal in the cost department. All you have to do is look at the power numbers to see that the 5200+ with 690G compares quite well (and quiet well) to the E6300 with G965.

The major selling point of this chipset is basically that it supports HDMI output. That's nice, and for HTPC users it could be a good choice. Outside of that specific market, though, there's not a whole lot to put this IGP chipset above other offerings. That was what we were hoping to convey with the article. It's not bad, but neither is it the greatest thing since sliced bread.

If you care at all about GPU performance, all of the modern IGP solutions are too slow. If you don't care, then they're all fast enough to do whatever most people need. For typical business applications, the vast majority of companies are still running Pentium 4, simply because it is more than sufficient. New PCs are now coming with Core 2 Duo, but I know at least a few major corporations that have hundreds of thousands of P4 and P3 systems in use, and I'm sure there are plenty more. Needless to say, those corporations probably won't be touching Vista for at least three or four years - one of them only switched to XP as recently as two years back.

JarredWalton - Tuesday, March 6, 2007

Perhaps it's because the companies releasing these products make so much noise about how much better their new IGP is compared to the older offerings from their competitors? If AMD had released this and said, "This is just a minor update to our previous IGP to improve features and video quality; it is not dramatically faster and is not intended for games" then we would cut them some slack. When all of the companies involved are going on about how much faster percentage-wise they are than the competition (never mind that it's 5 FPS vs. 4 FPS), we're inclined to point out how ludicrous this is. When Intel hypes the DX9 capability of their G965 and yet still can't run most DX9 applications, maybe someone ought to call them on the carpet?

Obviously, these low performance IGPs have a place in the business world, but Vista is now placing more of a demand on the GPU than ever before, and bare minimum functionality might not be adequate for a lot of people. As for power, isn't it interesting that the HIGHEST PERFORMANCE IGP ends up using the least amount of power? Never mind the fact that Core 2 Duo already has a power advantage over the X2 5200+!

So, while you might like to pull out the names and call us inane 15 year olds, there was certainly thought put into what we said. Just because something works okay doesn't mean it's great, and we are going to point out the flaws in a product regardless of marketing hype. Given how much effort Intel puts into their CPUs, a little bit more out of their IGP and drivers is not too much to ask for.

TA152H - Wednesday, March 7, 2007

Jared,

Maybe they didn't intend their products to be tested in the way you did. As someone pointed out, playing at 800 x 600 isn't that bad, and doesn't ruin the experience unless you have an obsession. Incredibly crude games were incredibly fun, so the resolution isn't going to make or break a game; it's the ideas behind it that will.

You can't be serious about what you want AMD to say. You know they can't; they are in competition and stuff like that would be extremely detrimental to them. Percentages are important, because they may not be running the same games as you are, at the same settings. You would prefer they use absolutes, as if they would give more information? Did AMD actually tell anyone these were excellent for all types of games? I never saw that.

With regards to CPUs and GPUs, you are trying to obfuscate the point. Everyone uses a CPU, some more than others. But, they do sell lower power ones, and even single core ones. Not everyone uses 3D functionality. If you don't get it, I DON'T want it on certain machines of mine. I don't run stuff like that on them, and I don't want the higher power use or heat dissipation problems from it. What you call effort isn't at all, it's a tradeoff. Don't confuse it with you get something for nothing if Intel puts more into it. You pay for it, and that's the problem. People who use it should, people that don't, shouldn't, so the kiddies can play their shoot 'em ups.

Just so you know, I'm both. I have mostly work machines, but two play machines. I like playing some games that require a good 3D card, but just don't like the mentality that the whole world should subsidize a bunch of gameplayers when they don't need it. That's what add-in cards are for. I would be equally against it if no one made 3D cards because most people didn't need them. I like choices, and I don't want to pay for excessive 3D functionality on something that will never use it, to help gameplayers out. Both existing is great, and IGPs will creep up as they always have, when it becomes inexpensive (both in power and initial cost) to add capability, so the tradeoff is minor.

StriderGT - Tuesday, March 6, 2007

Does this chipset support 5.1 LPCM over HDMI or not??? Or more plainly, can someone send 5.1 (games, HD movies, etc.) digitally to a receiver with the 690G? According to your previous article on the 690G, 5.1 at 48 kHz was supported over the HDMI port. Now it's back to 2 channel and AC3 bitstream. Which is it?

Gary Key - Wednesday, March 7, 2007

It is two channel plus AC3 over HDMI. That is the final spec on production level boards and drivers. We will have a full audio review up in a week or so that also utilizes the on-board codec.

StriderGT - Thursday, March 8, 2007

Why is this happening? Why on earth can't they produce a PC HDMI audio solution that outputs up to 7.1 LPCM (96 kHz/24-bit) for ALL sources!?! They already do that for 2 channel sources!!!! Do you have any info from the hardware vendors regarding the reason/s they will not produce such a straightforward and simple solution?!?

PS: There are lots of people demanding a TRUE PC HDMI audio solution, not these SPDIF hacks...

Renoir - Tuesday, March 6, 2007

I'm also interested to know more specifics about the audio side of this chipset. The support of HDMI v1.3 suggests that with an appropriate driver and supporting playback software Dolby TrueHD and DTS-HD bitstreams should be able to be sent via HDMI to a v1.3 receiver with the necessary decoders. Is this a possibility?