NVIDIA's GeForce 8800 (G80): GPUs Re-architected for DirectX 10

by Anand Lal Shimpi & Derek Wilson on November 8, 2006 6:01 PM EST - Posted in GPUs

Our DX9FSAAViewer won't show us the exact sample patterns for CSAA, but we can take a look at where ATI and NVIDIA are getting their color sample points:

[Images: color sample point patterns for ATI, G70, G80, and G80*]

*Gamma AA disabled

As we can see, NVIDIA's 8x color sample AA modes use a much better pseudo-random sample pattern, rather than the combination of two rotated grid 4xAA patterns used in G70's 8xSAA.

While it is interesting to talk about the internal differences between MSAA and CSAA, the real test is pitting NVIDIA's new highest quality mode against ATI's highest quality.

G70 4X G80 16XQ ATI 6X

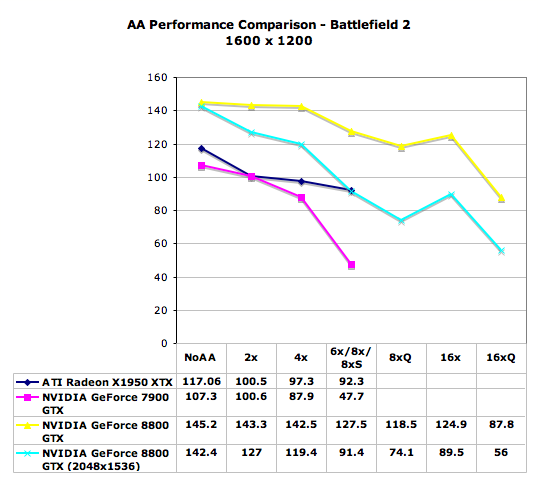

Stacking up the best shows the power of NVIDIA's CSAA: with 16 sample points and 8 color/z values, G80's 16xQ looks much smoother than ATI's 6xAA. Compared to G70, both ATI and G80 look much better. Now let's take a look at the performance impact of CSAA. This graph may require a little explanation to understand, but it is quite interesting and worth looking at.

As we move from lower to higher quality AA modes, performance generally goes down. The exception is G80's 16x mode, whose performance is only slightly lower than 8x. This is because both modes use 4 color samples and differ only in the number of coverage samples. We can see the cost of having more coverage samples than color samples in the performance drop from 4x to 8x on G80. There is another slight drop when increasing the number of coverage samples from 8 to 16, but it is almost nil. With the higher number of multisamples in 8xQ, algorithms that require z/stencil data per sub-pixel may look better, but 16x definitely does a great job with the common edge case at a much lower performance cost. Enabling 16xQ shows us the performance impact of adding more coverage samples on top of 8x multisampling.
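To keep the modes straight, here is a minimal Python sketch that tabulates each G80 mode as a (coverage samples, color/z samples) pair and groups the modes by color sample count. The numbers follow the description in this article; the 4x and 8xQ coverage counts assume one coverage sample per color sample, and the names `CSAA_MODES` and `group_by_color_samples` are made up for illustration.

```python
from collections import defaultdict

# (coverage samples, color/z samples) per mode, as described in the article.
# Assumption: 4x and 8xQ store one coverage sample per color sample.
CSAA_MODES = {
    "4x":   (4, 4),
    "8x":   (8, 4),
    "8xQ":  (8, 8),
    "16x":  (16, 4),
    "16xQ": (16, 8),
}

def group_by_color_samples(modes):
    """Group mode names by how many color/z samples they store per pixel."""
    groups = defaultdict(list)
    for name, (_coverage, color) in modes.items():
        groups[color].append(name)
    return dict(groups)

print(group_by_color_samples(CSAA_MODES))
# {4: ['4x', '8x', '16x'], 8: ['8xQ', '16xQ']}
```

Modes that land in the same group differ only in coverage samples, which is why 8x and 16x perform so similarly while the step up to 8xQ costs more.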

It is conceivable that a CSAA mode using 32 sample points and 8 color points could be enabled to further improve coverage data at nearly the same performance impact as 16xQ (similar to the performance difference we see between 8x and 16x). Whatever the reason this wasn't done in G80, the potential is there for future revisions of the hardware to offer a 32x mode with the performance impact of 8x. Whether the quality improvement is there or not is another issue entirely.
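A rough back-of-the-envelope sketch shows why extra coverage samples could be cheap. This is a toy cost model, not a description of G80's actual storage format: it assumes each color sample holds 32 bits of color plus 32 bits of z/stencil, and that each coverage sample stores only a small index selecting one of the color samples. The function name `bits_per_pixel` and the 32x mode itself are hypothetical.

```python
import math

def bits_per_pixel(coverage, color, bits_per_color_sample=64):
    """Toy estimate of per-pixel AA storage.

    Assumptions (not from the article): 64 bits per color sample
    (32-bit color + 32-bit z/stencil), and each coverage sample stores
    only an index wide enough to pick one of the color samples.
    """
    index_bits = math.ceil(math.log2(color))
    return color * bits_per_color_sample + coverage * index_bits

xq16 = bits_per_pixel(16, 8)   # 16xQ as described in the article
x32 = bits_per_pixel(32, 8)    # hypothetical 32-coverage, 8-color mode
print(xq16, x32)               # 560 608
```

Under these assumptions, doubling the coverage samples from 16 to 32 adds well under 10% to the per-pixel storage, because the color/z samples dominate the cost.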

111 Comments

Sunrise089 - Thursday, November 9, 2006

Then I suppose he's in the market to part with an ugly old high-end CRT. I'd love to buy it from him. Seriously.

JarredWalton - Thursday, November 9, 2006

You want an older 21" Cornerstone CRT? It's a beast, but you can have it for the cost of shipping (which unfortunately would probably be ~$50). I'd also sell my Samsung 997DF 19" CRT for about $50, and maybe an NEC FE991-SB for $50 (which unfortunately has a scratch from my daughter in the anti-glare coating). If anyone lives in the Olympia, WA area, you know how to contact me (I hope). I'd rather someone come by to pick up any of these CRTs rather than shipping, as I don't think I have the original boxes.

DerekWilson - Thursday, November 9, 2006

lol next thing you know links to ebay auctions are gonna start showing up in our articles :-)

yyrkoon - Thursday, November 9, 2006

lol, I've got a 21" techtronics I'll sell for $200 usd, plus shipping ;) Hasn't been used since I purchased my Viewsonic VA1912wb (well, been used very little).

imaheadcase - Wednesday, November 8, 2006

can't stand AA benchmarks myself :)

Question: Do you have any info on what kinda card nvidia is releasing this feb? Is it something in between these 2 cards or something even lower?

Im looking for a $300ish g80! :D

flexy - Wednesday, November 8, 2006

if ANYTHING counts then how those high-end cards perform WITH their various AA settings.... the power of those cards (and the money spent on :) RIGHT translated into ---> IMAGE QUALITY/PERFORMANCE.

Please don't tell me you would get a G80 but do NOT care about AA, this does NOT make any sense...sorry...

I am especially impressed reading that transparency AA has such a LITTLE performance impact. What game engine did you test this on ?

On the older ATI cards (and am i right that T.A. is the same as "adaptive antialiasing" ? )...this feature (depending on game engine) is the FPS killer....eg. w/ games like oblivion (WHERE ARE THE GOTHIC 3 BENCHIES BTW ? :)...much vegetation etc. game-engines.

Enable transparency AA and see all those trees, grass etc. without jaggies.

imaheadcase - Thursday, November 9, 2006

Well lots of people don't care for AA. Even if i had this card I would not use it. I visually see NO difference with it on or off. It's personal taste. I don't even see "jaggies" on my older 9700 PRO card.

flexy - Thursday, November 9, 2006

you sure are talking about ANTIALIASING ???

What resolutions do you run ? Not that my CRT can even handle more than 1600x1200..but even w/ 1600 i get VERY prominent jaggies if i don't run AA.

I made it a habit to run at least 4xAA in ANY game, and some engines (hl2:source engine) etc. run extremely well with 4xAA, even 6xAA is very playable at least with HL2.

The very recent games, namely NWN2 and G3 now don't support AA, playing at 1280x1024 and it looks utterly horrible ! If you say you don't see jags in say ANY resolution under 1600..very hard to believe

imaheadcase - Thursday, November 9, 2006

Yes im talking about antialiasing. I normally play BF2 and oblivion at 1024x768 (9700 pro remember).

Fact is most people won't see them unless someone points them out. The brain is still better at rendering stuff the way you want to see it vs hardware :)

flexy - Thursday, November 9, 2006

ok..but then it's also a performance problem. If it doesn't bother you, well ok.

I also have to settle w/ the fact that many RECENT games are even unable to do AA..however i wish they would.

But once i get a 8800 i will do &&&& to get the most out of IQ, AA, AF, transparency/texture AF, you name it. ALONE also for the reason that i would need a super-high end monitor first to even run resolutions like 2000xsomething...and as long as i have a lame 19" CRT and CANNOT even go over 1600 (99,99% of games even running everything on 1280x or 1360x) i will use all the power to get out best possible IQ in those low resolutions.

Also..looking at the benchmarks..its NOT that you lose any real time gaming-experience since THOSE monster cards are made for exactly this...eg. running oblivion with all those settings at MAX AND AA on and HDR...and you are still in VERY reasonable FPS ranges.