NVIDIA's GeForce 8800 (G80): GPUs Re-architected for DirectX 10

by Anand Lal Shimpi & Derek Wilson on November 8, 2006 6:01 PM EST, posted in GPUs

General Purpose Processing

With all the talk about how general purpose G80 is, can we expect it to replace our shiny new quad-core desktop processor? Not at this point, because of the way most general purpose code uses the CPU. Heavy dependencies between instructions and little parallelism prevent NVIDIA from simply dropping G80 into a motherboard and running Windows on it.

But there are general purpose tasks that lend themselves well to the parallelism of G80, and NVIDIA is enabling developers to take advantage of them via a technology it calls CUDA (Compute Unified Device Architecture).

The major takeaway is that NVIDIA will have a C compiler able to generate code targeted at its architecture. We aren't talking about OpenGL code contorted to use graphics hardware for math. This will be C code, written the way a developer would normally write C.

A programmer will be able to treat G80 as a hugely parallel data processing engine. Applications that require massively parallel compute power should see huge speedups running on G80 compared to the CPU. This includes financial analysis, matrix manipulation, physics processing, and all manner of scientific computation.

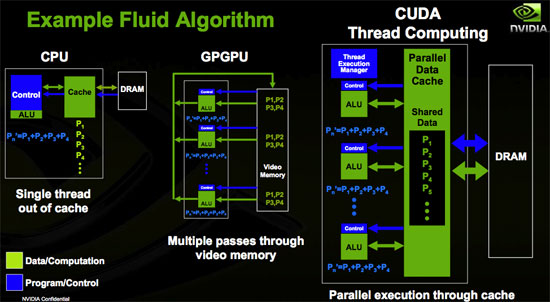

NVIDIA has written a completely separate driver for G80 to run compiled C code. It did this because the usage model for GPGPU programming is so different from that of graphics. The graphics driver and the CUDA driver can both run on G80 at the same time, which may allow programmers to use CUDA for in-game physics on a single card. The CUDA driver changes the conceptual layout of the GPU into something that looks more like this:

This design, along with stream output capabilities, allows programmers to treat the GPU as a general-purpose data processing engine. The 16 SPs in each block can share data with one another and can make multiple passes over that data without writing it out to, and reading it back from, the onboard graphics memory. Developers are also given the ability to manage these caches themselves.

Will NVIDIA make an x86 CPU? Most likely not, but we may see NVIDIA produce more general-purpose processors for the handheld, consumer electronics (CE), and integrated markets. NVIDIA may end up producing system-on-a-chip solutions built on its graphics technology, simply expanding G80 to be more general purpose (and, obviously, cutting some of the SPs to lower costs).

111 Comments


aweigh - Friday, November 10, 2006

You can just use the program DX Tweaker to enable triple buffering in any D3D game and use VSync with negligible performance impact. So you can play with VSync, high resolution, and AA as well. :)

aweigh - Friday, November 10, 2006

I'm gonna buy an 8800 specifically to use 4x4 supersampling in games. Why bother with MSAA on a card like that?

DerekWilson - Friday, November 10, 2006

Supersampling can make textures blurry, especially very detailed textures. And the performance impact will be much greater with longer, more detailed pixel shaders, since the shader must be evaluated for every sub-pixel sample when supersampling.

I think transparency / adaptive AA are enough.

On your previous comment, I don't think we're at the point where we can run triple buffering, vsync, high levels of AA, AND high resolution (2560x1600) without some input lag (triple buffering plus vsync with framerates below your refresh rate can cause problems).

If you're talking about enabling all these options on a lower-resolution LCD panel, then I can definitely see that as a good use of the hardware. And it might be interesting to look at more numbers with these types of options enabled.

Thanks for the suggestion.

aweigh - Saturday, November 11, 2006

I never knew that about supersampling. Is it something similar to Quincunx blurring? And would using a negative LOD bias via RivaTuner/nHancer counteract the effect? How about NVIDIA's Digital Sharpness setting in Color Correction? I've found a smidge of sharpening can do wonders for overall clarity.

By the way, when you said Adaptive AA, were you referring to ATI cards?

Unam - Friday, November 10, 2006

Derek, I saw your comment regarding the rationale for the test resolution. While I understand your reasoning now, it still raises the question: how many of your readers have 30" LCD flat panels?

DerekWilson - Friday, November 10, 2006

There might not be many out there right now, but it's still the right test platform for G80. We did test down to 1600x1200, so people do have that information if they need it.

But it speaks to who should own an 8800 GTX right now. It doesn't make sense to spend that much money on a part if you aren't going to get anything out of it with your 1280x1024 panel.

Owners of a 2560x1600 panel will want an 8800 GTX. Owners of an 8800 GTX will want a 2560x1600 panel. Smooth framerates, plus the ability to enable 4xAA in every game that allows it, are reason enough. People without a 2560x1600 panel should probably wait until prices come down on the 8800 GTX, or until games arrive that push it harder, before buying the card.

Unam - Tuesday, November 14, 2006

Derek, a follow-up on testing resolutions: are the FPS numbers we see in your articles maximum, minimum, or average?

Unam - Friday, November 10, 2006

Who the heck runs 2560x1600? At 4xAA? Come on guys, real-world benchmarks please!

DerekWilson - Friday, November 10, 2006

We did: 1600x1200, 1920x1440, and even 1280x1024 in Oblivion.

dragonsqrrl - Thursday, August 25, 2011

....lol, owned.