Valve Hardware Day 2006 - Multithreaded Edition

by Jarred Walton on November 7, 2006 6:00 AM EST - Posted in Trade Shows

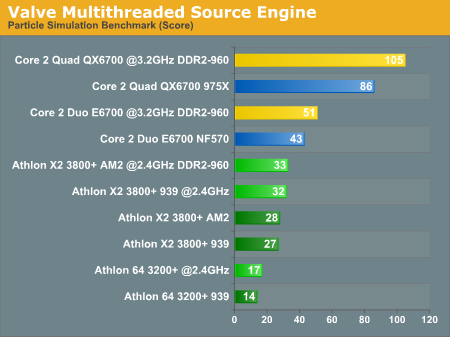

Particle Systems Benchmark

For end users, the more meaningful of the two benchmarks is the particle simulation benchmark, since it has the potential to actually impact gameplay. The only problem is that the map is contrived: four rooms, each showing a different particle system simulation. Still, the benchmark is meaningful as proof that simulating particle systems can require a lot of CPU processing power, and that Valve can multithread the algorithms. How it will actually affect future gaming performance is more difficult to determine. Also note that particle systems are only one aspect of game engine performance that can use more processing cores. Artificial intelligence, physics, animation, and other tasks can benefit as well, and we look forward to the day when we have a full gaming benchmark that exercises all of these areas rather than just particle systems. For now, here's a quick look at the particle system performance results.

The particle simulation benchmark reveals several interesting things. First, it scales almost linearly with the number of processor cores, so the Core 2 Quad system ends up twice as fast as the Core 2 Duo system when running at the same clock speed. We will look at how CPU cache and memory bandwidth affect performance in the future, but at present it's pretty clear that Core 2 once again holds a commanding performance lead over AMD's Athlon 64/X2 processors. As for Pentium D, the program repeatedly crashed when we tried to run it, even with several different graphics cards. There's no reason to assume it would be faster than Athlon X2, though, and we did get Pentium D results on the other test.

Athlon X2 performed more or less the same whether running on socket 939 or AM2, even with high-end DDR2-800 memory. Our E6700 test system generated inconsistent results when overclocked, likely due to limitations of the nForce 570 SLI chipset. For most of the platforms, the 20% overclock brought an average performance increase of 20%, showing again that we are essentially completely CPU limited. Limited granularity makes individual scores deviate slightly from a 20% gain, but it's close enough for now. Finally, comparing Athlon 64 with Athlon 64 X2 on socket 939, the second CPU core improves performance by ~90%.
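Near-linear scaling with core count is what you would expect if each particle can be updated independently of the others. In that case the particle array can be split into slices, one per worker thread. No locking is needed, because no two workers ever touch the same particle.

The Python sketch below illustrates that partitioning, with several assumptions:

- The particle layout (lists of `(x, y, z)` tuples) and the `update_slice` / `update_particles` names are hypothetical.
- The motion model (gravity only, one semi-implicit Euler step) is an illustrative choice.
- None of this is Valve's Source engine code.

Also note that in CPython the global interpreter lock keeps pure-Python threads from running this arithmetic truly in parallel. A native-code engine would apply the same slicing scheme with OS threads to get a real multi-core speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def update_slice(pos, vel, start, end, dt, gravity):
    """Advance particles start..end-1 by one semi-implicit Euler step.

    Hypothetical layout: pos and vel are lists of (x, y, z) tuples.
    Each call writes only its own index range, so slices never conflict.
    """
    for i in range(start, end):
        vx, vy, vz = vel[i]
        vz -= gravity * dt              # gravity only acts along z
        vel[i] = (vx, vy, vz)
        px, py, pz = pos[i]
        # position uses the freshly updated velocity (semi-implicit Euler)
        pos[i] = (px + vx * dt, py + vy * dt, pz + vz * dt)


def update_particles(pos, vel, dt, gravity=9.81, workers=4):
    """Split the particle array into contiguous slices, one task per slice."""
    n = len(pos)
    if n == 0:
        return
    chunk = (n + workers - 1) // workers   # ceiling division
    with ThreadPoolExecutor(max_workers=workers) as pool:
        tasks = [
            pool.submit(update_slice, pos, vel, s, min(s + chunk, n), dt, gravity)
            for s in range(0, n, chunk)
        ]
        for t in tasks:
            t.result()  # wait for every slice and re-raise any worker error


# Usage: eight particles moving along x, starting 10 units up
pos = [(0.0, 0.0, 10.0)] * 8
vel = [(1.0, 0.0, 0.0)] * 8
update_particles(pos, vel, dt=0.5, gravity=2.0, workers=3)
print(pos[0])  # (0.5, 0.0, 9.5)
```

Contiguous slices keep each thread's data together in memory. Because every particle costs about the same to update, equal-sized slices should also keep the workers roughly balanced.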

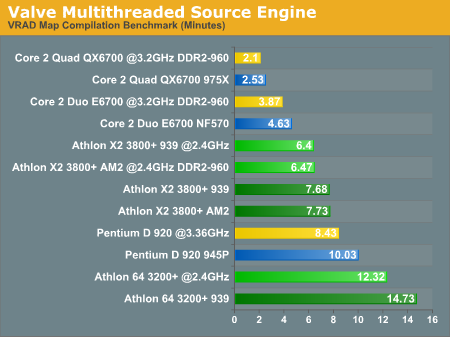

VRAD Map Compilation Benchmark

As more of a developer/content creation benchmark, the VRAD results are not likely to interest as many people. However, keep in mind that better performance here can make employees more productive, so hopefully that means better games sooner. (Or maybe it just means more stress for the content developers?)

The results we got on the map compilation benchmark support Valve's own research. They also help explain why Valve would be very interested in getting more Core 2 Quad systems into its offices. We don't have a single-core Pentium 4 processor represented, but even a Pentium D 920 takes more than twice as long as a Core 2 Duo E6700 system, and about four times as long as Core 2 Quad. Looking at CPU speed scaling, a 20% higher clock speed on the Pentium D resulted in 19% higher performance. If Intel had stuck with the NetBurst architecture, it would need dual-core Pentium D processors running at more than 6.0 GHz to match the performance offered by the E6700. We won't even get into how much power such a CPU would require.
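The 6.0 GHz figure is an extrapolation from two numbers in the paragraph above:

- The Pentium D 920 takes more than twice as long as the E6700.
- A 20% clock increase bought 19% more performance.

The Python sketch below reproduces that kind of estimate under two stated assumptions. First, performance is modeled as proportional to clock^a, with the exponent a fitted to that single 20%/19% data point. Second, the starting point is the Pentium D 920's stock clock of 2.8 GHz. Real chips would likely hit memory and front-side-bus limits well before such clocks, so treat the output as an optimistic floor rather than a prediction.

```python
import math


def required_clock(base_ghz, perf_gap, clock_gain=0.20, perf_gain=0.19):
    """Clock needed to close a performance gap, assuming perf ~ clock**a.

    The exponent a is fitted so that a clock_gain increase yields perf_gain
    (0.20 -> 0.19 by default, the Pentium D overclocking result above).
    """
    a = math.log1p(perf_gain) / math.log1p(clock_gain)
    # Invert perf_gap = (f / base) ** a  =>  f = base * perf_gap ** (1 / a)
    return base_ghz * perf_gap ** (1.0 / a)


# Pentium D 920 runs at 2.8 GHz stock; "more than twice as long" means gap > 2.0
for gap in (2.0, 2.1):
    print(f"{gap:.1f}x gap -> {required_clock(2.8, gap):.2f} GHz")
# 2.0x gap -> 5.79 GHz
# 2.1x gap -> 6.09 GHz
```

Under this model, a gap of exactly 2x already calls for roughly 5.8 GHz. The requirement passes 6 GHz once the gap reaches about 2.1x, which is broadly in line with "more than 6.0 GHz" for a gap of "more than twice as long."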

Performance once again scales almost linearly with clock speed, improving by 20% with the overclock. Moving from single- to dual-core Athlon chips improves performance by about 92%, while going from a Core 2 Duo to a Core 2 Quad improves performance by "only" 84%. It's not too surprising that moving to four cores doesn't scale as well as the single-to-dual move. Still, an 84% increase is very good, roughly equal to what we see in 3D rendering applications.
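One way to read the 92% and 84% figures is through Amdahl's law. It models a workload as a parallel fraction p that splits evenly across n cores, plus a serial remainder that does not. The speedup on n cores is S(n) = 1 / ((1 - p) + p/n).

The Python sketch below solves for the p implied by each measured speedup. This is an assumption-laden estimate. It treats the Athlon and Core 2 results as if they came from one workload on otherwise identical hardware, ignoring cache, memory bandwidth, and architectural differences.

```python
def amdahl_speedup(p, n):
    """Amdahl's law: speedup on n cores when a fraction p of the work is parallel."""
    return 1.0 / ((1.0 - p) + p / n)


def implied_parallel_fraction(s, n1, n2):
    """Solve amdahl_speedup(p, n2) / amdahl_speedup(p, n1) == s for p."""
    return (s - 1.0) / (s * (1.0 - 1.0 / n2) - (1.0 - 1.0 / n1))


# VRAD gains from the text: Athlon 1 -> 2 cores (+92%),
# Core 2 Duo -> Core 2 Quad, i.e. 2 -> 4 cores (+84%)
for label, s, n1, n2 in [("Athlon 1->2", 1.92, 1, 2), ("Core 2 2->4", 1.84, 2, 4)]:
    p = implied_parallel_fraction(s, n1, n2)
    print(f"{label}: parallel fraction ~ {p:.3f}")
# Athlon 1->2: parallel fraction ~ 0.958
# Core 2 2->4: parallel fraction ~ 0.955
```

Both results land near p = 0.95, which would mean roughly 4-5% of the compile work is serial. Under that assumption, the smaller gain from two to four cores is about what Amdahl's law predicts, rather than a sign that VRAD scales poorly.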


55 Comments


Nighteye2 - Wednesday, November 8, 2006

Ok, so that's how Valve will implement multi-threading. But what about other companies, like Epic? How does the latest Unreal Engine multi-thread?

Justin Case - Wednesday, November 8, 2006

Why aren't any high-end AMD CPUs tested? You're testing 2GHz AMD CPUs against 2.6+ GHz Intel CPUs. Doesn't Anandtech have access to faster AMD chips? I know the point of the article is to compare single- and multi-core CPUs, but it seems a bit odd that all the Intel CPUs are top-of-the-line while all AMD CPUs are low-end.

JarredWalton - Wednesday, November 8, 2006

AnandTech? Yes. Jarred? Not right now. I have a 5000+ AM2, but you can see that performance scaling doesn't change the situation. 1MB AMD chips do perform better than 512K versions, almost equaling a full CPU bin - 2.2GHz Opteron on 939 was nearly equal to the 2.4GHz 3800+ (both OC'ed). A 2.8 GHz FX-62 still isn't going to equal any of the upper Core 2 Duo chips.

archcommus - Tuesday, November 7, 2006

It must be a really great feeling for Valve knowing they have the capacity and capability to deliver this new engine to EVERY customer and player of their games as soon as it's ready. What a massive and ugly patch that would be for virtually any other developer.

Don't really see how you could hate on Steam nowadays considering things like that. It's really powerful and works really well.

Zanfib - Tuesday, November 7, 2006

While I design software (so not so much programming as GUI design and whatnot), I can remember my University courses dealing with threading, and all the pain threading can bring.

I predicted (though I'm sure many could say this and I have no public proof) that Valve would be one of the first to do such work, they are a very forward thinking company with large resources (like Google--they want to work on ANYthing, they can...), a great deal of experience and, (as noted in the article) the content delivery system to support it all.

Great article about a great subject, goes a long way to putting to rest some of the fears myself and others have about just how well multi-core chips will be used (with the exception of Cell, but after reading a lot about Cell's hardware I think it will always be an insanely difficult chip to code for).

Bonesdad - Tuesday, November 7, 2006

mmmmmmmmm, chicken and mashed potatoes....

Aquila76 - Tuesday, November 7, 2006

Jarred, I wanted to thank you for explaining in terms simple enough for my extremely non-technical wife to understand why I just bought a dual-core CPU! That was a great progression on it as well, going through the various multi-threading techniques. I am saving that for future reference.

archcommus - Tuesday, November 7, 2006

Another excellent article, I am extremely pleased with the depth your articles provide, and somehow, every time I come up with questions while reading, you always seem to answer exactly what I was thinking! It's great to see you can write on a technical level but still think like a common reader so you know how to appeal to them.

With regards to Valve, well, I knew they were the best since Half-Life 1 and it still appears to be so. I remember back in the days when we weren't even sure if Half-Life 2 was being developed. Fast forward a few years and Valve is once again revolutionizing the industry. I'm glad HL2 was so popular as to give them the monetary resources to do this kind of development.

Right now I'm still sitting on a single core system with XP Pro and have lots of questions bustling in my head. What will be the sweet spot for Episode 2? Will a quad core really offer substantially better features than a dual core, or a dual core over a single core? Will Episode 2 be fully DX10, and will we need DX10 compliant hardware and Vista by its release? Will the rollout of the multithreaded Source engine affect the performance I already see in HL2 and Episode 1? Will Valve actually end up distributing different versions of the game based on your hardware? I thought that would not be necessary due to the fact that their engine is specifically designed to work for ANY number of cores, so that takes care of that automatically. Will having one core versus four make big graphical differences or only differences in AI and physics?

Like you said yourself, more questions than answers at this point!

archcommus - Tuesday, November 7, 2006

One last question I forgot to put in. Say it was somehow possible to build a 10 or 15 GHz single-core CPU with reasonable heat output. Would this be better than the multi-core direction we are moving towards today? In other words, are we only moving to multi-core because we CAN'T increase clock speeds further, or is this the preferred direction even if we could?

saratoga - Tuesday, November 7, 2006

You got it. A higher clock speed processor would be better, assuming performance scaled well enough anyway. Parallel hardware is less general than serial hardware at increasing performance because it requires parallelism to be present in the workload. If the work is highly serial, then adding parallelism to the hardware does nothing at all. Conversely, even if the workload is highly parallel, doubling serial performance still doubles performance. Doubling the width of a unit could double the performance of that unit for certain workloads, while doing nothing at all for others. In general, if you can accelerate the entire system equally, doubling serial performance will always double program speed, regardless of the program.

That's the theory anyway. Practice says you can only make certain parts faster. So you might get away with doubling clock speed, but probably not halving memory latency, so your serial performance doesn't scale like you'd hope. Not to mention increasing serial performance is extremely expensive compared to parallel performance. But if it were possible, no one would ever bother with parallelism. It's a huge pain in the ass from a software perspective, and it's becoming big now mostly because we're starting to run out of tricks to increase serial performance.
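saratoga's argument can be made concrete with a simple Amdahl-style model applied to both options. The Python sketch below compares doubling the core count against doubling the clock speed for a hypothetical workload, using two parameters:

- p is the fraction of the work that can run in parallel.
- m is the fraction of runtime tied to something that does not speed up with the core clock (memory latency, in saratoga's example).

Both parameters are illustrative assumptions, not measurements.

```python
def double_cores(p):
    """Speedup from 2x cores: only the parallel fraction p is split (Amdahl's law)."""
    return 1.0 / ((1.0 - p) + p / 2.0)


def double_clock(m):
    """Speedup from 2x clock: all but the clock-insensitive fraction m runs 2x faster."""
    return 1.0 / (m + (1.0 - m) / 2.0)


for p in (0.0, 0.5, 0.95):
    print(f"parallel fraction {p:.2f}: 2x cores -> {double_cores(p):.2f}x")
for m in (0.0, 0.3):
    print(f"clock-insensitive fraction {m:.2f}: 2x clock -> {double_clock(m):.2f}x")
```

With m = 0, doubling the clock doubles speed for any workload, which is the "in theory" half of the comment. Once part of the runtime stops scaling with clock (the "in practice" half), clock gains shrink in the same way that core gains shrink when p falls below 1.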