Server Guide Part 1: Introduction to the Server World

by Johan De Gelas on August 17, 2006 1:45 PM EST- Posted in

- IT Computing

What makes a server different?

This is far from an academic or philosophical question: the answer lets you tell the difference between a souped-up desktop that carries a "server" label (and a higher profit margin) and a real server configuration that will offer reliable services for years.

A few years ago, the question above would have been very easy to answer for a typical hardware person. Servers used to distinguish themselves at first sight from a normal desktop PC: they had SCSI disks, RAID controllers, multiple CPUs with large amounts of cache, and Gigabit Ethernet. In a nutshell, servers had more and faster CPUs, better storage, and faster access to the LAN.

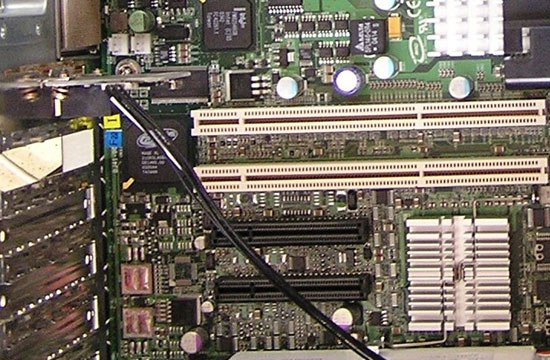

PCI-e (black) still has a long way to go before it replaces PCI-X (white) in the server world

Clearly, that is a pretty simplistic and wrong way to understand what servers are all about. Since the introduction of new SATA features (SATA revision 2.5) such as staggered spindle spin-up, Native Command Queuing, and Port Multipliers, servers are equipped with SATA drives just like desktop PCs. A high-end desktop PC has two CPU cores, 10,000 rpm SATA drives, Gigabit Ethernet, and RAID-5. Next year that same desktop might even have four cores.

So the hardware gap between servers and desktops is clearly shrinking, and hardware alone is not a good way to judge. What makes a server a server? A server's main purpose is to make certain IT services (database, web, mail, DHCP...) available to many users at the same time, so concurrent access to these services is an important design criterion. Secondly, a server is a business tool, so it will be evaluated on how much it costs to deliver those services each year or semester. The focus on Total Cost of Ownership (TCO) and concurrent access performance is what really sets a server apart from a typical desktop at home.

Basically, a server is different on the following points:

- Hardware optimized for concurrent access

- Professional upgrade slots such as PCI-X

- RAS features

- Chassis format

- Remote management

The last three points are all part of lowering TCO. So what is TCO anyway?

32 Comments


JarredWalton - Thursday, August 17, 2006

Fixed.

Whohangs - Thursday, August 17, 2006

Great stuff, definitely looking forward to more in-depth articles in this arena!

saiku - Thursday, August 17, 2006

This article kind of reminds me of THG's recent series of articles on how computer graphics cards work. For us techies who don't get to peep into our server rooms much, this is a great intro, especially for guys like me who work in small companies where all we have are some dusty Windows 2000 servers stuck in a small server "room".

Thanks for this cool info.

JohanAnandtech - Friday, August 18, 2006

Thanks! Been in the same situation as you. Then I got a very small budget for upgrading our server room (about $20,000) at the university I work for, and I found out that there is quite a bit of information about servers, but it is all fragmented and mostly comes from non-independent sources.

splines - Thursday, August 17, 2006

Excellent work pointing out the benefits and drawbacks of blades. They are mighty cool, but not the second coming of the server christ that IBM et al. would have you believe.

Good work all round. It looks to be a great primer for those new to the administration side of the business.

WackyDan - Thursday, August 17, 2006

Having worked with blades quite a bit, I can tell you that they are quite a significant innovation.

I'll disagree with the author of the article that there is no standard. Intel co-designed the IBM BladeCenter and licensed its manufacture to other OEMs. Together, IBM and Intel have/had over 50% share in the blade space. That share, along with Intel's collaboration, makes it the de facto standard in the industry.

Blades, done properly, have huge advantages over their rack counterparts, e.g., far fewer cables. In the IBM chassis the midplane replaces all the individual network and optical cables, as the networking modules (copper and fibre) are internal and you can get several flavors... Plus I only need one cable drop to manage 14 servers...

And if you've never seen 14 blades in 7U of space, fully redundant, you are missing out. As for VMware, I've seen it running on blades with the same advantages as its rack mount peers... and FYI, blades are still considered rack mount as well. No, you are not going to get any 16/32-ways as of yet... but still, blades really could replace 80%+ of all traditional rack mount servers.

splines - Friday, August 18, 2006

I don't disagree with you on any one point there. Our business is in the process of moving to multiple blade clusters and attached SANs for our excessively large fileservers.

But I do think that virtualisation provides a great stepping-stone for businesses not quite ready to clear out the racks and invest in a fairly expensive replacement. We can afford to make this change, but many cannot. Even though the likes of IBM are pushing blades left, right and centre, I wouldn't discount the old racks quite yet.

And no, I haven't had the opportunity to see such a 7U blade setup. Sounds like fun :)

yyrkoon - Friday, August 18, 2006

Wouldn't you push a single system that can run into the tens of thousands, possibly hundreds of thousands, for a single blade? I know I would ;)

Mysoggy - Thursday, August 17, 2006

I am pretty amazed that they did not mention the cost of power in the TCO section.

The cost of powering a server in a datacenter can be even greater than the TCA over its lifetime.

I love the people that say... "Oh, I got a great deal on this Dell server... it was $400 off the list price." Then they eat through the savings in a few months with shoddy PSUs and hardware that consume more power.

JarredWalton - Thursday, August 17, 2006

Page 3: "Facility management: the space it takes in your datacenter and the electricity it consumes"

Don't overhype power, though. There is no way even a $5,000 server is going to use more than its own price in power costs over its expected life. Let's just say that's 5 years for kicks. According to this page (http://www.anandtech.com/IT/showdoc.aspx?i=2772&am...), the Dell Irwindale 3.6 GHz with 8GB of RAM maxed out at 374W. Let's say $0.10 per kWHr for electricity as a start:

24 * 374 = 8976 WHr/Day

8976 * 365.25 = 3278484 WHr/Year

3278484 * 5 = 16392420 WHr over 5 years

16392420 / 1000 = 16392.42 kWHr total

Cost for electricity (at full load, 24/7, for 5 years): $1639.24
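The step-by-step arithmetic above can be wrapped in a small function. This is a minimal sketch that assumes a constant load running 24/7 and uses the same 365.25-day year. The function name `lifetime_energy_cost` is hypothetical, and the 374 W and $0.10 per kWHr inputs come from the comment.

```python
HOURS_PER_DAY = 24
DAYS_PER_YEAR = 365.25  # same year length used in the arithmetic above


def lifetime_energy_cost(watts: float, years: float, price_per_kwh: float) -> float:
    """Electricity cost in dollars for a constant load running 24/7 (hypothetical helper)."""
    watt_hours = watts * HOURS_PER_DAY * DAYS_PER_YEAR * years
    kwh = watt_hours / 1000
    return kwh * price_per_kwh


# 374 W at full load for 5 years at $0.10 per kWh
print(f"${lifetime_energy_cost(374, 5, 0.10):.2f}")  # prints $1639.24
```

Doubling `price_per_kwh` to 0.20 doubles the result, which gives the $3278.48 figure mentioned next.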

Even if you double that (which is unreasonable in my experience, but maybe there are places that charge $0.20 per kWHr), you're still only at $3278.48. I'd actually guess that a lot of businesses pay less for energy, due to corporate discounts - can't say for sure, though.

Put another way, you need a $5000 server that uses 1140 Watts in order to potentially use $5000 of electricity in 5 years. (Or you need to pay $0.30 per kWHr.) There are servers that can use that much power, but they are far more likely to cost $100,000 or more than to cost anywhere near $5000. And of course, power demands with Woodcrest and other chips are lower than that Irwindale setup by a pretty significant amount. :)
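The break-even figures in that paragraph can be checked by solving the same energy-cost formula for power or for price. This is a sketch that assumes constant 24/7 load and a 365.25-day year. Both function names are hypothetical. The exact results are about 1,141 W and about $0.305 per kWh, which the comment rounds to 1140 W and $0.30.

```python
HOURS_PER_YEAR = 24 * 365.25  # 24/7 operation


def breakeven_watts(budget: float, years: float, price_per_kwh: float) -> float:
    """Constant load (W) whose electricity bill over `years` equals `budget` dollars."""
    kwh = budget / price_per_kwh
    return kwh * 1000 / (HOURS_PER_YEAR * years)


def breakeven_price(budget: float, watts: float, years: float) -> float:
    """Price per kWh at which a constant `watts` load costs `budget` dollars over `years`."""
    kwh = watts * HOURS_PER_YEAR * years / 1000
    return budget / kwh


print(f"{breakeven_watts(5000, 5, 0.10):.0f} W")       # prints 1141 W
print(f"${breakeven_price(5000, 374, 5):.3f} per kWh")  # prints $0.305 per kWh
```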

Now if you're talking about a $400 discount to get an old Irwindale over a new Woodcrest or something, then the power costs can easily eat up those savings. That's a bit different, though.
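The "$400 discount versus higher power draw" trade-off can be framed as a payback period. This is a sketch under stated assumptions: the older box draws a constant, hypothetical 100 W more than the newer one, runs 24/7, and electricity costs $0.10 per kWh. The 100 W difference is an illustrative number, not a measured figure. The function name `payback_years` is also hypothetical.

```python
HOURS_PER_YEAR = 24 * 365.25  # 24/7 operation


def payback_years(discount: float, extra_watts: float, price_per_kwh: float) -> float:
    """Years after which an extra constant power draw has cost as much as the discount."""
    extra_kwh_per_year = extra_watts * HOURS_PER_YEAR / 1000
    return discount / (extra_kwh_per_year * price_per_kwh)


# Hypothetical: $400 discount, 100 W extra draw, $0.10 per kWh
print(f"discount used up after {payback_years(400, 100, 0.10):.1f} years")
```

Under these assumptions, the discount is gone well within a 5-year service life. A larger power gap or a higher electricity price shortens the payback period proportionally.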