Intel Core 2 Duo E6300 & E6400: Tremendous Value Through Overclocking

by Anand Lal Shimpi on July 26, 2006 8:17 AM EST - Posted in CPUs

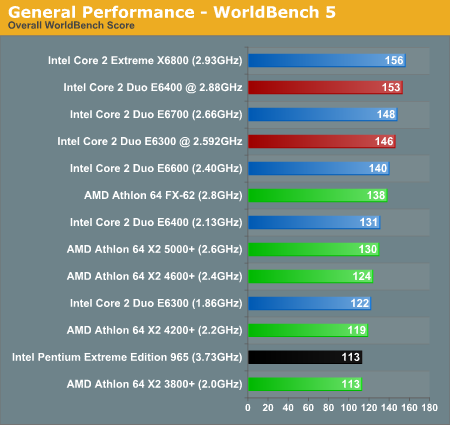

Application Performance using PC WorldBench 5

Switching over to WorldBench 5, all of the scores become much closer. The spread between the fastest and slowest tested processor is only 38%, and overclocking of the E6300 and E6400 by 39% and 35% results in a 20% and 17% performance increase, respectively. Given the number of applications being tested in WorldBench 5, the overall results are not too surprising. Some tests are CPU limited while others are bottlenecked by hard drive performance. Athlon 64 X2 is more competitive in this benchmark, and the truth is that any of these systems would be more than fast enough for typical home/office use. If you want the fastest current CPU architecture, however, that title clearly belongs to Intel's Core 2.
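To put those scaling numbers in perspective, here is a minimal sketch of the arithmetic. The overclocked speeds and the WorldBench 5 gains are the figures quoted in this article; the stock clocks (1.86GHz and 2.13GHz) are the published specifications for these chips and are an assumption here, since this page does not list them. The function names are purely illustrative.

```python
# Rough scaling arithmetic for the overclocked E6300 and E6400.
# Overclocked clocks and WorldBench 5 gains come from the article text;
# the stock clocks are assumed from the chips' published specifications.
chips = {
    "E6300": {"stock_ghz": 1.86, "oc_ghz": 2.592, "perf_gain_pct": 20},
    "E6400": {"stock_ghz": 2.13, "oc_ghz": 2.88, "perf_gain_pct": 17},
}


def clock_gain_pct(stock_ghz, oc_ghz):
    """Percentage increase in clock speed from stock to overclocked."""
    return (oc_ghz / stock_ghz - 1) * 100


def scaling_efficiency(perf_gain_pct, clock_gain):
    """Fraction of the clock speed increase that shows up as performance."""
    return perf_gain_pct / clock_gain


for name, chip in chips.items():
    gain = clock_gain_pct(chip["stock_ghz"], chip["oc_ghz"])
    eff = scaling_efficiency(chip["perf_gain_pct"], gain)
    print(f"{name}: clock +{gain:.0f}%, WorldBench 5 +{chip['perf_gain_pct']}%, "
          f"scaling efficiency {eff:.2f}")
```

Under these assumptions, roughly half of the added clock speed turns into a higher WorldBench 5 score, which fits the mix of CPU-limited and disk-limited tests in the suite.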

WorldBench 5's applications are a bit older than those used in SYSMark 2004, and the data sets are not as large, meaning that the smaller cache of the E6300 and E6400 has less of a negative impact. The result is that the 2.88GHz E6400 performs very close to the 2.93GHz X6800 and the 2.59GHz E6300 performs very close to the 2.66GHz E6700. Compared to AMD, the overclocked E6300 does quite well: at 2.592GHz the E6300 is already faster than AMD's Athlon 64 FX-62, and that is from a $183 processor.

137 Comments


saiku - Wednesday, July 26, 2006 - link

I agree with Voodoo above. I'm a gamer with a 6800GT and a Socket 754 A64 3000+. I am mostly GPU limited, but games like Counter-Strike: Source are only playable (with 2x AA) at 1024x768. (Jarred Walton, for some reason, thinks that if you play at 1024, you really don't play many games at all. I beg to disagree.) Any higher res, and framerates suffer badly.

A gamer's mid-range/high-end buying guide is JUST the ticket at this time!

JarredWalton - Wednesday, July 26, 2006 - link

I actually still use an A64 754 3400+ for a lot of my work - "if it's not broken, don't fix/replace it!" It also has a 6800GT. I just don't like gaming at non-native LCD resolution, and I think most people are switching to LCDs (given that CRTs are a dying breed). Even with a CRT, though - I've still got an NEC FE991-SB and a Samsung 997DF - I would much rather game at 1280x960 (1280x1024 if necessary) without AA than at 1024 with AA. In some games (Source engine mostly), resolution has more of an impact than enabling AA, so in that case you might need to run 1024.

Anyway, take my comment in context: people running 1024x768 either don't play many games or they don't have the latest technology/platform (or both). That's my intended meaning, and I apologize if that wasn't clear. If you're running an AGP platform still, and you play a lot of games, it really doesn't matter which CPU you're using for gaming as the GPU is usually the limiting factor (unless you're running Celeron/Athlon XP).

Also, I don't mean that *no one* plays games at 1024x768; however, if you were to take a sampling of most gamers these days, I don't think many would play at 1024 unless they simply lack the hardware to run higher resolutions. I'm personally recommending 1680x1050 or 1920x1200 WS displays these days for gaming, or 1280x1024 at minimum. Like I said, though, gamers looking at upgrading to Core 2 (or even AM2) are almost certainly not intending to run games at 1024x768, are they?

yacoub - Wednesday, July 26, 2006 - link

What I don't get is that my X800XL runs CS:S pretty well at native res on my 2007WFP at default graphical settings (I think everything High?). I mean sure it can drop to around 20fps and very rarely lower in big fights, but my system seems to do pretty well and it's just an A64 3200+ Venice (I even undid my o/c from 3800+ speeds), 1GB of DDR RAM, and the X800XL.

I do want to upgrade something to gain some performance so it DOESN'T bog down in firefights, and I'm betting a 7900GT is the right answer, but at the same time the Asus board I'm running is a little flaky. At least if I upgrade the GPU I can always move it over to my next system, whereas if I upgrade the CPU it'd be kind of a waste even with the cheaper prices since my next cpu/mobo/ram build will be Conroe/Intel/DDR2.

drarant - Thursday, July 27, 2006 - link

I have/had the exact same setup.

The thing about CS:S and HL2 is A LOT of the work is done by the CPU. Since the Source Engine is very physics heavy, more often than not the CPU is the limiting factor.

You can see this in almost all the Conroe benches: HL2 gains more fps from them than, say, BF2 or FEAR. While the X800XL isn't a rock solid card for HL2, you will see a pretty big FPS boost by upgrading that CPU.

If you run with AF or AA on, that is what hurts you during firefights, as there is a lot of work for the CPU (calculating physics of the shots, registering, etc.) on top of the graphics work for the shaders etc. A sound card would also help, as sound is handled by the CPU and CS:S is very sound heavy, but if you get the Conroe that wouldn't matter.

Cheers

Thor86 - Wednesday, July 26, 2006 - link

58% spread on BF2 testing. Sheeit.

Some1ne - Wednesday, July 26, 2006 - link

So what's the deal with the gaming hardware config and software settings? I mean:

1. You ran with 1900 XTs in Crossfire, which is a fairly unrealistic setup when compared to what most users will actually implement. This can be forgiven, as you were testing CPU performance, so it's justifiable to use a graphics setup that provides a needless amount of power to eliminate the video subsystem as a possible bottleneck. Still, I think it would be interesting to see how the gaming performance scaled with overclocking when using a more "conventional" upper-midrange graphics solution, like a single 1900 XT or 7900 GT, because that's what most users would probably actually run.

2. You ran the tests at a somewhat unrealistic resolution of 1600x1200. If the crossfire cards were put in to eliminate the graphics subsystem as a possible bottleneck, then this configuration just undermines that effort. Most people still game at 1280x1024, or even 1024x768, so running the tests at a lower resolution would have given more meaningful results by generating less graphics load and therefore better illustrating the impact of the CPU overclocking as well.

JarredWalton - Wednesday, July 26, 2006 - link

I would say the only people gaming at 1024x768 are people that really don't play all that many games, and very likely they aren't running even socket 939/775, let alone one of the newer platforms. Other than that, the reason for the chosen settings is a matter of balance. Personally, I would take higher resolution if possible up until I max out my monitor. Since I'm using a widescreen LCD, that's 1920x1200, and needless to say just about any current GPU configuration is bottlenecked by the graphics card at that setting. Anyway, page 9:

I've tweaked the conclusion slightly as well to clarify the status of single GPUs:

Our reasoning is that most people don't just use their computer for gaming, even if they're "hardcore gamers", so faster CPUs are still useful. Video encoding, file compression, multitasking, etc. can benefit from faster CPUs. If you're running a single core A64 or P4 and you're happy with its performance, of course, don't run out and upgrade just because something faster is available. I'm quite certain most of my siblings wouldn't notice the difference between A64 3000+ and Core 2 X6800 in their daily activities, other than to say "it seems a bit faster." :)

Some1ne - Wednesday, July 26, 2006 - link

I don't know about the veracity of that...perhaps I'm just a statistical outlier, but I'm running a socket 939 X2 3800+ system (w/ CPU clocked to 2.4 GHz...unfortunately it won't go any higher than that) with a 7900 GT, and still play the majority of my games at 1280x1024, though my monitor will do 1600x1200 just fine. Up until a month or so ago, I was running a 6600 GT (because when I built the system, I couldn't justify the extra ~$50 cost for the mainboard, plus double the graphics cost...I suppose I could have gone with a SLI board and just the single 6600 GT, and added in a second one for cheap instead of the 7900 GT, but from what I've seen the 7900 performs better than two 6600s would in SLI, and for $200 on craigslist it was a really good deal), and playing my games at 1024x768 (except for older titles, of course, but they don't really count).

I'll admit that I'm not a "hardcore" gamer, though I do play most of the major titles as they come out, and I suspect a lot of people do the same...and I do plan on moving to a Core 2 Duo based setup (will probably get an E6600 and overclock it, assuming the expected price of $316 holds), hopefully within the next couple of months, though when I do I'll most likely keep my 7900 GT, and avoid SLI setups like the plague (unless it turns out that you can run a second video card in the SLI/Crossfire slot in non-SLI mode and have it serve as a dedicated PPU, in which case I'd get a SLI/Crossfire capable setup solely for that purpose, though I won't be caught dead running two cards in tandem for graphics processing just because current games are too much for any current standalone card to handle...the price/performance of a dual-card setup relative to that of a single-card one just isn't justifiable in that regard).

I tend to disagree with the assertion that the fact that current games are GPU limited on any single card is, in and of itself, a good reason to consider a SLI/Crossfire setup. The price/performance of the dual-GPU setup should factor in as well (as well as additional headaches, such as the additional complexity and technical requirements and driver issues and so on), and though it has certainly improved from where it was when the technology was (re-)introduced, it's still not quite where it ought to be for a dual-card solution to really make sense for the majority of people.

And what about single cards that integrate two GPUs (or that glue together two cards so that they can interface via a single x16 slot), like the 7950s?

JarredWalton - Wednesday, July 26, 2006 - link

Consider doesn't mean purchase. ;) Hey, I'm happy with a 7800 GTX and 1920x1200 0xAA/8xAF in most titles. Also, I should have stated (and I explained this below) that I mean "most gamers" don't want to run at 1024x768. People do it, sure, but given the choice almost every gamer out there would rather play at 1280x1024 minimum (for 17/19" displays). Hopefully that clears things up. We tested at a resolution and settings that show the differences while still allowing the GPUs to strut their stuff.

kmmatney - Wednesday, July 26, 2006 - link

I agree, most people have 19" and 20" LCDs these days, so 1280 x 1024 would be a bare minimum for testing, with 1680 x 1050 (or 1600 x 1200) probably being the most "desired" resolution for many gamers.