Out of Order Loads done right

Since the Pentium Pro, x86 CPUs have been capable of issuing and executing instructions out of order. However, on average one third of the instructions in those reorder buffers could not be reordered easily: we are talking about loads. Moving loads forward can give a very big boost to performance. Instead of loading a piece of data when you need it, it is much more useful to start the load as early as you can. That way, L1 and even L2-cache latencies can be hidden much more easily.

This is pretty easy to understand. Imagine an ALU operation that needs a certain piece of data, but the data is not available in the L1-cache. If the load has been executed many cycles before the ALU operation needs that piece of data, the L2-cache latency is going to have a reduced impact. Of course, you don't want to load a value which is being, or will be, written to by a store that comes earlier in program (thread) order. That would mean you are loading an old value, not the up to date one. Check out the picture below.

Load 2 cannot be moved forward, since it has to wait until Store 1 is done. Only after Store 1 is done will variable Y have its correct value. However, there is no reason why Load 4 cannot move forward. It doesn't have to wait for Store 3 and Store 1 to finish. By moving Load 4 forward, you give the load unit more time to get the right operand, as we assume that after Load 4 a calculation with operand Y will happen.
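The rule in that example can be written down as a small check. This is only a sketch under assumptions: the text says Store 1 writes Y and Load 2 reads Y, but the addresses used here for Store 3 (`W`) and Load 4 (`Z`) are hypothetical placeholders, and `MemOp` and `can_hoist` are illustrative names, not anything taken from Intel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemOp:
    name: str
    kind: str  # "load" or "store"
    addr: str  # symbolic address


def can_hoist(program, index):
    """True if the load at `index` touches no address written by an earlier store."""
    op = program[index]
    if op.kind != "load":
        raise ValueError("only loads are hoisted in this sketch")
    earlier_stores = [p for p in program[:index] if p.kind == "store"]
    return all(store.addr != op.addr for store in earlier_stores)


# Program order from the example; "W" and "Z" are assumed addresses.
program = [
    MemOp("Store 1", "store", "Y"),
    MemOp("Load 2", "load", "Y"),
    MemOp("Store 3", "store", "W"),
    MemOp("Load 4", "load", "Z"),
]

hoistable = {
    op.name: can_hoist(program, i)
    for i, op in enumerate(program)
    if op.kind == "load"
}
print(hoistable)  # {'Load 2': False, 'Load 4': True}
```

Note that this check compares addresses directly, which a real CPU cannot do at scheduling time because the store addresses have not been computed yet.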

Currently, CPUs will generally delay load 4 when a store is in flight (active). The problem is the address to which the stores will write has yet to be calculated. To be more precise, the memory addresses are still unknown during reordering and scheduling. When a Load micro-op enters the ROB, the memory addresses of previous stores (from the program order) are not known until they pass the AGU (Address Generation Units).

However, the risk that a load will read from an address that is being written to by a store that has yet to finish is pretty small (1-2%). That is why Jack Doweck of the Core development team decided to allow Loads to go ahead of previous stores, assuming that the load will not be loading information that will be updated by that preceding store. To guard against the cases where that assumption is wrong, a predictor is used. The dynamic alias predictor tries to predict whether or not a previous store will write to the same address as the one the load will read from, the load being the one you want to execute earlier and thus out of order.

Based on Jack Doweck's comments and a study of Intel's previous P6 and P-M architectures I drew up the scheme below. Be warned that this is not the official Intel diagram.

The predictor gives the ReOrder Buffer (ROB) the permission to move a load ahead of a store or not. After the Load has been moved ahead and executed, the conflict logic scans the store buffer located in the Memory reOrder Buffer (MOB) to see if any of the stores which were located before the load (following program order) have written to the address of the out of order load. If so, the load must be redone, and the misprediction penalty is about 20 lost cycles. (Note that the branch misprediction penalty is also about 20 cycles). Worst case, the new dynamic alias predictor may slightly reduce performance, but realistically it's four steps forward, one step back, resulting in a net performance boost.
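To make the flow concrete, here is a minimal sketch of the speculate-then-check step. It is a toy model under assumptions, not Intel's implementation: the predictor's verdict is simply passed in as a boolean, addresses are plain integers, and `run_load` and `average_penalty` are hypothetical helper names. The only number taken from the text is the roughly 20-cycle replay penalty.

```python
REPLAY_PENALTY_CYCLES = 20  # "about 20 lost cycles" per the text


def run_load(load_addr, older_store_addrs, predicted_no_alias):
    """Model one load: hoist it if the predictor allows, then check for a conflict.

    older_store_addrs holds the resolved addresses of stores that precede the
    load in program order; in hardware these are only known after the AGU.
    """
    if not predicted_no_alias:
        # The load waits behind the stores: no speculation, no replay.
        return {"hoisted": False, "replayed": False, "penalty": 0}
    replayed = load_addr in older_store_addrs  # conflict check against the store buffer
    penalty = REPLAY_PENALTY_CYCLES if replayed else 0
    return {"hoisted": True, "replayed": replayed, "penalty": penalty}


def average_penalty(alias_rate, penalty=REPLAY_PENALTY_CYCLES):
    """Mean replay cost per load if every load were hoisted blindly."""
    return alias_rate * penalty


older_stores = {0x2000, 0x3000}
safe = run_load(0x1000, older_stores, predicted_no_alias=True)
clash = run_load(0x2000, older_stores, predicted_no_alias=True)
held = run_load(0x2000, older_stores, predicted_no_alias=False)
print(safe, clash, held)
```

With the 1-2% alias rate quoted earlier, `average_penalty` works out to roughly 0.2 to 0.4 cycles per load even without a predictor, which is why the speculation pays off whenever hoisting hides more than a fraction of a cycle of latency on average.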

Determining whether a load and a store share the same address is called memory disambiguation. Allowing loads to move ahead of stores gives a big performance boost. In some snippets of benchmarking code, Intel saw up to a 40% performance boost, solely the result of the more flexible way Loads get reordered. It is pretty clear that we won't see this in most real applications, but it is nevertheless impressive and it should show tangible (10-20%) performance boosts together with the fast L2 and L1 cache.

Let us not forget that loads are probably the most important instructions of all. Not only are loads about one third of the micro-ops that are in flight in an x86 CPU, but they can also cause costly stalls when a load needs to go to the L2 cache (or worse, system memory). So how does this super flexible reordering of loads compare with other architectures?

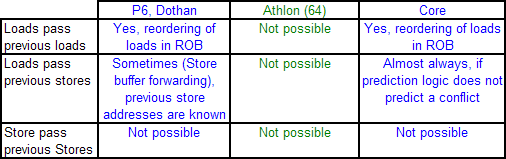

The P6 and P-M could already reorder Loads pretty well. They could move a Load ahead of other Loads, as well as ahead of Stores whose addresses are known and do not match the address of the load. In contrast, the Athlon 64 can only move loads before independent ALU operations (ADD etc.). Loads cannot be moved ahead much at all to minimize the effect of a cache miss, and other loads cannot be used to keep the CPU busy if a load has to wait for a store to finish. This means that the Athlon 64 processor is severely limited when it comes to reordering code.

This is probably one of the most important reasons why the Athlon 64 does not outperform the P-M in gaming and integer workloads despite having a lower latency memory system and more integer execution resources. Integer workloads tend to jump around in memory, and have many unknown addresses which must be calculated first. Load reordering is less important for FP-intensive workloads, which is also one of the reasons why the Athlon 64 had no problem with Dothan in this kind of workload. FP workloads access memory in a much more regular fashion.

Once Loads and Stores are in the queues of Load/Store units, the Athlon's L/S unit allows Loads to bypass Stores, except of course when the load would bypass a store to the same address. Unfortunately, by then the Loads are already out of the ICU and cannot be used to fill the holes that dependencies and cache misses make. You could say that the Athlon (64) has some Load/Store reordering but it's much later in the pipeline and is less flexible than the P6, P-M, and Core architectures.

87 Comments

Betwon - Tuesday, May 2, 2006 - link

The data cannot be used for Core, because it did not use the smart prefetcher.

The advanced smart prefetchers of Core's L1D have decreased the miss rates very much. In fact, the data cache of Core is much more efficient than K8's.

Compared with Core's smart cache, K8's 64KB L1D is like an idiot.

Spoonbender - Tuesday, May 2, 2006 - link

How do you know? As the article said, the prefetching *might* in some cases decrease performance, even if it'll usually be an advantage. But I don't really think you have enough information to make a valid comparison. My point was simply that generally speaking, a 64KB, 2-way associative cache will have better hit rates than a 32KB 8-way associative. Of course, having fancy prefetching is always a good thing, but its effect *is* limited. If it was a huge improvement, people would have done that 8 years ago, instead of just messing with cache size and associativity.

Betwon - Tuesday, May 2, 2006 - link

Your information is too old and should be updated now.

Prefetchers give a big improvement in reducing the miss rate.

About 30-90% miss rate reduced.

The good prefetcher tech is one of the most important performance factors.

http://www.hpcaconf.org/hpca11/slides/hpca_inst_sl...

Betwon - Tuesday, May 2, 2006 - link

Who is James E. Smith? I think that you should know him.

"Data Cache Prefetching Using a Global History Buffer": the prefetcher brings a great performance improvement, from 20-110%!

abstract

http://ieeexplore.ieee.org/search/freesrchabstract...

Of course, you can download the full-text PDF file if you have an IEEE member account. I can download and view it, but cannot release it.

slides ppt

http://www.ece.gatech.edu/~leehs/ECE7102/slides/ka...

Sunrise089 - Monday, May 1, 2006 - link

flak flak flak

Seriously - props to the author on a good article, but if I had one comment it would be that there are length issues in trying to provide the amount of background needed for this sort of article. I think it's best to either just draw the comparisons between the two chips, or do a full-length, many-thousands-of-words write-up on the technical importance of the various topics. I read the article, and while it is well written and informative in its conclusions, I cannot say all the background was enough to make me really understand the concepts better. For example, I already knew what out-of-order execution was, but only being able to read a few hundred words more on it didn't allow me to really learn enough to understand all of the reasons why the K8 had a disadvantage in that area, and if all you wanted was for me to understand that it did indeed have the disadvantage, you could have just said so.

JohanAnandtech - Monday, May 1, 2006 - link

It is indeed an issue I struggled with. Writing full length articles on these subjects doesn't sound like a good idea for me: I personally do not like lengthy articles either. So I tried to keep a balance between being technical and keeping it understandable.

Anyway, just ask about the points where you were lost. Especially on the OoO matters: it is much more interesting than "AMD has a disadvantage". Basically, reordering happens between the decoding and the execute phase.

Pushing loads forward helps in two ways:

1. Whenever a load fails to get its data from the L1-cache, the CPU has to find other instructions to execute. As loads are very common, it is easier to fill the gaps than when you cannot move loads before other loads.

2. If a load gets pushed forward and a L1-cache miss for that load occurs, it isn't that bad. This is very simplified, but assume the load has been pushed 5 cycles forward, and your L2-cache latency is 10, you only have to wait 5 cycles instead of 10.

Furen - Monday, May 1, 2006 - link

I'll be the grammar nazi today, lol.

Last page, paragraph 5: "[...] increasing the **wideness** of each unit [...]"

Width, perhaps? "Wideness" refers to either quality or state (neither of which is discrete) while "width" also applies to measurable fact (128-bits wide, for example). You can talk about the wideness of the units, for example, but you cannot talk about increasing their wideness...

Great article, by the way, it's been long since I've read such an enjoyable article.

emboss - Monday, May 1, 2006 - link

Just a quick note ... on page 4 you have the table with the execution unit details. There's a couple things incorrect (IMO) in the numbers.

First, you list the number of double precision FLOPs per cycle. Double precision can be done with SSE, so in the K8 you can do 2 DP ADDs and 2 DP MULs every two cycles (due to the 64-bit wide datapaths), a total of 2 DP FLOPs per cycle.

Core can do two SSE operations per cycle (the two symmetric units), giving it a total of 4 DP FLOPs per cycle. The third SSE unit does not handle FP ops, but instead handles shuffles and the like.

Obviously, double both of these numbers if you want a "peak" single precision FLOPs per cycle.

If instead you meant extended precision (64 bit precision, 80 bit floats) x87 operations, it's exactly the same concept as above, since Core apparently has combined SSE/x87 units (and a fully pipelined FMUL, unlike the P4). This gives both the K8 and Core 2 EP FLOPs per cycle.

Finally, you have the number of SSE units for the K7 wrong. The K7, like the K8, has two SSE units (FADD and FMUL), and the same 64 bit datapath as the K8. Of course, the K7 cannot handle SSE2, so must use x87 instructions for double precision (ie: two DP FLOPs per cycle).

Apart from that, very nice article! I've been trying to optimise SSE code for the Core processor and have had to do things by trial and error thanks to the complete void of any decent documentation from Intel. One thing in particular was that I was finding "odd" performance properties with SSE that pointed towards it having two FMUL units. Being symmetric units explains a lot!

JarredWalton - Monday, May 1, 2006 - link

See above note regarding Core Duo versus Core "Conroe". (Nice naming scheme, Intel. *grumble*) I will let Johan take care of the rest of your comment as appropriate. (His knowledge of the low level details of all of the microarchitectures discussed here definitely surpasses mine!)

Unfortunately, it's not particularly surprising to find out that optimal code for Core Duo may need to be slightly tweaked in order to extract the most performance from Conroe. Still, they ought to be similar enough that you own by optimizing for Core Duo. The flipside is the optimal code for Conroe could very likely run worse on Core Duo and other processors. Such is the price of progress, I guess.

prx99 - Monday, May 1, 2006 - link

Core is not the first x86 having 4 decoders. That was AMD's K5.

I remember a statement from AMD that in some design they considered adding one more decoder. It turned out to actually slow down the design because the amount of clock speed lost was not compensated for by the smaller amount of performance gained.

In my interpretation the fusion is done past the initial decoding, so there is no way more than 4 x86 ops can be decoded in a clock cycle (I'm referring to the "4+1" figure). The profit from fusion is not in the decoding stage but in the out of order engine.

At AMDs, the "1 branch per cycle" rule is limited to branches seen by the predictor. A branch which is generally not taken is invisible to the prediction engine and therefore free.

The original P4 indeed had a L1 latency of 2. The major P4 redesign in Prescott however increased it to 3.

Load/store reordering is already done by the P4, but the penalty from a misprediction is fairly high. This is the drawback of any kind of prediction, whether branches or memory access: It speeds up things when being correct, but slows them down quite a bit more when not. This was the general picture seen in the P4: many applications were sped up by some amount, but some suffered greatly because they systematically fooled the P4's engines.

Gruss, Andreas