Intel’s Dual-Core Xeon First Look

by Jason Clark & Ross Whitehead on December 16, 2005 12:05 AM EST - Posted in IT Computing

The future is performance per watt.

Given the way that the energy markets have gone during the past year, it was fairly obvious that there would be a focus on power and on performance per watt. Some may say that power is irrelevant and performance is key. While performance is important, performance per watt is more important. Both Intel and AMD are focusing on ways of delivering more performance with less power; it is the future. We're facing rising energy prices every day, and those costs trickle down to everyone, whether you are drying your clothes or running a few racks of servers at a datacenter.

Recently, we spoke to a bandwidth provider in one of the largest datacenters on the US east coast. The datacenter that this provider uses for its services is out of power. They can't add any more racks because the datacenter doesn't have enough power. We're not talking about a small datacenter either; this is a very large datacenter that serves some of the world's largest websites. They can't get any more power because government regulations won't allow it. So, now what? They have to find ways to reduce power consumption.

Space is also a concern at most datacenters, so blade systems are becoming very popular. IDC recently forecast that blade systems would reach 8% of the server market this year, up from 2% a year ago. Blade systems may solve the space problem, but they add to the power problem, as you end up with a more power-dense environment.

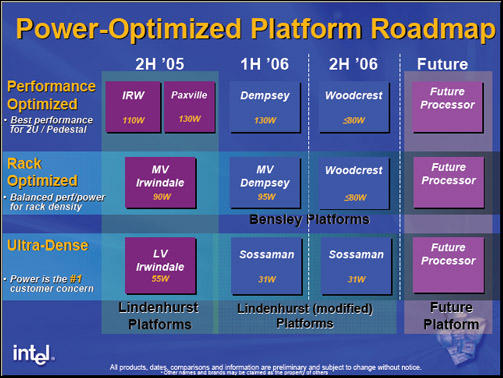

Intel's performance per watt play won't come into full effect until next year, with Woodcrest. Rough numbers for Woodcrest put it somewhere in the 80 W range or less. With the focus of the past few years all on performance, people seem to have overlooked where AMD stands in terms of performance per watt. Since its inception, the Opteron has been competitive with Intel not only on performance, but also in delivering that performance within a lower power envelope. The Opteron 280 is already a 95 W part, and it has been very competitive with Intel's Xeon.

Intel Power/Performance Roadmap


67 Comments


Furen - Friday, December 16, 2005 - link

I must say that performance is very good on these (seriously). The cost may be a bit prohibitive (then again, decent servers are always expensive as hell) since it introduces FB-DIMMs (and 4 channels for this performance). Also, I would like to see someone test these at a 667 FSB just to see how much of a choke point it becomes, since every Dempsey besides the top end 3.46GHz one will use this (I think).

Beenthere - Friday, December 16, 2005 - link

Intel holds a Dog & Pony Show for some hand picked journalists it feels will be "Intel friendly" as a result of getting the "scoop" over the mainstream PC media on Intel products as far as three years off. Then Intel proceeds to provide prototype CPUs for testing months if not years before they will actually be available. What a manipulation of the media and public opinion.

This is damage control in action folks. Intel is desperate to save face and as many customers as it can while it hopelessly tries to deliver some competitive product in a year or two. The problem is AMD is so far ahead in technology, they can just release better CPUs any time they desire and Intel has nothing to counter AMD's superior products. Even the Intel fanboys and "media friendly journalists" have had to admit that purchasing an Intel product now or in the foreseeable future would be a very poor investment.

The bad situation Intel is in couldn't happen to a nicer, more deserving company IMNHO.

ElJefe - Friday, December 16, 2005 - link

Bensley. thats a gay name.

The chip does nicely though.

i hope opteron makes something nicer. i mean, just increase the speed .2ghz on the chip and it will most likely blow it away and still not be power hungry.

hm.

1st

Brian23 - Friday, December 16, 2005 - link

The cost per month is wrong.

Example:

The Intel system pulls 479W at 100% load.

In 24 hours, that's 24*479 = 11,496Wh/day

Assume 31 days in a month, that's 11,496*31 = 356,376Wh/month = 356kWh/month

Assume 14 cents per kWh: 356.376*0.14 = $49.89 per month
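The arithmetic in this comment can be checked with a few lines of Python. This is only a sketch of the commenter's calculation: the function name `monthly_energy_cost` and its parameter names are made up for illustration, and the 31-day month and $0.14/kWh rate are the commenter's assumptions, not measured values.

```python
def monthly_energy_cost(watts, days=31, dollars_per_kwh=0.14):
    """Return (kWh, dollars) for a constant draw of `watts` over `days`.

    Assumes the load is constant around the clock, as in the comment.
    """
    # watts * hours gives watt-hours; divide by 1000 for kilowatt-hours.
    kwh = watts * 24 * days / 1000
    return kwh, kwh * dollars_per_kwh


# 479 W is the full-load figure quoted in the comment.
kwh, cost = monthly_energy_cost(479)
print(f"{kwh:.3f} kWh/month, ${cost:.2f}/month")
```

Changing `watts`, `days`, or `dollars_per_kwh` shows how sensitive the monthly figure is to each assumption.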

coldpower27 - Friday, December 16, 2005 - link

Actually I think I agree with you.

1 kWh = 3,600,000J

Worst Case Scenario

479W = 479J in 1 Second, 1,724,400J in 1 Hour, 41,385,600J in 1 Day, 1,282,953,600J in 31 Days.

Divide by 1 kWh = 3,600,000J

= 356.376kWh

Multiply by $0.14/kWh

= $49.89 Per month, the above poster is correct.

coldpower27 - Friday, December 16, 2005 - link

The actual difference between running 40 Opteron Systems & 40 Bensley Systems for 1 Year @ 40-60% Load comes to a difference of $5890.4 ~ 1/10 the amount Anandtech reports.

coldpower27 - Friday, December 16, 2005 - link

Disregard, used money figures of 172 and 292 for Wattage Oops :P.

coldpower27 - Friday, December 16, 2005 - link

In regard to $5000 ish figure.

Furen - Friday, December 16, 2005 - link

The difference is around $8140 for 40-60% load (which is realistic) and around $10,500 ($23,500 compared to around $13,500) for full load.

The problem, however, is that the system's power consumption is not the only thing a data center deals with. The more power the system uses, the more heat it throws off. Energy consumption for cooling can match the system's power consumption. Another thing to take into account is the AC-DC and DC-AC power conversion inefficiencies (this is before even hitting the system's power supply, which will lead to even more inefficiency) which will probably add another 20-30% to the real power consumption. So instead of having a difference of $8140 you end up with a difference of $19,536, and that's assuming that you don't need to purchase any new equipment aside from the 40 servers themselves.

Another VERY important thing is power density. You could conceivably throw 64 1U systems onto a single rack using Opterons, with a ~17KW peak power draw, but 64 1U Bensley systems would require a peak power draw of ~31KW, not to mention that it's probably very stupid to stick 2 Dempseys into a 1U system (but hey, I'd say the same thing about sticking 4 dual-core Opterons onto a 1U system but people still do it).

That is not to say that Anandtech's data is right, 'cause it isn't. I just wanted to point out that though measuring power consumption in itself is important, trying to draw conclusions from the power consumption BY ITSELF is not very useful, since it ignores all other related costs and limitations.
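One reading of the comment above that reproduces the $19,536 figure is: cooling doubles the cost (cooling energy equal to system energy), then conversion losses add 20%, the low end of the 20-30% range. The comment does not spell this out, so the sketch below is an assumption about how the number was reached; `total_cost_difference`, `cooling_factor`, and `conversion_loss` are hypothetical names.

```python
def total_cost_difference(system_diff, cooling_factor=1.0, conversion_loss=0.20):
    """Scale a system-level cost difference by datacenter overheads.

    cooling_factor=1.0 assumes cooling energy equals system energy.
    conversion_loss=0.20 assumes 20% extra for AC-DC/DC-AC conversion.
    Both values are assumptions chosen to match the comment's figure.
    """
    with_cooling = system_diff * (1 + cooling_factor)
    return with_cooling * (1 + conversion_loss)


total = total_cost_difference(8140)
increase_pct = (total / 8140 - 1) * 100
print(f"${total:,.0f} total, {increase_pct:.0f}% above the system-only figure")
```

With `conversion_loss=0.30`, the upper end of the quoted range, the same function gives a larger total, so the $19,536 figure sits at the conservative end of this reading.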

coldpower27 - Friday, December 16, 2005 - link

How does a difference of $8140 increase to $19,536, which is an increase of over 130%?