Intel Motherboards: Something Wicked This Way Comes...

by Gary Key on October 12, 2005 2:13 PM EST - Posted in Motherboards

Gigabyte 8N SLI Quad Royal: Features

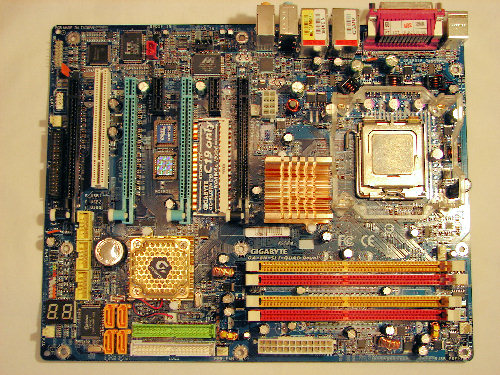

Gigabyte did an excellent job with the color coordination of the various peripheral slots and connectors. The DIMM module slots' color coordination is correct for dual channel setup. The power plug placement favors standard ATX case design and the power cable management is very good. Gigabyte actually places the eight-pin 12V auxiliary power connector below the CPU socket area, which should assist in easier installs for newer case designs that mount the power supply at the bottom of the case. In a standard ATX case design, there is the possibility of cable clutter around the CPU socket area with shorter power cables.

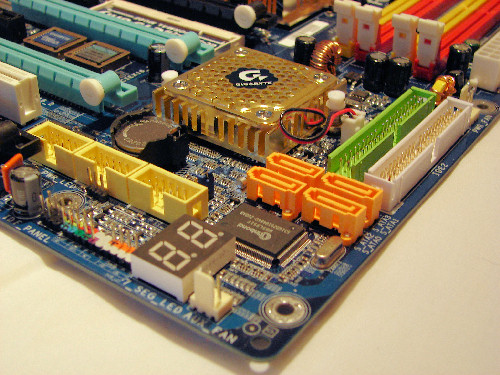

The nForce 4 USB connectors are located below the battery, and the adaptor connectors are a tight fit when utilizing the PCI slot and bottom PCI Express slot. Also located in this area is a port 80 debug solution based on a two-digit LED display.

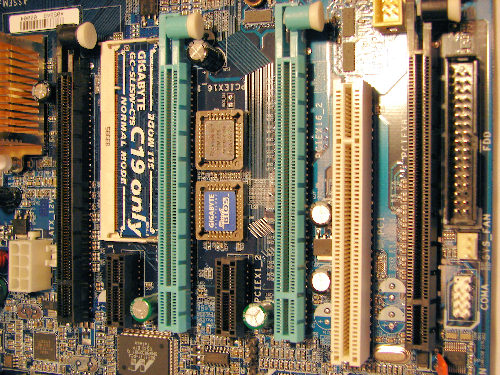

In between the first two x16 PCI Express slots is a mechanical SLI switch that accepts paddle cards. These paddle cards determine normal or SLI operation, and a special paddle card activates the Gigabyte dual GPU 3D1 series of video cards. The settings can also be enabled in the BIOS, but throughout testing on the board, we found it best to leave the BIOS setting on Auto and correctly install the appropriate paddle card for fail-safe operation.

The CMOS reset is a traditional jumper design located between the first and second PCI slots, a position that proved to be inconvenient at times.

The Northbridge is passively cooled, but Gigabyte does provide their Cool Plus fan, which should be used based on our overclock testing. However, once installed, the fan can interfere with a larger style heatsink or a video card in the first PCI Express slot. The Southbridge (CK804 chipset) heatsink is a low profile design with a fan and will not interfere with cards in either x16 PCI Express slot.

44 Comments

DrMrLordX - Thursday, October 13, 2005 - link

Fine, I'll retract my statement, at least partially. I wasn't reading the statement carefully enough.

Having looked into the newer 3D1-68GT, it seems to be a more solid product than the original 3D1 card based on 6600s. The original seemed to serve no purpose whatsoever.

Calin - Thursday, October 13, 2005 - link

They made it an Intel board assuming that the more "corporate-oriented" users prefer multiple monitors. I don't know about current performance, but in the recent past, Intel processors smoked the Athlon64 at things like Photoshop. And introduction of dual core processors at prices much lower than AMD's dual core could coax someone into buying such a board.

I agree that most every normal person would be happy with four processors (powered by two cards), however I remember cases (in Linux) when OpenGL performance fell by half when enabling 2 monitor support on a single video card. This is driving a single monitor, not two. Driving two monitors, it fell even lower.

So, for every person that WANTS (not that it really really would need) four monitor output from four video cards, this looks like the best choice

trooper11 - Thursday, October 13, 2005 - link

I kind of doubt that since the cost in video equipment does not make this a low cost solution. if a company is willing to shell out for that, they would be willing to shell out for the best in workstation performance, which just happens to be the X2s

ElJefe - Wednesday, October 12, 2005 - link

ever wonder what crack they were smoking though making it an Intel board?

if you read about modders and gamers, almost 80%+ market share for DIY builders use AMD.

this board is a waste of technology.

still cool though.

Gary Key - Thursday, October 13, 2005 - link

Hi,

The ability to produce this board was due to Nvidia's decision to use a HyperTransport link for the Intel SLI chipset due to the need to have an on-chip memory controller. While it would be feasible to complete an AMD version of the board, the engineering time and product cost would not be acceptable. While I will agree with everyone that the current AMD processor lineup offers significantly more performance than Intel's, the difference in actual day to day real life experience between the two systems is not readily apparent to most people. In fact, I have had people play on my FX55 machine and 840EE machine and nobody could decide clearly which system had the AMD64 in it without benchmarks. This was at both 1280x1024 and 1600x1200 resolutions. While I personally favor AMD for most performance oriented setups, there are some people that still want Intel. After not having an Intel based machine for the last two plus years, I have to admit it is not as bad as most people make it out to be.

Johnmcl7 - Wednesday, October 12, 2005 - link

Whether you like it or not, the 3D1 was an innovative product, it's not child's play to stuff both cores together and develop the motherboard support for it.

John

Viper20220k - Wednesday, October 12, 2005 - link

Yeah, what is up with that.. I would sure like to know also.

Wesley Fink - Wednesday, October 12, 2005 - link

The pictures of the 10-monitor display were supplied, but Gary did hook up every monitor we could, which was 8 if I recall, to test the outputs. To test 10, we needed two more Rev. 2 3D1 cards - our extra pair were Rev. 1 cards - which couldn't be here in time for a review.

We did verify the ability of the individual 3D1 cards to do what Gigabyte claimed, so there is no reason at all to doubt the 10 claim. One of the key Engineers at Gigabyte works exclusively with AT and THG. All sites use some pictures and diagrams from press kits to save time, but we perform and report our own test results and analysis.

Yes, we did ALL of the testing ourselves. Our review took longer because we did much more extensive testing of the board, including quite a bit of overclocking tests to make sure the nVidia dual-core issue we reported in our last Intel SLI review is now fixed in this chipset.

Gary spent countless hours sniffing out the good and the not so good on this board. We also found the OC capabilities of the shipping BIOS not too exciting, and we wanted to bring you the much improved OC results from the revised BIOS.

johnsonx - Wednesday, October 12, 2005 - link

Wesley,

I don't think most of your readers actually thought what the subject line of this thread implies. There are always a few who like to throw stones of course.

In my read of the THG article a few days ago, I found myself thinking that the 10-display shot was from Gigabyte, as they had no detail shots of the display control panel for 10 monitors; nine was the most they were able to get working.

Like you, I have little doubt that 10 displays will in fact work with this board, but the 9th and 10th would have to come from either a PCI card or a PCIe card running in a x1 slot. Even x1 PCIe is faster than crusty old PCI, but it's still hardly ideal. It'd be nice if 3D1 cards could be coaxed into working in x8 slots (so that'd be 4 PCIe lanes per core - still plenty), as then you could theoretically have 4 3D1 cards for 16(!) displays.

Thanks for the information on how you did the review testing.

Regards,

Dave

AmberClad - Wednesday, October 12, 2005 - link

April Fool's Day already?!