ATI's X1000 Series: Extended Performance Testing

by Derek Wilson on October 7, 2005 10:15 AM EST - Posted in GPUs

Far Cry Performance

Crytek has done an excellent job keeping up with the times. As new technologies come out, they seem to research how to put them to use in their production game. Incorporating SM3.0 code, geometry instancing, HDR, and the like into their last patch adds value to the game, gives us a platform with which to test the current incarnation of the engine, and gives potential game engine customers a look at what they could be getting in a shipping product. We are already hearing about another patch that will further extend the impact of HDR on the game, among other things. For these tests, we crank the graphics quality settings up to very high (ultra high for water) and let the chips fall where they may. The demo that we used for this test was the built-in Regulator demo.

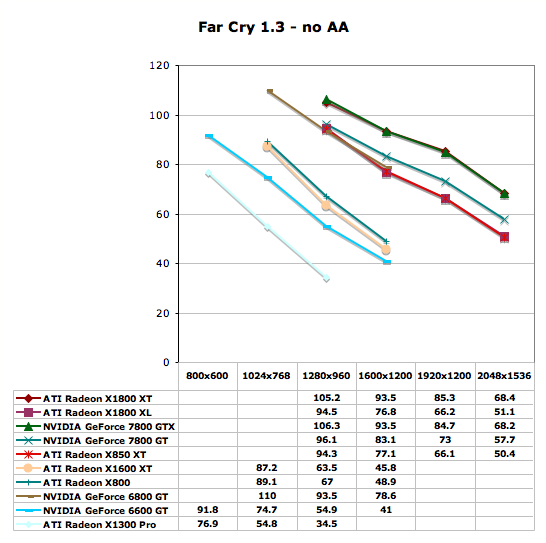

These tests show the top end ATI and NVIDIA cards running neck and neck. The 7800 GT leads the X1800 XL in performance (which is on par with the 6800 GT in the tests that overlap). The X1600 XT is able to perform better than the 6600 GT, but we should hope to see that from a card that costs over 50% more if MSRP is anywhere near street price. Again, the X1300 shouldn't be run at over 1024x768 unless the settings are dropped.

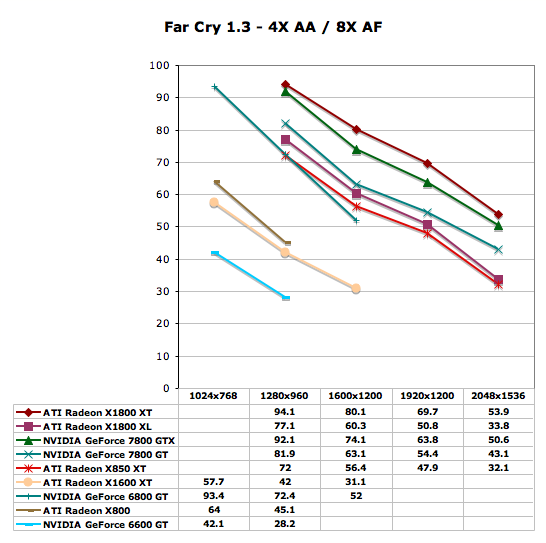

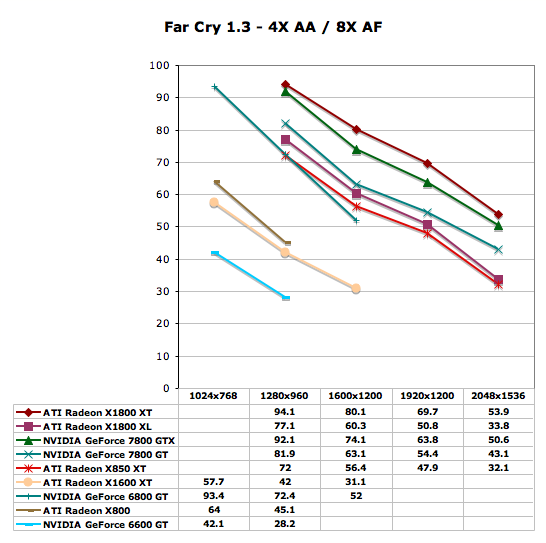

Enabling AA gives the advantage to the X1800 XT while the X1800 XL still lags behind the 7800 GT. The X1600 XT performs much better than the 6600 GT (which we wouldn't recommend running with AA).

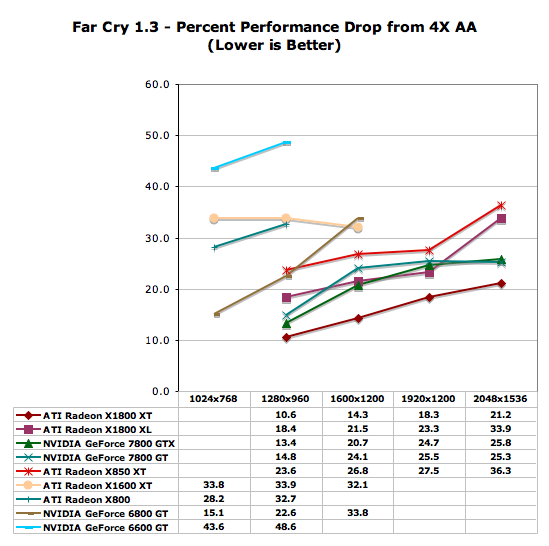

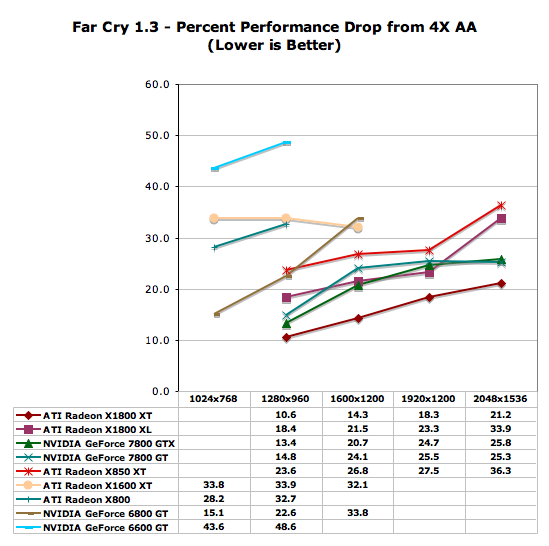

Once again, the X1800 XT handles the impact of AA better than any other card. The added memory bandwidth is likely why we keep seeing such good handling of AA. The 7800 GT and 7800 GTX both handle AA almost as well as the X1800 XL (and finally overtake the new ATI part at 2048x1536).

93 Comments

bob661 - Friday, October 7, 2005 - link

1280x960 is actually in keeping with the 4:3 aspect ratio. 1280x1024 actually stretches the height of your display although it's a little hard to tell the difference.

TheInvincibleMustard - Friday, October 7, 2005 - link

The actual physical dimension of a 1280x1024 screen is larger than a 1280x960 if the pixel size is the same -- there's no "stretching" of anything, as 5:4 is just more square than 4:3 is but you've got more pixels to cover the "more squareness" of it.

-TIM

DerekWilson - Friday, October 7, 2005 - link

It would be more of a squishing if you ran 1280x1024 on a monitor built for 4:3 with a game that didn't correctly manage the aspect ratio mapping.

The performance of 1280x1024 and 1280x960 is very similar and it's not worth testing both.

TheInvincibleMustard - Friday, October 7, 2005 - link

True enough, but most 17" and 19" LCD monitors (the monitors in question in this line of posts) are native 1280x1024, and therefore no squishing is performed.

I do agree with you that it is redundant to perform testing at both 1280x1024 and 1280x960, as those extra ~82,000 pixels don't mean a whole lot in the big picture.

-TIM

JarredWalton - Saturday, October 8, 2005 - link

Interesting... I had always assumed that 17" and 19" LCDs were still 4:3 aspect ratio screens. I just measured a 17" display that I have, and it's 13.25" x 10.75" (give or take), nearly an exact 5:4 ratio. So 1280x1024 is good for 17" and 19" LCDs, but 1280x960 would be preferred on CRTs.

TheInvincibleMustard - Saturday, October 8, 2005 - link

By Jove, I think he's got it! :D

-TIM
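
For readers following the resolution arithmetic in this thread, here is a minimal Python sketch that checks the figures quoted above: the 5:4 and 4:3 ratios, the roughly 82,000-pixel gap, and the 13.25" x 10.75" measurement. The function and variable names are our own, chosen purely for illustration.

```python
from fractions import Fraction


def aspect_ratio(width: int, height: int) -> Fraction:
    """Reduce a width x height resolution to its simplest ratio."""
    return Fraction(width, height)


# The two resolutions discussed in the comments.
ratio_1024 = aspect_ratio(1280, 1024)  # reduces to 5/4
ratio_960 = aspect_ratio(1280, 960)    # reduces to 4/3

# Extra pixels rendered at 1280x1024 compared with 1280x960.
extra_pixels = 1280 * 1024 - 1280 * 960

# Physical measurement of the 17" LCD quoted in the thread, in inches.
measured = 13.25 / 10.75

# Pick whichever standard ratio sits closest to the measured panel shape.
candidates = {"5:4": 5 / 4, "4:3": 4 / 3}
closest = min(candidates, key=lambda name: abs(candidates[name] - measured))

print(ratio_1024, ratio_960)
print(extra_pixels)
print(round(measured, 3), closest)
```

The gap works out to 81,920 pixels, or about 6.7% more than 1280x960, which is consistent with the two resolutions performing very similarly. The measured panel shape of about 1.233 sits much closer to 5:4 (1.25) than to 4:3 (about 1.333).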

bob661 - Friday, October 7, 2005 - link

That might explain why I can't tell the difference. Thanks much for the info.

intellon - Friday, October 7, 2005 - link

Bang on with the graphs in this article... top notch. I guess the difference in performance of these cards makes it less congested.

On another note, I was wondering whether it would be too much hassle to set up ONE more computer with a mass-sold CPU (say, the 3200+) and value RAM, run a couple of the different game engines on it, and post how the new cards perform? You don't have to run this "old" setup with every card ... just the new launches. It would be very helpful to common people who won't buy the FX-55.

I for one, make estimates about how much slower the cards would run on my comp, but those estimates could be much better with a slower processor.

I understand that the point of the review is to let the gpu free and keep the cpu from holding it back, but testing with a common setup is helpful for someone with limited imagination (about how the card will run on their system) or not so deep pockets.

Of course you can just go right ahead and ignore this post and I won't complain again, but if you do add such a system in the next review (it just has to be run with the new cards) I'll be the one who'll thank you deeply.

Sunrise089 - Friday, October 7, 2005 - link

2nd, even if only for a few tests

LoneWolf15 - Friday, October 7, 2005 - link

One other factor in making a choice is that there are no ATI X1000 series cards available at this point. Once again, every review site covered a paper-launch, despite railing on it in the past. No-one is willing to be the first to be scooped and say "We won't review a product that you can't buy".

I have an ATI card myself (replaced a recent nVidia card a year ago, so I've had both), but right now I'm pretty sick of card announcements for cards that aren't available. This smacks of ATI trying to boost its earnings or its rating in the eye of its shareholders, and ignoring its customers in the process. It's going to be a long time before I buy a graphics card again, but if I had to choose a vendor based on the past two years, both companies' reputations fall far short of the customer service I'd hope for.