Vendor Cards: MSI NX7800GTX

by Derek Wilson & Josh Venning on July 24, 2005 10:54 PM EST - Posted in GPUs

Overclocking

Unlike the EVGA e-GeForce 7800 GTX that we reviewed, the MSI NX7800 GTX did not come factory overclocked. The core clock was 430MHz and the memory clock was 1.20GHz, the same as our reference card. We were, however, able to overclock our MSI card a bit further than the EVGA, reaching 485MHz core and 1.25GHz memory. Our EVGA card only reached a 475MHz core, and we'll see how the numbers stand up to each other in the next section.

We used the same method as described in the EVGA article to arrive at our overclocked speeds. To recap, we raised the core and memory clock speeds in increments and ran Battlefield 2 benchmarks to test stability until the card no longer ran cleanly. Then we backed the clocks down until the card was stable and used those numbers.
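The procedure above can be sketched in a few lines of Python. This is only an illustration of the "raise until it fails, then back off" idea, not the tooling we actually used: the function name, the step sizes, and the `is_stable` callback (standing in for a clean Battlefield 2 benchmark run) are all hypothetical.

```python
from typing import Callable


def find_stable_clock(start_mhz: int, max_mhz: int, step_mhz: int,
                      backoff_mhz: int,
                      is_stable: Callable[[int], bool]) -> int:
    """Raise the clock until a test run fails, then back off until it passes."""
    clock = start_mhz
    # Phase 1: step the clock up while the benchmark still runs cleanly.
    while clock < max_mhz and is_stable(clock):
        clock = min(clock + step_mhz, max_mhz)
    # Phase 2: back the clock down in smaller steps until it is stable again.
    while clock > start_mhz and not is_stable(clock):
        clock = max(clock - backoff_mhz, start_mhz)
    return clock


# Hypothetical stand-in for a benchmark run: pretend anything above 485MHz fails.
def pretend_benchmark(mhz: int) -> bool:
    return mhz <= 485


print(find_stable_clock(430, 600, 10, 5, pretend_benchmark))
```

In practice the stability check is the expensive part, since each call represents a full benchmark loop, which is why a coarse step up followed by a finer step down is a reasonable compromise.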

For more general info about how we deal with overclocking, check the overclocking section of our last article on the EVGA e-GeForce 7800 GTX. The story doesn't end here, however. We have been doing some digging into NVIDIA's handling of clock speed adjustment and have found some troubling information.

After talking to NVIDIA at length, we have learned that it is more difficult to set the 7800 GTX to a particular clock speed than it is to achieve 18pi miles per hour in a Ferrari. Apparently, NVIDIA looks at clock speed adjustments as a "speed knob" rather than a real clock speed control. NVIDIA's clock speed adjustment does not actually move in 1 MHz increments as the Coolbits slider would have us believe. There are multiple clock partitions, with "most of the die area" being clocked at 430MHz on the reference card. This makes it difficult to say what parts of the chip are running at a particular frequency at any given time.

Presuming that we should know exactly how fast every transistor is running at all times is absurd; we don't have any such info on CPUs, and there's no problem there. When working to overclock CPUs, we look at multipliers and bus speeds, which give us good reference points. If core frequency and the clock speed slider are more like a "speed knob" and all we need is a reference point, why not pick 0 to 10? Remember when enthusiasts would go so far as to replace crystals on motherboards to overclock? Are we going to have to return to those days in order to truly know what speed our GPU is running? I, for one, certainly hope not.

We can understand the delicate situation that NVIDIA is in when it comes to revealing too much information about how their chips are clocked and designed. However, there is a minimum level of information that we need in order to understand what it means to overclock a 7800 GTX, and we just don't have it yet. NVIDIA tells us that fill rate can be used to verify clock speed, but this doesn't give us enough details to determine what's actually going on.
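To make the fill rate idea concrete, here is a minimal sketch. It assumes the simplest possible model, in which measured pixel fill rate equals core clock times the number of pixels written per clock. The function name, the 16 pixels-per-clock figure, and the fill rate value are illustrative assumptions on our part, not numbers supplied by NVIDIA.

```python
def implied_clock_mhz(fill_rate_mpixels_per_s: float,
                      pixels_per_clock: int) -> float:
    """Estimate a clock speed from a measured fill rate.

    Assumes fill rate = clock * pixels written per clock, which is a
    simplification: it ignores memory bandwidth limits and the fact that
    different parts of the chip may run at different speeds.
    """
    if pixels_per_clock <= 0:
        raise ValueError("pixels_per_clock must be positive")
    return fill_rate_mpixels_per_s / pixels_per_clock


# Hypothetical measurement: 6880 Mpixels/s with an assumed 16 pixels per clock.
print(implied_clock_mhz(6880.0, 16))
```

Even under this model, the result only describes whichever part of the chip limits the fill rate test, which is why a single fill rate number does not tell us what the other clock partitions are doing.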

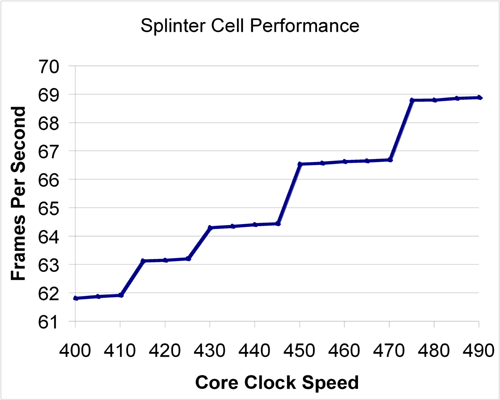

Asking NVIDIA for more information regarding how their chips are clocked has been akin to beating one's head against a wall. And so, we decided to take it upon ourselves to test a wide variety of clock speeds between 400MHz and 490MHz to try to get a better idea of what's going on. Here's a look at Splinter Cell: Chaos Theory performance over multiple core clock speeds.

As we can see, there is really no major effect on performance unless clock speed is adjusted by about 15 to 25 MHz at a time. Smaller increases do yield some differences. The most interesting aspect to note is that it takes a larger increase to have a significant effect when starting from a higher frequency. At lower frequencies, plateaus span about 10 MHz, while between 450 and 470 MHz, there is no useful increase in speed.

This data seems to indicate that each step in the driver does increase the speed of something, just not something that matters much for performance. When moving up to one of the plateaus, it seems that a multiplier for something more important (like the pixel shader hardware) gets bumped up to the next discrete level. It is difficult to say with any certainty what is happening inside the hardware without more information.
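For readers who want to look for plateaus in their own benchmark runs, here is a rough sketch of one way to do it. The function name, the tolerance, and the sample numbers are made up for illustration and are not our measured results.

```python
from typing import Dict, List, Tuple


def find_plateaus(samples: List[Tuple[int, float]],
                  tolerance: float) -> List[Dict[str, float]]:
    """Group (clock_mhz, fps) samples into plateaus.

    A sample joins the current plateau if its frame rate is within
    `tolerance` fps of the first sample of that plateau.
    """
    plateaus: List[Dict[str, float]] = []
    for clock, fps in sorted(samples):
        if plateaus and abs(fps - plateaus[-1]["fps"]) <= tolerance:
            plateaus[-1]["end"] = clock  # extend the current plateau
        else:
            plateaus.append({"start": clock, "end": clock, "fps": fps})
    return plateaus


# Hypothetical data, NOT taken from our graph.
example = [(400, 50.0), (405, 50.1), (410, 51.0), (415, 51.1), (420, 52.0)]
for p in find_plateaus(example, tolerance=0.3):
    print(p["start"], p["end"], p["fps"])
```

The tolerance should be set a little above the normal run-to-run variation of the benchmark; otherwise noise will be mistaken for a step to a new plateau.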

We will be following this issue over time as we continue to cover individual 7800 GTX cards. NVIDIA has also indicated that they may "try to improve the granularity of clock speed adjustments", but when or if that happens and what the change will bring are questions that they would not discuss. Until we know more or have a better tool for overclocking, we will continue testing cards as we have in the past. For now, let's get back to the MSI card.

42 Comments


Fluppeteer - Friday, July 29, 2005

"Advertise" is perhaps a strong word, but the PDF data sheet on the eVGA web site does say that one output is dual link (even though the main specifications say the maximum digital resolution is 1600x1200, which is nonsense, like all resolution claims, even for most single link cards).

I couldn't (last I looked) find anything about dual link support on the MSI site. But then, MSI have in the past ignored that the 6800GTo was dual link, and then claimed that their (real) 6800GT *was* dual link, and that the SiI transmitters were unnecessary... (Although I'm still mystified how the PNY AGP 6600GT seems to have dual dual link support without external transmitters.)

I'm presuming both heads have analogue output, btw (I only ask because the GTo, for some astonishing reason, only has digital output on its single link head).

Past experience (with the 6800) suggests that the reason none of the manufacturers mention it is that very few people actually know what dual link DVI *is*. A lot probably haven't tried it - there being, last I looked, only three monitors which can use it anyway, two of which are discontinued. nVidia caused a lot of confusion by claiming support in the chipset and putting an external transmitter on their reference card, which most manufacturers left off without updating their specs.

Unfortunately, nVidia seem to fob off all their tech support to the manufacturers, who aren't always qualified to answer questions - I've not found anywhere to send driver feature requests, for example. Seeing the external transmitter make it to released boards is a vast relief to me.

Now the Quadro 4500 has been announced, I'm hoping the 512MB boards will appear (and they might be DDL). Fingers crossed.

DerekWilson - Thursday, July 28, 2005

Yes. Again, the SI TMDS for dual-link is on the PCB. So far there are no 7800 cards that we have seen without dual-link on one port.

NVIDIA didn't even make this clear at their initial launch. But it is there. If we see a board without dual-link we'll let you know.

Wulvor - Monday, July 25, 2005

For that extra $4 you are also paying for a longer warranty. eVGA has a 1+1 warranty, so 1 year warranty out of the box, and another 1 year when you register online at eVGA. MSI on the other hand has a 3 year warranty, and BFG a lifetime warranty.

It must be the corporate purchaser in me, $4 is well worth the extra year (or 2), but I guess if you are going to be on the "bleeding" edge, then you are buying a new video card every 6 months anyways, so who cares?

smn198 - Monday, July 25, 2005

A suggestion:

Regarding measuring the card's noise output and the way you measured the sound

"We had to do this because we were unable to turn on the graphics card's fan without turning on the system."

Would it be possible to try and measure the voltages going to the fan when the card is idle and under full load? Then supply the fan with these voltages when the system is off using a different power supply such as a battery (which is silent) and a variable resistor.

It would also be interesting to see a graph of how the noise increases when going from idle to full load over 10 minutes (or however long it takes to reach the maximum speed) on cards which have . Instead of trying to measure the noise with the system on, again measure the voltage over time and then using your battery, variable resistor and voltage meter recreate the voltages and use this in conjunction with the voltage/time data to produce noise/time data.

Thanks

DerekWilson - Monday, July 25, 2005

We are definitely evaluating different methods for measuring sound. Thanks for the suggestions.

Just to be clear, even after hours of looping tests on the 7800 GTX overclocked to 485/625, we never once heard an audible increase in the fan's speed.

This is very much unlike our X850 parts that spin up and down frequently during any given test.

We have considered attempting to heat the environment to simulate a desert-like climate (we've gotten plenty of email from military personnel asking about heat tolerance on graphics cards), but it is more difficult than it would seem to heat the environment without causing other problems in our lab.

Suggestions are welcome.

Thanks,

Derek Wilson

at80eighty - Tuesday, July 26, 2005

"We have considered attempting to heat the environment to simulate a desert like climate [...] but it is more difficult than it would seem to heat the environment without causing other problems in our lab"

Derek, if you really wanna simulate desert-like heat in the room, may I suggest inviting Monica Belluci to your lab ... should work like a charm :p

reactor - Monday, July 25, 2005

I've been using MSI cards for a few years now. Their fans always seem to run at top speed, and I've found they usually run at higher RPMs (slightly louder) than other manufacturers' fans. I think that explains why the card is cooler while drawing more power, and why you didn't notice a difference in sound as the card was stressed. I'm not entirely certain, but that's from my own experiences with MSI cards.

Good article, looking forward to the BFG.

yacoub - Monday, July 25, 2005

"As you can see, The EVGA slightly outperforms the MSI across the board at stock speeds."

Either I'm reading it wrong or you mis-wrote that line, since I see the e-VGA normal and OC'd, the NVidia reference, and the MSI OC'd, but no MSI at stock speeds. Thus it's hard to compare the EVGA stock speeds vs the MSI stock speeds when one of them isn't on the charts.

DerekWilson - Monday, July 25, 2005

Check the bold print on the Performance page -- MSI stock performance is the same as the NVIDIA reference performance at 430MHz ...

To compare stock numbers compare the green bar to the EVGA @ 450/600

Sorry for the confusion, but we actually tested all the games a second time and came up with the exact same numbers. Rather than add another bar, we thought it'd be easier to just reference the one.

If you guys would rather see multiple bars for equivalent results across the board, we can certainly do that.

Thanks,

Derek Wilson

davecason - Monday, July 25, 2005

Since the MSI card drew a lot more power than expected but remained cooler than the eVGA card, I was thinking that some of the excess may be due to the cooling of the card itself. Maybe the fan on the MSI card works harder than the one on the eVGA card.

The people at Anandtech could test the power usage of the stock video-card cooling fans independently to see what their effect is on power load. This may explain the 6 extra watts used by the MSI card. This information might be mildly useful to a person who was already stressing out their power supply with other things (such as several hard drives). Does anyone think that is worth doing?