The Intel 12th Gen Core i9-12900K Review: Hybrid Performance Brings Hybrid Complexity

by Dr. Ian Cutress & Andrei Frumusanu on November 4, 2021 9:00 AM EST

CPU Tests: SPEC MT Performance - P and E-Core Scaling

Update Nov 6th:

We’ve finished our MT breakdown for the platform, investigating the various combinations of cores and memory configurations for Alder Lake and the i9-12900K. We're posting the detailed scores for the DDR5 results, followed by aggregate results that include DDR4 as well.

The results here solely cover the i9-12900K in various MT configurations: 8 E-cores, 8 P-cores with one thread (1T) or two threads (2T) per core, and the full 24T 8P2T+8E scenario. The tests were run on Linux because it offers an easier way to set affinities to the various cores; they are not completely comparable to the WSL results on the previous page, but should be within a small margin of error for most tests.
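As a rough illustration of what setting affinities on Linux can look like, here is a minimal Python sketch using the standard `os.sched_setaffinity` call. The article does not say which tool was actually used, and the logical-CPU numbering below (P-core threads on CPUs 0-15 with SMT siblings adjacent, E-cores on 16-23) is an assumption that should be checked on the real system, for example with `lscpu --extended`.

```python
import os

# Hypothetical logical-CPU layout for an i9-12900K under Linux.
# This numbering is an assumption, not something stated in the article.
P_CORE_THREADS = set(range(0, 16))   # 8 P-cores x 2 SMT threads
E_CORES = set(range(16, 24))         # 8 E-cores, one thread each


def p_cores_one_thread():
    """One logical CPU per P-core (assumes SMT siblings are adjacent)."""
    return set(range(0, 16, 2))


def pin_current_process(wanted):
    """Restrict this process to `wanted`, limited to CPUs it may already use.

    Returns the affinity set in effect afterwards. If none of the wanted
    CPUs are available, the affinity is left unchanged.
    """
    allowed = os.sched_getaffinity(0)
    target = set(wanted) & allowed
    if target:
        os.sched_setaffinity(0, target)
    return os.sched_getaffinity(0)


if __name__ == "__main__":
    # Example: emulate the "8 E-cores only" configuration before a benchmark run.
    print("running on CPUs:", sorted(pin_current_process(E_CORES)))
```

Under the same assumed numbering, the 8P1T, 8P2T and 8P2T+8E configurations would correspond to `p_cores_one_thread()`, `P_CORE_THREADS`, and `P_CORE_THREADS | E_CORES` respectively.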

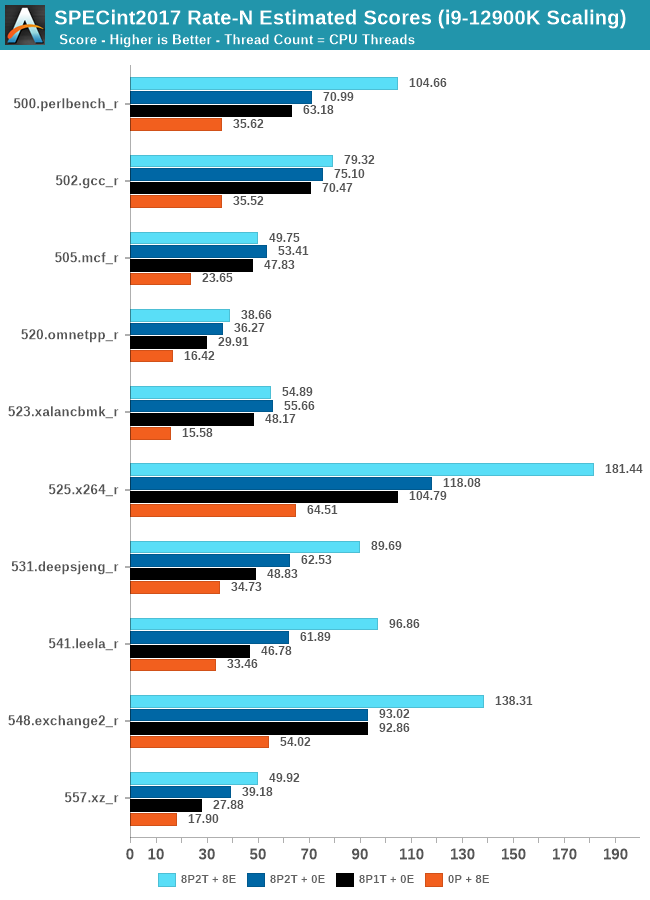

In the integer suite, the E-cores are quite powerful, reaching scores of around 50% of the 8P2T results, or more.

Many of the more core-bound workloads benefit simply from having more cores available, and these are also the workloads that gain the most when we add the 8 E-cores on top of the 8P2T results.

Workloads that are more cache-heavy or that rely on memory bandwidth, both shared resources on the chip, don’t scale well at the top end when the 8 E-cores are added. Most surprising to me was the 502.gcc_r result, which barely saw any improvement with the added 8 E-cores.

It is not surprising that more memory-bound workloads such as 520.omnetpp or 505.mcf do not scale with the added E-cores; mcf even sees a performance regression, as the added cores mean more contention on the L3 and the memory controllers.

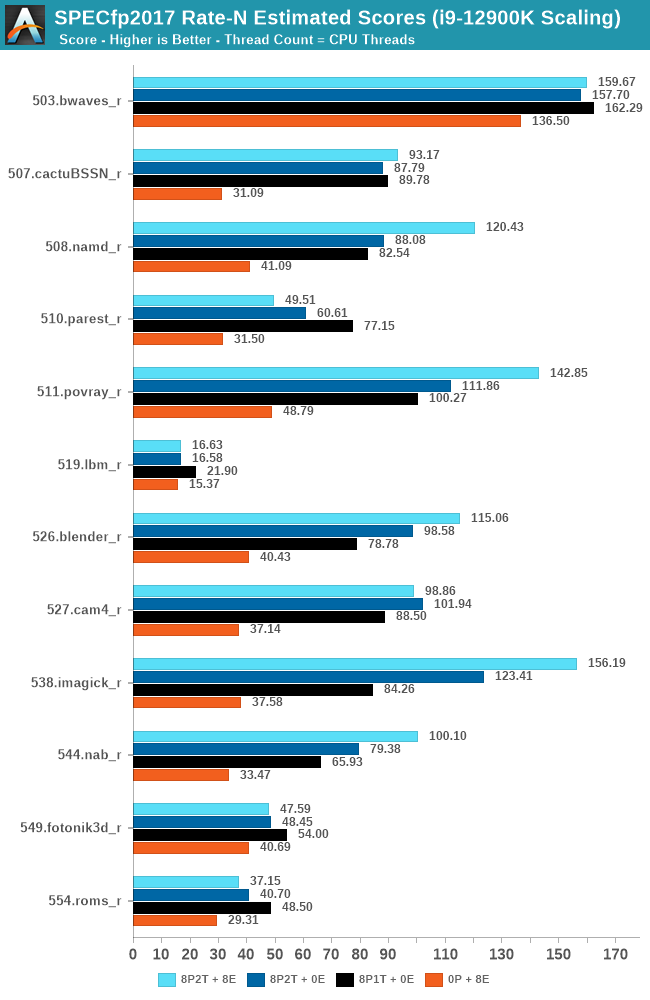

In the FP suite, the E-cores more clearly show a lower percentage of performance relative to the P-cores, and this makes sense given their design. Only a few of the more compute-bound tests, such as 508.namd, 511.povray, or 538.imagick, see larger contributions from the E-cores when they’re added on top of the P-cores.

The FP suite also has a lot more memory-hungry workloads. When a workload is limited by DRAM bandwidth, it doesn’t matter much whether it runs on E-cores or P-cores, as it’s the memory that is the bottleneck. Here, the E-cores are able to achieve very high performance figures relative to the P-cores. 503.bwaves and 519.lbm, for example, are purely DRAM-bandwidth limited, and using the E-cores in MT scenarios allows for similar performance to the P-cores, but at only 35-40W package power, versus 110-125W for the P-core result set.

Some of these workloads also see regressions in performance when adding more cores or threads, as it just means more memory traffic contention on the chip, as seen in the regressions of the 8P2T+8E and 8P2T configurations relative to the 8P1T results.
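To put the package power figures in perspective, the following short Python sketch works out the implied performance-per-watt advantage. It assumes the E-core and P-core scores are exactly equal in these bandwidth-bound tests (the text only says "similar"), so the result is a rough bound rather than a measurement.

```python
# Package power ranges quoted in the text, in watts.
E_POWER_W = (35, 40)
P_POWER_W = (110, 125)


def efficiency_gain(relative_perf=1.0):
    """Range of E-core perf/W advantage over the P-cores.

    relative_perf is the E-core score divided by the P-core score;
    1.0 (equal scores) is an assumption, not a measured value.
    """
    low = relative_perf * P_POWER_W[0] / E_POWER_W[1]
    high = relative_perf * P_POWER_W[1] / E_POWER_W[0]
    return low, high


low, high = efficiency_gain()
print(f"E-core perf/W advantage: {low:.2f}x to {high:.2f}x")
```

With equal scores this gives roughly a 2.75x to 3.57x efficiency advantage for the E-cores in DRAM-bound workloads.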

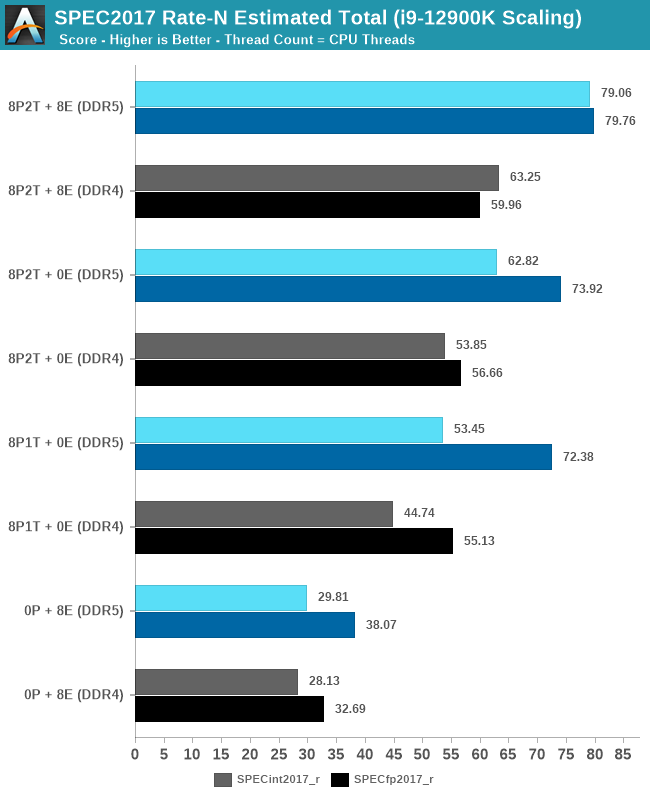

What’s most interesting here is the scaling of performance and the attribution between the P-cores and the E-cores. Focusing on the DDR5 set, the 8 E-cores are able to provide around 52-55% of the performance of 8 P-cores without SMT, and 47-51% of the P-cores with SMT. At first glance, one could argue that the 8P+8E setup is somewhat similar to a 12P setup in MT performance. However, combining both clusters raises the MT scores over 8P2T by only 25% in the integer suite and 5% in the FP suite, as we are hitting near package power limits with just 8P2T, and there are diminishing returns on performance given the shared L3. What the E-cores do seem to allow is a reduction in everyday average power usage and an increase in the efficiency of the socket, as fewer P-cores need to be active at any one time.
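The arithmetic behind the "12P" comparison and the much smaller measured gain can be sketched in a few lines of Python. The percentages are the ones quoted above; since the text does not say which end of each range belongs to which suite, the sketch evaluates both ends, and the linear-scaling model is a simplifying assumption.

```python
# Figures quoted in the text (DDR5 set).
E_VS_8P1T = (0.52, 0.55)   # 8 E-cores relative to 8 P-cores without SMT
E_VS_8P2T = (0.47, 0.51)   # 8 E-cores relative to 8 P-cores with SMT
MEASURED_GAIN = {"int": 0.25, "fp": 0.05}  # 8P2T+8E over 8P2T


def p_core_equivalents(ratio, p_cores=8):
    """P-cores (1T) that would match the 8 E-cores if scaling were linear."""
    return p_cores * ratio


def realized_share(measured_gain, standalone_ratio):
    """Fraction of the E-cores' standalone throughput seen when combined."""
    return measured_gain / standalone_ratio


for r in E_VS_8P1T:
    total = 8 + p_core_equivalents(r)
    print(f"8P+8E ~ {total:.1f} P-cores under linear scaling")

for suite, gain in MEASURED_GAIN.items():
    shares = [realized_share(gain, r) for r in E_VS_8P2T]
    print(f"{suite}: {min(shares):.0%} to {max(shares):.0%} "
          "of standalone E-core throughput realized")
```

Under these assumptions, only about half of the E-cores' standalone integer throughput, and about a tenth of their FP throughput, shows up once they are added on top of a fully loaded 8P2T configuration.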

474 Comments


mode_13h - Saturday, November 6, 2021

> So, Alder Lake is a turkey as a high-end CPU, one that should have never been released?

How do you reach that conclusion, after it blew away its predecessor and (arguably) its main competitor, even without AVX-512?

> This is because each program has to include Alder Lake AVX-512 support and
> those that don’t will cause performance regressions?

No, my point was that relying on the OS to trap AVX-512 instructions executed on E-cores and then context-switch the thread to a P-core is likely to be problematic, from a power & performance perspective. Another issue is code which autodetects AVX-512 won't see it, while running on an E-core. This can result in more than performance issues - it could result in software malfunctions if some threads are using AVX-512 datastructures while other threads in the same process aren't. Those are only a couple of the issues with enabling heterogeneous support of AVX-512, like what some people seem to be advocating for.

> Is Windows 11 able to support a software utility to disable the low-power cores
> once booted into Windows or are we restricted to disabling them via BIOS?

That's not the proposal to which I was responding, which you can see by the quote at the top of my post.

Oxford Guy - Sunday, November 7, 2021

So, you’ve stated the same thing again, that Intel knew Alder Lake couldn’t be fully supported by Windows 11 even before it (AL) was designed?

The question about the software utility is one you’re unable to answer, it seems.

mode_13h - Sunday, November 7, 2021

> The question about the software utility is one you’re unable to answer, it seems.

That's not something I was trying to address. I was only responding to @SystemsBuilder's idea that Windows should be able to manage having some cores with AVX-512 and some cores without.

If you'd like to know what I think about "the software utility", that's a fair thing to ask, but it's outside the scope of what I was discussing and therefore not a relevant counterpoint.

Oxford Guy - Monday, November 8, 2021

More hilarious evasion.

mode_13h - Tuesday, November 9, 2021

> More hilarious evasion.

Yes, evasion of your whataboutism. Glad you enjoyed it.

GeoffreyA - Sunday, November 7, 2021

"So, Intel designed and released a CPU that it knew wouldn’t be properly supported by Windows 11"

Oxford Guy, there's a difference between the concerns of the scheduler and that of AVX512. Alder Lake runs even on Windows 10. Only, there's a bit of suboptimal scheduling there, where the P and E cores are concerned.

If AVX512 weren't disabled, it would've been something of a nightmare keeping track of which cores support it and which don't. Usually, code checks at runtime whether a certain set of instructions (SSE3, AVX, etc.) are available, using the CPUID instruction or intrinsic. Stir this complex yeast into the soup of performance and efficiency cores, and there will be trouble in the kitchen.

Under this new, messy state of affairs, the only feasible option mum had, or should I say Intel, was bringing the cores onto an equal footing by locking AVX512 in the attic, and saying, no, that fellow doesn't live here.

GeoffreyA - Sunday, November 7, 2021

Also, Intel seems pretty clear that it's disabled and so forth. Doesn't seem shady or controversial to me:

https://www.intel.com/content/www/us/en/developer/...

SystemsBuilder - Saturday, November 6, 2021

Thinking a bit about what you wrote: "This will not happen". It is not easy, but it is possible… it’s a bit technical but here we go… sorry for the wall of text.

When you optimize code today (for pre-Alder Lake CPUs) to take advantage of AVX-512, you need to write two paths (at least). The application program (custom code) would first check if the CPU is capable of AVX-512 and at what level. There are many levels of AVX-512 support, and effectively you need to write customized code for each specific CPUID (class of CPUs, e.g. Ice Lake, Skylake-X, etc.), since for whatever CPU you end up running this particular program on, you would want to utilize the most favorable/relevant AVX-512 instructions. So with the custom code today (pre-Alder Lake), the scheduler would just assign a thread to an underutilized core (loosely speaking), and the custom code would check what the core is capable of and then choose the best path in real time (AVX2 and various levels of AVX-512).

The problem is that with Alder Lake not all cores are equal! BUT the custom code should have various paths already, so it is capable!… The issue that I see is that the custom code's CPU check needs to be adjusted to check core-specific capability, not CPUID-specific (one more level of granularity), AND the scheduler should schedule code with AVX-512 paths on AVX-512-capable cores by preference... What’s needed is a code change in the AVX-512 path selection logic (on the application developer, not a big deal) and compiler support that embeds scheduler-specific information about whether the specific piece of code prefers AVX-512 or not. The scheduler would then use this information to schedule in real time, and the custom code would be able to choose the right path at execution time.

It is absolutely possible and it will come with time.

I think this is not just applicable to AVX-512. I think in the future P and E cores might differ in more than just AVX-512 (they might diverge much more than that), so the scheduler needs to be made aware of what a thread prefers and what each core is capable of before it schedules each thread. It is the responsibility of the custom code to have multiple paths (whether they want to utilize AVX-512 or not).
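The per-core capability check described in this comment can be sketched in Python as follows. This is only an illustration of the idea, not how any shipping OS or compiler does it: it assumes the OS exposes a separate flag list per logical CPU in the Linux `/proc/cpuinfo` text format, and the helper names and sample text are hypothetical. It also does nothing about a thread being moved to a different core after the check, which is the failure mode raised earlier in the thread.

```python
def avx512_by_cpu(cpuinfo_text):
    """Map each logical CPU number to whether its flag list has avx512f."""
    result = {}
    current = None
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        key = key.strip()
        if key == "processor":
            current = int(value.strip())
        elif key == "flags" and current is not None:
            result[current] = "avx512f" in value.split()
    return result


def choose_path(cpu, caps):
    """Pick a code path for the CPU the thread is currently running on."""
    return "avx512" if caps.get(cpu, False) else "avx2"


# Made-up two-CPU sample in /proc/cpuinfo format (not real 12900K output).
SAMPLE = """processor\t: 0
flags\t\t: fpu sse2 avx avx2 avx512f
processor\t: 1
flags\t\t: fpu sse2 avx avx2
"""

caps = avx512_by_cpu(SAMPLE)
print(caps, choose_path(0, caps), choose_path(1, caps))
```

On a real Linux system the same function could be fed the contents of `/proc/cpuinfo`; whether the flags actually differ per core depends on the kernel and firmware settings.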

SystemsBuilder - Saturday, November 6, 2021

Old .exe files which are not adjusted and not recompiled for Alder Lake (code that does not recognize Alder Lake) would simply automatically regress to AVX2, and the scheduler would not care which CPU to schedule them on. Basically that is what's happening today if you do not enable AVX-512 in the ASUS BIOS.

Net net: you could make it work.

mode_13h - Saturday, November 6, 2021

> old .exe which are not adjusted and are not recompiled for Alder Lake (code does
> not recognize Alder Lake) would simply automatically regress to AVX2

So, like 98% of shipping AVX-512 code, by the time Raptor Lake is introduced?

What you're proposing is a lot of work for Microsoft, only to benefit a very small number of applications. I think Intel would rather that people who need those apps simply buy a CPU which officially supports AVX-512 (or maybe switch off their E-cores and enable AVX-512 in BIOS).