Apple's M1 Pro, M1 Max SoCs Investigated: New Performance and Efficiency Heights

by Andrei Frumusanu on October 25, 2021 9:00 AM EST - Posted in

- Laptops

- Apple

- MacBook

- Apple M1 Pro

- Apple M1 Max

Huge Memory Bandwidth, but not for every Block

One of the most intriguing aspects of the M1 Max, and to a lesser extent the M1 Pro, is the massive memory bandwidth available to the SoC.

Apple was keen to market its 400GB/s figure during the launch, but the number is so far out there that it leaves many open questions about how the chip is actually able to take advantage of that much bandwidth, which makes it one of the first things to investigate.

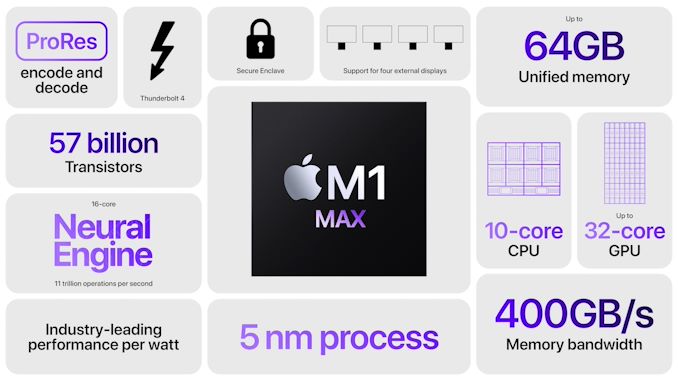

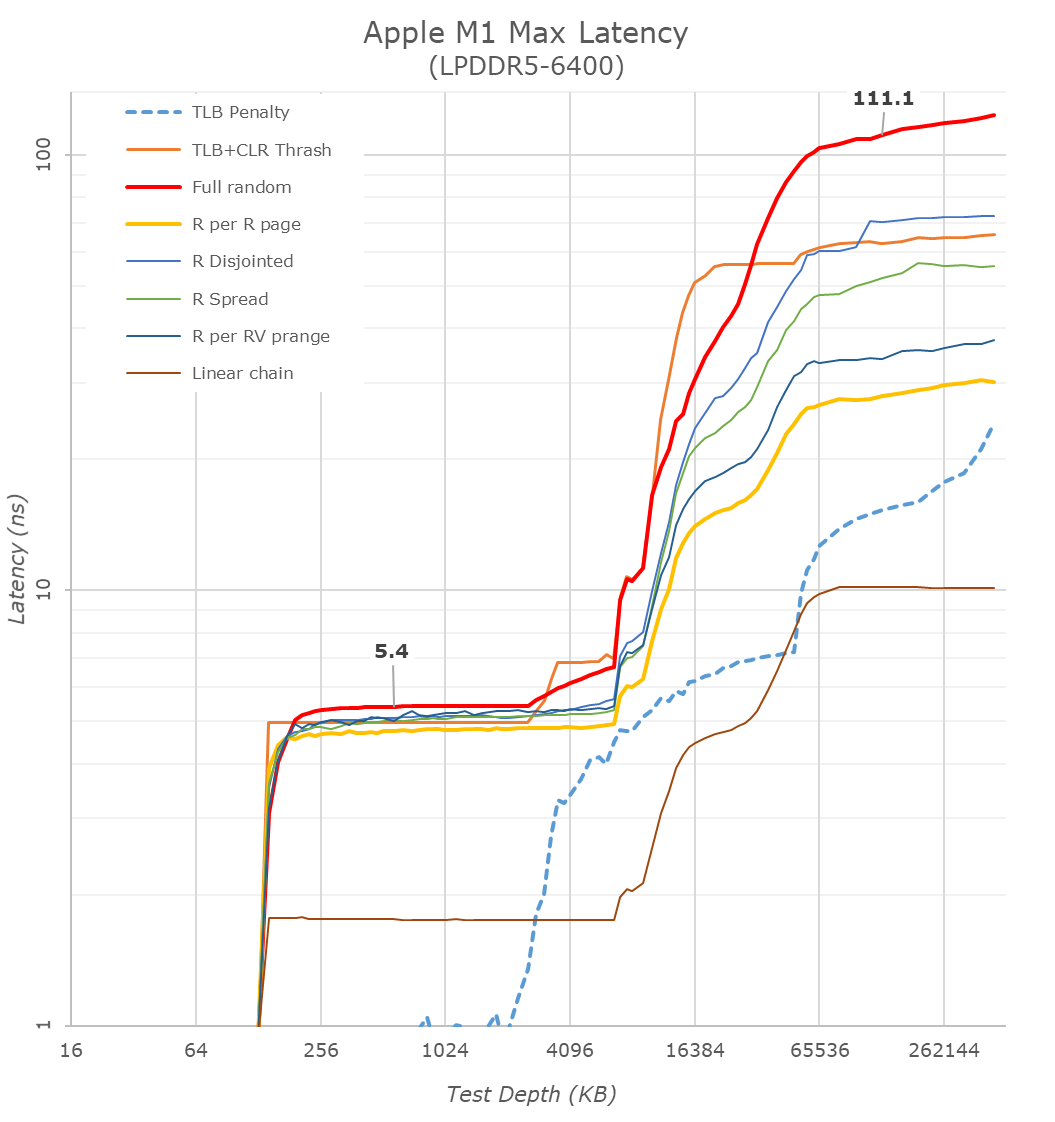

Starting off with our memory latency tests, the new M1 Max changes system memory behaviour quite significantly compared to what we’ve seen on the M1. On the core and L2 side of things there haven’t been any changes, and consequently the results don’t differ much: it’s still a core peaking at 3.2GHz, with a 128KB L1D at a 3-cycle load-load latency, and a 12MB L2 cache.

Things are quite different once we reach the system level cache (SLC): instead of 8MB, on the M1 Max it’s now 48MB large, and it is also a lot more noticeable in the latency graph. While much larger, it’s also evidently slower than the M1’s SLC. The exact figures depend on the access pattern, but even the linear chain access shows that data has to travel a longer distance than on the M1 and the corresponding A-series chips.
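Latency tests of this kind are commonly built on pointer chasing: a buffer is laid out as a chain of indices so that each load depends on the result of the previous one, and the chain is walked either sequentially or in a random order that defeats the prefetchers. The following is a hypothetical sketch of how such chains can be constructed, not the test suite used for these measurements; the function names are illustrative, and a “linear” chain is taken here to mean a sequential walk (an assumption). Python’s interpreter overhead dwarfs cache latencies, so actual timing requires native code.

```python
from __future__ import annotations

import random


def linear_chain(n: int) -> list[int]:
    """Chain where slot i points to i + 1 (wrapping to 0): a sequential walk."""
    return [(i + 1) % n for i in range(n)]


def random_chain(n: int, seed: int = 0) -> list[int]:
    """Single random cycle through all n slots, built with Sattolo's algorithm.

    Following i -> chain[i] visits every slot exactly once before returning,
    so the next address is unpredictable to a stride prefetcher.
    """
    rng = random.Random(seed)
    chain = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # j in [0, i - 1]; excluding i is what forces one cycle
        chain[i], chain[j] = chain[j], chain[i]
    return chain


def chase(chain: list[int], steps: int, start: int = 0) -> int:
    """Dependent loads: each index is only known once the previous load completes."""
    idx = start
    for _ in range(steps):
        idx = chain[idx]
    return idx


def cycle_length(chain: list[int], start: int = 0) -> int:
    """Number of hops until the walk returns to its starting slot."""
    idx, hops = chain[start], 1
    while idx != start:
        idx, hops = chain[idx], hops + 1
    return hops
```

In a native implementation, each slot would typically be spaced at least a cache line apart, and the chain length would be swept from a few KB up past the SLC and into DRAM to produce a latency curve like the one above.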

DRAM latency goes up this generation, even though on paper the M1 Max’s memory is faster in terms of both frequency and bandwidth. At a comparable 128MB test depth, the new chip is roughly 15ns slower. The larger SLC, the more complex chip fabric, and possibly worse timings on the new LPDDR5 memory could all contribute to the regression we’re seeing here. In practical terms, because the SLC is so much bigger this generation, workloads should still see lower effective latencies on the M1 Max thanks to higher cache hit rates, so performance shouldn’t regress.
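The trade-off between a larger-but-slower SLC and slower DRAM can be sketched with a simple average-latency model: for accesses that miss the L2, the effective latency is h * t_SLC + (1 - h) * t_DRAM, where h is the SLC hit rate. The function names and every input below other than the +15ns DRAM regression are hypothetical placeholders, not measured values; the point is only to show how a higher hit rate can offset a higher DRAM latency.

```python
def effective_latency_ns(slc_hit_rate: float, slc_ns: float, dram_ns: float) -> float:
    """Average latency of L2 misses: SLC hits cost slc_ns, SLC misses cost dram_ns."""
    if not 0.0 <= slc_hit_rate <= 1.0:
        raise ValueError("hit rate must be in [0, 1]")
    return slc_hit_rate * slc_ns + (1.0 - slc_hit_rate) * dram_ns


def break_even_hit_rate(old_hit: float, old_slc: float, old_dram: float,
                        new_slc: float, new_dram: float) -> float:
    """SLC hit rate the new chip needs to match the old chip's average latency."""
    target = effective_latency_ns(old_hit, old_slc, old_dram)
    # Solve h * new_slc + (1 - h) * new_dram = target for h.
    return (new_dram - target) / (new_dram - new_slc)


# Hypothetical inputs, chosen only for illustration (not measurements):
# old chip: 30ns SLC, 100ns DRAM, 50% SLC hit rate
# new chip: 40ns SLC, 115ns DRAM (the +15ns DRAM regression from the text)
needed = break_even_hit_rate(0.5, 30.0, 100.0, 40.0, 115.0)
print(f"new chip breaks even at an SLC hit rate of {needed:.0%}")
```

With those made-up inputs the new chip needs roughly a 67% SLC hit rate to break even; any workload whose working set fits into 48MB but not into 8MB would comfortably exceed the old chip’s hit rate.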

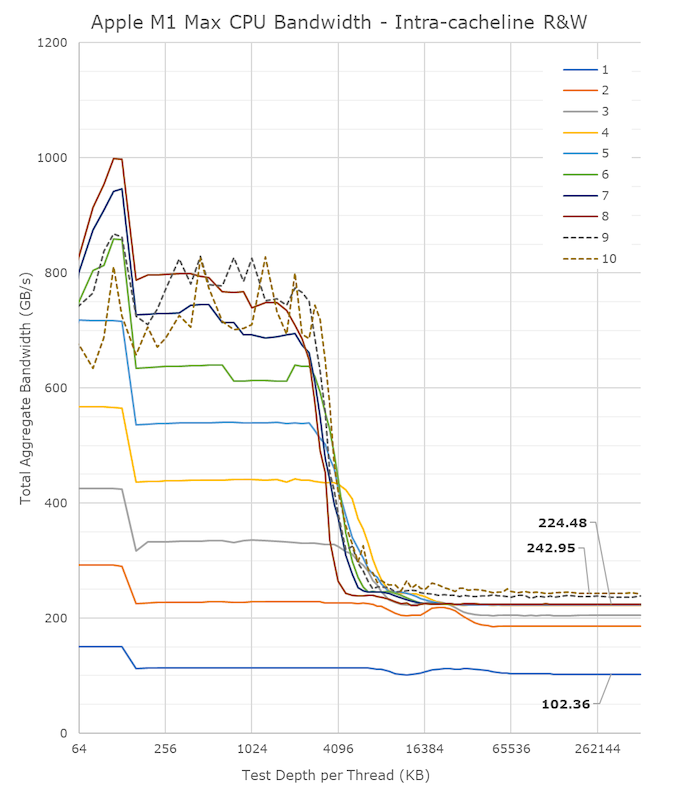

A lot of people in the HPC audience were extremely intrigued to see a chip with such massive bandwidth, not because they care about the GPU or other offload engines on the SoC, but because of the possibility that the CPUs could access such immense bandwidth, something otherwise only achievable on larger server-class CPUs that cost many times what the new MacBook Pros sell for. It was also one of the first things I tested: exactly how much bandwidth the CPU cores have access to.

Unfortunately, the news here isn’t the best-case scenario we had hoped for, as the M1 Max isn’t able to fully saturate the SoC’s bandwidth from the CPU side alone.

From a single-core perspective, meaning from a single software thread, things look very good for the chip, as it’s able to stress the memory fabric at up to 102GB/s. This is extremely impressive and outperforms any other design in the industry by multiple factors. We had already noted that the M1 was able to fully saturate its memory bandwidth with a single core, and that the bottleneck there had been the DRAM itself. On the M1 Max, it seems that we’re hitting the limit of what a core can do, or more precisely, a limit of what the CPU cluster can do.
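Little’s law offers one way to reason about why a single core tops out: sustained bandwidth equals the amount of data in flight divided by latency, so 102GB/s at a given DRAM latency implies a certain number of outstanding cache-line requests. The sketch below assumes a 128-byte cache line and uses a 100ns latency purely as a hypothetical round number; neither the function names nor that latency come from our measurements.

```python
def bytes_in_flight(bandwidth_gbs: float, latency_ns: float) -> float:
    """Little's law: data in flight = throughput x latency (GB/s x ns = bytes)."""
    return bandwidth_gbs * latency_ns


def lines_in_flight(bandwidth_gbs: float, latency_ns: float, line_bytes: int = 128) -> float:
    """Outstanding cache-line misses needed to sustain bandwidth_gbs at latency_ns.

    line_bytes defaults to 128, an assumption about the line size.
    """
    return bytes_in_flight(bandwidth_gbs, latency_ns) / line_bytes


# 102GB/s is the single-thread figure from the text; 100ns is a hypothetical latency.
print(f"{lines_in_flight(102.0, 100.0):.1f} lines in flight")
```

Under those assumptions, roughly 80 cache lines would have to be outstanding at once. Since a core or cluster can only track a finite number of misses, a ceiling like the one observed is plausible, though this model alone can’t say where the actual hardware limit sits.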

The small hump between 12MB and 64MB should be the 48MB SLC. The drop in bandwidth at the 12MB mark signals that the core is somehow limited in bandwidth when evicting cache lines from the L2 back out to the rest of the memory system. Our test here consists of reading, modifying, and writing back cache lines, with a 1:1 read/write ratio.
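The read-modify-write pattern and its traffic accounting can be illustrated as below. This is a hypothetical, deliberately simple sketch rather than our actual test, since pure Python is far too slow to measure memory bandwidth. It also assumes that both the read and the write-back are counted toward the reported figure, so each pass moves twice the working set.

```python
def rmw_traffic_bytes(working_set_bytes: int, passes: int = 1) -> int:
    """Traffic for a read-modify-write sweep with a 1:1 read/write ratio.

    Assumes each line is read once and written back once per pass, and that
    both directions are counted, so traffic is twice the working set.
    """
    return 2 * working_set_bytes * passes


def bandwidth_gbs(traffic_bytes: int, seconds: float) -> float:
    """Convert bytes moved over an elapsed time into GB/s (1 GB = 1e9 bytes)."""
    return traffic_bytes / seconds / 1e9


def rmw_sweep(buf: bytearray, passes: int = 1) -> None:
    """Illustrative access pattern only: read each byte, modify it, write it back in place."""
    for _ in range(passes):
        for i in range(len(buf)):
            buf[i] = (buf[i] + 1) & 0xFF  # read, increment (wrapping at 255), write back
```

Because every line that is read also becomes dirty, it has to be written back when evicted, which is why eviction bandwidth out of the L2 matters for this test once the working set exceeds 12MB.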

Going from one thread to two, the system actually spreads the workload across the SoC’s two performance clusters, so each thread sits on its own cluster and has full access to that cluster’s 12MB of L2. The “hump” after 12MB shrinks and now ends earlier, at around 24MB, which makes sense as the 48MB SLC is now shared between two cores. Bandwidth here increases to 186GB/s.

Adding a third thread introduces a bit of an imbalance across the clusters, and DRAM bandwidth rises to 204GB/s. A fourth thread lands us at 224GB/s, and this appears to be the limit of what the CPUs can extract from the SoC fabric, as adding further cores and threads beyond this point does not increase DRAM bandwidth at all. Only when the E-cores, which sit in their own cluster, are added does bandwidth jump up again, to a maximum of 243GB/s.
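Tabulating the figures quoted above makes the saturation easier to see. The values come straight from the text; the 8-thread entry reflects the statement that P-core threads beyond four add no DRAM bandwidth, and the helper function is purely illustrative.

```python
from __future__ import annotations

# DRAM bandwidth figures quoted in the text, in GB/s.
MEASURED_GBS = {
    "1 thread": 102.0,
    "2 threads": 186.0,
    "3 threads": 204.0,
    "4 threads": 224.0,
    "8 threads (all P-cores)": 224.0,
    "+ E-cores": 243.0,
}


def scaling_table(results: dict[str, float]) -> list[tuple[str, float, float]]:
    """Return (label, speedup vs. first entry, GB/s gained vs. previous entry)."""
    base = next(iter(results.values()))
    rows: list[tuple[str, float, float]] = []
    prev = None
    for label, gbs in results.items():
        gain = 0.0 if prev is None else gbs - prev
        rows.append((label, gbs / base, gain))
        prev = gbs
    return rows


for label, speedup, gain in scaling_table(MEASURED_GBS):
    print(f"{label:>24}: {speedup:4.2f}x  (+{gain:.0f} GB/s)")
```

The second thread adds 84GB/s, the third and fourth only 18GB/s and 20GB/s, further P-core threads nothing, and the E-cores another 19GB/s.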

While 243GB/s is massive and overshadows any other design in the industry, it’s still quite far from the 409GB/s the chip is capable of. More importantly for the M1 Max, it’s only slightly higher than the 204GB/s limit of the M1 Pro, so from a CPU-only perspective, it doesn’t appear to make sense to get the Max if one is focused purely on CPU bandwidth.
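The 409GB/s figure follows from the memory interface: the M1 Max uses a 512-bit LPDDR5-6400 interface and the M1 Pro a 256-bit one, so theoretical peak bandwidth is the bus width in bytes times the transfer rate. The sketch below, with an illustrative helper name, also shows what share of that peak the 243GB/s CPU result represents.

```python
def peak_dram_gbs(bus_width_bits: int, transfer_rate_mts: int) -> float:
    """Theoretical peak DRAM bandwidth in GB/s: bytes per transfer x MT/s / 1000."""
    return bus_width_bits / 8 * transfer_rate_mts / 1000


M1_MAX_PEAK = peak_dram_gbs(512, 6400)  # 409.6 GB/s
M1_PRO_PEAK = peak_dram_gbs(256, 6400)  # 204.8 GB/s

cpu_share = 243 / M1_MAX_PEAK  # fraction of peak reached by all CPU cores in the test
print(f"M1 Max peak {M1_MAX_PEAK:.1f} GB/s, CPU cores reached {cpu_share:.0%}")
```

By this arithmetic, the CPU cores reach roughly 59% of the M1 Max’s theoretical peak, while the M1 Pro’s theoretical ceiling is 204.8GB/s.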

That raises the question: why does the M1 Max have such massive bandwidth? The GPU naturally comes to mind; however, in my testing I’ve had extreme difficulty finding workloads that would stress the GPU enough to take advantage of the available bandwidth. Granted, part of this is down to a lack of suitable workloads, but in actual 3D rendering and benchmarks, I haven’t seen the GPU use more than 90GB/s (measured via system performance counters). While I’m sure there’s some productivity workload out there where the GPU is able to stretch its legs, we haven’t been able to identify one yet.

That leaves everything else on the SoC: the media engine, the NPU, and workloads that simply stress all parts of the chip at the same time. The new media engines on the M1 Pro and Max are now able to decode and encode ProRes RAW formats. The clip we tested is a 5K 12-bit sample with a bitrate of 1.59Gbps, and the M1 Max is not only able to play it back in real time, it’s able to do so at multiple times the speed, with seamless, immediate seeking. Doing the same thing on my 5900X machine results in single-digit frame rates. SoC DRAM bandwidth while seeking was around 40-50GB/s. I imagine that workloads stressing the CPU, GPU, and media engines all at the same time would be able to take advantage of the full system memory bandwidth, allowing the M1 Max to stretch its legs and differentiate itself more from the M1 Pro and other systems.
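For a sense of scale, converting the clip’s bitrate to bytes shows how small the compressed stream is relative to the DRAM traffic observed while seeking. This is simple arithmetic on the figures from the text; the helper name is illustrative, and attributing the gap to decoded frames and intermediate buffers is an assumption rather than something we measured.

```python
def gbit_to_gbyte(gbps: float) -> float:
    """Convert a bitrate in Gbit/s to GB/s (8 bits per byte)."""
    return gbps / 8


STREAM_GBYTES = gbit_to_gbyte(1.59)  # ProRes RAW clip bitrate from the text, about 0.2 GB/s

for observed_gbs in (40, 50):  # DRAM bandwidth observed while seeking
    ratio = observed_gbs / STREAM_GBYTES
    print(f"{observed_gbs} GB/s is ~{ratio:.0f}x the compressed stream at 1x playback")
```

Even allowing for playback at several times real-time speed, the compressed input accounts for only a small fraction of the observed DRAM traffic.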

493 Comments


vlad42 - Monday, October 25, 2021

And there you go making purely speculative claims about the quality of the ports without any factual basis. I could similarly make absurd claims, such as that every benchmark Intel's CPU loses is just because of a bad port. Provide documented evidence that it is a bad port (and not bad Apple drivers, thermal throttling because they would not turn on the fans until the chip hit 85C, etc.), as you are the one making that claim.

Face it, in the real-world benchmarks this article provides, AMD's and Nvidia's GPUs are roughly 50% faster than Apple's M1 Max GPU.

Also, a full node shrink and integrating a dGPU into the SoC would make it much more energy efficient. The node shrink should be obvious, and this site has repeatedly demonstrated the significant energy-efficiency benefits of integrating discrete components, such as GPUs, into SoCs.

jospoortvliet - Wednesday, October 27, 2021

Well, they are 100% bad ports, as this GPU didn't exist. The games are written for a different platform, different GPUs and different drivers. That they perform far from optimally must be obvious as fsck: driver optimization for specific games, and game optimization for specific cards, vendors and even drivers, usually make the difference between AMD and Nvidia, and a 20-50% gap between entirely unoptimized (this) and final is not even remotely rare. So yeah, this is an absolute worst case. And Aztec Ruins shows the potential when (mildly?) optimized: nearly 3080 levels of performance.

Blastdoor - Monday, October 25, 2021

Apple's GPU isn't magic, but the advantage is real and it's not just the node. Apple has made a design choice to achieve a given performance level through more transistors rather than more Hz. This is true of both their CPU and GPU designs, actually. PC OEMs would rather pay less for a smaller, hotter chip and let their customers eat the electricity costs and the inconvenience of shorter battery life and hotter devices. Apple's customers aren't PC OEMs, though; they're real people. And not just any real people, real people with $$ to spend and good taste.

markiz - Tuesday, October 26, 2021

When you say "Apple has made a design choice", who in fact made that choice? Can it be attributed to an individual?

Also, why is nobody else making this choice? Simply economics, or other reasons?

markiz - Tuesday, October 26, 2021

Apple customers having $$ and taste can't exactly be true at a time when 60% of the USA has an iPhone. Every loser these days has an iPhone.

I know you were likely being specific to MacBook Pros, so I guess both COULD be true, but it does sound very bad to say it.

michael2k - Monday, October 25, 2021

That would be true if there were an AMD or NVIDIA GPU manufactured on TSMC's N5P node.

Since there isn't, a 65W Apple GPU will perform like a 93W AMD GPU at N7, and slightly higher still for an NVIDIA GPU at Samsung 8nm.

That is probably the biggest reason they're so competitive. At 5nm they can fit far more transistors and clock them far lower than AMD or NVIDIA. In a desktop, you can imagine they could clock higher than 1.3GHz to push performance even further: 2x perf at 2.6GHz, and power usage would only go up from 57W to 114W if there is no need to increase voltage to drive the GPU that fast.

Wrs - Monday, October 25, 2021

All the evidence says the M1 Max has more resources than, and outperforms, the RTX 3060 mobile. But throw crappy/Rosetta code at the former and performance can very well turn into a wash. I don't expect that to change, as Macs are mainly mobile and AAA gaming doesn't originate on mobile because of the restrictive thermals. It's just that Windows laptops are optimized for the exact same code as the desktops, so they have an easy time outperforming the M1s on games originating on Windows.

When I wanna game seriously, I use a Windows desktop or a console, which outperforms any laptop by the same margin as Windows beats macOS/Rosetta in game efficiency. TDP is 250-600W (the consoles are more efficient because of Apple-like integration). Any gaming I'd do on a Windows laptop or an M1 is just casual. There are plenty of games already optimized for M1, btw; they started on iOS. /shrug

Blastdoor - Tuesday, October 26, 2021

As things stand now, the Windows advantage in gaming is huge, no doubt.

But any doubt about Apple's commitment to the Mac must surely be gone now. Apple has invested serious resources in the Mac, from top to bottom. If they've gone to all the work of creating Metal and these killer SoCs, why not take one more step and invest some money and time in getting optimized AAA games onto these machines? At this point, with so many pieces in place, it almost seems silly not to make that effort.

techconc - Monday, October 25, 2021

It's hard to speak about these GPUs' gaming performance when the games you chose to run for your benchmark are Intel-native and have to run under emulation. That's not exactly a showcase for native gaming performance.

sean8102 - Tuesday, October 26, 2021

What games could they have used? The only two somewhat demanding ARM-native macOS games are WoW and Baldur's Gate 3.