AMD Threadripper Pro Review: An Upgrade Over Regular Threadripper?

by Dr. Ian Cutress on July 14, 2021 9:00 AM EST

CPU Tests: Office and Science

Our previous set of ‘office’ benchmarks has often been a mix of science and synthetics, so this time we wanted to keep our office section purely on real-world performance.

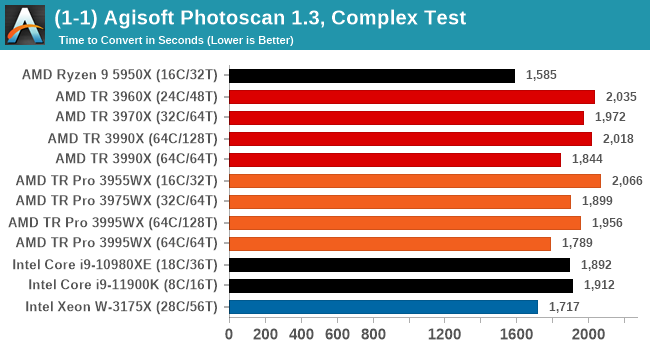

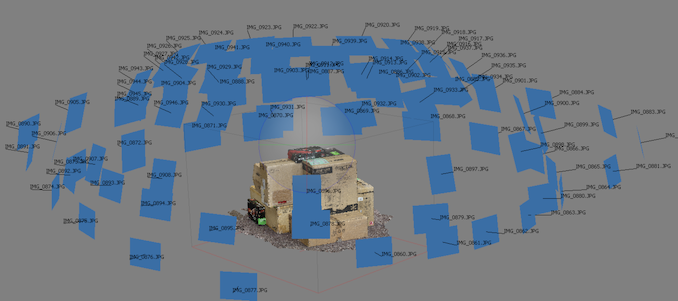

Agisoft Photoscan 1.3.3

The concept of Photoscan is to translate many 2D images into a 3D model, so the more detailed the images, and the more of them you have, the better the final 3D model in both spatial accuracy and texturing accuracy. The algorithm has four stages; some parts of these stages are single-threaded and others multi-threaded, with some cache/memory dependency as well. For the more variably threaded portions of the workload, features such as Speed Shift and XFR can take advantage of CPU stalls or downtime, giving sizeable speedups on newer microarchitectures.

For the update to version 1.3.3, the Agisoft software now supports command line operation. Agisoft provided us with a set of new images for this version of the test, and a Python script to run it. We’ve modified the script slightly by changing some quality settings for the sake of the benchmark suite length, as well as adjusting how the final timing data is recorded. The Python script dumps the results file in the format of our choosing. For our test we obtain the time for each stage of the benchmark, as well as the overall time.
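The per-stage and overall timing described above can be sketched with the standard library alone. This is a minimal illustration under stated assumptions: the stage names and the placeholder work are hypothetical, and the real script drives Agisoft's own Python interface, which is not reproduced here. Results are written as simple `name,seconds` lines, as one example of a results format of our choosing.

```python
import time
from typing import Callable, Dict, List, Tuple


def time_stages(stages: List[Tuple[str, Callable[[], None]]]) -> Dict[str, float]:
    """Run each (name, fn) stage in order; record wall-clock seconds per stage plus the total."""
    results: Dict[str, float] = {}
    total_start = time.perf_counter()
    for name, fn in stages:
        start = time.perf_counter()
        fn()
        results[name] = time.perf_counter() - start
    results["total"] = time.perf_counter() - total_start
    return results


def format_results(results: Dict[str, float]) -> str:
    """One 'name,seconds' line per entry (a hypothetical results-file format)."""
    return "\n".join(f"{name},{secs:.3f}" for name, secs in results.items())


if __name__ == "__main__":
    # Hypothetical placeholder stages standing in for the four Photoscan stages.
    demo = [(f"stage{i}", lambda: time.sleep(0.01)) for i in range(1, 5)]
    print(format_results(time_stages(demo)))
```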

Photoscan has variable thread scaling, so while in general we see better results with more threads, the frequency of the cores comes into play when only 1-16 threads are needed in those portions of the calculation. As a result, the 64C/64T configurations (SMT disabled) do better here, and TR Pro has a slight advantage over TR due to its higher memory bandwidth. Nonetheless, the consumer R9 5950X wins out.
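A toy Amdahl's-law style model shows why a 16-core chip with higher clocks can beat 64-core parts on a workload like this. Each hypothetical segment scales perfectly up to its own thread limit and runs at a fixed clock; the work amounts, thread limits, core counts and clocks are all made-up illustration values, not Photoscan measurements or real chip specifications.

```python
from typing import List, Tuple

# A segment is (work in arbitrary units at 1 GHz on one core, max threads it can use).
Segment = Tuple[float, int]


def runtime(segments: List[Segment], cores: int, ghz: float) -> float:
    """Toy model: each segment scales perfectly up to min(cores, its thread limit) at a fixed clock."""
    total = 0.0
    for work, max_threads in segments:
        usable = min(cores, max_threads)
        total += work / (usable * ghz)
    return total


if __name__ == "__main__":
    # Hypothetical workload: a serial part, a part that stops scaling at 16 threads,
    # and a part that scales to 128 threads. Lower runtime is better.
    workload = [(100.0, 1), (400.0, 16), (400.0, 128)]
    print(f"16 cores @ 4.5 GHz: {runtime(workload, 16, 4.5):.2f}")  # 33.33
    print(f"64 cores @ 3.5 GHz: {runtime(workload, 64, 3.5):.2f}")  # 37.50
```

The extra 48 cores only help the last segment, while the clock deficit slows down every segment, which is the same shape of trade-off described for Photoscan.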

Science

In this version of our test suite, all the science-focused tests that aren’t ‘simulation’ work are now in our science section. This includes Brownian Motion, calculating digits of Pi, molecular dynamics, and, for the first time, an artificial intelligence benchmark covering both inference and training that works under Windows using Python and TensorFlow. Where possible, these benchmarks have been optimized with the latest vector instructions, except for the AI test: we were told that while it uses Intel’s Math Kernel Library, that library is optimized more for Linux than for Windows, so it gives an interesting result when unoptimized software is used.

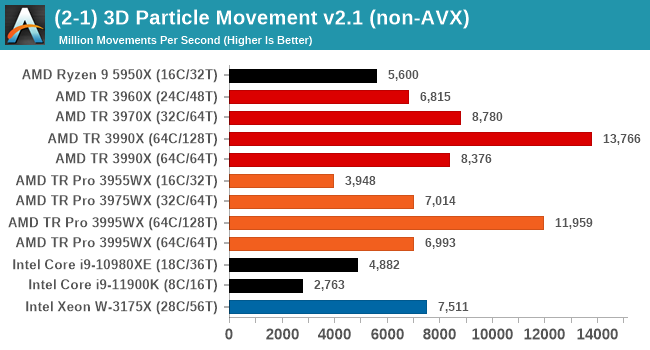

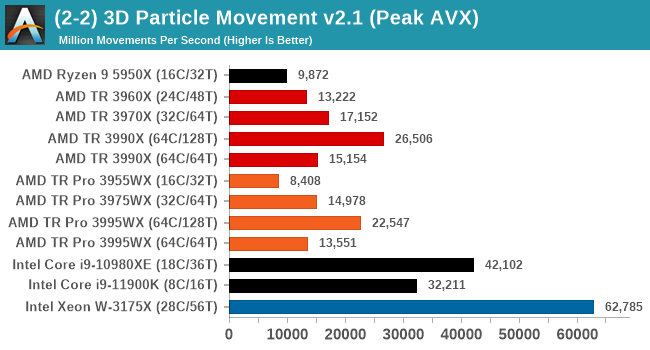

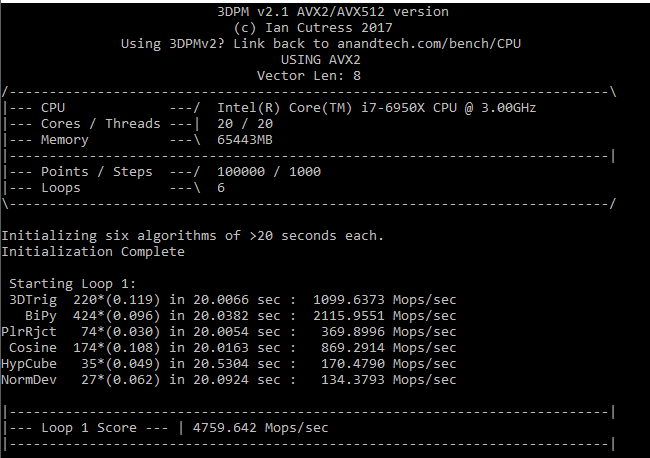

3D Particle Movement v2.1: Non-AVX and AVX2/AVX512

This is the latest version of this benchmark designed to simulate semi-optimized scientific algorithms taken directly from my doctorate thesis. This involves randomly moving particles in a 3D space using a set of algorithms that define random movement. Version 2.1 improves over 2.0 by passing the main particle structs by reference rather than by value, and by decreasing the number of double->float->double recasts the compiler was adding in.
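One common way to move a particle in a uniformly random 3D direction is to sample z = cos(θ) uniformly in [-1, 1] and an azimuth φ uniformly in [0, 2π), which gives a direction uniformly distributed on the unit sphere. The sketch below uses that method as an assumption for illustration; it is not taken from the 3DPM source, which defines its own set of movement algorithms, and the function and parameter names are hypothetical.

```python
import math
import random
from typing import List


def move_particles(positions: List[List[float]], steps: int, step_len: float,
                   rng: random.Random) -> None:
    """Move each [x, y, z] particle `steps` times by `step_len` in a uniformly random direction, in place."""
    for p in positions:
        for _ in range(steps):
            z = rng.uniform(-1.0, 1.0)             # cos(theta), uniform in [-1, 1]
            phi = rng.uniform(0.0, 2.0 * math.pi)  # azimuth angle
            r = math.sqrt(1.0 - z * z)             # sin(theta)
            p[0] += step_len * r * math.cos(phi)
            p[1] += step_len * r * math.sin(phi)
            p[2] += step_len * z


if __name__ == "__main__":
    particles = [[0.0, 0.0, 0.0] for _ in range(4)]
    move_particles(particles, steps=1000, step_len=1.0, rng=random.Random(42))
    print(particles)
```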

The initial version of v2.1 is a custom C++ binary of my own code, and flags are in place to allow for multiple loops of the code with a custom benchmark length. By default this version runs six times and outputs the average score to the console, which we capture with a redirection operator that writes to file.

For v2.1, we also have a fully optimized AVX2/AVX512 version, which uses intrinsics to get the best performance out of the software. This was done by a former Intel AVX-512 engineer who now works elsewhere. According to Jim Keller, there are only a couple dozen or so people who understand how to extract the best performance out of a CPU, and this guy is one of them. To keep things honest, AMD also has a copy of the code, but has not proposed any changes.

The 3DPM test is set to output millions of movements per second, rather than time to complete a fixed number of movements.
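Reporting throughput simply means dividing the movements completed by the elapsed time. A minimal harness that runs a kernel six times (the default loop count described above) and averages the per-run scores might look like this; `kernel` is a hypothetical callable that performs some movements and returns how many it did.

```python
import time
from statistics import mean
from typing import Callable


def score(moves: int, seconds: float) -> float:
    """Throughput in millions of movements per second (higher is better)."""
    return moves / seconds / 1e6


def average_score(kernel: Callable[[], int], runs: int = 6) -> float:
    """Time `kernel` `runs` times and return the mean per-run score."""
    scores = []
    for _ in range(runs):
        start = time.perf_counter()
        moves = kernel()                                  # kernel reports movements performed
        elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero-length timing
        scores.append(score(moves, elapsed))
    return mean(scores)


if __name__ == "__main__":
    def dummy_kernel() -> int:
        # Hypothetical stand-in: treat each loop iteration as one "movement".
        n = 100_000
        acc = 0.0
        for i in range(n):
            acc += i * 0.5
        return n

    print(f"{average_score(dummy_kernel):.2f} million movements/s")
```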

In non-AVX mode, having the full 128 threads works best, and TR beats TR Pro because the workload needs very little memory bandwidth, so TR Pro's extra memory channels bring no advantage.

When we move into the peak-performance mode, the Intel chips with AVX512 scream out ahead. The AMD processors still get a rough 2x performance increase with AVX2, but the Intel chips stay in front.

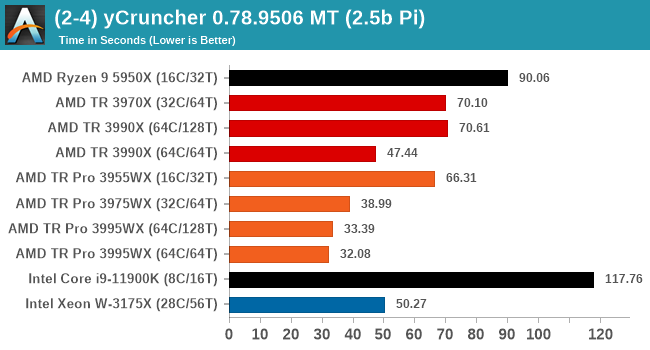

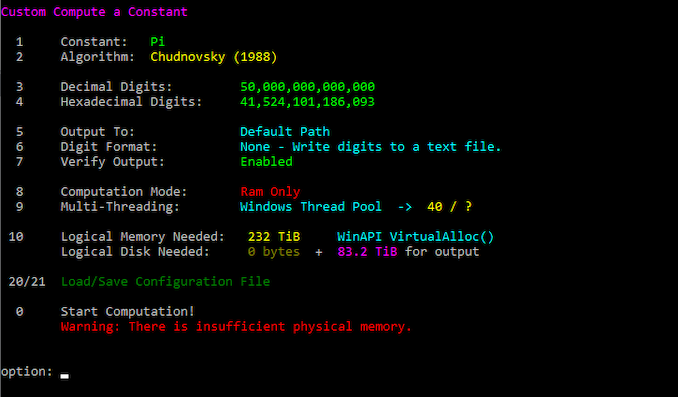

y-Cruncher 0.78.9506: www.numberworld.org/y-cruncher

If you ask anyone what sort of computer holds the world record for calculating the most digits of pi, I can guarantee that a good portion of those answers would point to some colossal supercomputer built into a mountain by a super-villain. Fortunately, nothing could be further from the truth: the computer with the record is a quad-socket Ivy Bridge server with 300 TB of storage. The software that was run to get that was y-cruncher.

Built by Alex Yee over more than a decade, y-Cruncher is the software of choice for calculating billions and trillions of digits of the most popular mathematical constants. The software has held the world record for Pi since August 2010, and has broken the record a total of 7 times since. It also holds records for e, the Golden Ratio, and others. According to Alex, the program runs to around 500,000 lines of code, and he has multiple binaries, each optimized for different families of processors, such as Zen, Ice Lake, Skylake, all the way back to Nehalem, using the latest SSE/AVX2/AVX512 instructions where they fit in, and then further optimized for how each core is built.

For our purposes, we’re calculating Pi, as it is more compute bound than memory bound. In single thread mode we calculate 250 million digits, while in multithreaded mode we go for 2.5 billion digits. That 2.5 billion digit value requires ~12 GB of DRAM, and so is limited to systems with at least 16 GB.
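Record Pi computations with y-cruncher are based on the Chudnovsky series, evaluated with binary splitting so that the work becomes a relatively small number of very large integer multiplications. The toy version below uses only Python's built-in arbitrary-precision integers (and `math.isqrt`, Python 3.8+); it illustrates the formula and is not y-cruncher's implementation, which adds far more sophisticated large-number arithmetic, multithreading and memory management. Each series term adds roughly 14 digits; the function names and the guard-digit count are assumptions of this sketch.

```python
import math
from typing import Tuple

# 640320**3 // 24: the per-term divisor in the Chudnovsky series.
C3_OVER_24 = 10939058860032000


def _split(a: int, b: int) -> Tuple[int, int, int]:
    """Binary splitting over series terms a..b-1, returning the (P, Q, R) integer triple."""
    if b == a + 1:
        p = -(6 * a - 5) * (2 * a - 1) * (6 * a - 1)
        q = C3_OVER_24 * a ** 3
        r = p * (545140134 * a + 13591409)
        return p, q, r
    m = (a + b) // 2
    p1, q1, r1 = _split(a, m)
    p2, q2, r2 = _split(m, b)
    return p1 * p2, q1 * q2, q2 * r1 + p1 * r2


def pi_digits(digits: int) -> str:
    """Return Pi truncated to `digits` decimal places (digits >= 1) as a string."""
    guard = 10                                       # extra digits to absorb rounding error
    scale = 10 ** (digits + guard)
    terms = digits // 14 + 2                         # each term contributes ~14.18 digits
    _, q, r = _split(1, terms)                       # terms k = 1..terms-1; k = 0 is the 13591409*q below
    sqrt_10005 = math.isqrt(10005 * scale * scale)   # floor(sqrt(10005) * scale)
    # pi = 426880 * sqrt(10005) * Q / (13591409 * Q + R)
    pi_scaled = 426880 * sqrt_10005 * q // (13591409 * q + r)
    s = str(pi_scaled // 10 ** guard)
    return s[0] + "." + s[1:]


if __name__ == "__main__":
    print(pi_digits(50))  # 3.14159265358979323846264338327950288419716939937510
```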

In full multithreaded mode, y-Cruncher eats memory bandwidth for breakfast. TR Pro is the clear winner here thanks to its extra memory bandwidth, but bandwidth per thread also matters, so 64C/64T is preferred over 64C/128T.
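The bandwidth-per-thread argument can be made concrete with theoretical peak figures. Assuming DDR4-3200 in both cases, 8 memory channels for TR Pro and 4 for TR, and 8 bytes (64 bits) per transfer per channel, the sketch below computes peak bandwidth and divides it across 64 or 128 threads. Sustained bandwidth in practice is lower than these peaks.

```python
def peak_bandwidth_gbs(channels: int, mts: int, bytes_per_transfer: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (10^9 bytes/s): channels * transfers/s * bytes."""
    return channels * mts * 1e6 * bytes_per_transfer / 1e9


if __name__ == "__main__":
    for name, channels in (("TR Pro (8-channel)", 8), ("TR (4-channel)", 4)):
        total = peak_bandwidth_gbs(channels, 3200)
        for threads in (64, 128):
            print(f"{name}, {threads} threads: {total:.1f} GB/s total, "
                  f"{total / threads:.2f} GB/s per thread")
```

On these peak numbers, TR Pro at 64C/128T leaves each thread the same 1.6 GB/s share as TR at 64C/64T, while TR Pro at 64C/64T doubles it to 3.2 GB/s.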

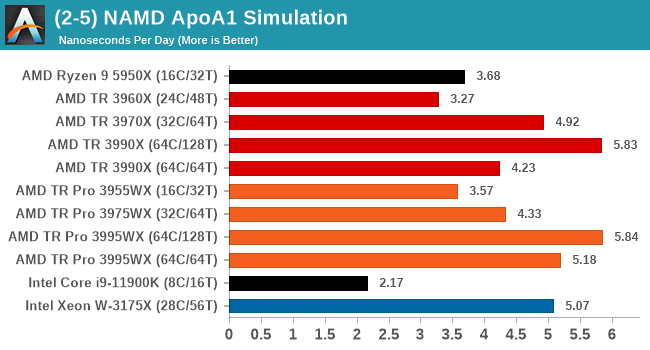

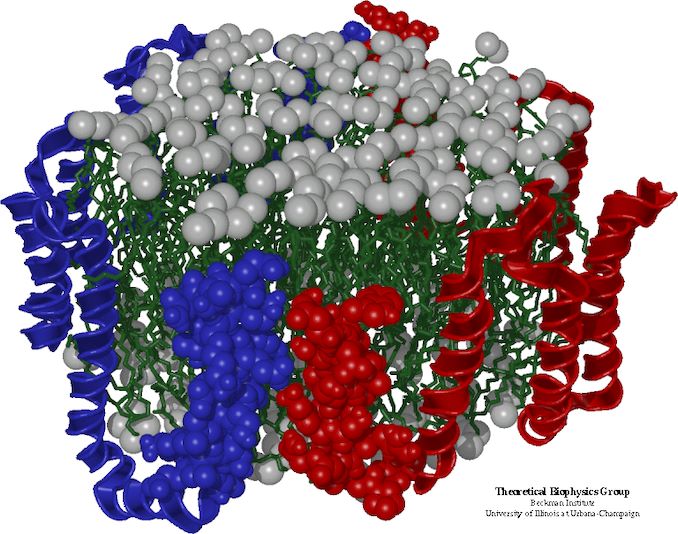

NAMD 2.13 (ApoA1): Molecular Dynamics

One of the popular science fields is modeling the dynamics of proteins. By looking at how the energy of active sites within a large protein structure changes over time, scientists behind the research can calculate required activation energies for potential interactions. This becomes very important in drug discovery. Molecular dynamics also plays a large role in protein folding, and in understanding what happens when proteins misfold, and what can be done to prevent it. Two of the most popular molecular dynamics packages in use today are NAMD and GROMACS.

NAMD, or Nanoscale Molecular Dynamics, has already been used in extensive Coronavirus research on the Frontera supercomputer. Typical simulations using the package are measured in how many nanoseconds per day can be calculated with the given hardware, and the ApoA1 protein (92,224 atoms) has been the standard model for molecular dynamics simulation.

Luckily, the compute can home in on a typical ‘nanoseconds-per-day’ rate after only 60 seconds of simulation; however, we stretch that out to 10 minutes to take a more sustained value, as by that time most turbo limits should be surpassed. The simulation itself works with 2 femtosecond timesteps. We use version 2.13 as this was the recommended version at the time of integrating this benchmark into our suite. The latest nightly builds we’re aware of have started to enable support for AVX-512; however, for consistency in our benchmark suite, we are staying with 2.13. Other software that we test with has AVX-512 acceleration.
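The nanoseconds-per-day metric follows directly from the 2 femtosecond timestep: one simulated nanosecond is 1,000,000 fs, or 500,000 steps. The helper below is only that arithmetic, with a hypothetical input rate in steps per wall-clock second; it is not part of NAMD or of our benchmark harness.

```python
FS_PER_NS = 1_000_000     # 1 ns = 10^6 fs
SECONDS_PER_DAY = 86_400


def steps_per_ns(timestep_fs: float = 2.0) -> float:
    """Number of timesteps needed to simulate one nanosecond."""
    return FS_PER_NS / timestep_fs


def ns_per_day(steps_per_second: float, timestep_fs: float = 2.0) -> float:
    """Simulated nanoseconds per wall-clock day for a given timestep rate."""
    return steps_per_second * timestep_fs * SECONDS_PER_DAY / FS_PER_NS


if __name__ == "__main__":
    print(steps_per_ns())     # 500000.0
    print(ns_per_day(100.0))  # 17.28 (hypothetical 100 steps/s)
```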

NAMD can use all 128 threads, showing 64C/128T to be the better configuration. Interestingly, though, the TR 3990X doesn't do so well here at 64C/64T, while the 3995WX does.

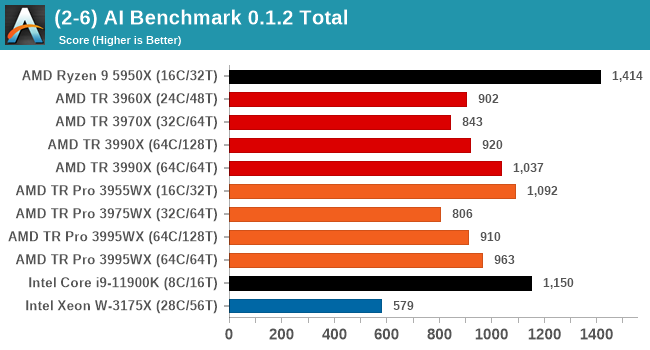

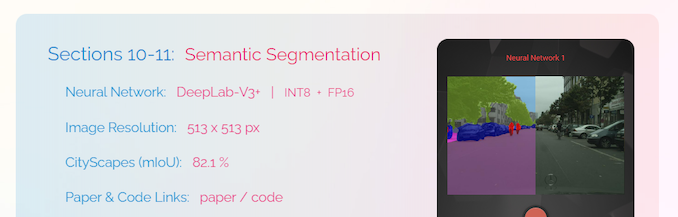

AI Benchmark 0.1.2 using TensorFlow

Finding an appropriate artificial intelligence benchmark for Windows has been a holy grail of mine for quite a while. The problem is that AI is such a fast-moving, fast-paced world that whatever I compute this quarter will no longer be relevant in the next, and one of the key metrics in this benchmarking suite is being able to keep data over a long period of time. We’ve had AI benchmarks on smartphones for a while, given that smartphones are a better target for AI workloads, but it also makes some sense that everything on PC is geared towards Linux as well.

Thankfully, however, the good folks over at ETH Zurich in Switzerland have converted their smartphone AI benchmark into something that’s usable in Windows. It uses TensorFlow, and for our benchmark purposes we’ve locked our testing down to TensorFlow 2.10, AI Benchmark 0.1.2, while using Python 3.7.6.

The benchmark runs through 19 different networks including MobileNet-V2, ResNet-V2, VGG-19 Super-Res, NVIDIA-SPADE, PSPNet, DeepLab, Pixel-RNN, and GNMT-Translation. All the tests probe both inference and training at various input sizes and batch sizes, except translation, which only does inference. It measures the time taken to do a given amount of work, and spits out a value at the end.

There is one big caveat for all of this, however. Speaking with the folks over at ETH, I learned that they use Intel’s Math Kernel Library (MKL) for Windows, and they’re seeing some incredible drawbacks. I was told that MKL for Windows doesn’t play well with multiple threads, and as a result any Windows results are going to perform a lot worse than Linux results. On top of that, after a given number of threads (~16), MKL kind of gives up and performance drops off quite substantially.

So why test it at all? Firstly, because we need an AI benchmark, and a bad one is still better than not having one at all. Secondly, if MKL on Windows is the problem, then by publicizing the test, it might just put a boot somewhere for MKL to get fixed. To that end, we’ll stay with the benchmark as long as it remains feasible.

This benchmark likes high IPC, and the R9 5950X has it in spades.

98 Comments


mode_13h - Saturday, July 17, 2021

Sometimes they do show it. I wonder why not, this time?

One thing to note is how some of the same applications they benchmark in standalone tests are *also* included in SPEC17. So, those tests can get over-represented.

Blastdoor - Wednesday, July 14, 2021

Re:"We are patiently waiting for AMD to launch Threadripper versions with Zen 3 – we hoped it would have been at Computex in June, but now we’re not sure exactly when."

Maybe it will happen when Intel offers something remotely competitive in this market? Or maybe when supply constraints ease and AMD can fully meet demand?

mode_13h - Wednesday, July 14, 2021

Chagall (Threadripper 5000-series) is rumored to launch in August.

Threska - Wednesday, July 14, 2021

As long as AMD sticks to the same socket, the platform should have longevity, just like AM4.

Bernecky - Wednesday, July 14, 2021

Your "AMD Comparison" shows Threadripper DRAM as: 4×DDR4-3200.

This is incorrect: I have a 3970X running on an ASUS ROG Zenith II Extreme Alpha, with 256GB: 8×DDR4-3600 (OC slightly).

The Alpha no longer appears on the ASUS web site. Not sure what happened to it.

JMC2000 - Wednesday, July 14, 2021

The "4×DDR4-3200" is referencing 4 channels at a non-overclocked speed of 3200; what you have is 8 DDR4 DIMMs in 4 channels.

Railgun - Sunday, July 18, 2021

Still here on the UK site: https://www.asus.com/uk/Motherboards-Components/Mo...

Oxford Guy - Wednesday, July 14, 2021

‘The only downside to EPYC is that it can only be used in single socket systems, and the peak memory support is halved (from 4 TB to 2 TB).’

Eh?

I assume you meant TR Pro. A big downside is that it’s Zen 2.

Thanny - Wednesday, July 14, 2021

Ryzen and Threadripper support ECC memory just fine. It's only registered memory that isn't supported, which is why you can only get 128GB into a Ryzen platform and 256GB into a Threadripper platform (32GB is the largest unbuffered DIMM you can get).

The motherboard must also support it, which not all Ryzen motherboards do. But all Threadripper boards support ECC. I'm using 128GB of unbuffered ECC right now with a 3960X.

willis936 - Thursday, July 15, 2021

>Ryzen and Threadripper support ECC memory just fine

A common misconception. Error reporting does not work with any AM4 chipset on non-pro AMD processors. Sure you have ECC, maybe. How do you know the soft error rate isn't massive?