Arm Announces Mobile Armv9 CPU Microarchitectures: Cortex-X2, Cortex-A710 & Cortex-A510

by Andrei Frumusanu on May 25, 2021 9:00 AM EST - Posted in SoCs, CPUs, Arm, Smartphones, Mobile, Cortex, ARMv9, Cortex-X2, Cortex-A710, Cortex-A510

The Cortex-A710: More Performance with More Efficiency

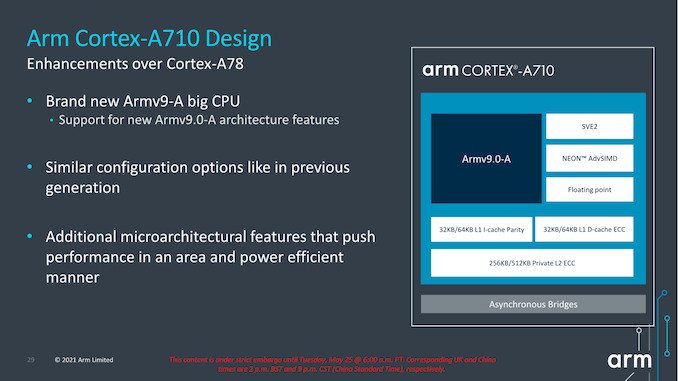

Whereas the Cortex-X2 goes for all-out performance at the cost of power and area, Arm's Cortex-A710 takes a more efficiency-focused approach.

First of all, the new product nomenclature makes Arm's plans going forward self-evident: the company is skipping the A79 designation and starting fresh with a new three-digit scheme, beginning with the A710. It's not very important in the grand scheme of things, but it's an interesting marketing tidbit.

The Cortex-A710, much like the X2, is an Armv9 core with all the new features that come with the new architecture version. Unlike the X2, however, the A710 also supports AArch32 execution at EL0 (user space). As mentioned in the intro, this was mostly a design choice demanded by customers in the Chinese market, where the ecosystem still lags slightly behind in moving all applications over to AArch64.

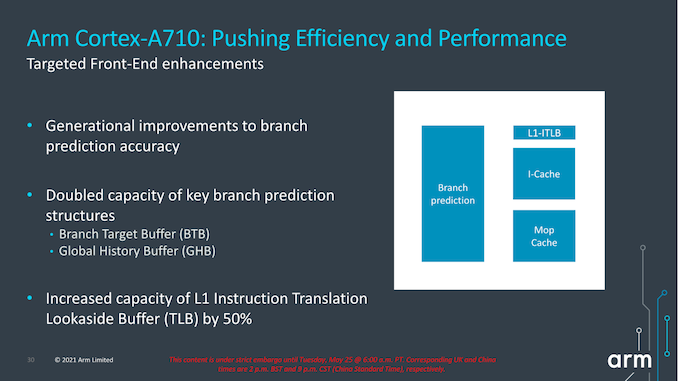

On the front end, we’re seeing the same branch prediction improvements as on the X2, with larger structures as well as better accuracy. The L1I TLB has also grown from 32 to 48 entries. Other front-end structures, such as the macro-OP cache, remain the same size at 1.5K entries (the X2 likewise stays at 3K entries).
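As a rough illustration of what the larger L1I TLB buys, TLB reach is the number of entries multiplied by the page size. Assuming 4 KiB pages throughout (an assumption for illustration only; real workloads may also use larger pages), the instruction-side reach grows by half:

$$
\text{reach} = N_{\text{entries}} \times S_{\text{page}}, \qquad 32 \times 4\,\text{KiB} = 128\,\text{KiB} \;\rightarrow\; 48 \times 4\,\text{KiB} = 192\,\text{KiB} \quad (\times 1.5)
$$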

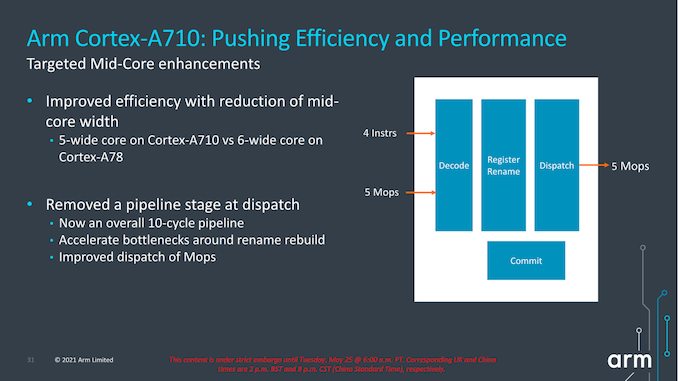

A very interesting choice for the A710 mid-core is that Arm has narrowed the macro-OP cache and dispatch stage throughput from 6-wide to 5-wide. This was mainly a targeted power and efficiency optimization for this generation, as the Cortex-A and Cortex-X cores are diverging more and more in their specializations and in the performance and power targets of their use-cases.
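Put in numbers, narrowing the stage from 6 to 5 operations per cycle lowers the peak front-end bandwidth by one sixth. This is only the ceiling, however: sustained IPC in typical code usually sits well below the dispatch width, so the real-world cost is likely much smaller than this figure suggests (a general observation, not an Arm-published number).

$$
\frac{6 - 5}{6} \approx 16.7\%\ \text{lower peak dispatch throughput per cycle}
$$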

The dispatch stage also features the same optimizations as on the X2, removing one cycle from the pipeline for an overall 10-cycle pipeline design.

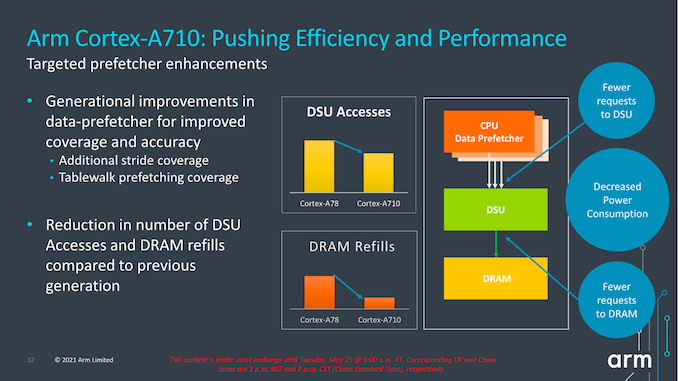

Arm has also worked on core improvements that affect the uncore parts of the system, thanks to the improved prefetcher designs and how they interact with the new DSU-110 (which we’ll cover later). The new combination of core and DSU reduces accesses from the core to the L3 cache, and also cuts down on costly DRAM accesses thanks to the more efficient prefetchers and the larger L3 cache.

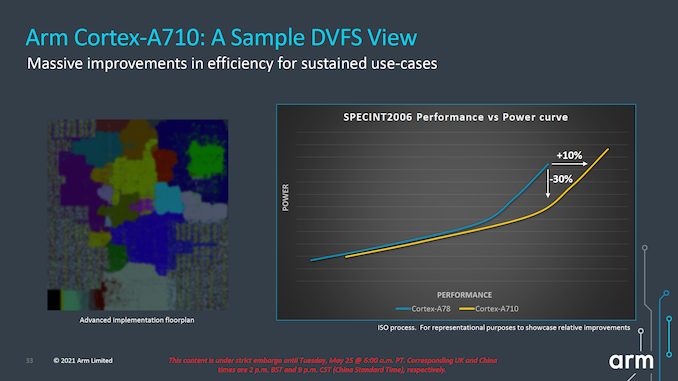

In terms of IPC, Arm advertises +10%, but again the issue with this figure is that it compares a design with an 8MB L3 cache to one with a 4MB L3 cache. While this is a likely comparison for next year's flagship SoCs, the Cortex-A710 will also be used in mid-range and lower-end SoCs, which might use much smaller L3 caches. It's therefore unlikely that we’ll see such IPC improvements in that segment unless those SoCs also increase the L3 cache size of their DSU.

More important than the +10% performance improvement is that when backing off slightly in frequency, the power reduction can be rather large. According to Arm, at iso-performance the A710 consumes up to 30% less power than the Cortex-A78. This would greatly help the sustained performance and power efficiency of more modestly clocked “middle” core implementations of the Cortex-A710.
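Since performance is held constant in Arm's comparison, the power saving translates directly into performance per watt. Taking the "up to 30%" figure at face value gives a best-case estimate of the efficiency gain:

$$
P_{\text{A710}} = (1 - 0.30)\,P_{\text{A78}} = 0.70\,P_{\text{A78}}
\quad\Rightarrow\quad
\frac{(\text{perf}/\text{W})_{\text{A710}}}{(\text{perf}/\text{W})_{\text{A78}}} = \frac{1}{0.70} \approx 1.43
$$

In other words, roughly 43% more work per joule at that operating point, with the caveat that this is Arm's upper bound and holds only at the matched-performance point.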

In general, both the X2’s and the A710’s performance and power figures are quite modest, making them the smallest generation-over-generation gains we’ve seen from Arm in quite a few years. Arm explains that because this generation made larger architectural changes with the move to Armv9, the usual performance and efficiency improvements we’ve seen in prior generations took a hit.

Both the X2 and the A710 are also the fourth generation of the Austin microarchitecture family, so we’re hitting a wall of diminishing returns as the design matures. A few years ago we were under the impression that the Austin family would only last three generations before handing things over to a new clean-sheet design from the Sophia team, but that original roadmap has changed, and we'll now see the new Sophia core, with larger leaps in performance, disclosed next year.

181 Comments


mode_13h - Tuesday, June 1, 2021

> some CPUs did mark the instruction boundaries in the cache.

Not surprising, other than how far back you say it went.

mode_13h - Wednesday, May 26, 2021

All good points. People who think "ISA doesn't matter" don't really understand everything an ISA encompasses.

GeoffreyA - Wednesday, May 26, 2021

The difference that divides them is that CISC can include a memory operation as part of an arithmetic one, whereas in RISC the two are separate (at least, in a load-store architecture).

mode_13h - Wednesday, May 26, 2021

> The difference that divides them is that CISC can include a memory operation

I'm not an expert on the subject, but there are other elements in RISC orthodoxy, concerning things like:

* number of operands (also number of src & dst operands)

* encoding of immediates

* side-effects

I view the whole subject of CISC vs. RISC as something like MMA (Mixed Martial Arts). It turns out that there's no single best classical martial arts style. The most effective fighters use a blend of techniques adopted from various, disparate fighting styles.

GeoffreyA - Thursday, May 27, 2021

> The most effective fighters use a blend of techniques

Absolutely. It's almost a universal principle that the winning design puts together the best elements from competing designs and throws away the junk.

Thala - Tuesday, May 25, 2021

It does not matter that they are RISC-like inside; the issue with x86 CPUs is that they still carry the typical CISC baggage, and no internal RISC-like structure will help them here.

km1810vm4 - Wednesday, May 26, 2021

I would say x86 baggage. There were much nicer CISC architectures around at the time, like the Motorola 68000.

TheinsanegamerN - Wednesday, May 26, 2021

Intel's P5 processors implemented RISC-style micro-ops in their x86 decoding; that's part of why they were so dramatically faster than the 486. It's not like this is hard to find out...

mode_13h - Thursday, May 27, 2021

> Intel's P5 processors implemented RISC-style micro-ops in their x86 decoding

In fact, early CPUs used a lot of microcode, and one of the ways they got faster was by replacing microcoded operations with hardwired logic. This was enabled by ever-increasing transistor budgets.

> that's part of why they were so dramatically faster than the 486.

This feels like a bit of revisionist history. Here are some of the reasons why Pentium was faster than 80486:

https://en.wikipedia.org/wiki/P5_(microarchitectur...

Kamen Rider Blade - Tuesday, May 25, 2021

Android needs to get its developers to stop using Java and use C/C++/Rust for their apps to eke out the maximum performance possible.

Apple's app code base is generally C/C++; that's why they have the performance that they have.

And it's a long-standing, nagging issue that I wish the Android community would solve.

It would give Android a HUGE performance boost to move their Apps over to C/C++/Rust.

Programming languages have already been benchmarked against each other to see which is fastest, and sorry to say it, but the C/C++/Rust family wins in terms of code speed on the same hardware platform.

https://benchmarksgame-team.pages.debian.net/bench...