Dual Core Intel Platform Shootout - NVIDIA nForce4 vs. Intel 955X

by Anand Lal Shimpi on April 14, 2005 1:01 PM EST - Posted in CPUs

Memory Performance

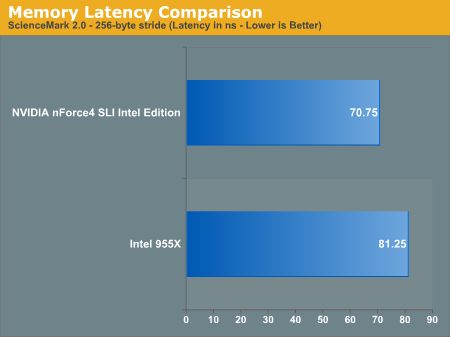

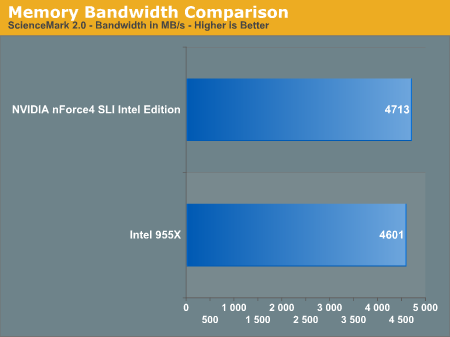

The biggest question on our minds when comparing these two heavyweights was: who has the better memory controller? We turned to the final version of ScienceMark 2.0 for the answer.

Amazingly enough, at the same memory timings, NVIDIA cuts memory latency by around 13%. Keep in mind that this test measures worst-case memory latency; in all of our other memory tests, the nForce4's memory controller was on par with Intel's. Still, any advantage here is impressive, let alone one this large.

NVIDIA's latency reduction and DASP algorithms offer a negligible 2% increase in overall memory bandwidth. While you'd be hard-pressed to find any noticeable examples of these performance improvements, the important thing here is that NVIDIA's memory controller appears to be just as good as, if not faster than, Intel's best. Kudos to NVIDIA - they have at least started off on the right foot with performance.

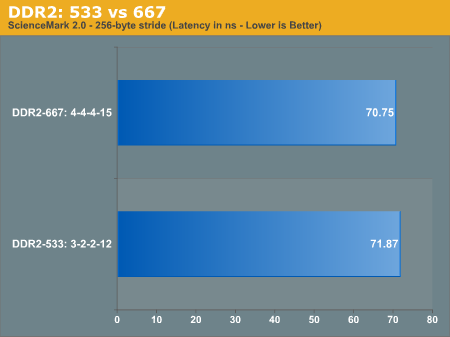

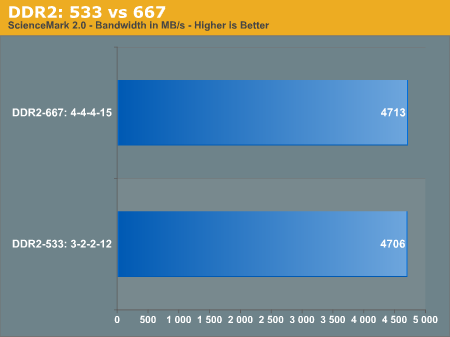

DDR2-667 or 533?

When Intel sent us their 955X platform, they configured it with DDR2-667 memory running at 5-5-5-15 timings. NVIDIA sent their nForce4 SLI Intel Edition board paired with some Corsair DIMMs running at 4-4-4-15 timings at DDR2-667. Given that we have lower-latency DDR2-533 memory, we decided to find out if there was any real performance difference between DDR2-667 at relatively high timings and DDR2-533 at more aggressive timings. Once again, ScienceMark 2.0 is our tool of choice:

Here, we see that even at 3-2-2-12, DDR2-533 isn't actually any faster than DDR2-667.

...and it offers slightly less memory bandwidth.

It looks like there's not much point in worrying about low-latency DDR2-533, as higher-latency DDR2-667 seems to work just as well (if not a little better) on the newest Intel platforms.
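A quick back-of-the-envelope conversion helps show why tighter timings at DDR2-533 don't automatically translate into lower latency. The sketch below is an illustration under stated assumptions, not a model of our results: it assumes DDR2 timings are counted in cycles of the I/O (command) clock, which runs at half the transfer rate (about 333 MHz for DDR2-667 and 267 MHz for DDR2-533), and converts the three timing sets mentioned above into nanoseconds. The helper names `cycle_time_ns` and `timings_ns` are hypothetical, and the figures ignore memory controller and front-side bus overhead, both of which are part of what ScienceMark actually measures.

```python
from fractions import Fraction

# Assumed actual transfer rates in MT/s: "667" and "533" are rounded
# figures for 2000/3 and 1600/3 MT/s respectively.
DATA_RATE_MTS = {
    "DDR2-667": Fraction(2000, 3),
    "DDR2-533": Fraction(1600, 3),
}


def cycle_time_ns(grade: str) -> Fraction:
    """Period of the I/O (command) clock in ns.

    DDR transfers data on both clock edges, so the clock runs at half the
    transfer rate; the period in ns is 1000 / clock_MHz.
    """
    clock_mhz = DATA_RATE_MTS[grade] / 2
    return 1000 / clock_mhz


def timings_ns(grade: str, timings: tuple[int, ...]) -> tuple[float, ...]:
    """Convert tCL-tRCD-tRP-tRAS cycle counts into nanoseconds."""
    period = cycle_time_ns(grade)
    return tuple(float(cycles * period) for cycles in timings)


# The three configurations discussed in the text.
CONFIGS = [
    ("DDR2-667", (5, 5, 5, 15)),  # Intel's 955X test setup
    ("DDR2-667", (4, 4, 4, 15)),  # NVIDIA's Corsair DIMMs
    ("DDR2-533", (3, 2, 2, 12)),  # the lower-latency DDR2-533 kit
]

if __name__ == "__main__":
    for grade, timings in CONFIGS:
        label = "-".join(str(c) for c in timings)
        ns = ", ".join(f"{t:g} ns" for t in timings_ns(grade, timings))
        print(f"{grade} {label}: {ns}")
```

Under these assumptions, 3-2-2-12 at DDR2-533 works out to a CAS latency of 11.25 ns versus 12 ns for 4-4-4-15 at DDR2-667, a gap of less than a nanosecond, while tRAS comes to 45 ns in all three configurations.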

96 Comments


Anand Lal Shimpi - Thursday, April 14, 2005

Whoa, whoa, I'm no deity here, just a normal guy like everyone else. I can make mistakes, and I encourage everyone never to blindly follow what I, or anyone else, says. That being said, Questar, I've got a few things that you may be interested in reading:

1) http://images.anandtech.com/graphs/pentium%204%206...

That graph shows exactly how hot Prescott gets. In fact, until the release of the later 5xxJ series and the 6xx series with EIST, Prescott systems were considerably louder than Athlon 64 systems. "Too hot" may be an opinion, but it's one echoed by the vast majority of readers as well as folks in the industry, who in turn are the ones purchasing and recommending the CPUs, so their opinion matters quite a bit.

2) NVIDIA changed the spelling of their name from nVidia to NVIDIA a few years ago; have a look at NVIDIA's company info page for confirmation: http://www.nvidia.com/page/companyinfo.html

3) I can't go into specifics as to how the Intel/NVIDIA agreement came about, but know that Intel doesn't just strike up broad cross-licensing agreements with companies like NVIDIA so it can make money on NVIDIA's chipsets. The Intel/NVIDIA relationship is far from just a "you can make chipsets for our CPUs" arrangement; it is a cross-licensing agreement where Intel gets access to big chunks of NVIDIA's patent portfolio and NVIDIA gets access to Intel's. That sort of play is not made just to increase revenue. I can't go into much further detail, but I suggest reading up on patent law and how Intel employs it.

4) Also remember that not manufacturing the silicon itself isn't necessarily a cost saver for Intel; a modern-day fab costs around $2.5B, and you make that money back by keeping the fab running as close to capacity as possible.

I think that's it; let me know if I missed something. I apologize for not replying earlier, but I've been extremely strapped for time given next week's impending launch.

Houdani

I haven't played around with all of the multitasking tests, but I'd say that the lighter ones (I/O-wise) have around 8-10 outstanding I/Os. I believe NVIDIA disables NCQ at queue depths below 32, but I don't think Intel does (which is why Intel shows a slight performance advantage in the first set of tests).

Interestingly enough, in the first gaming multitasking scenario, Intel actually ends up being faster than NVIDIA by a couple of percent - I'm guessing because NVIDIA is running with NCQ disabled there.

Take care,

Anand

Questar - Thursday, April 14, 2005

"I can only hope that you are not working for an IT company."

Missed that.

I do not work for an IT company, but I do work in the IT industry.

In 2005 I will purchase 11,500 desktop/notebook systems, and 900-975 servers.

Questar - Thursday, April 14, 2005

"It's pretty clear - Intel's last few products have been worthless in many cases."

Once again you show your own ignorance. Worthless means having no value. If the products were worthless, Intel wouldn't have such a large share of the market.

You will learn about business someday, grasshopper :)

Time for me to go home for the night boys, have a good night!

overclockingoodness - Thursday, April 14, 2005

#31: I agree with you 100 percent.

overclockingoodness - Thursday, April 14, 2005

#29 segagenesis: He isn't going to believe the popular sites because he thinks they are bought out and their editors have no knowledge of the industry. And if you find a smaller site, he still won't believe you because smaller sites know nothing either.

Questar: Do you think you are the only one with industry knowledge? I can only hope that you are not working for an IT company.

segagenesis - Thursday, April 14, 2005

#28 - Just to keep things balanced here: Intel has a large share of the OEM market because it can produce in volume compared to AMD, and most people don't really care what's "Intel Inside" their computer. Just because AMD may have a technologically superior processor doesn't mean it's going to do wonders overnight; just look at Betamax vs. VHS. On the other hand, Intel has the Pentium M, which is a good piece of hardware, yet it is limiting its market penetration with high prices and low production.

overclockingoodness - Thursday, April 14, 2005

#26 QUESTAR: I see you can't handle the proof, eh? After you couldn't come up with a counter-argument, you decided to bash Tom's. Sure, Tom's may not be as in-depth as Anand and they could be biased, but they aren't that blatant about it.

At least Tom's is better than you.

Like I said, why don't you just get lost?

segagenesis - Thursday, April 14, 2005

#26 - What, I can't provide links outside this site because they don't count? Oh wait, this site doesn't "count" either, does it?

Regarding the infamous AMD video: that was a long time ago, and Tom's doing such a video actually made something HAPPEN in the industry. AMD responded and added thermal protection in its newer CPUs. The P4 heat problem is *now*!

overclockingoodness - Thursday, April 14, 2005

#25 QUESTAR: "Let me explain it to you: Intel gets a cut of the money from every chipset nVidia sells. What part of that don't you get?"

Is it better for Intel to get a cut out of NVIDIA's profits or hog the entire market with their own chipsets and take all the profits to themselves? What I don't get is how stupid you are.

"Ummm...yeah right, go right on thinking that."

Yet again, we have a moronic statement from AnandTech's very own dumbass. Maybe Anand should hire you to post stupid comments throughout the site to generate more discussion. Then again, even he would get tired of seeing your stupid comments.

Intel surely doesn't have a chance against AMD with its Prescott CPUs. The only reason Intel is still the number one chipmaker is that it has signed exclusive contracts with Dell and Sony, and there are quite a few people out there who couldn't care less whether they have an Intel or an AMD CPU.

Once again, it's your own ignorance that's blocking your thought process. Neither AMD nor Intel is strong enough to take the other out of the business, but AMD CPUs do perform better than Intel CPUs in many scenarios. This includes both desktop and server CPUs. If you remember the article on AnandTech, Opteron kicked Intel's ass. And with the new Opterons coming soon, you will see the confirmation yourself.

It's pretty clear - Intel's last few products have been worthless in many cases.

segagenesis - Thursday, April 14, 2005

#24 - I don't mind, because I deal with people like him every day. I have used AMD myself for the past 5 years, but I will admit that Intel has held the performance crown lately when it comes to content encoding... however, at a price. I have also preferred AMD for its pricing and gaming performance, where it continues to do fairly well.

Working in labs, maintaining them as well as desktops (I am responsible for 500+ computers), I have noticed that the heat output of the newer P4s is actually noticeable. As I said, a whole room full of them really raises the temperature, and that's with them just sitting there idle. A friend of mine has a 3.8 GHz P4, and that thing is at its thermal limit with an X850 XT PE in the same case. Ouch!