Intel 3rd Gen Xeon Scalable (Ice Lake SP) Review: Generationally Big, Competitively Small

by Andrei Frumusanu on April 6, 2021 11:00 AM EST- Posted in

- Servers

- CPUs

- Intel

- Xeon

- Enterprise

- Xeon Scalable

- Ice Lake-SP

Compiling LLVM, NAMD Performance

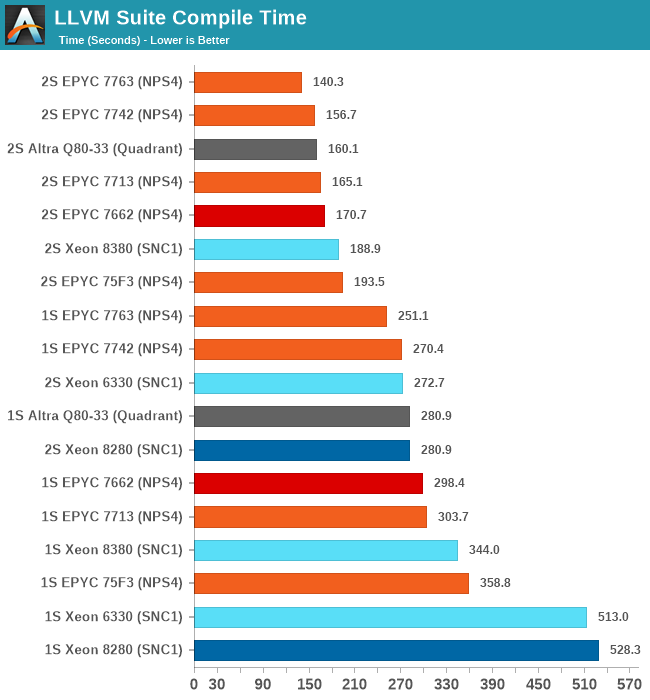

As we’re trying to rebuild our server test suite piece by piece – and there’s still a lot of work go ahead to get a good representative “real world” set of workloads, one more highly desired benchmark amongst readers was a more realistic compilation suite. Chrome and LLVM codebases being the most requested, I landed on LLVM as it’s fairly easy to set up and straightforward.

git clone https://github.com/llvm/llvm-project.gitcd llvm-projectgit checkout release/11.xmkdir ./buildcd ..mkdir llvm-project-tmpfssudo mount -t tmpfs -o size=10G,mode=1777 tmpfs ./llvm-project-tmpfscp -r llvm-project/* llvm-project-tmpfscd ./llvm-project-tmpfs/buildcmake -G Ninja \ -DLLVM_ENABLE_PROJECTS="clang;libcxx;libcxxabi;lldb;compiler-rt;lld" \ -DCMAKE_BUILD_TYPE=Release ../llvmtime cmake --build .We’re using the LLVM 11.0.0 release as the build target version, and we’re compiling Clang, libc++abi, LLDB, Compiler-RT and LLD using GCC 10.2 (self-compiled). To avoid any concerns about I/O we’re building things on a ramdisk. We’re measuring the actual build time and don’t include the configuration phase as usually in the real world that doesn’t happen repeatedly.

Starting off with the Xeon 8380, we’re looking at large generational improvements for the new Ice Lake SP chip. A 33-35% improvement in compile time depending on whether we’re looking at 2S or 1S figures is enough to reposition Intel’s flagship CPU in the rankings by notable amounts, finally no longer lagging behind as drastically as some of the competition.

It’s definitely not sufficient to compete with AMD and Ampere, both showcasing figures that are still 25 and 15% ahead of the Xeon 8380.

The Xeon 6330 is falling in line with where we benchmarked it in previous tests, just slightly edging out the Xeon 8280 (6258R equivalent), meaning we’re seeing minor ISO-core ISO-power generational improvements (again I have to mention that the 6330 is half the price of a 6258R).

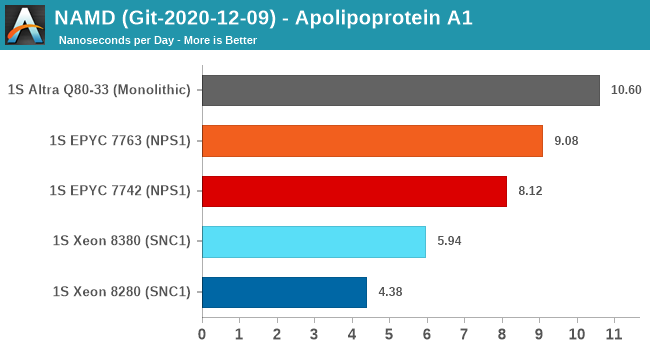

NAMD is a problem-child benchmark due to its recent addition of AVX512: the code had been contributed by Intel engineers – which isn’t exactly an issue in my view. The problem is that this is a new algorithm which has no relation to the normal code-path, which remains not as hand-optimised for AVX2, and further eyebrow raising is that it’s solely compatible with Intel’s ICC and no other compiler. That’s one level too much in terms of questionable status as a benchmark: are we benchmarking it as a general HPC-representative workload, or are we benchmarking it solely for the sake of NAMD and only NAMD performance?

We understand Intel is putting a lot of focus on these kinds of workloads that are hyper-optimised to run well extremely on Intel-only hardware, and it’s a valid optimisation path for many use-cases. I’m just questioning how representative it is of the wider market and workloads.

In any case, the GCC binaries of the test on the ApoA1 protein showcase significant performance uplifts for the Xeon 8380, showcasing a +35.6% gain. Using this apples-to-apples code path, it’s still quite behind the competition which scales the performance much higher thanks to more cores.

169 Comments

View All Comments

ricebunny - Tuesday, April 6, 2021 - link

See it like it this: the benchmark is a racing track, the CPU is a car and the compiler is the driver. If I want to get the best time for each car on a given track I will not have them driven by the same driver. Rather, I will get the best driver for each car. A single driver will repeat the same mistakes in both cars, but one car may be more forgiving than the other.DigitalFreak - Tuesday, April 6, 2021 - link

Is the compiler called The Stig?Wilco1 - Tuesday, April 6, 2021 - link

Then you are comparing drivers and not cars. A good driver can win a race with a slightly slower car. And I know a much faster driver that can beat your best driver. And he will win even with a much slower car. So does the car really matter as long as you have a really good driver?In the real world we compare cars by subjecting them to identical standardized tests rather than having a grandma drive one car and Lewis Hamilton drive another when comparing their performance/efficiency/acceleration/safety etc.

Makste - Wednesday, April 7, 2021 - link

Well saidricebunny - Wednesday, April 7, 2021 - link

Based on the compiler options that Anandtech used, we already have the situation that Intel and AMD CPUs are executing different code for the same benchmark. From there it’s only a small step further to use the best compiler for each CPU.mode_13h - Wednesday, April 7, 2021 - link

So, you're saying make the situation MORE lopsided? Instead, maybe they SHOULD use the same compiled code!mode_13h - Wednesday, April 7, 2021 - link

This is a dumb analogy. CPUs are not like race cars. They're more like family sedans or maybe 18-wheeler semi trucks (in the case of server CPUs). As such, they should be tested the way most people are going to use them.And almost NOBODY is compiling all their software with ICC. I almost never even hear about ICC, any more.

I'm even working with an Intel applications engineer on a CPU performance problem, and even HE doesn't tell me to build their own Intel-developed software with ICC!

KurtL - Wednesday, April 7, 2021 - link

Using identical compilers is the most unfair option there is to compare CPUs. Hardware and software on a modern system is tightly connected so it only makes sense to use those compilers on each platform that also are best optimised for that particular platform. Using a compiler that is underdeveloped for one platform is what makes an unfair comparison.Makste - Wednesday, April 7, 2021 - link

I think that using one unoptimized compiler for both is the best way to judge their performance. Such a compiler rules out bias and concentrates on pure hardware capabilitiesricebunny - Wednesday, April 7, 2021 - link

You do realize that even the same gcc compiler with the settings that Anandtech used will generate different machine code for Intel and AMD architectures, let alone for ARM? To really make it "apples-to-apples" on Linux x86 they should've used "--with-tune=generic" option: then both CPUs will execute the exact same code.But personally, I would prefer that they generated several binaries for each test, built them with optimal settings for each of the commonly used compilers: gcc, icc, aocc on Linux and perhaps even msvc on Windows. It's a lot more work I know, but I would appreciate it :)