Intel Core i7-11700K Review: Blasting Off with Rocket Lake

by Dr. Ian Cutress on March 5, 2021 4:30 PM EST

Posted in: CPUs, Intel, 14nm, Xe-LP, Rocket Lake, Cypress Cove, i7-11700K

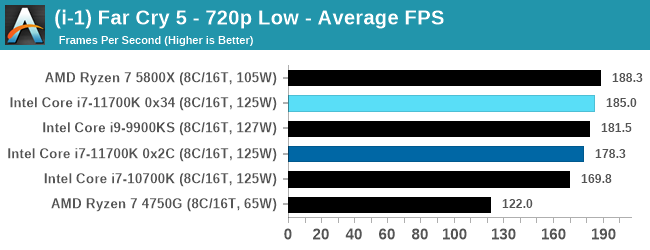

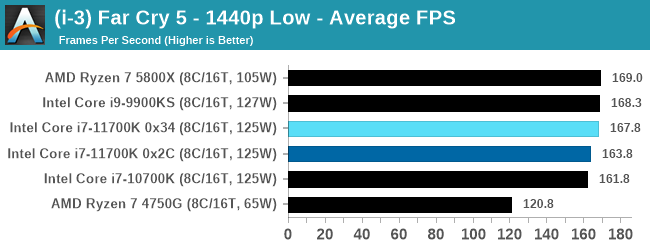

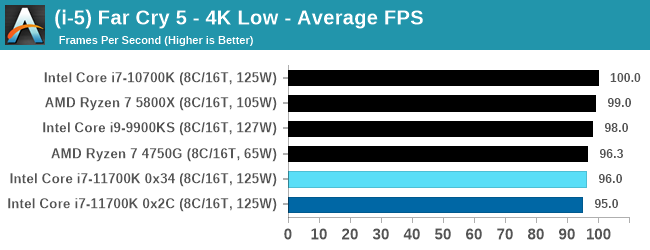

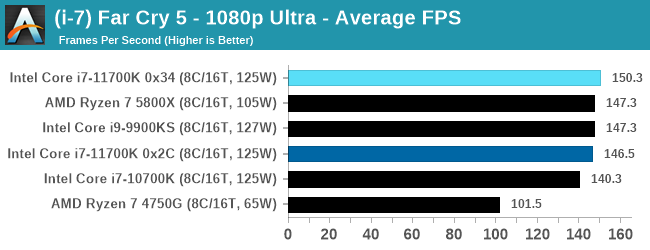

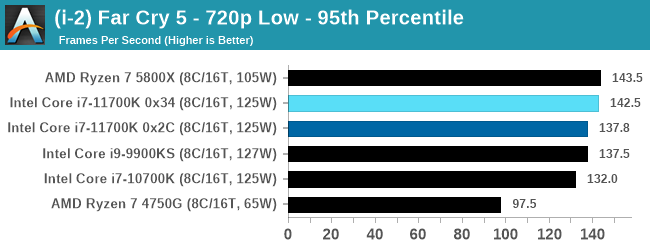

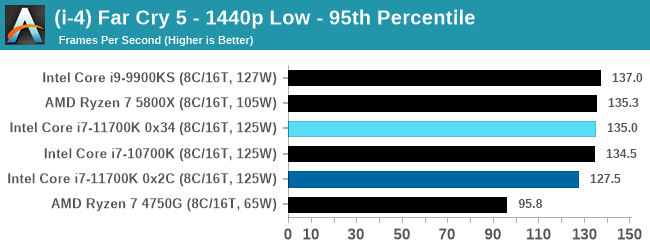

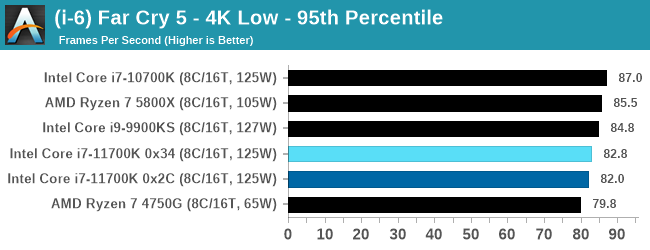

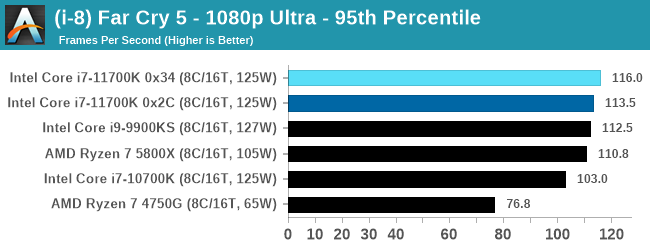

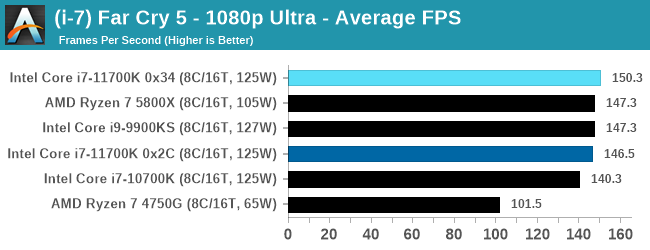

Gaming Tests: Far Cry 5

The fifth title in Ubisoft's Far Cry series lands us right into the unwelcoming arms of an armed militant cult in Montana, one of the many middles-of-nowhere in the United States. With a charismatic and enigmatic adversary, gorgeous landscapes of the northwestern American flavor, and lots of violence, it is classic Far Cry fare. Graphically intensive in an open-world environment, the game mixes in action and exploration with a lot of configurability.

Unfortunately, the game doesn’t like us changing the resolution in the results file when using certain monitors, resorting to 1080p but keeping the quality settings. But resolution scaling does work, so we decided to fix the resolution at 1080p and use a variety of different scaling factors to give the following:

- 720p Low, 1440p Low, 4K Low, 1440p Max.
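
As a rough illustration of the arithmetic, the sketch below works out the scaling factor for each target, assuming the factor is a per-axis multiplier on the fixed 1080p output. That definition is an assumption, as is the helper name `scale_factor`; the game's own setting may be expressed differently.

```python
BASE_HEIGHT = 1080  # fixed output resolution used for the test

def scale_factor(target_height: int, base_height: int = BASE_HEIGHT) -> float:
    """Per-axis factor needed to render at target_height (assumed definition)."""
    return target_height / base_height

# Vertical resolutions of the three render targets named above.
targets = {"720p": 720, "1440p": 1440, "4K": 2160}
factors = {name: round(scale_factor(h), 3) for name, h in targets.items()}
print(factors)  # {'720p': 0.667, '1440p': 1.333, '4K': 2.0}
```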

Far Cry 5 outputs a results file, but it is an HTML file that showcases a graph of the detected FPS. At no point does the HTML file contain the frame times for each frame, but it does show the frames per second, as one value per second in the graph. The graph in HTML form is a series of (x,y) co-ordinates scaled to the min/max of the graph, rather than the raw (second, FPS) data, and so using regex I carefully tease out the values of the graph, convert them into a (second, FPS) format, and take our values of averages and percentiles that way.
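
A minimal sketch of that kind of extraction is shown below. It is not the actual script used for these results. It assumes the graph is stored as an SVG-style `points="x,y x,y ..."` attribute in pixel co-ordinates with y growing downward, and that the axis ranges are known; the sample markup, function names, and axis values are all hypothetical. The "95th percentile" here follows one common convention: the FPS that 95% of the per-second samples meet or exceed.

```python
import math
import re
import statistics

# Hypothetical fragment; the real results file may use different markup.
SAMPLE_HTML = '<polyline points="0,100 50,50 100,0 150,50 200,100"/>'

POINT_RE = re.compile(r"(-?\d+(?:\.\d+)?),(-?\d+(?:\.\d+)?)")

def extract_points(html: str) -> list[tuple[float, float]]:
    """Pull every 'x,y' pair out of the first points="..." attribute."""
    match = re.search(r'points="([^"]*)"', html)
    if match is None:
        return []
    return [(float(x), float(y)) for x, y in POINT_RE.findall(match.group(1))]

def to_second_fps(points, width, height, duration_s, fps_min, fps_max):
    """Rescale pixel co-ordinates to (second, FPS) using the assumed axis ranges."""
    samples = []
    for x, y in points:
        second = x / width * duration_s
        # y = 0 is the top of the graph (fps_max), y = height is the bottom (fps_min).
        fps = fps_max - (y / height) * (fps_max - fps_min)
        samples.append((second, fps))
    return samples

def summarize(samples):
    """Return (average FPS, FPS met or exceeded by 95% of samples), nearest-rank."""
    if not samples:
        raise ValueError("no samples extracted")
    fps = sorted(f for _, f in samples)
    average = statistics.fmean(fps)
    rank = max(1, math.ceil(0.05 * len(fps)))
    return average, fps[rank - 1]

samples = to_second_fps(extract_points(SAMPLE_HTML), width=200, height=100,
                        duration_s=4, fps_min=40, fps_max=120)
print(summarize(samples))  # (72.0, 40.0)
```

Because the file only exposes one FPS value per second, any percentile computed this way is a percentile of per-second averages rather than of individual frame times.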

If anyone from Ubisoft wants to chat about building a benchmark platform that would help not only me but also every other member of the tech press build our benchmark testing, and in turn help our readers decide what is the best hardware to use for your games, please reach out to ian@anandtech.com. Some of the suggestions I want to give you will take less than half a day, and it’s easily free advertising to have the benchmark in use over the next couple of years (or more).

As with the other gaming tests, we run each resolution/setting combination for a minimum of 10 minutes and take the relevant frame data for averages and percentiles.

| AnandTech | Low Resolution Low Quality | Medium Resolution Low Quality | High Resolution Low Quality | Medium Resolution Max Quality |
|---|---|---|---|---|
| Average FPS | | | | |
| 95th Percentile | | | | |

All of our benchmark results can also be found in our benchmark engine, Bench.

541 Comments

Franseven - Monday, March 8, 2021 - link

I know is a strange request, but i would like to know the iris integrated graphics benchmarks since i'm using my old 2080 ti for mining and i'm playing Minecraft and simple games with my integrated uhd630 of my 9700k, and unfortunately 5900x does not have integrated graphics, so i would like to know 11700k and 11900k perf with that, i have seen mobile benchmarks but as you know, is not the same thing, would like to see quality gaming benchmark as always, from you. thanks

kmmatney - Monday, March 8, 2021 - link

Would also be interested in this. I sold my 2070 Super - I owned it for a year, and sold it for what I paid (so free card for a year). The idea was to buy a 30X0 card with that money. That didn't happen, so lately I've just been playing Minecraft and older games on an old GTX 460. I'm curious about how the Xe graphics compares - with current prices on Ebay, the graphics alone can add about $60 worth of value to the cpu.

terroradagio - Monday, March 8, 2021 - link

ASUS just released another BIOS update with Rocket Lake enhancements. Probably more to come closer to the release too. This is why you don't post your review 3 weeks early.

Everett F Sargent - Monday, March 8, 2021 - link

Like maybe an AVX-512 down clocking offset? Either Intel released their Rocket Engine a quarter too early or no amount of BIOS tweaking can do what you think it can do, at this, or any, point in time.

From this review "Looking at our data, the all-core turbo under AVX-512 is 4.6 GHz, sometimes dipping to 4.5 GHz. Ouch. ... Our temperature graph looks quite drastic. Within a second of running AVX-512 code, we are in the high 90ºC, or in some cases, 100ºC. Our temperatures peak at 104ºC ... "

So already thermal throttling at Intel's promised 4.6 all core frequency using AVX-512. Makes you wonder what it takes to significantly OC this CPU. Which, you know, has barely been mentioned here in the comments section, OC'ing the damn thing, north or south of 300W or ~300W ...

https://i.imgur.com/8BEsGVo.png

terroradagio - Tuesday, March 9, 2021 - link

I guess you missed also the spot where normal AVX used less power than the 9900k. The vast majority don't care about AVX-512. It is just there so Intel can say it is. People who buy Rocket Lake will be interested because of gaming and there will probably be more stock than 7nm products from AMD.

Qasar - Tuesday, March 9, 2021 - link

wow. really ? one test ( of a few) where intel was faster, and used less power ? big deal. over all rocket lake, looks to be a joke.

" People who buy Rocket Lake will be interested because of gaming " wrong, i know a few people who are not even looking at intel, and are just waiting for zen 3 to be available, and this is for gaming and non gaming usage.

terroradagio - Tuesday, March 9, 2021 - link

I pointed out facts, and you are cherry picking one very selective AVX 512 test. Go away fanboy.

Qasar - Tuesday, March 9, 2021 - link

like you your self have been doing ? and showing how much you love intel?

hello pot meet kettle.

Everett F Sargent - Tuesday, March 9, 2021 - link

Yes, a 5.0GHz (all core boost clock) at 231.49W for the i9-9900KS versus a 4.6GHz (all core boost clock) at 224.56W for the i7-11700K. Conclusion? The i7-11700K runs 20-25W higher at the same all core boost frequency (4.6-5.0GHz). The i7-11700K wins at test duration though (by a similar margin as the inverse of the power ratio). The CPU energy used is about the same for both.

amanpatel - Monday, March 8, 2021 - link

Few questions:

1) Why is apple silicon or ARM equivalents not part of the benchmarks?

2) Why are so many CPU benchmarks needed, especially if they don't tell anything significant about them.

3) I'm not a huge gamer, but I also don't understand the point of so many gaming benchmarks for a CPU review.

Perhaps I'm the wrong audience member here, but it does seem a whole lot of charts that roughly say the same thing!