AMD 3rd Gen EPYC Milan Review: A Peak vs Per Core Performance Balance

by Dr. Ian Cutress & Andrei Frumusanu on March 15, 2021 11:00 AM EST

Disclaimer June 25th: The benchmark figures in this review have been superseded by our second follow-up Milan review article, where we observe improved performance figures on a production platform compared to AMD’s reference system in this piece.

Compiling LLVM, NAMD Performance

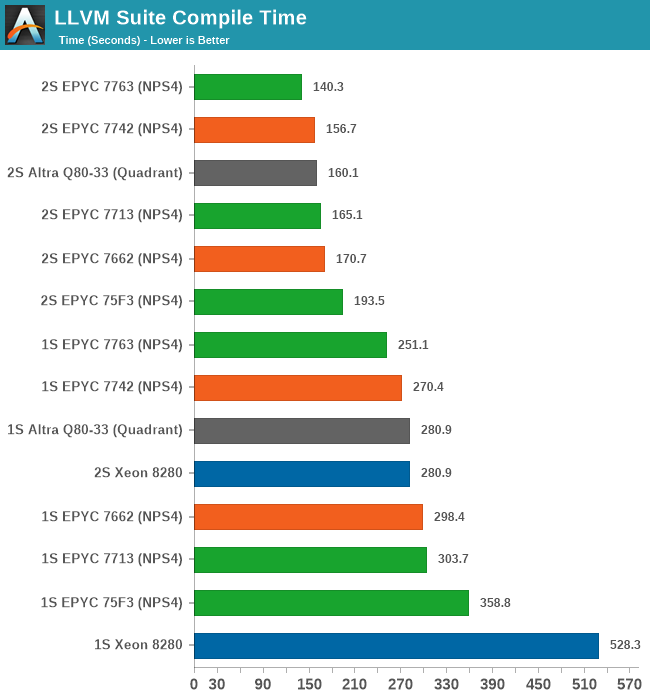

As we’re trying to rebuild our server test suite piece by piece (and there’s still a lot of work ahead to get a good, representative set of “real world” workloads), one of the benchmarks most requested by readers was a more realistic compilation suite. With the Chrome and LLVM codebases being the most requested, I landed on LLVM as it’s fairly easy to set up and straightforward.

    git clone https://github.com/llvm/llvm-project.git
    cd llvm-project
    git checkout release/11.x
    mkdir ./build
    cd ..
    mkdir llvm-project-tmpfs
    sudo mount -t tmpfs -o size=10G,mode=1777 tmpfs ./llvm-project-tmpfs
    cp -r llvm-project/* llvm-project-tmpfs
    cd ./llvm-project-tmpfs/build
    cmake -G Ninja \
      -DLLVM_ENABLE_PROJECTS="clang;libcxx;libcxxabi;lldb;compiler-rt;lld" \
      -DCMAKE_BUILD_TYPE=Release ../llvm
    time cmake --build .

We’re using the LLVM 11.0.0 release as the build target version, and we’re compiling Clang, libc++abi, LLDB, Compiler-RT and LLD using GCC 10.2 (self-compiled). To avoid any concerns about I/O we’re building things on a ramdisk. We’re measuring the actual build time and don’t include the configuration phase as usually in the real world that doesn’t happen repeatedly.
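As a minimal sketch of keeping the configuration and build phases apart when timing, a small wrapper can report the wall-clock time of each phase on its own. The helper name `time_phase` is hypothetical and not part of our methodology; it assumes a POSIX-style shell and a `date` that supports `+%s`, and it only resolves whole seconds.

```shell
# Hypothetical helper: run a command and print its wall-clock time in whole seconds.
# The function body is a subshell, so its variables do not leak into the caller.
time_phase() (
    label=$1
    shift
    start=$(date +%s)
    # Propagate the command's failure status instead of printing a bogus time.
    "$@" || exit
    end=$(date +%s)
    echo "${label}: $((end - start)) s"
)

# Possible usage from the build directory (only the second figure would be reported):
#   time_phase configure cmake -G Ninja -DCMAKE_BUILD_TYPE=Release ../llvm
#   time_phase build cmake --build .
```

Only the `build` figure would correspond to the number we report; the `configure` figure is there to confirm that it stays out of the measurement.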

For the new Milan chips, the results are a bit mixed. The higher-power 7763 takes the lead with a +10.5% improvement over the 7742; however, the 7713 doesn’t manage to keep up with that predecessor.

The 1S vs 2S scores are interesting as the 2S figures showcase the new Milan chips in a better light due to the higher single-threaded performance of the Zen3 cores. The compilation here also has linking phases which are bottlenecked by single-thread performance. This results in scenarios such as the 7713 losing to the 7662 in the 1S comparison while winning out against the same chip in the 2S comparison, where it’s able to make that advantage count for more.
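One way to reason about this effect is Amdahl’s law, offered here as a rough model rather than something we measured. Assume a fraction $p$ of the build parallelises perfectly across $n$ cores, while the remainder (such as linking) runs on a single thread. The speedup over a single core is then:

$$
S(n) = \frac{1}{(1 - p) + \dfrac{p}{n}}
$$

and the share of total build time spent in the serial part is:

$$
\frac{1 - p}{(1 - p) + \dfrac{p}{n}}
$$

With a purely hypothetical $p = 0.99$ (the real value for the LLVM build is not known here), the serial share is about 39% at $n = 64$ and about 56% at $n = 128$. As the parallel portion shrinks with more cores, the single-threaded phases make up more of the total, so per-core speed weighs more heavily in the 2S result.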

It’s also great to see the 75F3 keep up with the 64-core counterparts at around 72% of the top-SKU performance.

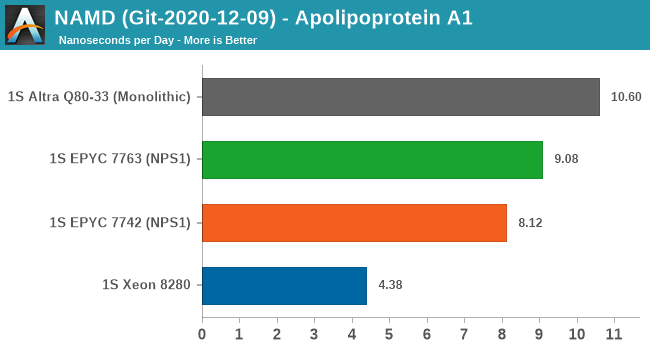

Finally, NAMD is more of a core-local compute workload. We see the 7763 outperform the 7742 by +11.8%; however, the Milan chip is still outperformed by the higher core compute capacity of the 80-core Altra chip.

Generally, I have my reservations about NAMD as a benchmark due to its multicore vs MPI variants and scaling anomalies, on top of the whole topic of the benchmark having a completely different algorithm for AVX512 processors.

120 Comments


lejeczek - Monday, March 15, 2021 - link

But those Altra Q80-33 ... gee guys. I have been thinking for a while - next upgrade of the stack in the rack might as well be...

mode_13h - Monday, March 15, 2021 - link

Well, if it does well on the benchmarks that align with your workload, then I'd certainly consider at least a single-CPU Altra. IIRC, the multi-CPU interconnect was one of its weak points. You could even go dual-CPU, if you're provisioning VMs that fit on a single CPU (or better yet, just one quadrant).

Pinn - Monday, March 15, 2021 - link

When does this filter to the Threadrippers?

mode_13h - Monday, March 15, 2021 - link

Probably either when demand for the 3000-series Threadrippers starts slipping or if/when the supply of top-binned Zen3 dies ever catches up.

It would be interesting to see what performance could be extracted from these CPUs, if AMD would raise the power/thermal limit another 100 W. Maybe the 5000-series TR Pro will be our chance to find out!

mode_13h - Monday, March 15, 2021 - link

Someone please remind me why Altra's memory performance is so much stronger. Is it simply down to avoiding the cache write-miss penalty? I'm pretty sure x86 CPUs long-ago added store buffers to fix that, but I can't think of any other explanation for that incredible stream benchmark discrepancy!

Andrei Frumusanu - Monday, March 15, 2021 - link

It's due to the Neoverse N1 cores being able to dynamically transform arbitrary memory writes into non-temporal write streams instead of doing regular RFO before a write as the x86 systems are currently doing. I explain it more in the Altra review: https://www.anandtech.com/show/16315/the-ampere-al...

mode_13h - Monday, March 15, 2021 - link

That's more or less what I recall, but do you know it's *truly* emitting non-temporal stores? Those partially-bypass some or all of the cache hierarchy (I seem to recall that the Pentium 4 actually just restricted them to one set of L2 cache). It would seem to me that implausibly deep analysis would be needed for the CPU to determine that the core in question wouldn't access the data before it was replaced. And that's not even to speak of determining whether code running on *other* cores might need it.

On the other hand, if it simply has enough write-buffering, it could avoid fetching the target cacheline by accumulating enough adjacent stores to determine that the entire cacheline would be overwritten. Of course, the downside would be a tiny bit more write latency, and memory-ordering constraints (esp. for x86) might mean that it'd only work for groups of consecutive stores to consecutive addresses.

I guess a way to eliminate some of those restrictions would be to observe through analysis of the instruction stream that a group of stores would overwrite the cacheline and then issue an allocation instead of a fetch. Maybe that's what Altra is doing?

Andrei Frumusanu - Tuesday, March 16, 2021 - link

You're over-complicating things. The core simply sees a stream pattern and switches over to nontemporal writes. They can fully saturate the memory controller when doing just pure write patterns.

mode_13h - Wednesday, March 17, 2021 - link

But, do you know they're truly non-temporal writes? As I've tried to explain, there are ways to avoid the write-miss penalty without using true non-temporal writes.

And how much of that are you inferring vs. basing this on what you've been told from official or unofficial sources?

Andrei Frumusanu - Saturday, March 20, 2021 - link

It's 100% non-temporal writes, confirmed by both hardware tests and architects.