AMD Ryzen 9 5980HS Cezanne Review: Ryzen 5000 Mobile Tested

by Dr. Ian Cutress on January 26, 2021 9:00 AM EST

Posted in: CPUs, AMD, Vega, Ryzen, Zen 3, Renoir, Notebook, Ryzen 9 5980HS, Ryzen 5000 Mobile, Cezanne

CPU Tests: Rendering

Rendering tests, compared to others, are often a little simpler to digest and automate. All the tests put out some sort of score or time, usually in a form that is fairly easy to extract. These tests are some of the most strenuous in our list, due to the highly threaded nature of rendering and ray-tracing, and can draw a lot of power. If a system is not properly configured to deal with the thermal requirements of the processor, the rendering benchmarks are where it would show most easily, as the frequency drops over a sustained period of time. Most benchmarks in this case are re-run several times, and the key to this is having an appropriate idle/wait time between benchmarks to allow temperatures to normalize from the last test.

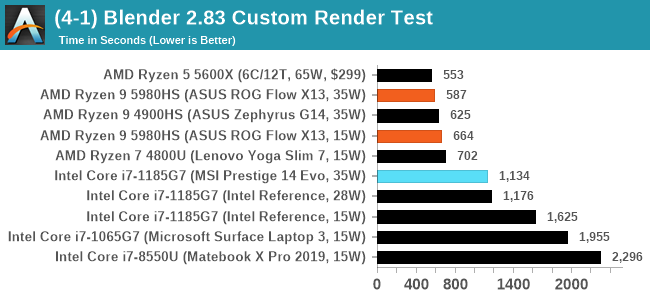

Blender 2.83 LTS: Link

One of the popular tools for rendering is Blender, with it being a public open source project that anyone in the animation industry can get involved in. This extends to conferences, use in films and VR, with a dedicated Blender Institute, and everything you might expect from a professional software package (except perhaps a professional grade support package). With it being open-source, studios can customize it in as many ways as they need to get the results they require. It ends up being a big optimization target for both Intel and AMD in this regard.

For benchmarking purposes, we fell back to rendering a single frame from a detailed project. Most reviews, as we have done in the past, focus on one of the classic Blender renders, known as BMW_27. It can take anywhere from a few minutes to almost an hour on a regular system. However, now that Blender has moved onto a Long Term Support (LTS) model with the latest 2.83 release, we decided to go for something different.

We use this scene, called PartyTug at 6AM by Ian Hubert, which is the official image of Blender 2.83. It is 44.3 MB in size, and uses some of the more modern compute properties of Blender. Although it is more complex than the BMW scene, it uses different aspects of the compute model, so the time to process is roughly similar to before. We loop the scene for at least 10 minutes, taking the average time of the completed runs. Blender offers a command-line tool for batch commands, and we redirect the output into a text file.
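The sketch below illustrates one way a timed loop of this kind could be written. It is not the script used for these results: `render_once` is a hypothetical callable standing in for a single command-line render, and the clock is injectable purely so the logic can be checked without waiting ten minutes.

```python
import time


def loop_for_duration(render_once, min_seconds=600.0, clock=time.perf_counter):
    """Call render_once() repeatedly until at least min_seconds have elapsed.

    Returns (average_seconds_per_run, run_count). render_once is a
    hypothetical stand-in for launching one command-line render.
    """
    durations = []
    start = clock()
    while True:
        run_start = clock()
        render_once()
        now = clock()
        durations.append(now - run_start)
        # Stop only once a full run has completed past the time budget,
        # so a partial render never enters the average.
        if now - start >= min_seconds:
            break
    return sum(durations) / len(durations), len(durations)
```

In a real harness, `render_once` would typically wrap a `subprocess.run` call to the renderer's batch mode; the exact arguments depend on the scene and are not specified here.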

Intel loses out here due to core count, but AMD shows a small but not inconsequential uplift in performance generation-on-generation.

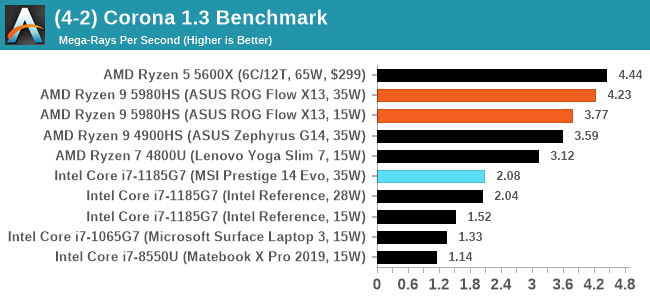

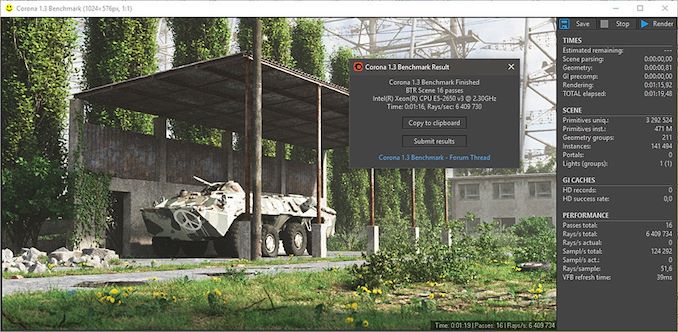

Corona 1.3: Link

Corona is billed as a popular high-performance photorealistic rendering engine for 3ds Max, with development for Cinema 4D support as well. In order to promote the software, the developers produced a downloadable benchmark on the 1.3 version of the software, with a ray-traced scene involving a military vehicle and a lot of foliage. The software does multiple passes, calculating the scene, geometry, preconditioning and rendering, with performance measured in the time to finish the benchmark (the official metric used on their website) or in rays per second (the metric we use to offer a more linear scale).
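To see why a rate gives a more linear scale than a completion time, consider the conversion below. It is a sketch only: `TOTAL_RAYS` is a made-up constant chosen for illustration, not the benchmark's real ray count.

```python
# Hypothetical workload size; the benchmark's real ray count is not stated here.
TOTAL_RAYS = 1_000_000_000


def rays_per_second(elapsed_seconds, total_rays=TOTAL_RAYS):
    """Convert a completion time into a throughput figure."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return total_rays / elapsed_seconds


# Each doubling in speed halves the time, so the seconds saved shrink
# (400 s, then 200 s, then 100 s) while the rate doubles at every step.
for seconds in (800, 400, 200, 100):
    print(seconds, rays_per_second(seconds))
```

On a time axis, fast processors bunch together near zero; on a rate axis, a chip that is twice as fast gets a bar twice as long.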

The standard benchmark provided by Corona is interface driven: the scene is calculated and displayed in front of the user, with the ability to upload the result to their online database. We got in contact with the developers, who provided us with a non-interface version that allowed for command-line entry and retrieval of the results very easily. We loop around the benchmark five times, waiting 60 seconds between each, and taking an overall average. The time to run this benchmark can be around 10 minutes on a Core i9, up to over an hour on a quad-core 2014 AMD processor or dual-core Pentium.
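A run-and-cool-down loop of this shape can be sketched as follows. This is an illustration under assumptions rather than the actual automation: `run_once` is a hypothetical callable that launches the benchmark and returns one result, and `sleep` is injectable so the loop can be tested without real delays.

```python
import time
from statistics import mean


def run_with_cooldown(run_once, runs=5, cooldown_seconds=60.0, sleep=time.sleep):
    """Run run_once() `runs` times, idling between runs, and return the mean.

    run_once is a hypothetical callable returning one result
    (for example, rays per second).
    """
    results = []
    for i in range(runs):
        results.append(run_once())
        # Idle between runs so temperatures can settle; skip after the last.
        if i < runs - 1:
            sleep(cooldown_seconds)
    return mean(results)
```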

Corona shows a big uplift for Cezanne compared to Renoir.

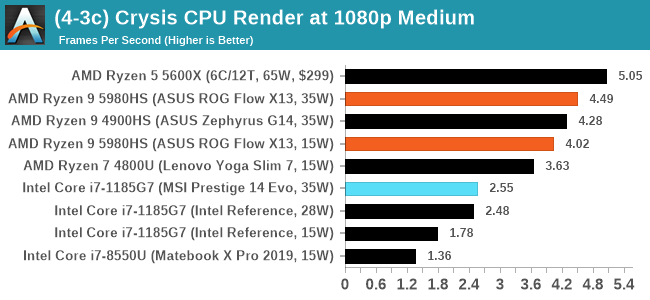

Crysis CPU-Only Gameplay

One of the most oft-used memes in computer gaming is ‘Can It Run Crysis?’. The original 2007 game, built by Crytek on its CryEngine, was heralded as a computationally complex title for the hardware at the time and several years after, suggesting that a user needed graphics hardware from the future in order to run it. Fast forward over a decade, and the game runs fairly easily on modern GPUs.

But can we also apply the same concept to pure CPU rendering? Can a CPU, on its own, render Crysis? Since 64 core processors entered the market, one can dream. So we built a benchmark to see whether the hardware can.

For this test, we’re running Crysis’ own GPU benchmark, but in CPU render mode.

At these resolutions we're seeing a small uplift for Cezanne. We spotted a performance issue when running our 320x200 test where Cezanne scores relatively low (20 FPS vs Renoir at 30 FPS), and so we're investigating that performance issue.

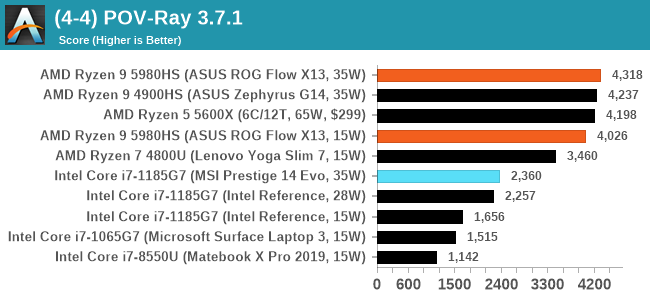

POV-Ray 3.7.1: Link

A long-time benchmark staple, POV-Ray is another rendering program that is well known to load up every single thread in a system, regardless of cache and memory levels. After a long period of POV-Ray 3.7 being the latest official release, when AMD launched Ryzen the POV-Ray codebase suddenly saw a range of activity from both AMD and Intel, knowing that the software (with the built-in benchmark) would be an optimization tool for the hardware.

We had to stick a flag in the sand when it came to selecting the version that was fair to both AMD and Intel, and still relevant to end-users. Version 3.7.1 fixes a significant bug in the early 2017 code, a write-after-read pattern that both the Intel and AMD manuals advise against, leading to a nice performance boost.

The benchmark can take over 20 minutes on a slow system with few cores, or around a minute or two on a fast system, or seconds with a dual high-core count EPYC. Because POV-Ray draws a large amount of power and current, it is important to make sure the cooling is sufficient here and the system stays in its high-power state. Using a motherboard with poor power delivery and low airflow could create an issue that won’t be obvious in some CPU positioning if the power limit only causes a 100 MHz drop as it changes P-states.

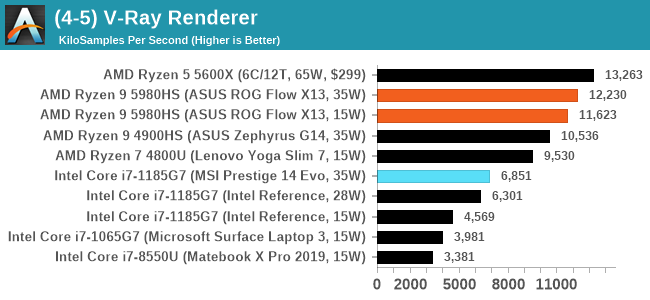

V-Ray: Link

We have a couple of renderers and ray tracers in our suite already, however V-Ray’s benchmark was requested often enough for us to roll it into our suite. Built by Chaos Group, V-Ray is a 3D rendering package compatible with a number of popular commercial imaging applications, such as 3ds Max, Maya, Unreal, Cinema 4D, and Blender.

We run the standard standalone benchmark application, but in an automated fashion to pull out the result in the form of kilosamples/second. We run the test six times and take an average of the valid results.
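Averaging only the valid runs can be sketched as below. The criteria for a valid result are not described here, so this example assumes, purely for illustration, that a run is invalid when it produced no value (`None`) or a non-positive score.

```python
from statistics import mean


def average_valid(results):
    """Average the usable results from a set of runs.

    Assumption for this sketch: a run is invalid if it produced no value
    (None) or a non-positive score. The real validity criteria are not
    described in the text.
    """
    valid = [r for r in results if r is not None and r > 0]
    if not valid:
        raise ValueError("no valid results to average")
    return mean(valid)
```

For example, `average_valid([100, None, 110, 0, 90, 100])` drops the two failed runs and averages the remaining four.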

Another good bump in performance here for Cezanne.

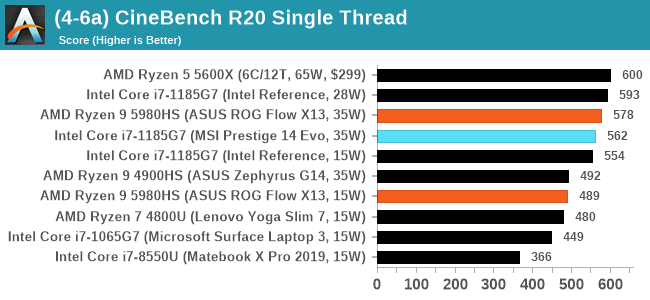

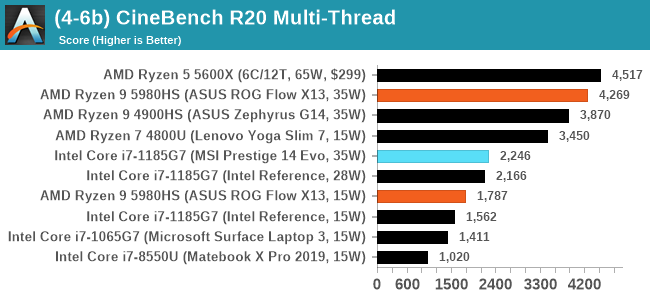

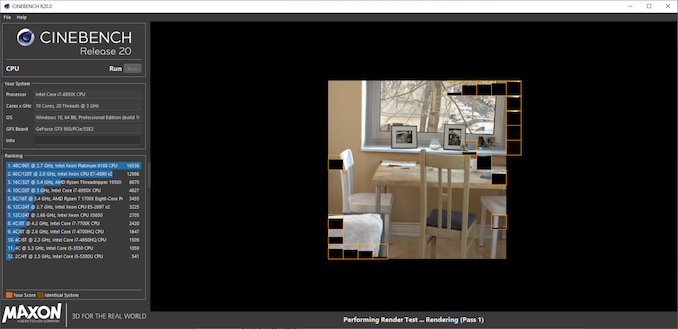

Cinebench R20: Link

Another common staple of a benchmark suite is Cinebench. Based on Cinema 4D, Cinebench is a purpose-built benchmark that renders a scene with both single and multi-threaded options. The scene is identical in both cases. The R20 version means that it targets Cinema 4D R20, a slightly older version of the software which is currently on version R21. Cinebench R20 was launched given that the R15 version had been out a long time, and despite the difference between the benchmark and the latest version of the software on which it is based, Cinebench results are often quoted in marketing materials.

Results for Cinebench R20 are not comparable to R15 or older, both because the scene being used is different and because of updates in the code path. The results are output as a score from the software, which is inversely proportional to the time taken. Using the benchmark flags for single CPU and multi-CPU workloads, we run the software from the command line which opens the test, runs it, and dumps the result into the console which is redirected to a text file. The test is repeated for a minimum of 10 minutes for both ST and MT, and then the runs averaged.

We didn't quite hit AMD's promoted performance of 600 pts here in single thread, and Intel's Tiger Lake is not far behind. In fact, our MSI Prestige 14 Evo, despite being listed as a 35W sustained processor, doesn't seem to hit the same single-core power levels that our reference design did, and as a result Intel's reference design is actually beating both MSI and ASUS in single thread. This disappears in multi-thread, but it's important to note that different laptops will have different single core power modes.

218 Comments


Ptosio - Tuesday, January 26, 2021 - link

ARM is not some magic silver bullet - MediaTek has vast experience with ARM but are their chromebook chips any way close to Apple M1? (or Zen3 for that matter?)

And remember AMD is yet to get access to the same TSMC process as Apple - maybe once they're on par, large part of that efficiency advantage disappears?

ABR - Wednesday, January 27, 2021 - link

AMD has K12, which Jim Keller also worked on, waiting in the wings. Most assuredly they have continued developing it. Whether it will play in the same league with M1 remains to be seen, but they also have the graphics IP to go with it so they could likely come out with a strong offering if it comes to that. Not sure what Intel will do..

Deicidium369 - Wednesday, January 27, 2021 - link

ancient design, far exceeded by even 10 year old ARM designs.

Spunjji - Thursday, January 28, 2021 - link

You say some really silly things

Spunjji - Thursday, January 28, 2021 - link

"Apple will outclass everything x86 once they introduce their second gen silicon with much higher core count and other architectural improvements."

I'll believe it when I see it. Their first move was far better than expected, but it doesn't come close to justifying the claims you're making here.

Glaurung - Saturday, January 30, 2021 - link

M1 is Apple's replacement for ultra-low power, nominal 15w Intel chips. Later this year we will see their replacement for higher powered (35-65w) Intel chips. Nobody knows what those chips will be like yet, but it's pretty obvious they'll have 8 or 16 performance cores instead of just 4, with a similar scale up of the number of GPU cores. They'll add the ability to handle more than 16gb and two ports, and they will put it in their high end laptops and imac desktops. Potentially also on the menu would be a faster peak clock rate. That's not an "I'll believe it when I see it," that's a foregone conclusion. Also a foregone conclusion: next year they will have an even faster core with even better IPC to put in their phones, tablets, and computers.

As of last year, Apple's chips had far better IPC and performance per watt than anything Intel or AMD could make, and they only fell short on overall performance due to only having 4 performance cores in their ultra-low power chips.

(For the record, I use Windows. But there's no denying that Apple is utterly dominating in the contest to see who can make the fastest CPUs)

GeoffreyA - Sunday, January 31, 2021 - link

Apple will release faster cores but so will AMD. And now that they've got an idea of what Apple's design is capable of, I'm pretty sure they could overtake it, if they wanted to.

GeoffreyA - Sunday, January 31, 2021 - link

As much as I hate to say it, the M1 could be analogous to Core and K8 in the Netburst era. The return to lower clock speeds, higher IPC, and wider execution. Having Skylake and Sunny C. as their measure, AMD produced so and so (and brilliant stuff too, Zen 3 is). Perhaps the M1 will recalibrate the perf/watt measure, like Conroe did, like the Athlon 64 did.

I've got a feeling, too, that ARM isn't playing the role in the M1 that people are thinking. It's possible the difference in perf/watt between Zen 3 and M1 is due not to x86 vs. ARM but rather the astonishing width of that core, as well as caches. How much juice ARM is adding, I doubt whether we can say, unless the other components were similar. My belief, it isn't adding much.

Farfolomew - Thursday, February 4, 2021 - link

Very nice comment, and this little thread is a really fascinating read. I've not thought of the comparisons of the P4 -> Core2Duo Mhz regression, but I really think you're on to something here. The thing is, this isn't anything new with M1, Apple has been doing it since the A9 back in 2015, when it finally had IPC parity with the Core M chips. The M1 is just the evolution and scaling up to that of an equivalent TDP laptop chip that Intel has been producing.

So the question, then, is, if it's not the "ARM" architecture giving the huge advantages, why haven't we seen a radical shift in the x86 technology back to ultra wide cores, and caches? Or maybe we are, incrementally, with Ice/Tiger Lake, and Zen 2/3/4?

Very fascinating times!

GeoffreyA - Sunday, February 7, 2021 - link

"Or maybe we are, incrementally, with Ice/Tiger Lake, and Zen 2/3/4?"

I think that sums it up. As to why their scaling is going at a slower rate, there are a few possible explanations. Likely, truth is somewhere in between.

Firstly, AMD and Intel have aimed for high-frequency designs, which is at loggerheads with widening of a core. Then, AMD has been targeting Haswell (and later) perf/watt with Zen. When one's measure is such, one won't go much beyond that (Zen 2 and 3 did, but there's still juice in the tank). Lastly, it could be owing to the main bottleneck in x86: the variable-length instructions, which make parallel decoding difficult. Adding more decoders helps but causes power to go up. So the front end could be limiting how much we can widen our resources down the line.

Having said that, I still think that AMD's ~15% IPC increase each year has been impressive. "The stuff of legend." Intel, back when it was leading, had us believe such gains were impossible. It's going to be some interesting years ahead, watching the directions Intel, Apple, and AMD take. I'm confident AMD will keep up the good work.