Intel Core i9-10850K Review: The Real Intel Flagship

by Dr. Ian Cutress on January 4, 2021 9:00 AM EST

Posted in: CPUs, Intel, Core, Z490, 10th Gen Core, Comet Lake, LGA1200, i9-10850K

CPU Tests: Simulation

Simulation and Science overlap a lot in the benchmarking world, but we separate them into two segments based mostly on the utility of the resulting data. The benchmarks that fall under Science have a distinct use for the data they output, whereas those in our Simulation section act more like synthetics but at some level are still trying to simulate a given environment.

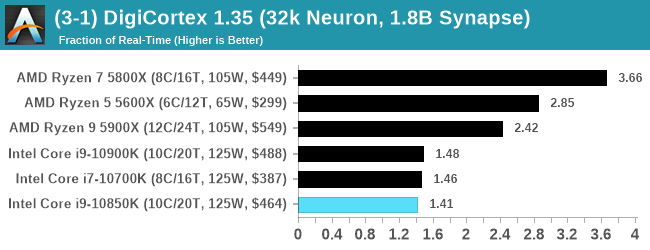

DigiCortex v1.35: link

DigiCortex is a pet project for the visualization of neuron and synapse activity in the brain. The software comes with a variety of benchmark modes, and we take the small benchmark which runs a 32k neuron/1.8B synapse simulation, similar to a small slug.

Results are given as a fraction of real-time simulation speed, so anything above a value of one is suitable for real-time work. The benchmark offers a 'no firing synapse' mode, which in essence tests DRAM and bus speed; however, we take the firing mode, which adds CPU work with every firing.

The software originally shipped with a benchmark that recorded the first few cycles and output a result. On fast multi-threaded processors this made the benchmark last less than a few seconds, while slow dual-core processors could be running for almost an hour. There is also the issue of DigiCortex starting with a base neuron/synapse map in ‘off mode’, giving a high result in the first few cycles as none of the nodes are currently active. We found that the performance settles down into a steady state after a while (when the model is actively in use), so we asked the author to allow for a ‘warm-up’ phase and for the benchmark to be the average over a second sample time.

For our test, we give the benchmark 20000 cycles to warm up and then take the data over the next 10000 cycles for the test; on a modern processor this takes 30 seconds and 150 seconds respectively. This is then repeated a minimum of 10 times, with the first three results rejected. Results are shown as a multiple of real-time calculation.
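The repeat-and-reject procedure described above can be sketched in a few lines of Python. This is a minimal illustration, not the actual test harness: the function names are hypothetical, and averaging the retained runs with a plain mean is an assumption, since the text only says that the first three results are rejected.

```python
from statistics import mean

MIN_RUNS = 10       # "repeated a minimum of 10 times"
REJECTED_RUNS = 3   # "with the first three results rejected"


def summarize_runs(results, rejected=REJECTED_RUNS, min_runs=MIN_RUNS):
    """Drop the first few results and average the rest.

    `results` holds one real-time multiple per run, in run order.
    Using a plain mean for the retained runs is an assumption.
    """
    if len(results) < min_runs:
        raise ValueError(f"need at least {min_runs} runs, got {len(results)}")
    retained = results[rejected:]
    return mean(retained)


def is_real_time_capable(score):
    """A score above one means the simulation keeps up with real time."""
    return score > 1.0
```

Rejecting the early runs serves the same purpose as the in-benchmark warm-up phase: it keeps start-up effects out of the reported figure.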

Results vary widely across the AMD parts, and the benchmark seems to prefer high-core-count single-chiplet processors. Intel is taking a back seat here, as it is also using slower memory.

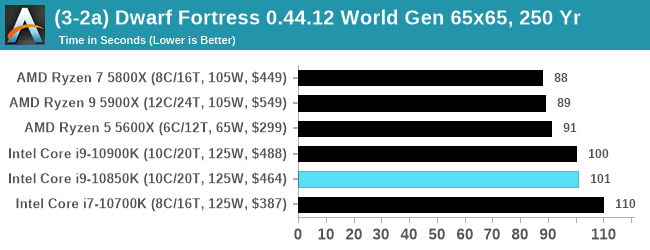

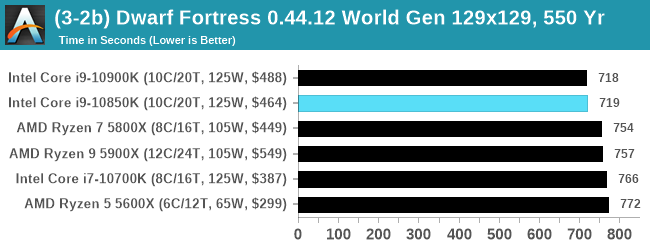

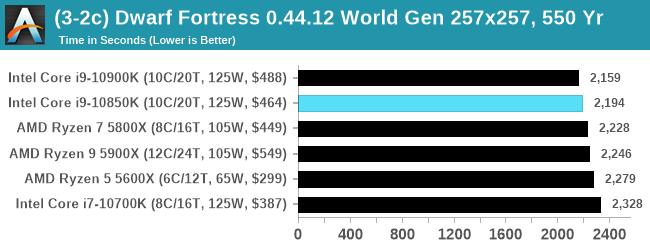

Dwarf Fortress 0.44.12: Link

Another long-standing request for our benchmark suite has been Dwarf Fortress, a popular management/roguelike indie video game, first launched in 2006 and still being regularly updated today, aiming for a Steam launch sometime in the future.

Emulating the ASCII interfaces of old, this title is a rather complex beast, which can generate environments subject to millennia of rule, famous faces, peasants, and key historical figures and events. The further you get into the game, depending on the size of the world, the slower it becomes as it has to simulate more famous people, more world events, and the natural way that humanoid creatures take over an environment. Like some kind of virus.

For our test we’re using DFMark. DFMark is a benchmark built by vorsgren on the Bay12Forums that gives two different modes built on DFHack: world generation and embark. These tests can be configured, but range anywhere from 3 minutes to several hours. After analyzing the test, we ended up going for three different world generation sizes:

- Small, a 65x65 world with 250 years, 10 civilizations and 4 megabeasts

- Medium, a 127x127 world with 550 years, 10 civilizations and 4 megabeasts

- Large, a 257x257 world with 550 years, 40 civilizations and 10 megabeasts

DFMark outputs the time to run any given test, so this is what we use for the output. We loop the small test as many times as possible in 10 minutes, the medium test as many times as possible in 30 minutes, and the large test as many times as possible in an hour.
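A time-budgeted loop of this kind might look like the following Python sketch. It is an illustration under stated assumptions rather than the harness used for the review: `run_once` is a hypothetical callable standing in for one DFMark world-generation run, and the sketch assumes a run that starts inside the budget is allowed to finish and is counted.

```python
import time

# Time budgets from the text, in seconds.
BUDGET_SECONDS = {"small": 10 * 60, "medium": 30 * 60, "large": 60 * 60}


def loop_within_budget(run_once, budget_seconds, clock=time.monotonic):
    """Repeat `run_once` until the budget is used up.

    Returns the duration of each completed run. A new run starts only
    while the elapsed time is still below the budget (an assumption),
    so the final run may finish after the budget has expired.
    """
    start = clock()
    durations = []
    while clock() - start < budget_seconds:
        run_start = clock()
        run_once()  # hypothetical: launches one world-generation test
        durations.append(clock() - run_start)
    return durations
```

Passing the clock in as a parameter keeps the loop easy to check with a fake timer, and `time.monotonic` avoids errors from wall-clock adjustments during hour-long runs.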

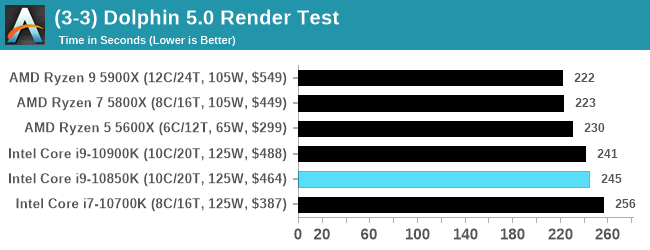

Dolphin v5.0 Emulation: Link

Many emulators are often bound by single thread CPU performance, and general reports tended to suggest that Haswell provided a significant boost to emulator performance. This benchmark runs a Wii program that ray traces a complex 3D scene inside the Dolphin Wii emulator. Performance on this benchmark is a good proxy of the speed of Dolphin CPU emulation, which is an intensive single core task using most aspects of a CPU. Results are given in seconds, where the Wii itself scores 1051 seconds.
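Because the result is a time, lower is better, and a score can be read against the Wii baseline as a simple ratio. The Python sketch below shows that arithmetic; the function name is hypothetical and the only figure taken from the text is the 1051-second Wii time.

```python
WII_BASELINE_SECONDS = 1051.0  # the Wii's own time, as stated in the text


def speedup_over_wii(seconds):
    """Return how many times faster than the Wii a given result is.

    `seconds` is the benchmark time for the system under test; a value
    of 2.0 means the scene rendered in half the Wii's time.
    """
    if seconds <= 0:
        raise ValueError("benchmark time must be positive")
    return WII_BASELINE_SECONDS / seconds
```

A system matching the Wii's own time would return exactly 1.0, and anything above that is faster than the original hardware.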

126 Comments

1_rick - Monday, January 4, 2021 - link

Because you've got the people who will spend any amount of money to get 5fps more in their games so they can smugly tell everyone who they've got the best.

lopri - Monday, January 4, 2021 - link

I see Ryzens beating this thing by sizeable margins in games.

zodiacfml - Monday, January 4, 2021 - link

Ryzen 5000 series is significantly faster than Intel's i9-10900k in all games though I haven't seen compared with overclocks. The Intel gets good at rendering/encode but I'd rather buy old Xeons with Chinese motherboards for those loads

V3ctorPT - Monday, January 4, 2021 - link

In gaming the real star is the 5600X... awesome performance for its price, for a 65W(!) CPU...

lmcd - Monday, January 4, 2021 - link

It's basically an 80W CPU though lol

Crazyeyeskillah - Monday, January 4, 2021 - link

my 5600x is 10-20c hotter than my 3600 clock for clock on the same exact rig and watercooler.

JessNarmo - Monday, January 4, 2021 - link

I was considering 10850k as an upgrade option when I it for $400. It's undeniably significantly better deal than 10900k at $530.

But ultimately decided that it's just not good enough for an upgrade because it still doesn't support PCIE 4 so if I upgrade I would have to upgrade again very shortly.

Would have to wait for 5900x availability or maybe intel will come up with something better.

edzieba - Monday, January 4, 2021 - link

The same argument can be made for the 5900x and PCIe 5 (or DDR 5). There will always be a new protocol, or new interface, or etc on the horizon.

JessNarmo - Monday, January 4, 2021 - link

Disagree. Right now I have the same Skylake cores running 5Ghz and the same PCIE 3, the same everything and it's still fine except I have less cores.

With 5900x I'll get better single thread and multi thread performance as well as PCIE4 which is really important for future GPU's and upcoming upgrades unlike PCIE5 which isn't important at all at this point in time.

MDD1963 - Monday, January 4, 2021 - link

PCI-e 4.0 was going to be 'critical' for GPUs to get best performance from a 3080/3090...; instead, it was/is still a non-player. Maybe that will change for next gen. Maybe not.