NVIDIA Announces A100 80GB: Ampere Gets HBM2E Memory Upgrade

by Ryan Smith on November 16, 2020 9:00 AM EST

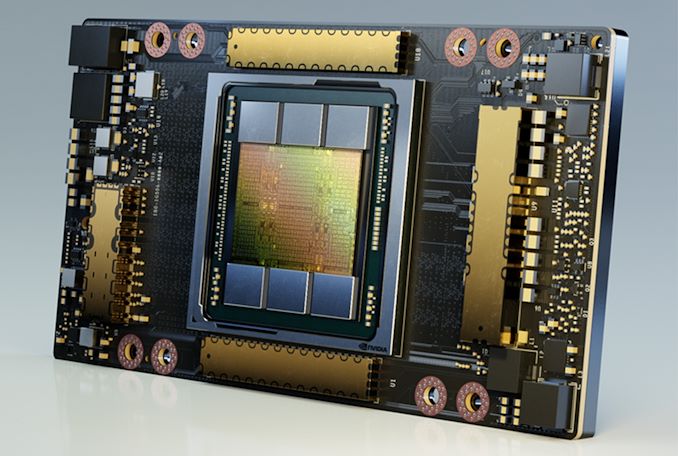

Kicking off a very virtual version of the SC20 supercomputing show, NVIDIA this morning is announcing a new version of their flagship A100 accelerator. Barely launched 6 months ago, NVIDIA is preparing to release an updated version of the GPU-based accelerator with 80 gigabytes of HBM2e memory, doubling the capacity of the initial version of the accelerator. And as an added kick, NVIDIA is dialing up the memory clockspeeds as well, bringing the 80GB version of the A100 to 3.2Gbps/pin, or just over 2TB/second of memory bandwidth in total.

The 80GB version of the A100 will continue to be sold alongside the 40GB version – which NVIDIA is now calling the A100 40GB – and it is being primarily aimed at customers with supersized AI data sets. Which at face value may sound a bit obvious, but with deep learning workloads in particular, memory capacity can be a strongly bounding factor when working with particularly large datasets. So an accelerator that’s large enough to keep an entire model in local memory can potentially be significantly faster than one that has to frequently go off-chip to swap data.

| NVIDIA Accelerator Specification Comparison | |||||

| A100 (80GB) | A100 (40GB) | V100 | |||

| FP32 CUDA Cores | 6912 | 6912 | 5120 | ||

| Boost Clock | 1.41GHz | 1.41GHz | 1530MHz | ||

| Memory Clock | 3.2Gbps HBM2e | 2.4Gbps HBM2 | 1.75Gbps HBM2 | ||

| Memory Bus Width | 5120-bit | 5120-bit | 4096-bit | ||

| Memory Bandwidth | 2.0TB/sec | 1.6TB/sec | 900GB/sec | ||

| VRAM | 80GB | 40GB | 16GB/32GB | ||

| Single Precision | 19.5 TFLOPs | 19.5 TFLOPs | 15.7 TFLOPs | ||

| Double Precision | 9.7 TFLOPs (1/2 FP32 rate) |

9.7 TFLOPs (1/2 FP32 rate) |

7.8 TFLOPs (1/2 FP32 rate) |

||

| INT8 Tensor | 624 TOPs | 624 TOPs | N/A | ||

| FP16 Tensor | 312 TFLOPs | 312 TFLOPs | 125 TFLOPs | ||

| TF32 Tensor | 156 TFLOPs | 156 TFLOPs | N/A | ||

| Interconnect | NVLink 3 12 Links (600GB/sec) |

NVLink 3 12 Links (600GB/sec) |

NVLink 2 6 Links (300GB/sec) |

||

| GPU | GA100 (826mm2) |

GA100 (826mm2) |

GV100 (815mm2) |

||

| Transistor Count | 54.2B | 54.2B | 21.1B | ||

| TDP | 400W | 400W | 300W/350W | ||

| Manufacturing Process | TSMC 7N | TSMC 7N | TSMC 12nm FFN | ||

| Interface | SXM4 | SXM4 | SXM2/SXM3 | ||

| Architecture | Ampere | Ampere | Volta | ||

Diving right into the specs, the only difference between the 40GB and 80GB versions of the A100 will be memory capacity and memory bandwidth. Both models are shipping using a mostly-enabled GA100 GPU with 108 active SMs and a boost clock of 1.41GHz. Similarly, the TDPs between the two models remain unchanged as well. So for pure, on-paper compute throughput, there’s no difference between the accelerators.

Instead, the improvements for the A100 come down to its memory capacity and its greater memory bandwidth. When the original A100 back in May, NVIDIA equipped it with six 8GB stacks of HBM2 memory, with one of those stacks disabled for yield reasons. This left the original A100 with 40GB of memory and just shy of 1.6TB/second of memory bandwidth.

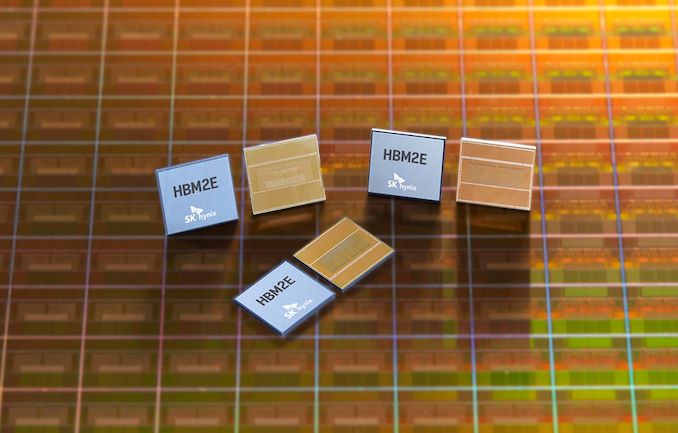

For the newer A100 80GB, NVIDIA is keeping the same configuration of 5-out-of-6 memory stacks enabled, however the memory itself has been replaced with newer HBM2E memory. HBM2E is the informal name given to the most recent update to the HBM2 memory standard, which back in February of this year defined a new maximum memory speed of 3.2Gbps/pin. Coupled with that frequency improvement, manufacturing improvements have also allowed memory manufacturers to double the capacity of the memory, going from 1GB/die to 2GB/die. The net result being that HBM2E offers both greater capacities as well as greater bandwidths, two things which NVIDIA is taking advantage of here.

With 5 active stacks of 16GB, 8-Hi memory, the updated A100 gets a total of 80GB of memory. Which, running at 3.2Gbps/pin, works out to just over 2TB/sec of memory bandwidth for the accelerator, a 25% increase over the 40GB version. This means that not only does the 80GB accelerator offer more local storage, but rare for a larger capacity model, it also offers some extra memory bandwidth to go with it. That means that in memory bandwidth-bound workloads the 80GB version should be faster than the 40GB version even without using its extra memory capacity.

Being able to offer a version of the A100 with more memory bandwidth seems to largely be an artifact of manufacturing rather than something planned by NVIDIA – Samsung and SK Hynix only finally started mass production of HBM2E a bit earlier this year – but none the less it’s sure to be a welcome one.

Otherwise, as mentioned earlier, the additional memory won’t be changing the TDP parameters of the A100. So the A100 remains a 400 Watt part, and nominally, the 80GB version should be a bit more power efficient since it offers more performance inside the same TDP.

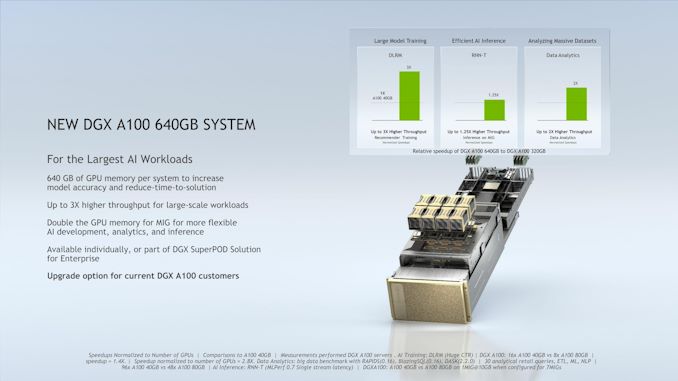

Meanwhile, NVIDIA has also confirmed that the greater memory capacity of the 80GB model will also be available to Multi-Instance GPU (MIG) users. The A100 still has a hardware limitation of 7 instances, so equal-sized instances can now have up to 10GB of dedicated memory each.

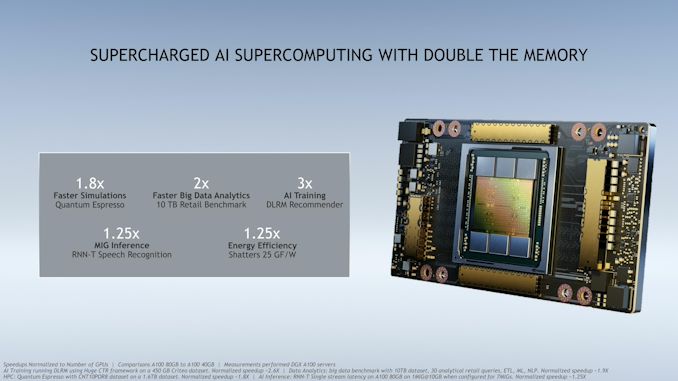

As far as performance is concerned, NVIDIA is throwing out a few numbers comparing the two versions of the A100. It’s actually a bit surprising that they’re talking up the 80GB version quite so much, as NVIDIA is going to continue selling the 40GB version. But with the A100 80GB likely to cost a leg (NVIDIA already bought the Arm), no doubt there’s still a market for both.

Finally, as with the launch of the original A100 earlier this year, NVIDIA’s immediate focus with the A100 80GB is on HGX and DGX configurations. The mezzanine form factor accelerator is designed to be installed in multi-GPU systems, so that is how NVIDIA is selling it: as part of an HGX carrier board with either 4 or 8 of the GPUs installed. For customers that need individual A100s, NVIDIA is continuing to offer the PCIe A100, though not in an 80GB configuration (at least, not yet).

Along with making the A100 80GB available to HGX customers, NVIDIA is also launching some new DGX hardware today as well. At the high-end, they’re offering a version of the DGX A100 with the new accelerators, which they’ll be calling the DGX A100 640GB. This new DGX A100 also features twice as much DRAM and storage as its predecessor, doubling the original in more than one way.

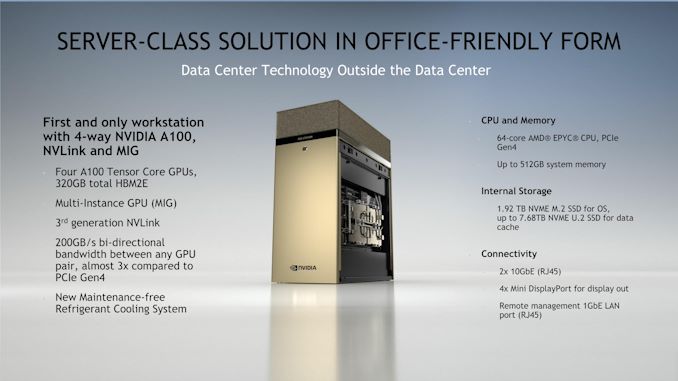

Meanwhile NVIDIA is launching a smaller, workstation version of the DGX A100, which they are calling the DGX Station A100. The successor to the original, Volta-based DGX Station, the DGX Station A100 is essentially half of a DGX A100, with 4 A100 accelerators and a single AMD EPYC processor. NVIDIA’s press pre-briefing didn’t mention total power consumption, but I’ve been told that it runs off of a standard wall socket, far less than the 6.5kW of the DGX A100.

NVIDIA is also noting that the DGX Station uses a refrigerant cooling system, meaning that they are using sub-ambient cooling (unlike the original DGX Station, which was simply water cooled). NVIDIA is promising that despite this, the DGX Station A100 is whisper quiet, so it will be interesting to see how much of that is true given the usual noise issues involved in attaching a compressor to a computer cooling loop.

Both of the new DGX systems as in production now. According to NVIDIA, the systems are already being used for some of their previously-announced supercomputing installations, such as the Cambridge-1 system. Otherwise commercial availability will start in January, with wider availability in February.

29 Comments

View All Comments

jesuscat - Monday, November 16, 2020 - link

If you spent upwards of 100k+ on a DGX workstation to game on it, you're doing it wrong.it's targeted way past your needs as a consumer.

FloridaMan - Friday, November 20, 2020 - link

I think as API's trend toward DX12 and Vulkan we'll see more a soft implementation of multi-gpu support environment. However, tech companies and media won't talk about it as there's been all but no support for this for almost a decade. DX11 swung it's massive **** around for far too long. I think it's a rather inferior API at least on the shader side, and multi-gpu support has to be separately coded. DX12 streamlines this a bit, but still has to be tweaked into the mainline. Vulkan is much easier as your doing the work on the front end, and makes it much less of a headache than having to insert alternating lines within base instructions.I'm a huge fan of the multi-gpu concept. I'd much rather grab a mid-tier GPU that's about 30% faster than the previous generation, then drop in a second a few months later. Much easier to explain two $300-$400 dollar purchases to the wife than one $800 purchase, which is too much for a toy IMO.

dgz - Monday, November 16, 2020 - link

The entire gaming industry decided to abandon multi GPU support not because it doesn't make much sense at the moment, but to annoy you.They could easily fix all the latency/stuttering racing conditions and help amd/nvidia sell infinitely more video cards but opted not to because they don't like your attitude.

kgardas - Monday, November 16, 2020 - link

Come on. How many gamers are willing to use 2 GPUs? And how many to use (and pay for!) 3 GPUs and even more? So if 100% will do 2 GPUs, then it will mean selling just twice the cards and not infinitely more cards. Also the market for 2-more GPUs gaming machines is very small due to much higher price and much bigger problems with scalability (power, cooling, space, bus etc.).Kjella - Monday, November 16, 2020 - link

Don't forget stability issues, I foolishly bought two cards for SLI in the GTX 9xx generation and games crashed much more often than running a single card. Personally I think it worked well when it worked, but since nobody would bother to find and fix the crash bugs I ended up selling the second card. Nothing like destroying a good gaming session with a crash to desktop, the FPS wasn't worth it.Holliday75 - Tuesday, November 17, 2020 - link

Multiple GPU does not necessarily mean multiple cards.haukionkannel - Tuesday, November 17, 2020 - link

No, but the problem are the same!Do you want something that works or something that crach, works sometime... maybe...

Multi GPU in gaming is dead because it don¨t work with constant driverfu!

We need operations systems that support multi chip cpus from the core... None in the market at this moment.

brantron - Monday, November 16, 2020 - link

Infinite times zero RTX 3000 cards is still zero.There are bigger problems to fix than stuttering, or both Nvidia and AMD wouldn't have these absurdly drawn out roll outs, starting with specifically the price ranges that sell the least.

Unashamed_unoriginal_username_x86 - Monday, November 16, 2020 - link

If you came here to complain about multi-GPU in video games or want a $10k+ GPU, you're less than <1% of the market and you probably don't have the money for it anyway. Give upWith that said, I wonder if they'll introduce any SKUs with 6 active HBM stacks or less SMs, perhaps next year as an refresh for AI workloads.

rsandru - Tuesday, November 17, 2020 - link

Well, you're wrong :-) I do have an SLI set up.I'm just complaining that the investment made in software to leverage multiple CPU cores, multiple AI accelerators, multiple network interfaces that can be pooled, etc.. didn't extend to video games.

Hey, it's almost Christmas, I can make a wish lol :-D