A Broadwell Retrospective Review in 2020: Is eDRAM Still Worth It?

by Dr. Ian Cutress on November 2, 2020 11:00 AM ESTCPU Tests: Microbenchmarks

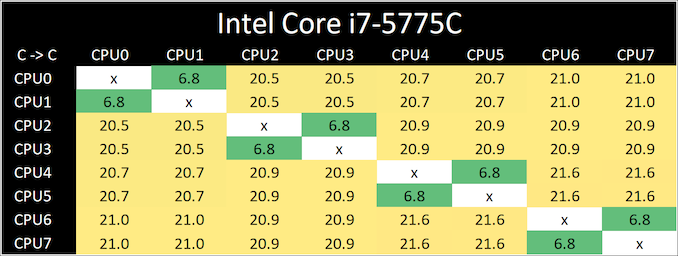

Core-to-Core Latency

As the core count of modern CPUs is growing, we are reaching a time when the time to access each core from a different core is no longer a constant. Even before the advent of heterogeneous SoC designs, processors built on large rings or meshes can have different latencies to access the nearest core compared to the furthest core. This rings true especially in multi-socket server environments.

But modern CPUs, even desktop and consumer CPUs, can have variable access latency to get to another core. For example, in the first generation Threadripper CPUs, we had four chips on the package, each with 8 threads, and each with a different core-to-core latency depending on if it was on-die or off-die. This gets more complex with products like Lakefield, which has two different communication buses depending on which core is talking to which.

If you are a regular reader of AnandTech’s CPU reviews, you will recognize our Core-to-Core latency test. It’s a great way to show exactly how groups of cores are laid out on the silicon. This is a custom in-house test built by Andrei, and we know there are competing tests out there, but we feel ours is the most accurate to how quick an access between two cores can happen.

Broadwell is a familiar design, with all four cores connected in a ring-bus topology.

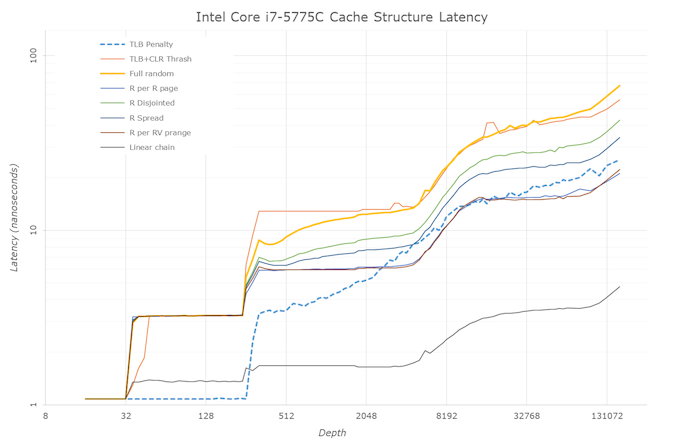

Cache-to-DRAM Latency

This is another in-house test built by Andrei, which showcases the access latency at all the points in the cache hierarchy for a single core. We start at 2 KiB, and probe the latency all the way through to 256 MB, which for most CPUs sits inside the DRAM (before you start saying 64-core TR has 256 MB of L3, it’s only 16 MB per core, so at 20 MB you are in DRAM).

Part of this test helps us understand the range of latencies for accessing a given level of cache, but also the transition between the cache levels gives insight into how different parts of the cache microarchitecture work, such as TLBs. As CPU microarchitects look at interesting and novel ways to design caches upon caches inside caches, this basic test proves to be very valuable.

Our data shows a 4-cycle L1, a 12-cycle L2, a 26-50 cycle L3, while the eDRAM has a wide range from 50-150 cycles. This is still quicker than main memory, which goes to 200+ cycles.

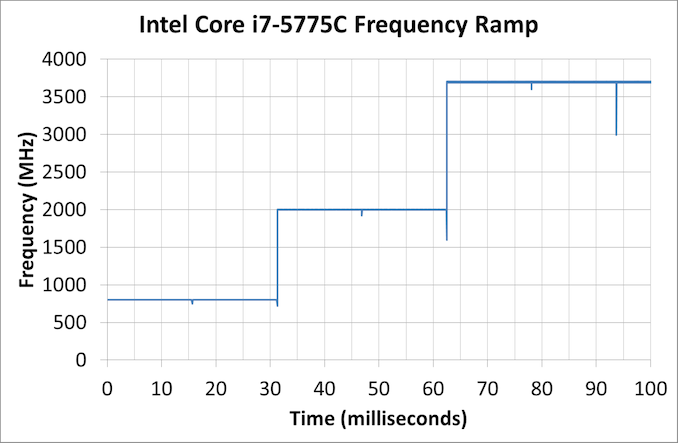

Frequency Ramping

Both AMD and Intel over the past few years have introduced features to their processors that speed up the time from when a CPU moves from idle into a high powered state. The effect of this means that users can get peak performance quicker, but the biggest knock-on effect for this is with battery life in mobile devices, especially if a system can turbo up quick and turbo down quick, ensuring that it stays in the lowest and most efficient power state for as long as possible.

Intel’s technology is called SpeedShift, although SpeedShift was not enabled until Skylake.

One of the issues though with this technology is that sometimes the adjustments in frequency can be so fast, software cannot detect them. If the frequency is changing on the order of microseconds, but your software is only probing frequency in milliseconds (or seconds), then quick changes will be missed. Not only that, as an observer probing the frequency, you could be affecting the actual turbo performance. When the CPU is changing frequency, it essentially has to pause all compute while it aligns the frequency rate of the whole core.

We wrote an extensive review analysis piece on this, called ‘Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics’, due to an issue where users were not observing the peak turbo speeds for AMD’s processors.

We got around the issue by making the frequency probing the workload causing the turbo. The software is able to detect frequency adjustments on a microsecond scale, so we can see how well a system can get to those boost frequencies. Our Frequency Ramp tool has already been in use in a number of reviews.

From an idle frequency of 800 MHz, It takes ~32 ms for Intel to boost to 2.0 GHz, then another ~32 ms to get to 3.7 GHz. We’re essentially looking at 4 frames at 60 Hz to hit those high frequencies.

A y-Cruncher Sprint

The y-cruncher website has a large about of benchmark data showing how different CPUs perform to calculate specific values of pi. Below these there are a few CPUs where it shows the time to compute moving from 25 million digits to 50 million, 100 million, 250 million, and all the way up to 10 billion, to showcase how the performance scales with digits (assuming everything is in memory). This range of results, from 25 million to 250 billion, is something I’ve dubbed a ‘sprint’.

I have written some code in order to perform a sprint on every CPU we test. It detects the DRAM, works out the biggest value that can be calculated with that amount of memory, and works up from 25 million digits. For the tests that go up to the ~25 billion digits, it only adds an extra 15 minutes to the suite for an 8-core Ryzen CPU.

With this test, we can see the effect of increasing memory requirements on the workload and the scaling factor for a workload such as this.

- MT 25m: 1.617s

- MT 50m: 3.639s

- MT 100m: 8.156s

- MT 250m: 24.050s

- MT 500m: 53.525s

- MT 1000m: 118.651s

- MT 2500m: 341.330s

The scaling here isn’t linear – moving from 25m to 2.5b, we should see a 100x time increase, but instead it is 211x.

120 Comments

View All Comments

Khenglish - Monday, November 2, 2020 - link

The infinity cache is SRAM, which will be faster but much lower density. Only IBM ever integrated DRAM on the same die as a processor. The DRAM capacitor takes up the space where you want to put all your CPU wiring.Quantumz0d - Monday, November 2, 2020 - link

Always thought why Intel is so fucking foolish in making that shitty iGPU die instead of making eDRAM on the chip. It would have given a massive boost for all their CPUs. A big missed opportunity. AMD had this "Game cache" on their Zen 2 and now with RDNA2, "Infinity Cache" again..jospoortvliet - Wednesday, November 4, 2020 - link

I guess they did the math on cost and power. They always had better memory controllers and prefetchers so they didn't benefit as much from cache- they also have the memory controller on-die, unlike amd with their i/o die. So intel would benefit waaaay less than amd does, in almost every way.dragosmp - Monday, November 2, 2020 - link

"...the same 22nm eDRAM chip is still in use today with Apple's 2020 base Macbook Pro 13"Ahem, what? Is that CPU an off the roadmap Tiger Lake?

Jorgp2 - Monday, November 2, 2020 - link

Tiger Lake doesn't have the hardware for an L4.It's probably the Skylake version

colinisation - Monday, November 2, 2020 - link

Do the part numbers on Intel CPU's mean anything, I picked up a 5775C a week agoand have not installed it yet but the part number starts "L523" - I just assume it is a later batch than what is in the review.ilt24 - Tuesday, November 3, 2020 - link

@colinisationThat L523 are the first 4 characters of the is the Finished Process Order or Batch#.

The L says it was packaged in Malaysia

The 5 says it was packaged in 2015

The 23 says it was packaged on the 23rd week

Digits 5-8 are the specific lot number number of the wafer the die came from

colinisation - Tuesday, November 3, 2020 - link

@ilt24 - Thank you very muchMday - Monday, November 2, 2020 - link

I expected more eDRAM implementations after Broadwell coming from Intel and AMD on the CPU side, as a low latency - high "capacity" cache, particularly after the launch of HBM. It made me wonder why Intel even bothered, or what shifts in strategies moved them to and away from eDRAM.ichaya - Monday, November 2, 2020 - link

This is really the first desktop part I'm hearing of, weren't most of these "Iris Pro" chips sold in Apple laptops with maybe a small minority being sold by other laptop OEMs? I believe so.