QLC Goes To 8TB: Samsung 870 QVO and Sabrent Rocket Q 8TB SSDs Reviewed

by Billy Tallis on December 4, 2020 8:00 AM EST

Random Read Performance

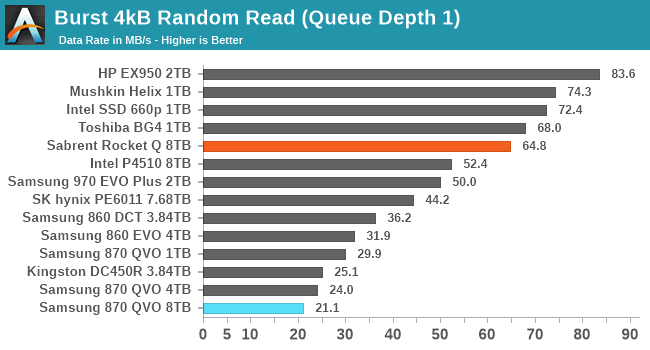

Our first test of random read performance uses very short bursts of operations issued one at a time with no queuing. The drives are given enough idle time between bursts to yield an overall duty cycle of 20%, so thermal throttling is impossible. Each burst consists of a total of 32MB of 4kB random reads, from a 16GB span of the disk. The total data read is 1GB.
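The burst parameters above imply a fixed number of bursts and operations per burst, and the 20% duty cycle fixes the ratio of idle time to active time. The following is a minimal sketch of that arithmetic, not the actual test tooling. It assumes binary units (1kB = 1024 bytes) and that duty cycle means active time divided by total time; the function and variable names are hypothetical.

```python
# Arithmetic implied by the burst random read test description.
# Assumption: binary units (1 kB = 1024 bytes); the article does not specify.
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB


def burst_plan(total_bytes, burst_bytes, io_bytes, duty_cycle_percent):
    """Return (number of bursts, operations per burst, idle-to-busy time ratio)."""
    bursts = total_bytes // burst_bytes
    ops_per_burst = burst_bytes // io_bytes
    # duty cycle = busy / (busy + idle), so idle / busy = (100 - d) / d
    idle_to_busy = (100 - duty_cycle_percent) / duty_cycle_percent
    return bursts, ops_per_burst, idle_to_busy


# 1GB total, 32MB bursts, 4kB reads, 20% duty cycle
bursts, ops_per_burst, idle_to_busy = burst_plan(1 * GIB, 32 * MIB, 4 * KIB, 20)
print(bursts, ops_per_burst, idle_to_busy)
```

Under these assumptions the test works out to 32 bursts of 8192 reads each, with four seconds of idle time for every second the drive spends servicing reads.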

The burst random read performance from the 8TB Samsung 870 QVO is even worse than the smaller 870s; even though these drives have the full amount of DRAM necessary to hold the logical to physical address mapping tables, there are other significant sources of overhead affecting the higher capacity models.

The Sabrent Rocket Q's burst random read performance doesn't quite fall at the opposite end of the spectrum, but it does clearly offer decent random read latency that is comparable to other drives using the Phison E12(S) controller and not too far behind the NVMe drives using Silicon Motion controllers.

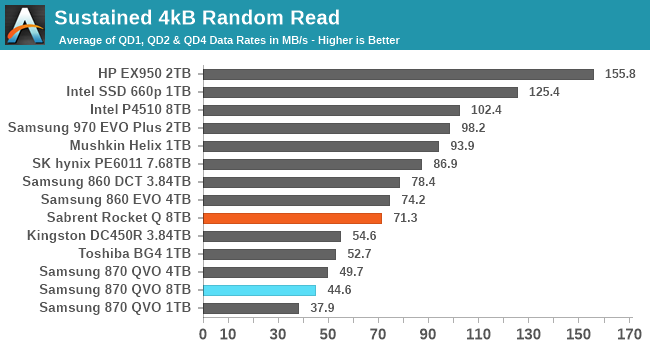

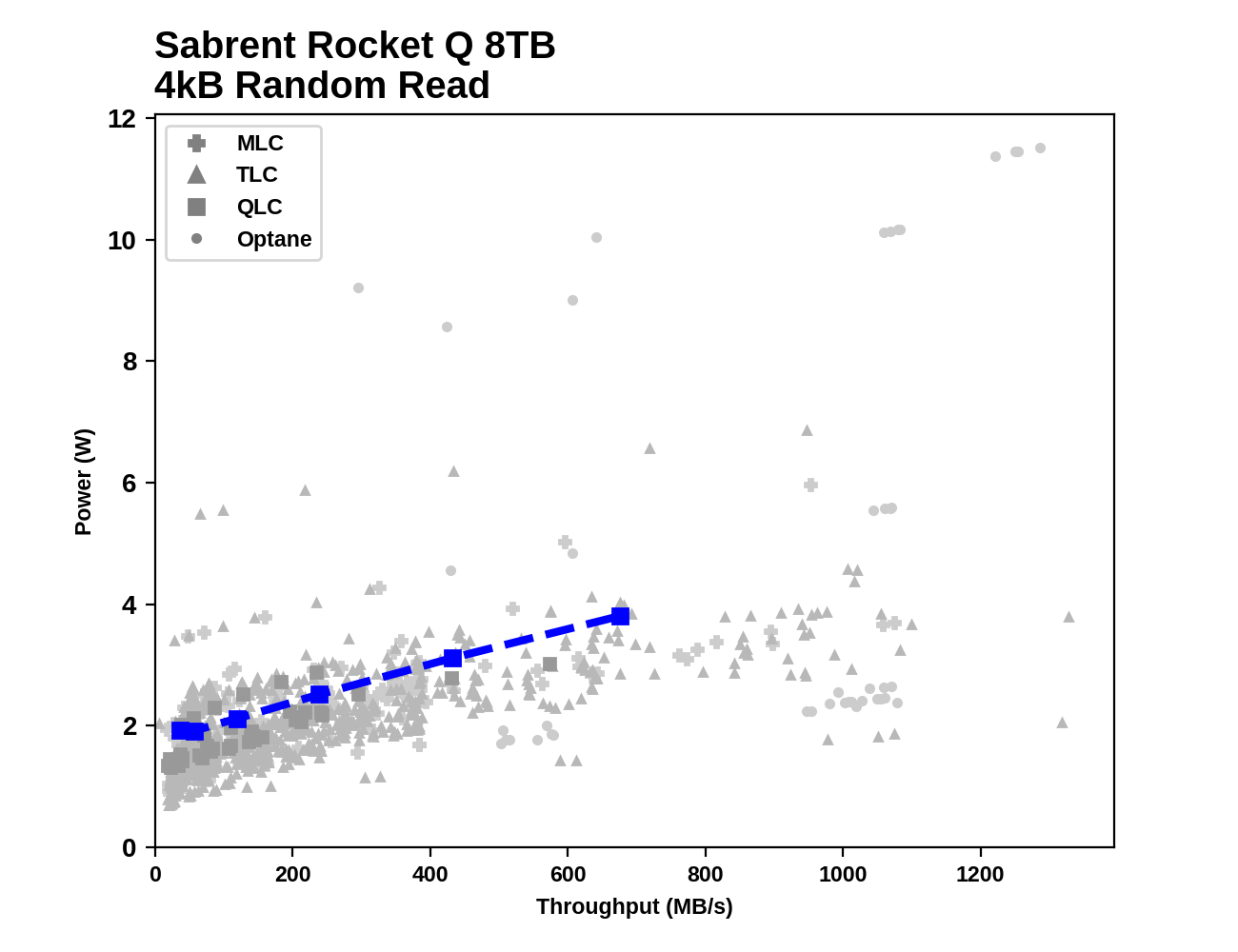

Our sustained random read test is similar to the random read test from our 2015 test suite: queue depths from 1 to 32 are tested, and the average performance and power efficiency across QD1, QD2 and QD4 are reported as the primary scores. Each queue depth is tested for one minute or 32GB of data transferred, whichever is shorter. After each queue depth is tested, the drive is given up to one minute to cool off so that the higher queue depths are unlikely to be affected by accumulated heat build-up. The individual read operations are again 4kB, and cover a 64GB span of the drive.
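As a rough illustration of how the scoring described above works, the sketch below averages the QD1, QD2 and QD4 results and applies the "one minute or 32GB, whichever is shorter" limit to a single phase. The throughput figures are made-up placeholders rather than measurements from this review, the power-of-two queue depth steps and binary units are assumptions, and the function names are hypothetical.

```python
def phase_duration_s(throughput_mb_s, time_limit_s=60, data_limit_mb=32 * 1024):
    """Seconds one queue depth phase runs: it ends at the time limit or the
    data limit, whichever is reached first."""
    return min(time_limit_s, data_limit_mb / throughput_mb_s)


def primary_score(results_by_qd, scored_qds=(1, 2, 4)):
    """Average the results at the low queue depths used for the primary score."""
    return sum(results_by_qd[qd] for qd in scored_qds) / len(scored_qds)


# Hypothetical MB/s results per queue depth (not data from the review).
example = {1: 40.0, 2: 80.0, 4: 150.0, 8: 280.0, 16: 400.0, 32: 420.0}

print(primary_score(example))        # mean of the QD1, QD2 and QD4 entries
print(phase_duration_s(example[1]))  # slow phase: stops at the time limit
print(phase_duration_s(1024.0))      # fast phase: stops at the 32GB data limit
```

Weighting only the low queue depths means a drive that scales well at QD16 or QD32 gets no credit for it in the primary score, which reflects the queue depths client workloads typically generate.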

The QLC drives almost all fare poorly on the longer random read test. The Sabrent Rocket Q falls to be the second-slowest NVMe drive in this batch, and a bit slower than Samsung's TLC SATA drives. The 8TB Samsung 870 QVO is no longer the slowest capacity; while it is again a bit slower than the 4TB model, the 1TB 870 QVO takes last place in this test.

Power Efficiency in MB/s/W | Average Power in W

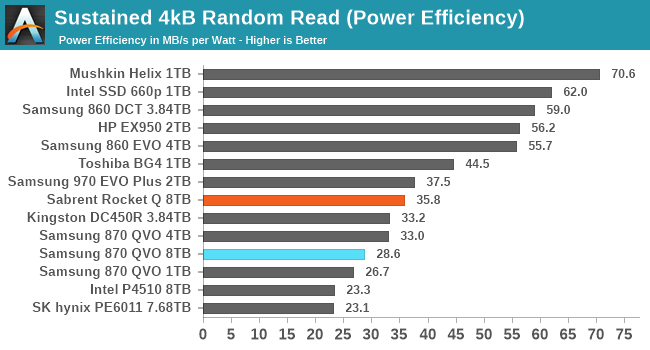

The power efficiency scores are mostly in line with the performance scores, with the slower drives tending to also be less efficient. The QLC drives follow this pattern quite well. The outliers are the particularly efficient Mushkin Helix DRAMless TLC drive, and the enterprise NVMe SSDs that show poor efficiency because they are underutilized by the low queue depths tested here.
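The efficiency metric is throughput divided by average power, so a drive with a high baseline power draw scores poorly when low queue depths leave it mostly idle. A minimal sketch, using hypothetical numbers rather than results from this review:

```python
def efficiency_mb_s_per_w(throughput_mb_s, avg_power_w):
    """Power efficiency in MB/s per watt."""
    if avg_power_w <= 0:
        raise ValueError("average power must be positive")
    return throughput_mb_s / avg_power_w


# Hypothetical (throughput in MB/s, average power in W) pairs for illustration.
drives = {
    "low-power client drive": (60.0, 1.5),
    "underutilized enterprise drive": (70.0, 7.0),
}

scores = {name: efficiency_mb_s_per_w(*vals) for name, vals in drives.items()}
for name, score in scores.items():
    print(f"{name}: {score:.1f} MB/s/W")
```

In this made-up example the enterprise drive is slightly faster but ends up with a quarter of the efficiency score, because its power draw is much higher for nearly the same work.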

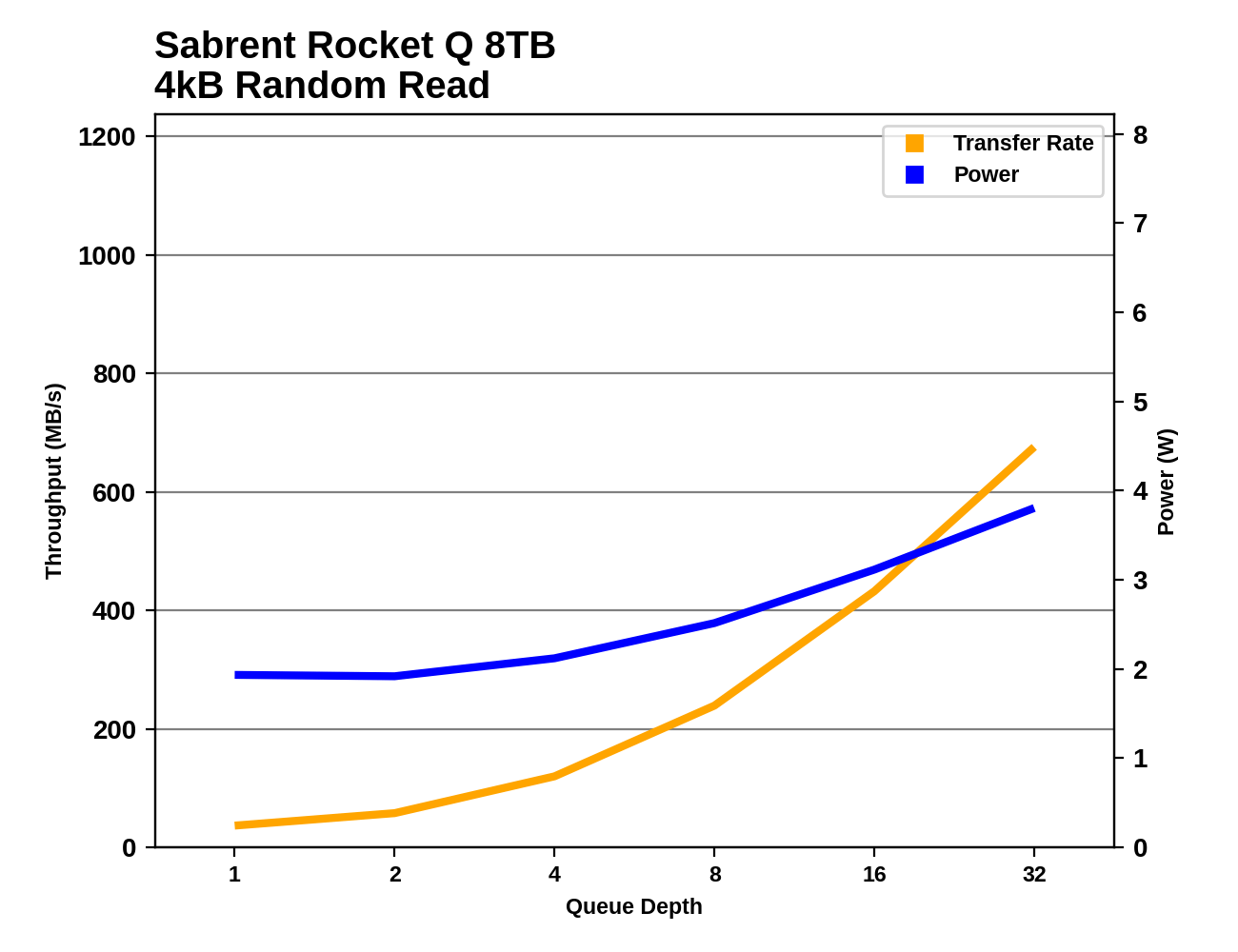

The Sabrent Rocket Q shows good performance scaling as queue depths increase during the random read test. The Samsung 870 QVO seems to be approaching saturation past QD16, even though the SATA interface is capable of delivering higher performance.

Comparing the 8TB drives against everything else we've tested, neither is breaking new ground. Both drives have power consumption that's on the high side but not at all unprecedented, and random read performance that doesn't push the limits of their respective interfaces.

Random Write Performance

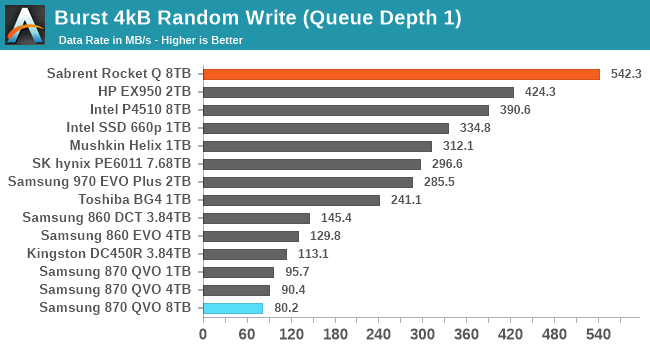

Our test of random write burst performance is structured similarly to the random read burst test, but each burst is only 4MB and the total test length is 128MB. The 4kB random write operations are distributed over a 16GB span of the drive, and the operations are issued one at a time with no queuing.

The two 8TB drives have opposite results for the burst random write performance test. The 8TB Sabrent Rocket Q is at the top of the chart with excellent SLC cache write latency, while the 8TB Samsung 870 QVO is a bit slower than the smaller capacities and turns in the worst score in this bunch.

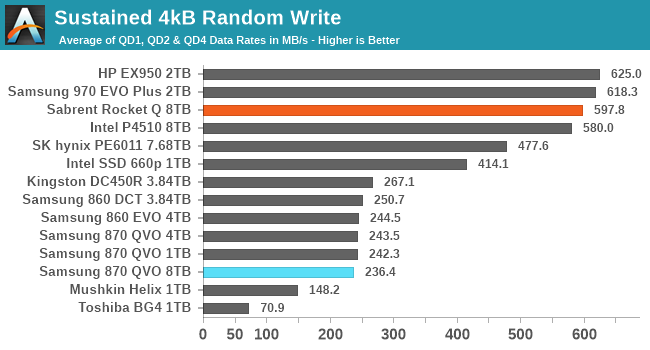

As with the sustained random read test, our sustained 4kB random write test runs for up to one minute or 32GB per queue depth, covering a 64GB span of the drive and giving the drive up to 1 minute of idle time between queue depths to allow for write caches to be flushed and for the drive to cool down.

On the longer random write test, the 8TB Rocket Q is still relying mostly on its SLC cache and continues to hang with the high-end NVMe drives. The 8TB 870 QVO is only slightly slower than the other SATA SSDs, and faster than some of the low-end DRAMless TLC NVMe drives.

Power Efficiency in MB/s/W | Average Power in W

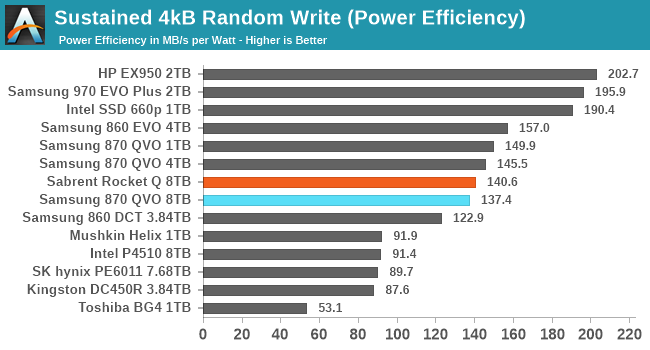

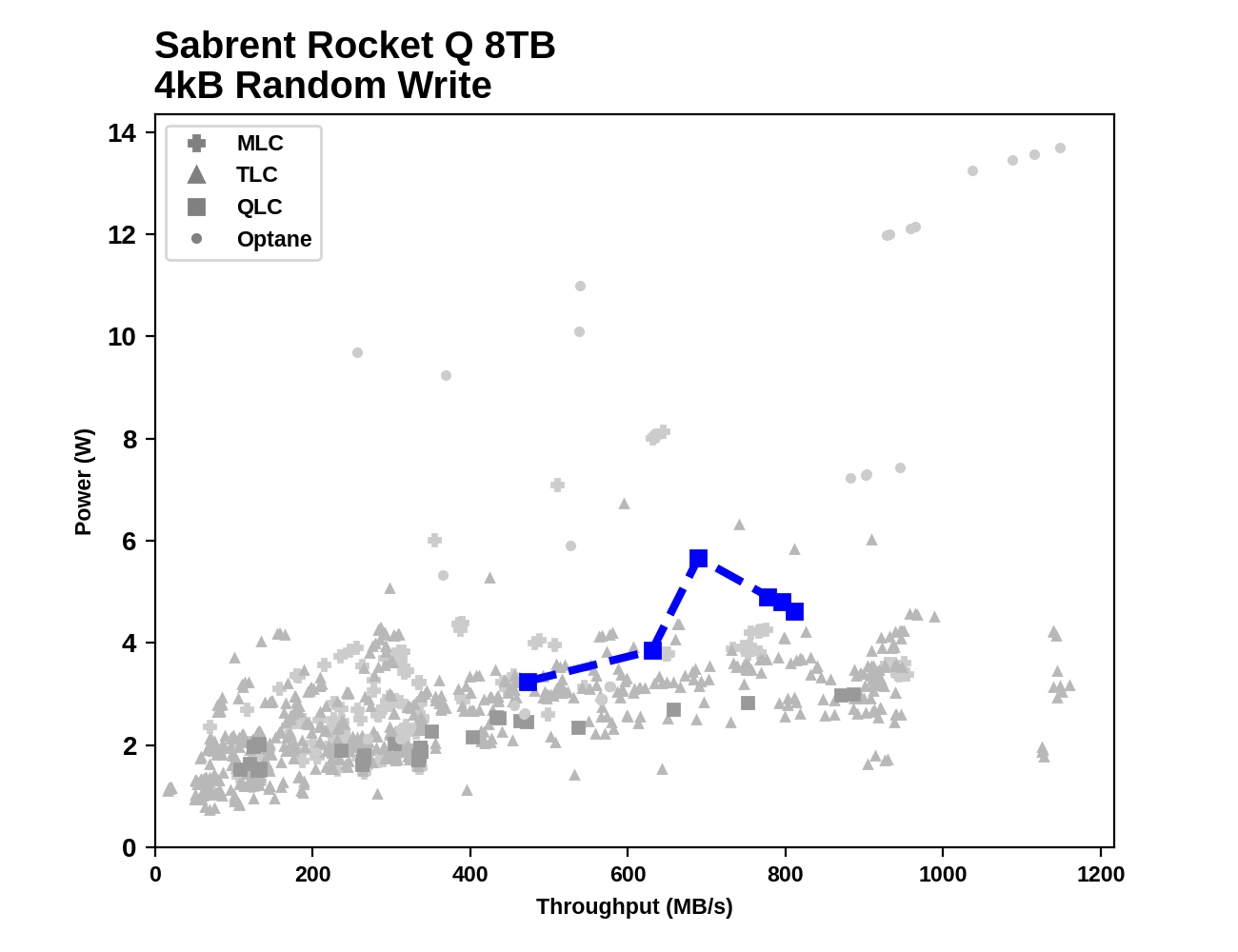

Despite their dramatically different random write performance, the two 8TB QLC drives end up with similar power efficiency that's fairly middle of the road: better than the enterprise drives and the slow DRAMless TLC drives, but clearly worse than the better TLC NVMe drives.

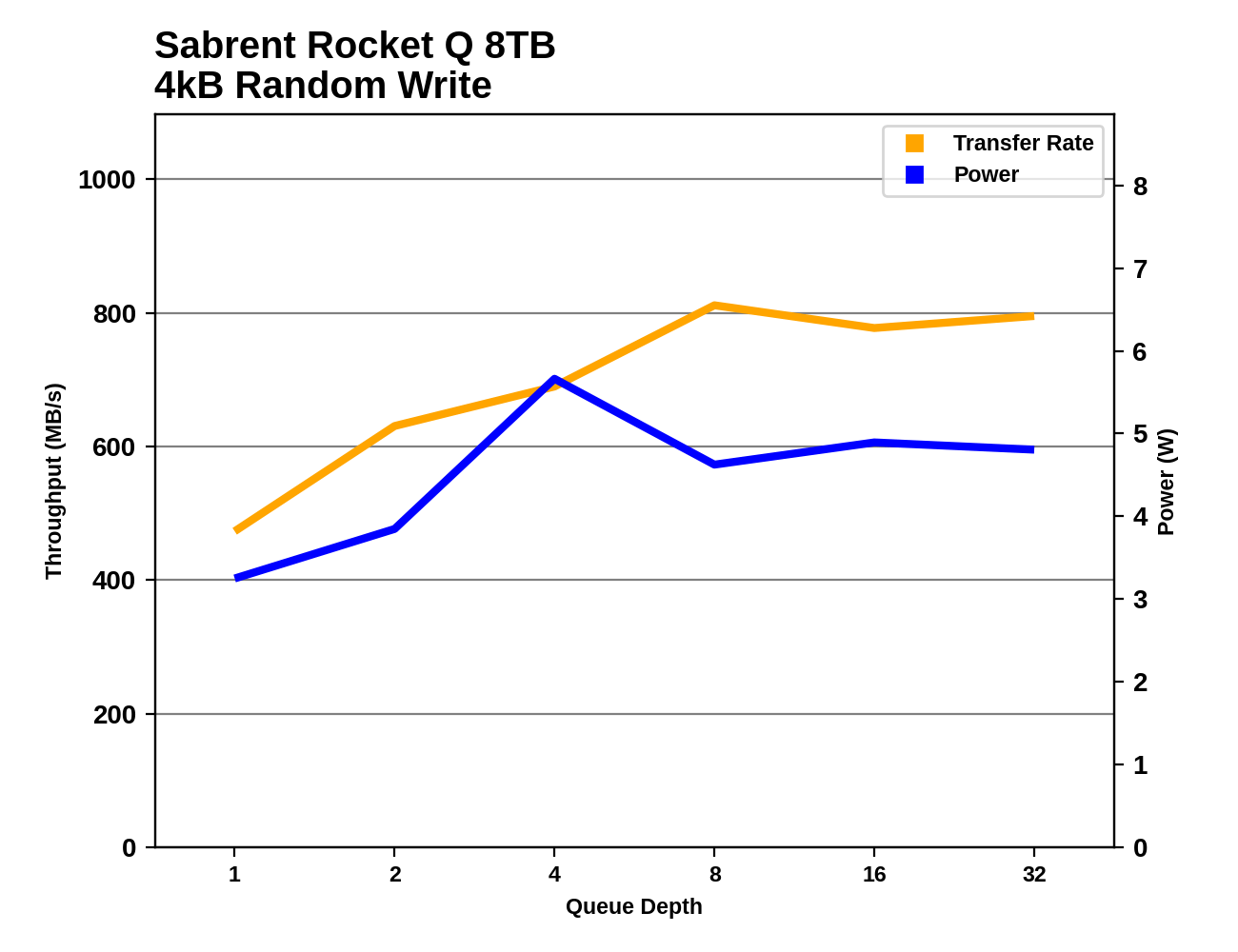

The random write performance of the Rocket Q scales a bit unevenly, but seems to saturate around QD8. Power consumption actually drops after QD4, possibly because the drive is busy enough at that point with random writes that it cuts back on background cleanup work. The Samsung 870 QVO reaches full random write performance at QD4 and steadily maintains that performance through the rest of the test.

Unlike on the random read test, the Samsung 870 QVO comes across as having reasonably low power consumption on the random write test, especially at higher queue depths. The Sabrent Rocket Q's power consumption is still clearly on the high side, especially the spike at QD4 where it seemed to be doing a lot of background work instead of just directing writes to the SLC cache.

150 Comments

Great_Scott - Sunday, December 6, 2020 - link

QLC remains terrible and the price delta between the worst and good drives remains $5.

The most interesting part of this review is how insanely good the performance of the DRAMless Mushkin drive is.

ksec - Friday, December 4, 2020 - link

I really wish a segment of the market would move towards high capacity and low speed like QVO. This is going to be useful for something like a NAS, where the speed is limited to 1Gbps or 2.5Gbps Ethernet.

The cheapest SSD I saw for 2TB was a one off deal from Sandisk at $159. I wonder when we could see that being the norm if not even lower.

Oxford Guy - Friday, December 4, 2020 - link

I wish QLC wouldn't be pushed on us because it ruins the economy of scale for 3D TLC. 3D TLC drives could have been offered in better capacities but QLC is attractive to manufacturers for margin. Too bad for us that it has so many drawbacks.

SirMaster - Friday, December 4, 2020 - link

People said the same thing when they moved from SLC to MLC, and again from MLC to TLC.

emn13 - Saturday, December 5, 2020 - link

There is an issue of decreasing returns, however.

SLC -> MLC allowed for 2x capacity (minus some overhead). I don't remember anybody gnashing their teeth too much at that.

MLC -> TLC allowed for 1.5x capacity (minus some overhead). That's not a bad deal, but it's not as impressive anymore.

TLC -> QLC allows for 1.33x capacity (minus some overhead). That's starting to get pretty slim pickings.

Would you rather have a 4TB QLC drive, or a 3TB TLC drive? That's the trade-off, and I wish sites would benchmark drives at higher fill rates, so it'd be easier to see more real-world performance.

at_clucks - Friday, December 11, 2020 - link

@SirMaster, "People said the same thing when they moved from SLC to MLC, and again from MLC to TLC."

You know you're allowed to change your mind and say no, right? Especially since some transitions can be acceptable, and others less so.

The biggest thing you're missing is that the theoretical difference between TLC and QLC is bigger than the difference between SLC and TLC. Where SLC has to discriminate between 2 levels of charge, TLC has to discriminate between 8, and QLC between 16.

Doesn't this sound like a "you were ok with me kissing you so you definitely want the D"? When TheinsanegamerN insists ATers are "techies" and they "understand technology" I'll have this comment to refer him to.

magreen - Friday, December 4, 2020 - link

Why is that useful for NAS? A hard drive will saturate that network interface.

RealBeast - Friday, December 4, 2020 - link

Yup, my eight drive RAID 6 runs about 750MB/sec for large sequential transfers over SFP+ to my backup array. No need for SSDs and I certainly couldn't afford them -- the 14TB enterprise SAS drives I got were only $250 each in the early summer.

nagi603 - Friday, December 4, 2020 - link

Not if it's a 10G link

leexgx - Saturday, December 5, 2020 - link

If you have enough drives in RAID6 you can come close to saturating a 10gb link (read post above 750MB/s with 8 hdds in RAID6)