The Armari Magnetar X64T Workstation OC Review: 128 Threads at 4.0 GHz, Sustained!

by Dr. Ian Cutress on September 9, 2020 12:00 PM EST

Posted in: Desktop, Systems, AMD, OC Systems, ThreadRipper, 3990X, Armari, Magnetar, X64T, Rendering

Power Consumption, Thermals, and Noise

Whenever we’ve tested big processors in the past, especially those designed for the super high power consumption tasks, they’ve all come with pre-prepared test systems. For the 28-core Intel Xeon W-3175X, rated at 255W, Intel sent a whole system with a 500 W Asetek liquid cooler as well as a second liquid cooler in case we were overclocking the system. When I tested the Core i9-9990XE, a 14-core 5 GHz ‘auction-only’ processor, the system integrator shipped a full 1U server with a custom liquid cooler to deal with the 400W+ thermals.

The same is true of the Armari Magnetar X64T: the custom liquid cooling setup is an integral part of the system offering, and is what allows it to reach the high overclocked frequencies that the company promises.

Armari calls the solution its FWLv2, ‘designed to support a fully unlocked Threadripper 3990X at maximum PBO with all 64 cores sustained up to 4.1 GHz’. The solution consists of a custom monoblock, created in partnership with EKWB, designed specifically to fit both the CPU and the VRM on the ASRock motherboard. There are also additional convective heatsinks on the VRM to aid cooling, as the system also offers airflow through the chassis. This cooling loop hooks up to an EKWB Coolstream 420x45mm triple radiator, three EK-Vardar 140ER EVO fans, and a high-performance cooling pump with a custom performance profile. Armari claims a 3x better flow-rate than the best all-in-one liquid cooling solution on the market, with 200% better cooling performance and a lower noise profile (at a given power) due to the chassis design.

The system also includes two internal 140mm 1500 RPM Noctua fans, for additional airflow over the add-in components, and an angled 80mm low-noise SanAce fan mounted specifically for the memory and the VRM area of the motherboard.

As mentioned on the first page, the 420x45mm radiator is mounted on a swing arm inside the custom chassis. This makes it very easy to open the side of the case and perform maintenance. The chassis is a mix of aluminum inside and a steel frame, and weighs 18 kg / 39.7 lbs, but has handles on the top that hide inside the case, making it very easy to move but also look flush with the design. To be honest, this is a very nice chassis – it’s big, but given what it has to cool and the workstation element of it all, it is more than suitable. Externally, there are no RGB LEDs – a simple light on the top for the power/reset buttons, and blue accents at the front.

As you can probably see inside, there’s no aesthetic to pander to, especially when these systems are only meant to be opened for maintenance. The standard Armari 3-year warranty for the UK (1 year RTB, 2nd/3rd year parts + labor) includes a free full-system checkup and coolant replacement during that period.

With all that said, an overclocked 3990X is a bit of a beast, both in power consumption and cooling requirements. Armari told us going into this review that we’ll likely see a series of different power consumptions based on the workload, especially when it comes to sustained codes.

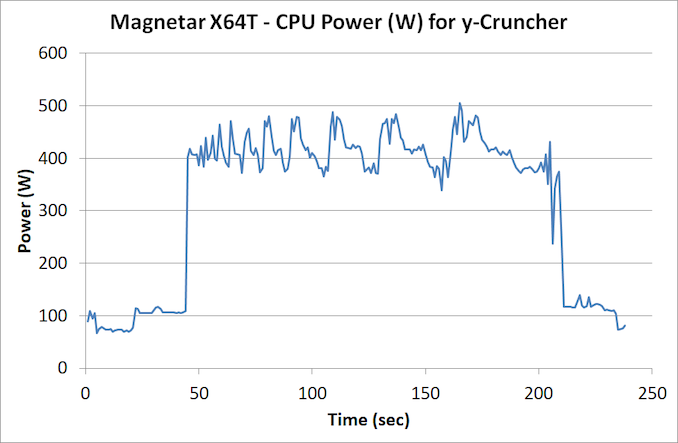

Our normal look into power consumption is typically with our y-cruncher test, which deals solely in integers:

For this test, the CPU drew over 400 W from start to finish, and the peak power was 505 W. CPU temperature averaged 70 ºC and peaked at 82 ºC, with the CPU frequency averaging 4002 MHz.

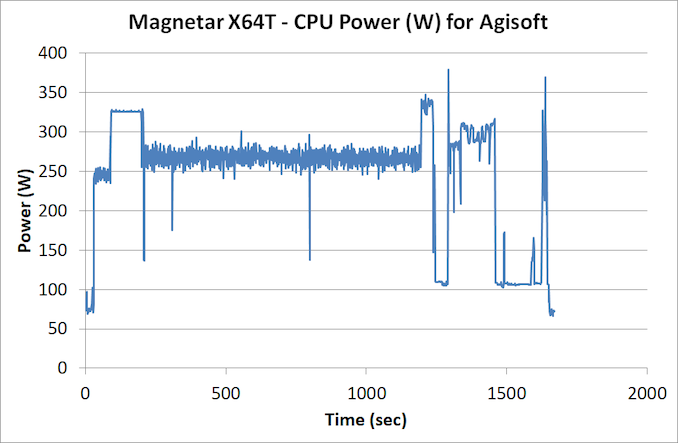

For a less dense workload that involves a mixture of math, we turn to our Agisoft test. This involves converting 2D images to 3D models, and involves four algorithmic stages – some fully multi-threaded, and others that are more serially coded.

The bulk of the test was done at around 270 W, with a single peak at 375 W. CPU temperatures never broke 50ºC.

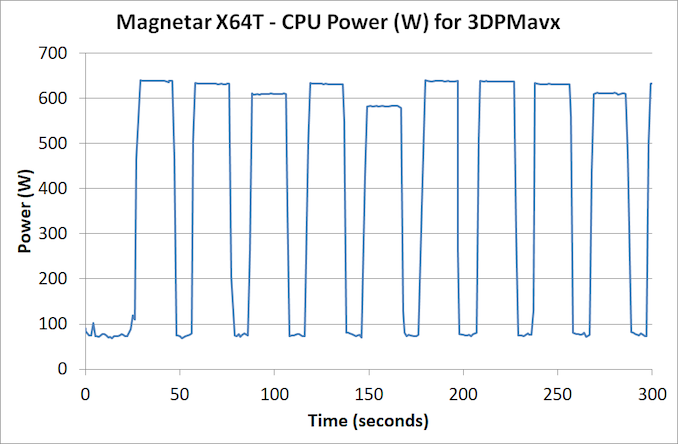

Where we saw the real power was in our 3DPMavx floating point math test. This uses AVX2 like y-cruncher, but in a much denser calculation.

This test runs a loop for 10 seconds, then idles for 10 seconds, hence the up-and-down pattern. There are six different loops, and each one has a different effect on the power based on its instruction density. The test then repeats – I’ve cut the graph at 300 seconds to get a clear view.

The peak power is 640 W – there is no rest when the workload is this heavy, and the CPU very quickly idles down to 70 W where possible. The peak temperature with a workload this heavy, even in small 10 second bursts, was 89ºC. Depending on the exact nature of the instructions, we saw sustained all-core frequencies at 3925 MHz to 4025 MHz.

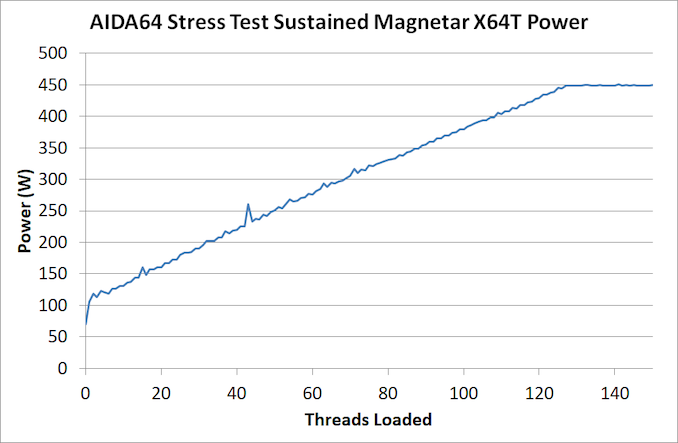

As another angle to this story, I ran a script to plot the power consumption during the AIDA64 integer stress test, and cycled through 0 threads loaded up to 128 threads loaded, with two minutes load followed by two minutes idle.

The power consumption peaks at 450 W, as with the previous integer test, and we can see a slow steady rise from 106 W with one thread loaded up to 64 threads loaded. In this instance, AIDA64 is clever enough to split threads onto separate cores. In a normal 3990X scenario, we’re going to be seeing about 3.2 W per core at full load – in this instance, we’re approaching 6 W per core. For floating point math, where we see those 640 W peaks, it’s closer to 9 W per core. Bearing in mind that some of the consumer Ryzen Zen 2 processors are similarly running 9-12 W per core at full load, this is a bit wild.
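The per-core figures above can be sanity-checked with a quick calculation. The sketch below simply divides the reported package power evenly across the 64 cores, which gives an upper bound, because package power also covers non-core parts of the chip. The function name and the even split are illustrative assumptions, not the method used for the article's numbers.

```python
# Rough upper bound on per-core power: package power split evenly over all cores.
# Assumption: this ignores non-core power, so real per-core figures are lower.

CORES = 64  # Threadripper 3990X core count


def per_core_upper_bound(package_watts: float, cores: int = CORES) -> float:
    """Return package power divided evenly across the cores, in watts."""
    return package_watts / cores


# Peak package power figures quoted in the text.
readings = {
    "AIDA64 integer peak": 450.0,
    "3DPMavx floating point peak": 640.0,
}

for label, watts in readings.items():
    bound = per_core_upper_bound(watts)
    print(f"{label}: {watts:.0f} W package -> at most {bound:.2f} W per core")
```

The bounds come out a little above the quoted "approaching 6 W" and "closer to 9 W" figures, which is what one would expect if part of the package power goes to the rest of the chip rather than the cores.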

Now, given that 640 W for just 10 seconds already got the CPU to 89ºC, the next question is what happens to the system when that load is sustained. Depending on the use case, some software might focus on INT workloads while others prefer FP. I attached a wall power meter to the system and fired up an 8K Blender render, and left the system on for over 10 minutes.

As you can see from the video, the software starts at around 4050 MHz, and slowly decreases over time to keep everything in check to about 3850 MHz. During this time, the system averaged about 900 W at the wall, with ~935 W peak. In this time, the temperature didn’t go above 92ºC, and with an audio meter at a distance of 1 ft, I measured 45-49 dB (compared to an idle of 36 dB).

To pile even more on, I turned to software that could load up the overclocked processor as well as the Quadro RTX 6000 in the system. TheaRender is our benchmark of choice here – it’s a physically based global illumination render that can find how many samples per pixel it can calculate in any given time. It works on CPU and GPU simultaneously.

This pushed the system hard. The benchmark can take up to 20 minutes or more, and the wall meter peaked at 1167 W for the full system. This was the one and only time I heard the cooling fans kick into high gear, at 52-55 dB. Thermals on the CPU were measured at 96 ºC, which seems to be a pseudo-ceiling. Even with that said, the processor was still running at 3850 MHz all-core.

I was running the system in a 100 sq ft room, and so pushing 1000 W for a long time without adequate air flow will warm up the room. I left my benching scripts on overnight, especially the per-thread power loading, to notice the next morning the room was warm. For any users planning to run sustained workloads in a small office, take note. Even though the system is much quieter than other workstation-class systems I’ve tested, should there be an option to place the system in an air-conditioned environment external to the work desk, this might be preferable.
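For anyone sizing ventilation or air conditioning, it can help to convert wall power into a heat load. The sketch below uses the standard conversion of roughly 3.412 BTU/h per watt and assumes that essentially all of the power drawn at the wall ends up as heat in the room. The eight-hour run length is a hypothetical stand-in for "overnight", not a measured figure.

```python
# Convert sustained wall power into a room heat load and total energy.
# Assumption: all electrical power drawn at the wall is released as heat.

BTU_PER_HOUR_PER_WATT = 3.412  # 1 W is approximately 3.412 BTU/h


def heat_btu_per_hour(wall_watts: float) -> float:
    """Heat load in BTU/h for a given sustained wall power in watts."""
    return wall_watts * BTU_PER_HOUR_PER_WATT


def energy_kwh(wall_watts: float, hours: float) -> float:
    """Energy drawn (and dissipated as heat) over a run, in kWh."""
    return wall_watts * hours / 1000.0


wall_watts = 1000.0  # sustained load in the ballpark discussed in the text
hours = 8.0          # hypothetical overnight run length

print(f"Heat load: {heat_btu_per_hour(wall_watts):.0f} BTU/h")
print(f"Energy over {hours:.0f} h: {energy_kwh(wall_watts, hours):.1f} kWh")
```

The resulting BTU/h figure can be compared against the rated capacity of whatever cooling the office already has.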

On stability, there was nothing to report from any of our testing: there wasn't a single hint of instability. On speaking with Armari, the company said that this is down to AMD's own internal DVFS when implementing a high-level PBO-based overclock. I was told that because this system was built from the ground up to accommodate this power, along with the custom tweaks, and because AMD's voltage and frequency tracking metrics always ensure a stable system (as long as the temperature and BIOS are managed), they can build 10, 20, or 50 systems in a row and not experience any issues. All systems are also pre-tested extensively with customer-like workloads before shipping.

96 Comments


WaltC - Wednesday, September 9, 2020 - link

Very impressive box!...;) Great write-up, too! Great job, Ian--your steady diet of metal & silicon is really producing obvious positive results! I would definitely want to go with a different motherboard, though. Even the GB x570 Aorus Master has received ECC ram support with the latest bios featuring the latest couple of AGESA's from AMD--so it seems like a shoe-in for a TR motherboard. I agree it's kind of an odd exclusion from AMD for TR. But, I suppose if you want ECC support and lots more ram support you'll need to step up to EPYC and its associated motherboards. This is a real pro-sumer offering and the price--well, everything about it--seems right on the money, imo. I really like the three-year warranty and the included service to change out coolant fluid every three years--very nice! If I used water cooling that is the only kind of fluid I would want to use--some of these el cheapo concoctions will eat up a radiator in a year or less! Enjoyed the article--thanks again...;)

Makaveli - Wednesday, September 9, 2020 - link

"Ideally AMD would need a product that pairs the 8-channel + ECC support with a processor overclock."

But why?

I maybe wrong here but when you are spending 10k+ on a build isn't stability more important than overclocking?

MenhirMike - Wednesday, September 9, 2020 - link

Overclocking doesn't have to be unstable - and for some workloads, the extra performance is worth the effort to find the limits and beef up the cooling solution.

Ian Cutress - Thursday, September 10, 2020 - link

Had a chat with Armari. The system was built with the OC requirements in mind, and customized to support that. They're using a PBO-based overclock as well, and they've been really impressed with how AMD's latest variation of PBO can optimize the DVFS of the chip to keep the system stable regardless of workload (as long as heat is managed). In my testing, there was zero instability. I was told by Armari that they can build 10, 20, or 50 systems in a row without having any stability issues coming from the processor, and that binning the CPU is almost virtually non-existent.

Everett F Sargent - Wednesday, September 9, 2020 - link

So, such a waste of power, time and money.

Anyone can build TWO 3990X systems for less then half the price AND less then half the power consumption with each of them easily getting ~90% of the benchmarks.

That is with a top of the line titanium PSU, top of the line MB, top of the line 2TB SSD, top of the line Quadro RTX 4000 (oh damn paying 5X for the 6000 for only less then 2X the performance, what a b1tch, not), top of the line ... everything.

So ~1.8X of the total performance at ~1.8X the cost (sale pricing for all components otherwise make that ~1.9X the cost) and ~0.9X of the total power consumption.

But, you say, an ~15KUS system pays for itself, in less then 1E−44 seconds, even. Magnetarded indeed. /:

TallestGargoyle - Thursday, September 10, 2020 - link

That's a pretty disingenuous stance. Yes, you can split the performance across multiple systems, likely for cheaper, but that ignores general infrastructure requirements, like having multiple locations to set up a system in, having enough power outlets to run them all, being capable of splitting the workload across multiple systems.

Not every workload can support distributed processing. Not every office has the space for a dedicated rack of systems churning away. Not every workload can sufficiently run on a half-performing GPU, even if the price is only a fifth of the one used here.

If your only metric is price-to-performance, then yes, this isn't the workstation for you. But this clearly isn't hitting a price-to-performance metric. It's focusing on the performance.

Tomatotech - Thursday, September 10, 2020 - link

Something something chickens and oxen.

Or was it something something sports cars and 49-ton trucks? I’ll go yell at clouds instead.

Spunjji - Friday, September 11, 2020 - link

Perish the thought that someone might order one of these with a Quadro 4000 and obviate his biggest gripe (not that it would solve the potential problem of fitting a dataset into 8GB of RAM instead of 24GB)

Everett F Sargent - Saturday, September 12, 2020 - link

Well there is that 20% VAT that pushes the actual system price to ~$17K US so dividing by two ~$8.5K per system minus the cost of a RTX 4000 at ~1K US gives one ~$6K US sans graphics card (remember I said build two systems with RTX 4000 at ~$7K US).

So I have about ~$2.5K per system for a graphics card. Which easily gives one RTX 5000 per build at ~$2K US. Which is 2/3 of the RTX 6000.

But, if the GPU benchmarks are all eventually CPU bound, which appears to be the case here in this review, then yes I get two systems at ~$8K US or ~$16K US for two systems, in other words, I get to build a 3900X system for about ~$1K US to boot..

So no, I would rather see benchmarks at 4K, mind you (as these were at standard HD) with different GPU options. Basically, I would want to use my money wisely (assuming at a minimum a 3990X CPU at ~$4K US as the entry point).

Therefore, this review is totally useless to anyone interested in efficiency (which should normally include everyone) and costs (ditto).

TallestGargoyle - Monday, September 14, 2020 - link

That still doesn't take into account the issues I brought up initially with owning and running two systems.