Storage Matters: Why Xbox and Playstation SSDs Usher In A New Era of Gaming

by Billy Tallis on June 12, 2020 9:30 AM EST- Posted in

- SSDs

- Storage

- Microsoft

- Sony

- Consoles

- NVMe

- Xbox Series X

- PlayStation 5

What's Necessary to Get Full Performance Out of a Solid State Drive?

The storage hardware in the new consoles opens up new possibilities, but on its own the hardware cannot revolutionize gaming. Implementing the new features enabled by fast SSDs still requires software work from the console vendors and game developers. Extracting full performance from a high-end NVMe SSD requires a different approach to IO than methods that work for hard drives and optical discs.

We don't have any next-gen console SSDs to play with yet, but based on the few specifications released so far we can make some pretty solid projections about their performance characteristics. First and foremost, hitting the advertised sequential read speeds will require keeping the SSDs busy with a lot of requests for data. Consider one of the fastest SSDs we've ever tested, the Samsung PM1725a enterprise SSD. It's capable of reaching over 6.2 GB/s when performing sequential reads in 128kB chunks. But asking for those chunks one at a time only gets us 680 MB/s. This drive requires a queue depth of at least 16 to hit 5 GB/s, and at least QD32 to hit 6 GB/s. Newer SSDs with faster flash memory may not require queue depths that are quite so high, but games will definitely need to make more than a few requests at a time to keep the console SSDs busy.
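As a rough sanity check on those numbers, the queue depth 1 result implies a per-request latency, and that latency sets a floor on how many requests must be in flight to reach a given throughput. The sketch below uses only the PM1725a figures quoted above. It assumes latency stays at its QD1 value under load, which is optimistic, so treat the results as lower bounds rather than predictions.

```python
import math

CHUNK_BYTES = 128 * 1024   # 128kB sequential read size from the text
QD1_THROUGHPUT = 680e6     # bytes/s measured with one request at a time

# Per-request latency implied by the QD1 result (roughly 190 microseconds).
latency_s = CHUNK_BYTES / QD1_THROUGHPUT


def min_queue_depth(target_bytes_per_s: float) -> int:
    """Smallest queue depth that could hit the target if latency stayed at the QD1 value."""
    return math.ceil(target_bytes_per_s * latency_s / CHUNK_BYTES)


for target in (5e9, 6e9):
    print(f"{target / 1e9:.0f} GB/s needs QD >= {min_queue_depth(target)}")
```

The measured drive needed roughly twice these queue depths (QD16 and QD32), which is consistent with per-request latency rising as the drive gets busier.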

The consoles cannot afford to waste too much CPU power on communicating with the SSDs, so they need a way for just one or two threads to manage all of the IO requests and still have CPU time left over for those cores to do something useful with the data. That means the consoles will have to be programmed using asynchronous IO APIs, where a thread issues a read request to the operating system (or IO coprocessor) but goes back to work while the request is being processed. And the thread will have to check back later to see if a request has been fulfilled. In the hard drive days, such a thread would go off and do several non-storage tasks while waiting for a read operation to complete. Now, that thread will have to spend that time issuing several more requests.
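To make the "keep several requests in flight" idea concrete, here is a minimal sketch. Python's standard library has no portable asynchronous file read, so this swaps in a thread pool as a stand-in for the operating system's request queue; a console engine would use a true asynchronous API driven by one or two threads instead. The function name and its parameters are ours, chosen for illustration, and `os.pread` is only available on Unix-like systems.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def read_file_pipelined(path: str, chunk_size: int = 128 * 1024,
                        queue_depth: int = 16) -> bytes:
    """Read a whole file while keeping up to `queue_depth` reads in flight."""
    size = os.path.getsize(path)
    offsets = range(0, size, chunk_size)
    fd = os.open(path, os.O_RDONLY)
    try:
        with ThreadPoolExecutor(max_workers=queue_depth) as pool:
            # os.pread takes an explicit offset, so workers never share a file position.
            chunks = pool.map(lambda off: os.pread(fd, chunk_size, off), offsets)
            # map() returns results in submission order, so the chunks join correctly.
            return b"".join(chunks)
    finally:
        os.close(fd)
```

The pool size plays the role of the queue depth: no more than `queue_depth` reads are outstanding at once, and a new one starts as soon as an earlier one completes.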

In addition to keeping queue depths up, obtaining full speed from the SSDs will require doing IO in relatively large chunks. Trying to hit 5.5 GB/s with 4kB requests would require handling about 1.4M IOs per second, which would strain several parts of the system with overhead. Fortunately, games tend to naturally deal with larger chunks of data, so this requirement isn't too much trouble; it mainly means that many traditional measures of SSD performance are irrelevant.
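The arithmetic behind that figure is simple enough to check directly. The helper below is just throughput divided by request size; the list of request sizes other than 4kB is our own choice for comparison.

```python
def iops_required(target_bytes_per_s: float, request_bytes: int) -> float:
    """IO operations per second needed to sustain a throughput at a fixed request size."""
    return target_bytes_per_s / request_bytes


TARGET = 5.5e9  # bytes/s, the 5.5 GB/s figure from the text

for size_kib in (4, 64, 128, 1024):
    iops = iops_required(TARGET, size_kib * 1024)
    print(f"{size_kib:>5} kB requests: {iops:>12,.0f} IOPS")
```

With 4kB requests the result lands between 1.3 and 1.4 million IOPS, depending on whether a kB is counted as 1000 or 1024 bytes. At 128kB the same throughput needs only about 42k IOPS.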

Microsoft has said extremely little about the software side of the Xbox Series X storage stack. They have announced a new API called DirectStorage. We don't have any description of how it works or differs from existing or previous console storage APIs, but it is designed to be more efficient:

DirectStorage can reduce the CPU overhead for these I/O operations from multiple cores to taking just a small fraction of a single core.

The most interesting bit about DirectStorage is that Microsoft plans to bring it to Windows, so the new API cannot be relying on any custom hardware and it has to be something that would work on top of a regular NTFS filesystem. Based on our experiences testing fast SSDs under Windows, they could certainly use a lower-overhead storage API, and it would be applicable to far more than just video games.

Sony's storage API design is probably intertwined with their IO coprocessors, but it's unlikely that game developers have to be specifically aware that their IO requests are being offloaded. Mark Cerny has stated that games can bypass normal file IO, and he elaborated a bit in an interview with Digital Foundry:

There's low level and high level access and game-makers can choose whichever flavour they want - but it's the new I/O API that allows developers to tap into the extreme speed of the new hardware. The concept of filenames and paths is gone in favour of an ID-based system which tells the system exactly where to find the data they need as quickly as possible. Developers simply need to specify the ID, the start location and end location and a few milliseconds later, the data is delivered. Two command lists are sent to the hardware - one with the list of IDs, the other centring on memory allocation and deallocation - i.e. making sure that the memory is freed up for the new data.

Getting rid of filenames and paths doesn't win much performance on its own, especially since the system still has to support a hierarchical filesystem API for the sake of older code. The real savings come from being able to specify the whole IO procedure in a single step instead of the application having to manage parts like the decompression and relocating the data in memory—both handled by special-purpose hardware on the PS5.

For a more public example of what a modern high-performance storage API can accomplish, it's worth looking at the io_uring asynchronous API added to Linux last year. We used it on our last round of enterprise SSD reviews to get much better throughput and latency out of the fastest drives available. Where old-school Unix style synchronous IO topped out at a bit less than 600k IOPS on our 36-core server, io_uring allowed a single core to hit 400k IOPS. Even compared to the previous asynchronous IO APIs in Linux, io_uring has lower overhead and better scalability. The API's design has applications communicating with the operating system in a very similar manner to how the operating system communicates with NVMe SSDs: pairs of command submission and completion queues that are accessible by both parties. Large batches of IO commands can be submitted with at most one system call, and no system calls are needed to check for command completion. That's a big advantage in a post-Spectre world where system call overhead is much higher. Recent experimentation has even shown that the io_uring design allows for shader programs running on a GPU to submit IO requests with minimal CPU involvement.
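The paired-queue idea can be illustrated with a toy model. To be clear, this is not the io_uring API and performs no real IO: the class, its method names, and the "pretend the read finished" step are all invented here purely to show where the system calls do and do not occur.

```python
from collections import deque


class ToyRing:
    """Toy model of a paired submission/completion queue (not the real io_uring API)."""

    def __init__(self):
        self.submission = deque()  # filled by the application, drained by the "kernel"
        self.completion = deque()  # filled by the "kernel", drained by the application
        self.syscalls = 0

    def queue_read(self, request_id, offset, length):
        # Placing an entry in shared memory costs no system call.
        self.submission.append((request_id, offset, length))

    def submit(self):
        # One system call hands the whole batch to the kernel.
        self.syscalls += 1
        while self.submission:
            request_id, _offset, length = self.submission.popleft()
            self.completion.append((request_id, length))  # pretend the read finished

    def reap(self):
        # Completions are read straight out of shared memory: no system call.
        done = list(self.completion)
        self.completion.clear()
        return done


ring = ToyRing()
for i in range(32):
    ring.queue_read(i, offset=i * 131072, length=131072)
ring.submit()
completed = ring.reap()
print(len(completed), "completions after", ring.syscalls, "system call(s)")
```

A synchronous read loop would have paid one system call per request; here 32 requests cost one.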

Most of the work relating to io_uring on Linux is too recent to have influenced console development, but it still illustrates a general direction that the industry is moving toward, driven by the same needs to make good use of NVMe performance without wasting too much CPU time.

Keeping Latency Under Control

While game developers will need to put some effort into extracting full performance from the console SSDs, there is a competing goal. Pushing an SSD to its performance limits causes latency to increase significantly, especially if queue depths go above what is necessary to saturate the drive. This extra latency doesn't matter if the console is just showing a loading screen, but next-generation games will want to keep the game running interactively while streaming in large quantities of data. Sony has outlined their plan for dealing with this challenge: their SSD implements a custom feature to support 6 priority levels for IO commands, allowing large amounts of data to be loaded without getting in the way when a more urgent read request crops up. Sony didn't explain much of the reasoning behind this feature or how it works, but it's easy to see why they need something to prioritize IO.

Loading a new world in 2.25 seconds as Ratchet & Clank fall through an inter-dimensional rift

Mark Cerny gave a hypothetical example of when multiple priority levels are needed: when a player is moving into a new area, lots of new textures may need to be loaded, at several GB per second. But since the game isn't interrupted by a loading screen, stuff keeps happening, and an in-game event (e.g. a character getting shot) may require data like a new sound effect to be loaded. The request for that sound effect will be issued after the requests for several GB of textures, but it needs to be completed before all the texture loading is done because stuttering sound is much more noticeable and distracting than a slight delay in the gradual loading of fresh texture data.

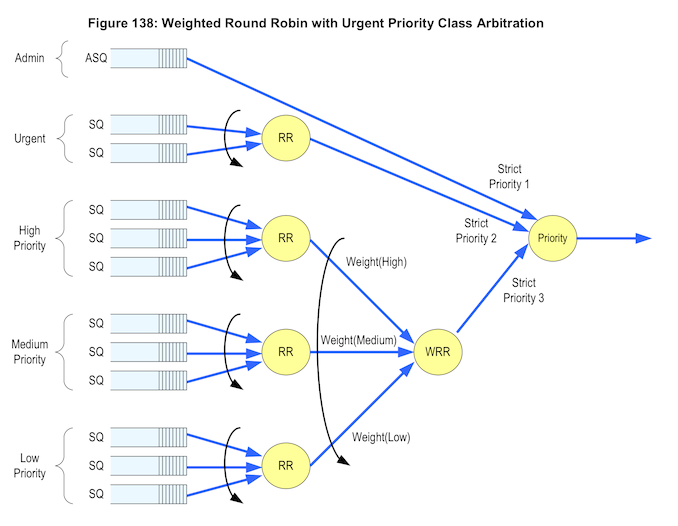

But the NVMe standard already includes a prioritization feature, so why did Sony develop their own? Sony's SSD will support 6 priority levels, and Mark Cerny claims that the NVMe standard only supports "2 true priority levels". A quick glance at the NVMe spec shows that it's not that simple:

The NVMe spec defines two different command arbitration schemes for determining which queue will supply the next command to be handled by the drive. The default is a simple round-robin balancing that treats all IO queues equally and leaves all prioritization up to the host system. Drives can also optionally implement the weighted round robin scheme, which provides four priority levels (not counting one for admin commands only). But the detail Sony is apparently concerned with is that among those four priority levels, only the "urgent" class is given strict priority over the other levels. Strict prioritization is the simplest form of prioritization to implement, but such methods are a poor choice for general-purpose systems. In a closed specialized system like a game console it's much easier to coordinate all the software that's doing IO in order to avoid deadlocks and starvation. Much of the IO done by a game console also comes with natural timing requirements.
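The difference between strict and weighted priority is easier to see in a small simulation. The sketch below is a simplification of the weighted round robin scheme described above: it uses one queue per class, leaves out the admin class and arbitration bursts, and assumes every weight is at least 1. The weights and command names are made up for illustration.

```python
from collections import deque


def arbitrate(queues, weights):
    """Yield commands in the order a toy weighted-round-robin arbiter would pick them.

    queues:  dict mapping "urgent", "high", "medium", "low" to deques of commands
    weights: dict giving how many commands each non-urgent class may issue per round
    """
    while any(queues.values()):
        for level in ("high", "medium", "low"):
            for _ in range(weights[level]):
                # Strict priority: drain anything urgent before every weighted pick.
                while queues["urgent"]:
                    yield queues["urgent"].popleft()
                if not queues[level]:
                    break
                yield queues[level].popleft()


queues = {
    "urgent": deque(["U1"]),
    "high": deque(["H1", "H2", "H3"]),
    "medium": deque(["M1", "M2"]),
    "low": deque(["L1", "L2"]),
}
order = list(arbitrate(queues, {"high": 2, "medium": 1, "low": 1}))
print(order)
```

The urgent command always goes first, while the other three classes merely get different shares of each round. Low-priority commands are slowed down but never starved, which is exactly why only the urgent class counts as a "true" priority level in the strict sense.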

This much attention being given to command arbitration comes as a bit of a surprise. The conventional wisdom about NVMe SSDs is that they are usually so fast that IO prioritization is unnecessary, and wasting CPU time on re-ordering IO commands is just as likely to reduce overall performance. In the PC and server space, the NVMe WRR command arbitration feature has been largely ignored by drive manufacturers and OS vendors—a partial survey of our consumer NVMe SSD collection only turned up two brands that have enabled this feature on their drives. So when it comes to supporting third-party SSD upgrades, Sony cannot depend on using the WRR command arbitration feature. This might mean they also won't bother to use it even when a drive has this feature, instead relying entirely on their own mechanism managed by the CPU and IO coprocessors.

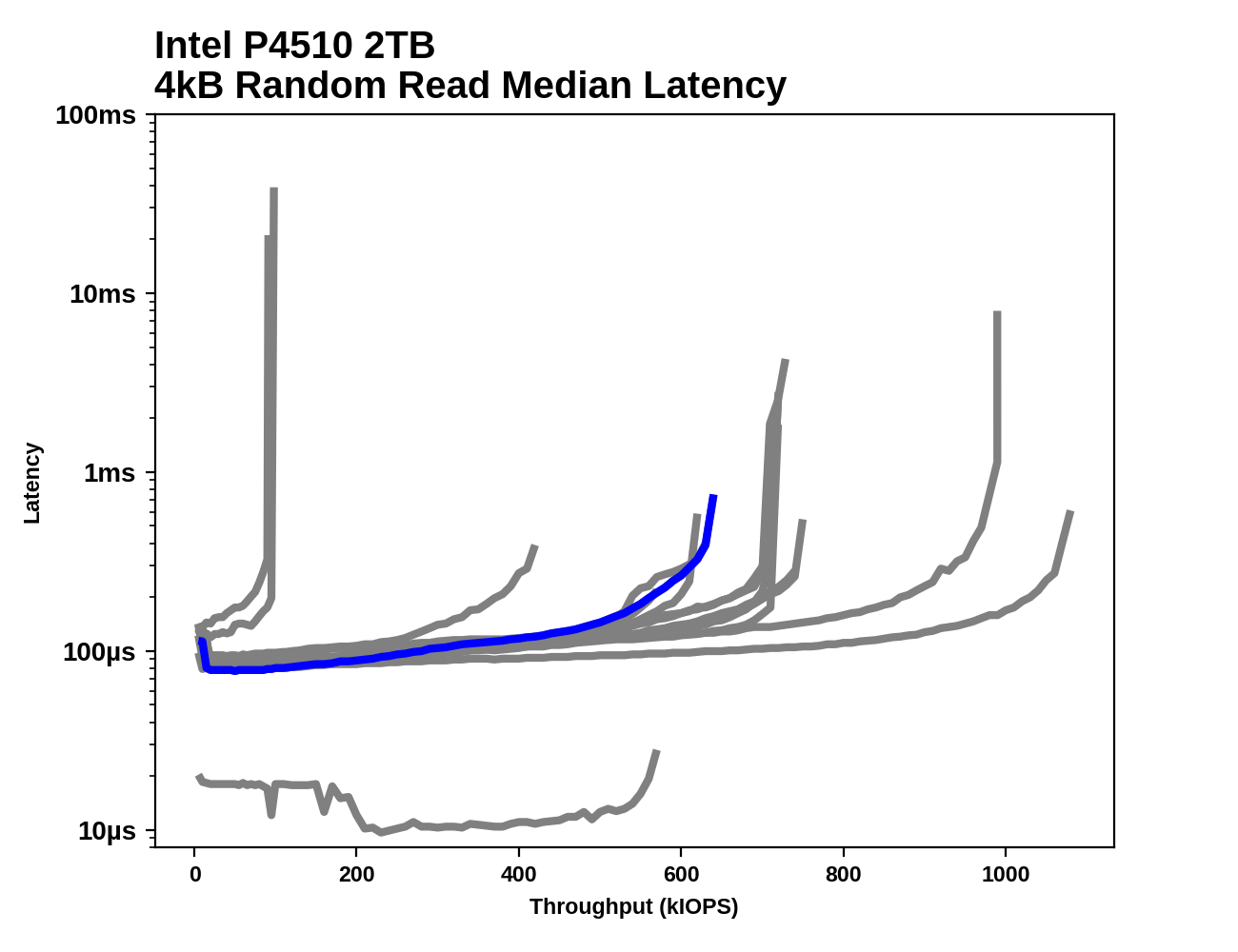

Sony says that because off-the-shelf NVMe drives lack six priority levels, those drives will need slightly higher raw performance to match the real-world performance of Sony's drive: Sony will have to emulate the six priority levels on the host side, using some combination of CPU and IO coprocessor work. Based on our observations of enterprise SSDs (which are designed with more of a focus on QoS than consumer SSDs), holding 15-20% of performance in reserve typically keeps latency plenty low (about 2x the latency of an idle SSD) without any other prioritization mechanism, so we project that drives capable of 6.5GB/s or more should have no trouble at all.


Latency spikes as drives get close to their throughput limit
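The 6.5GB/s projection follows from the reserve figures with one line of arithmetic. This sketch assumes the 15-20% reserve is measured against the drive's raw throughput and that the game needs the PS5's 5.5GB/s; the function name is ours.

```python
def raw_speed_needed(effective_gb_s: float, reserve_fraction: float) -> float:
    """Raw drive throughput needed so that `effective_gb_s` leaves the given fraction unused."""
    return effective_gb_s / (1.0 - reserve_fraction)


for reserve in (0.15, 0.20):
    print(f"{reserve:.0%} reserve: {raw_speed_needed(5.5, reserve):.2f} GB/s raw")
```

A 15% reserve works out to just under 6.5GB/s, and a 20% reserve to just under 6.9GB/s.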

It's still a bit of a mystery what Sony plans to do with so many priority levels. We can certainly imagine a hierarchy of several priority levels for different kinds of data: Perhaps game code is the highest priority to load since at least one thread of execution will be completely stalled while handling a page fault, so this data is needed as fast as possible (and ideally should be kept in RAM full-time rather than loaded on the fly). Texture pre-fetching is probably the lowest priority, especially fetching higher-resolution mipmaps when a lower-resolution version is already in RAM and usable in the interim. Geometry may be a higher priority than textures, because it may be needed for collision detection and textures are useless without geometry to apply them to. Sound effects should ideally be loaded with latency of at most a few tens of milliseconds. Sony's patent mentions giving higher priority to IO done using the new API, on the theory that such code is more likely to be performance-critical.

Planning out six priority classes of data for a game engine isn't too difficult, but that doesn't mean it will actually be useful to break things down that way when interacting with actual hardware. Recall that the whole point of prioritization and other QoS methods is to avoid excess latency. Excess latency happens when you give the SSD more requests than it can work on simultaneously; some of the requests have to sit in the command queue(s) waiting their turn. If there are a lot of commands queued up, a new command added at the back of the line will have a long time to wait. If a game sends the PS5 SSD new requests at a rate totaling more than 5.5GB/s, a backlog will build up and latency will keep growing until the game stops requesting data more quickly than the SSD can deliver. When the game is requesting data at much less than 5.5GB/s, every time a new read command is sent to the SSD, it will start processing that request almost immediately.
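A simple fluid model shows how quickly that backlog turns into latency. It assumes the drive delivers a constant 5.5GB/s and that commands are served strictly in order, both simplifications; the function and its parameters are illustrative only.

```python
def queue_wait_ms(request_rate_gb_s: float, drive_rate_gb_s: float,
                  seconds: float) -> float:
    """Wait faced by a newly queued command after `seconds` of sustained requests."""
    # Backlog only builds when requests arrive faster than the drive can serve them.
    backlog_gb = max(0.0, request_rate_gb_s - drive_rate_gb_s) * seconds
    return backlog_gb / drive_rate_gb_s * 1000.0


for rate in (3.0, 5.5, 6.0, 8.0):
    print(f"requesting {rate} GB/s for 1 s: new command waits "
          f"{queue_wait_ms(rate, 5.5, 1.0):.0f} ms")
```

Under these assumptions, overshooting by just 0.5GB/s for one second leaves a new request waiting roughly 90 ms, far more than the few tens of milliseconds a sound effect can tolerate.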

So what's most important is limiting the number of requests that can pile up in the SSD's queues, and once that problem is solved, there's not much need for further prioritization. It should only take one throttled queue to hold all the background, latency-insensitive IO commands; everything else can then be handled with low latency.
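Here is a minimal sketch of that single-throttled-queue approach, under the assumption that capping the number of background commands in flight is enough to bound latency. The class, its methods, and the limit of four are hypothetical; this is not a description of how Sony's or Microsoft's storage stack works.

```python
from collections import deque


class ThrottledDispatcher:
    """Caps in-flight background reads so urgent reads never wait behind a deep queue."""

    def __init__(self, max_background_in_flight: int):
        self.limit = max_background_in_flight
        self.background_waiting = deque()  # held on the host, not yet sent to the drive
        self.background_in_flight = 0
        self.sent_to_drive = []            # stand-in for the SSD's command queue

    def submit_background(self, command):
        self.background_waiting.append(command)
        self._fill()

    def submit_urgent(self, command):
        # Urgent commands skip the throttle; at most `limit` background commands precede them.
        self.sent_to_drive.append(command)

    def complete_background(self):
        # Called when the drive finishes a background command, freeing a slot.
        self.background_in_flight -= 1
        self._fill()

    def _fill(self):
        while self.background_waiting and self.background_in_flight < self.limit:
            self.sent_to_drive.append(self.background_waiting.popleft())
            self.background_in_flight += 1


dispatcher = ThrottledDispatcher(max_background_in_flight=4)
for i in range(100):
    dispatcher.submit_background(f"texture{i}")
dispatcher.submit_urgent("gunshot.wav")
print(dispatcher.sent_to_drive)
```

Even with 100 texture reads requested first, the sound effect reaches the drive with only four commands ahead of it. The remaining 96 wait on the host side, where they cost nothing in latency.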

Closing Thoughts

The transition of console gaming to solid state storage will change the landscape of video game design and development. A dam is breaking, and game developers will soon be free to ignore the limitations of hard drives and start exploring the possibilities of fast storage. It may take a while for games to fully utilize the performance of the new console SSDs, but there will be many tangible improvements available at launch.

The effects of this transition will also spill over into the PC gaming market, exerting pressure to help finally push hard drives out of low-end gaming PCs, and allowing gamers with high-end PCs to start enjoying more performance from their heretofore underutilized fast SSDs. And changes to the Windows operating system itself are already underway because of these new consoles.

Ultimately, it will be interesting to see whether the novel parts of the new console storage subsystems end up being a real advantage that influences the direction of PC hardware development, or if they end up just being interesting quirks that get left in the dust as PC hardware eventually overtakes the consoles with superior raw performance. NVMe SSDs arrived at the high end of the consumer market five years ago. Now, they're crossing a tipping point and are well on the way to becoming the mainstream standard for storage.

200 Comments


almighty15 - Sunday, June 14, 2020 - link

It should read "By the time it ships, the PS5 SSD's read performance will be unremarkable – matched by other high-end SSDs ON PAPER"

In terms of real world performance a 5Gb/s NVMe drive can't beat a 550Mb/s SATA III SSD and yet Anandtech somehow think they'll compete with console?

Eliadbu - Saturday, June 13, 2020 - link

This change might in few years make games to require SSD at certain speed as a base requirement. I'm for it since I feel my NVME SSDs are not helpful in gaming more than my SATA ssd. In many cases you need the consoles to make a move for PCs to enjoy it, I see this as definitely a case of such.

vol.2 - Saturday, June 13, 2020 - link

So, is the PS5 officially the ugliest console ever created?

wrkingclass_hero - Sunday, June 14, 2020 - link

It's up there.

wolfesteinabhi - Saturday, June 13, 2020 - link

with main cpu/gpu being very identical in both camps they are running out of ways to differentiate. storage is important though .. but i wish they would stick to some common standard ....and over time we get games that can be played on either of the consoles or they can be htpcs in itself that can do a lot more from their h/w than just games .... given current hardware they have ... i feel its a bit wasted when they are only limited to games(that too a very limited amount..especially Sony/PS)

almighty15 - Sunday, June 14, 2020 - link

I don't normally comment on article like this but feel I have due to the tone you have regarding PC 'catching up' to consoles.

That is a long way away, I'm talking years! As I explained on Twitter a 5Gb/s NVMe drives loads games no faster then a 550Mb/s SATA III SSD, and while some of that is down to having to cater to machines that still run mechanical drives most of it is due to Windows just having a file and I/O systems that decades old.

If we want to send a texture to a GPU on PC this is the 'hardware' path it has to take:

SSD > system bus > Chipset (South bridge) > system bus > CPU > system bus > main RAM > system bus > CPU (North bridge) > PCIEX bus > VRAM

To get a texture to GPU memory on PlayStation 5 it goes:

SSD > system bus > I/O controller > system bus > VRAM

On PC these system buses and chips all run and communicate with each other at different speeds which causes bottlenecks as data is moved through all that hardware.

On PS5 the path is so much straight forward and the I/O block runs at the same speed as the CPU clock so it's all super faster and efficient.

And then on PC there's the software side of it, which again is a HUGE problem that Microsoft can't fix with a little Windows update. The hardware on PC can not directly talk to other hardware, meaning your GPU can not directly talk to the storage driver and ask it for a texture, it has to ask Windows, who then ask the chipset driver, who then asks the storage driver for a file.......

It requires a complete RE-WRITE of Windows storage drivers and kernel which is a process that takes years as they have to send any new ideas over to developers and software owners so they can do their own testing and plan patching their existing software.

When Apple updated their file system for SSD it took them 3 years! And they have way less legacy hardware and hardware configurations to worry about than Microsoft.

There is currently nothing in the develop changes about a new version of Windows or a new file system in the works meaning that it's at least 3-4 years away.

This article doesn't even scratch the surface as to why storage and I/O is so slow and bottlenecked on PC and make it out to be like it's a simple fix to get PC SSD's performing like consoles.

PC's will catch up, they always do, but do not let articles like this one trick you in to thinking it's a quick fix as it most certainly isn't.

eddman - Sunday, June 14, 2020 - link

As mentioned in this very article, they have this new DirectStorage API on XSX and plan to bring it to windows. They haven't released any specific details but it might even be some sort of a direct GPU/VRAM-to-storage solution.

Whatever it is, it's surely bound to improve the file transfer performance, and since it'd be part of the Directx suite, developers should have an easy time taking advantage of it.

Billy Tallis - Sunday, June 14, 2020 - link

Your description of how the data paths differ between a standard PC and the PS5 is wildly inaccurate.

eddman - Sunday, June 14, 2020 - link

Yea, the path is wrong. For one, RAM is not connected to the CPU through the system bus. It's something like this for desktops, IINM:

1. Intel/Ryzen (SSD connected to the chipset):

SSD > PCIe > Chipset > system bus (DMI/PCIe x4) > CPU > memory channel > RAM > memory channel > CPU(*) > PCIe x16 > VRAM

2. Ryzen (SSD connected directly to the CPU):

SSD > PCIe > CPU > memory channel > RAM > memory channel > CPU(*) > PCIe x16 > VRAM

(*) at this step, perhaps the CPU has already done the I/O calculations, so the data goes directly from the system RAM (through the memory controller and then PCIe x16) to the VRAM (without wasting CPU cycles)?

(I don't know that much about hardware at such low levels, so please correct me if I'm wrong.)

With a GPU-to-storage direct access, it should look like these:

1. SSD > PCIe > Chipset > system bus (DMI/PCIe x4) > CPU(!) > PCIe x16 > VRAM

2. SSD > PCIe > CPU(!) > PCIe x16 > VRAM

The second option doesn't look much different from the PS5.

(!) just passing through CPU's System Agent/Infinity Fabric with minimal CPU overhead.

Billy Tallis - Sunday, June 14, 2020 - link

It is important to make a distinction between when data hits the CPU die but doesn't actually require attention from a CPU core. DMA is important! Data coming in from the SSD can be forwarded to RAM or to the GPU (P2P DMA) by the PCIe root complex without involvement from a CPU core. The CPU just needs to initiate the transaction and handle the completion interrupt (which often involves setting up the next DMA transfer).

On the PS5, there will also be a DMA round-trip from RAM to the decompression unit back to RAM, with either a CPU core or the IO coprocessor setting up the DMA transfers.