The Intel Comet Lake Core i9-10900K, i7-10700K, i5-10600K CPU Review: Skylake We Go Again

by Dr. Ian Cutress on May 20, 2020 9:00 AM EST. Posted in CPUs, Intel, Skylake, 14nm, Z490, 10th Gen Core, Comet Lake

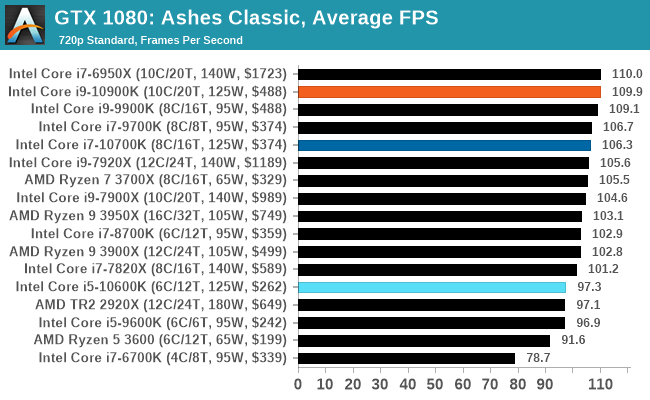

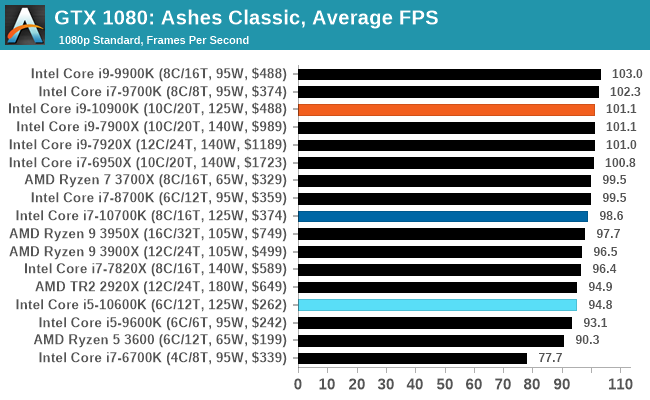

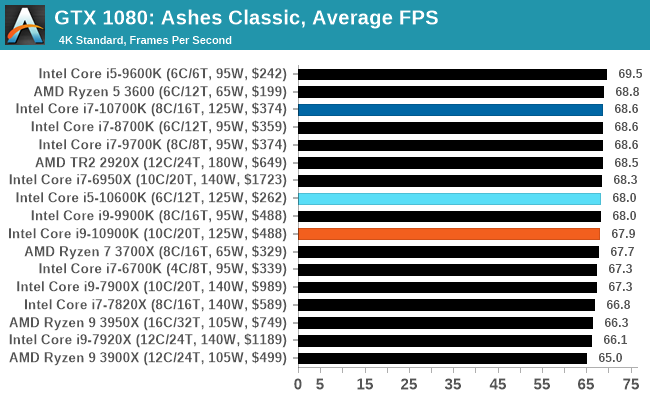

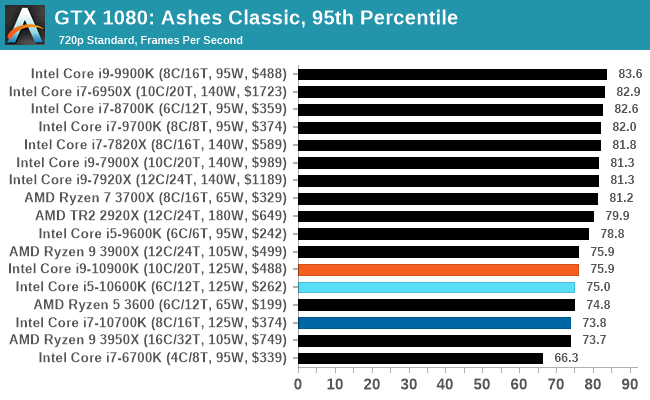

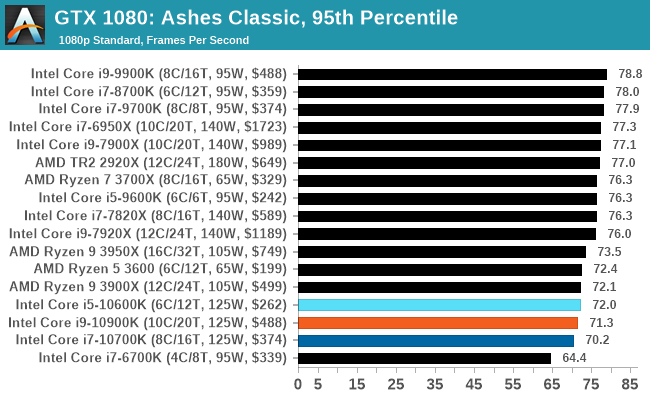

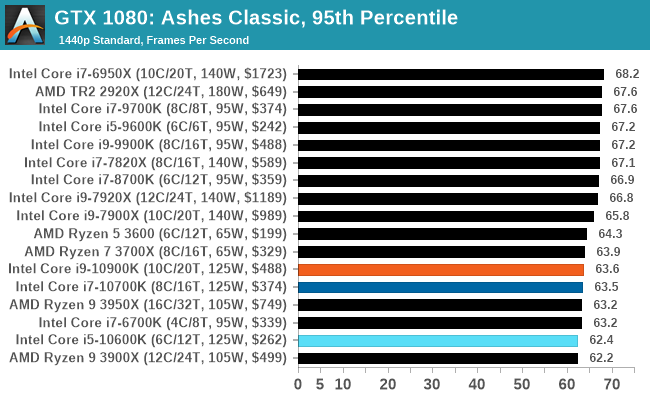

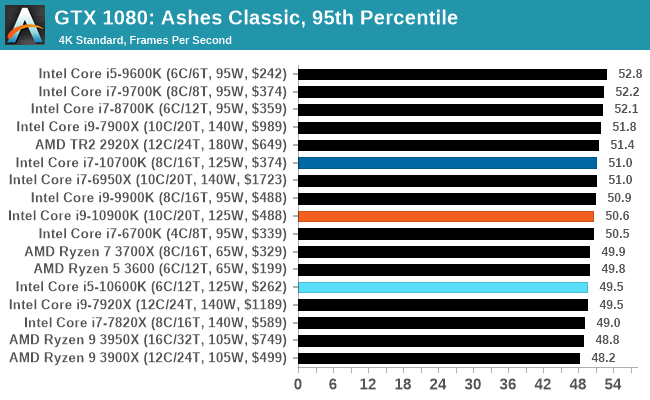

Gaming: Ashes Classic (DX12)

Seen as the holy child of DirectX12, Ashes of the Singularity (AoTS, or just Ashes) was the first title to actively explore as many of the DirectX12 features as it possibly could. Stardock, the developer behind the Nitrous engine that powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide open shots and concentrated battles. With DirectX12 at the helm, the engine can issue more draw calls per second, which lets it work with substantial unit depth and effects that other RTS titles could only achieve by combining draw calls, an approach that ultimately made some combined unit structures very rigid.

Stardock clearly understand the importance of an in-game benchmark, and ensured that such a tool was available and capable from day one. With all the additional DX12 features in use, being able to characterize how each one affected the title was especially important for the developer. The in-game benchmark runs a four-minute, fixed-seed battle with a variety of shots, and outputs a vast amount of data to analyze.

For our benchmark, we run Ashes Classic, an older version of the game from before the Escalation update. We use it because it is easier to automate, with no splash screen, while still offering strong visual fidelity to test.

Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at the above settings, and take the frame-time output for our average and percentile numbers.
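Since the average and 95th percentile figures are both derived from per-frame times, a minimal sketch of that conversion may help. It assumes frame times in milliseconds and uses the nearest-rank percentile method; the function names are hypothetical, and neither the game's own tool nor our scripts are claimed to work exactly this way (an interpolated percentile, for example, would give slightly different numbers).

```python
import math


def average_fps(frame_times_ms):
    """Average FPS over the whole run: total frames divided by total time.

    Assumes frame times are given in milliseconds (hypothetical input format).
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_fps(frame_times_ms, pct=95.0):
    """FPS at the pct-th percentile frame time, using the nearest-rank method.

    Slow frames have long frame times, so the 95th percentile frame time maps
    to the frame rate that 95% of frames meet or beat.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    ordered = sorted(frame_times_ms)
    # 1-based nearest rank; multiply before dividing to avoid float drift.
    rank = math.ceil(pct * len(ordered) / 100.0)
    rank = min(max(rank, 1), len(ordered))
    return 1000.0 / ordered[rank - 1]


if __name__ == "__main__":
    # Hypothetical run: 90 frames at 10 ms plus 10 slow frames at 25 ms.
    times = [10.0] * 90 + [25.0] * 10
    print(f"Average FPS: {average_fps(times):.1f}")  # 87.0
    print(f"95th percentile FPS: {percentile_fps(times):.1f}")  # 40.0
```

In this made-up run, ten slow frames barely move the average but pull the 95th percentile figure down sharply, which is why percentile numbers are worth reporting alongside averages.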

All of our benchmark results can also be found in our benchmark engine, Bench.

| AnandTech | IGP | Low | Medium | High |
|---|---|---|---|---|
| Average FPS | | | | |
| 95th Percentile | | | | |

220 Comments


yankeeDDL - Wednesday, May 20, 2020

I think the main idea was to show whether the CPU was getting in the way when the GPU is definitely not the bottleneck.

mrvco - Wednesday, May 20, 2020

That's difficult to discern without all the relevant data, i.e. the diminishing returns as the bottleneck transitions from the CPU to the GPU at typical resolutions and quality settings. I think better of the typical AnandTech reader, but I would hate to think that someone reads this review, extrapolates the relative FPS performance at 720p / medium quality to 1440p or 2160p at high or ultra settings, and blows their build budget on a $400+ CPU and the associated components required to power and cool that CPU, with little or no improvement in actual gaming performance.

dullard - Wednesday, May 20, 2020

Do we really need this same comment with every CPU review ever? In every single CPU review for years (decades?), people make that exact same comment. That is why the reviews already test several different resolutions. AnandTech did 2 to 4 resolutions with each game. Isn't that enough? Can't you interpolate or extrapolate as needed to whatever specific resolution you use? Or did you miss that there are scroll-over graphs of other resolutions in the review?

schujj07 - Wednesday, May 20, 2020

“There are two types of people in this world: 1.) Those who can extrapolate from incomplete data.”

diediealldie - Thursday, May 21, 2020

LMAO you're a genius

DrKlahn - Wednesday, May 20, 2020

In some cases they test at higher than 1080p and in some they don't. I do wish they would include higher resolutions in all tests, and that the "gaming lead" statements came with the caveat that it's largely only going to benefit those seeking very high frame rates at low resolutions. Someone with a 1080p 60Hz monitor likely isn't going to benefit from the Intel platform, nor is someone with a high-resolution monitor and eye candy enabled. But the conclusion doesn't really spell that out well for the less educated. And it's certainly not just AnandTech doing this; it seems to be the norm. But you see people parroting "Intel is better for gaming" when in their setup it may not bring any benefit, while incurring more cost and being more difficult to cool due to the substantial power use.

Spunjji - Tuesday, May 26, 2020

It's almost like their access is partially contingent on following at least a few of the guidelines about how to position the product. :/

mrvco - Wednesday, May 20, 2020

Granted, 720p and 1080p resolutions are highly CPU-dependent when using a modern GPU, but I'm not seeing 1440p results at high or ultra quality, which is where things do transition to being more GPU-dependent, and a more realistic real-world scenario for anyone paying up for a mid-range to high-end gaming PC.

Meteor2 - Wednesday, July 15, 2020

Spend as much as you can on the GPU and pair it with a $200 CPU. It’s actually pretty simple.

yankeeDDL - Wednesday, May 20, 2020

I have to say that this fared better than I expected. I would definitely not buy one, but kudos to Intel.

Can't imagine what it means to have a 250W CPU + 200W GPU in a PC next to you while you're playing. Must sound like an airplane.