The NVIDIA GeForce GTX 1650 Review, Feat. Zotac: Fighting Brute Force With Power Efficiency

by Ryan Smith & Nate Oh on May 3, 2019 10:15 AM EST

Wolfenstein II: The New Colossus (Vulkan)

id Software is popularly known for a few games involving shooting stuff until it dies, just with different 'stuff' for each one: Nazis, demons, or other players while scorning the laws of physics. Wolfenstein II is the latest of the first, the sequel to a modern reboot series developed by MachineGames and built on id Tech 6. While the tone is significantly less pulpy nowadays, the game is still a frenetic FPS at heart. It succeeds DOOM as a modern Vulkan flagship title, and unlike DOOM, which originally launched with OpenGL, it arrives as a pure Vulkan implementation.

Set in a Nazi-occupied America of 1961, Wolfenstein II is lushly designed yet not oppressively demanding on the hardware, which suits its pace: action erupts suddenly from level design that is flush with alternate-history details.

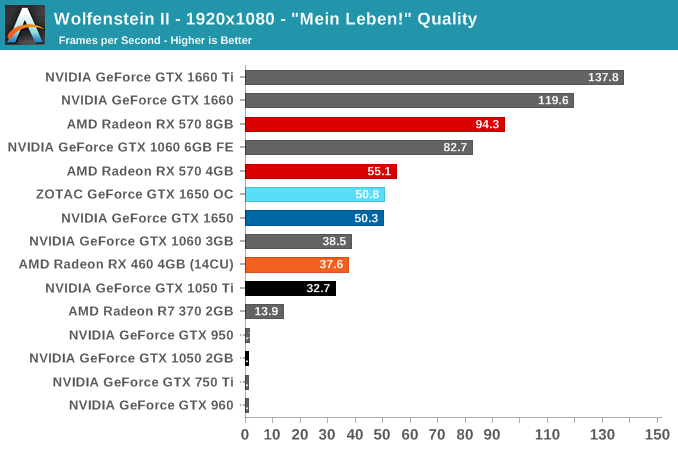

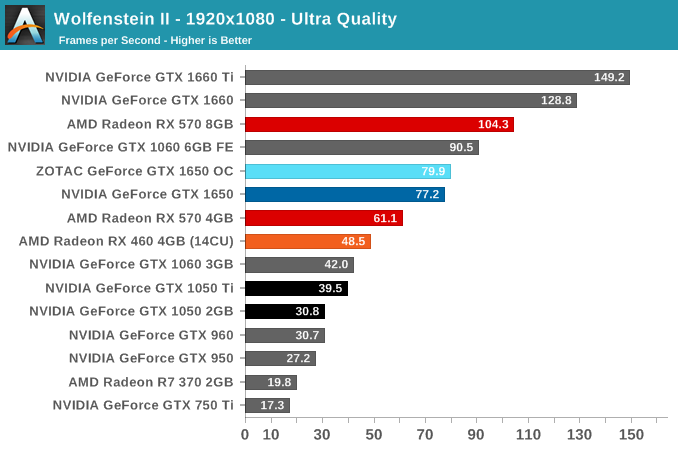

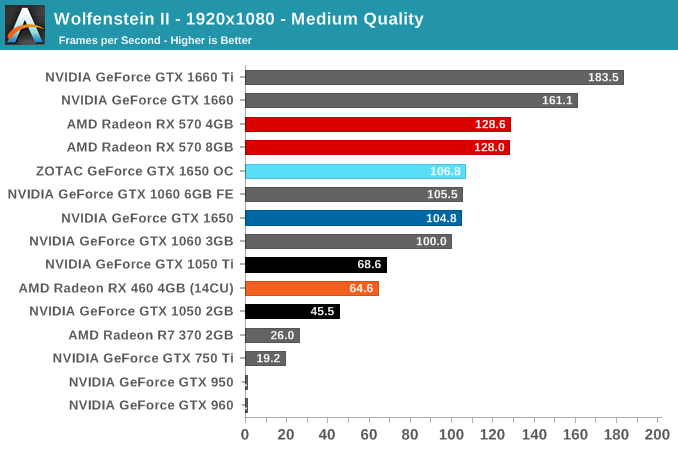

The game has five graphical presets: Mein leben!, Uber, Ultra, Medium, and Low. For consistency with previous 1080p testing, the highest quality preset, "Mein leben!", was used alongside Ultra and Medium. Wolfenstein II also features Vega-centric GPU Culling and Rapid Packed Math, as well as Radeon-centric Deferred Rendering; in accordance with the presets, neither GPU Culling nor Deferred Rendering was enabled.

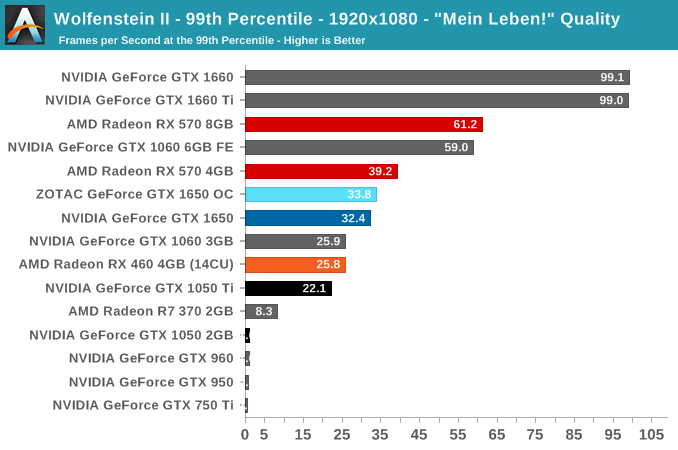

To preface, an odd performance bug afflicted the two Maxwell cards (GTX 960 and GTX 950): with Medium Image Streaming (a pool size of 768), they ran out of memory and fell below 2 fps. Using the Medium preset with any other Image Streaming setting restored normal performance, which suggests a memory allocation issue; the bug also occurred on earlier drivers. It's not clear how much this bug contributes to their sub-2 fps results at the "Mein leben!" preset, which is already far too demanding for a 2GB framebuffer.

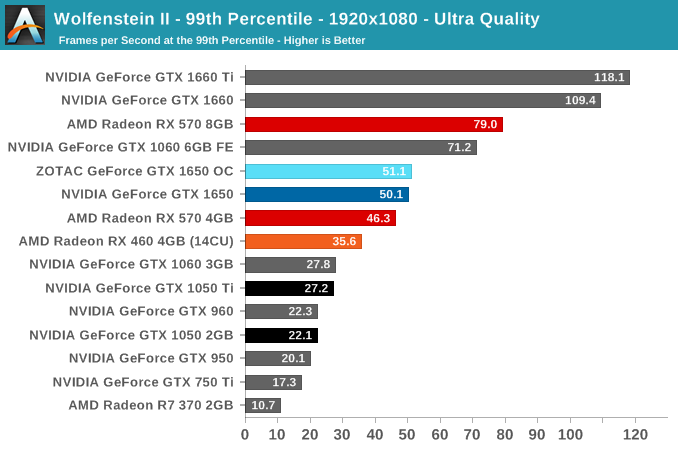

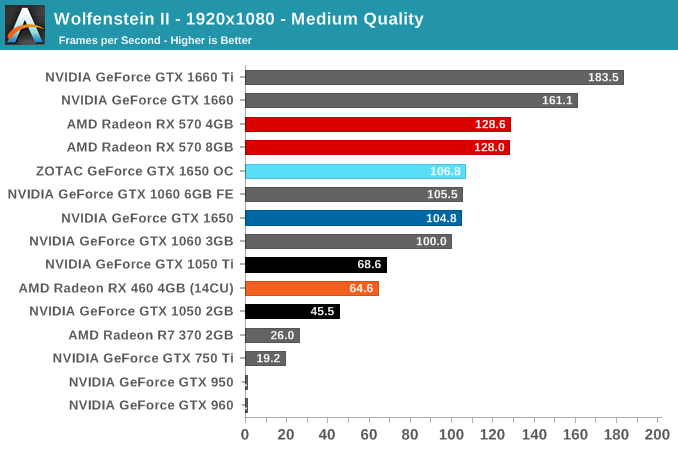

Given the VRAM-hungry nature of Wolfenstein II, the GTX 1650's 4GB of VRAM spares it the premature death suffered by all the 2GB cards, and even by the 3GB GTX 1060. Even at Medium settings, the larger framebuffer turns the tables on the GTX 1060 3GB, which is faster in every other game in the suite. As a result, the GTX 1650 takes the lead here in both performance and gameplay smoothness.

The VRAM-heavy workload does uncover more oddities: here the GTX 1650 overtakes the RX 570 4GB at higher settings, but the RX 570 8GB remains undeterred.

126 Comments


PeachNCream - Tuesday, May 7, 2019

Agreed with nevc on this one. When you start discussing higher-end and higher-cost components, power consumption comes off the proverbial table to a great extent, because priority naturally shifts toward performance rather than purchase price, electrical consumption, or TCO.

eek2121 - Friday, May 3, 2019

Disclaimer: not done reading the article yet, but I saw your comment. Some people look for low-wattage cards that don't require a power connector. These types of cards are particularly suited for Mini-ITX systems that may sit under the TV. The 750 Ti was super popular because of this. Having Turing's HEVC video encode/decode is really handy. You can put together a nice small Mini-ITX build with something like the Node 202, and it will handle media duties much better than other solutions.

CptnPenguin - Friday, May 3, 2019

That would be great if it actually had the Turing HEVC encoder. It does not; it retains the Volta encoder, for cost saving or some other Nvidia-alone-knows reason (source: Hardware Unboxed and Gamers Nexus).

Anyone buying a 1650 and expecting to get the Turing video encoding hardware is in for a nasty surprise.

Oxford Guy - Saturday, May 4, 2019

"That would be great if it actually had the Turing HEVC encoder - it does not; it retains the Volta encoder"

Yeah, lack of B-frame support stinks.

JoeyJoJo123 - Friday, May 3, 2019

Or if you're going with a Mini-ITX low-wattage system, you can cut out the 75 W GPU and just go with a 65 W AMD Ryzen 5 2400G, since its integrated Vega GPU is perfectly suitable for an HTPC-type system. It'll save you way more money by that logic.

0ldman79 - Sunday, May 19, 2019

What they are going to do, though, is look at the fast GPU + PSU vs. the slower GPU alone.

People with OEM boxes are going to buy one part at a time. Trust me on this, it's frustrating, but it's consistent.

Gich - Friday, May 3, 2019

$25 a year? So 7 cents a day? 7 cents is more than the price of 1 kWh where I live.

Yojimbo - Friday, May 3, 2019

The US average is a bit over 13 cents per kilowatt-hour. But I made an error in the calculation and was way off. It's more like $15 over 2 years, not $50. Sorry.

DanNeely - Friday, May 3, 2019

That's for an average of 2 h/day of gaming. Bump it up to a hardcore 6 h/day and you get around $50 over 2 years. Or 2 h/day somewhere with obnoxiously expensive electricity, like Hawaii or Germany.

rhysiam - Saturday, May 4, 2019

I'd just like to point out that if you've gamed for an average of 6 h per day over 2 years with a 570 instead of a 1650, then you've also been enjoying 10% or so extra performance. That's more than 4000 hours of higher detail settings and/or frame rates. If people are trying to calculate the true "value" of a card, I would argue that this extra performance over time belongs in the calculation too. Let's not forget the performance benefits!
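The electricity figures traded in this thread all come down to one piece of arithmetic: extra cost = extra watts × hours per day × days ÷ 1000 × price per kWh. The short Python sketch below works that through. The 80 W gap between the two cards is an assumption for illustration, not a measurement from this review; it was picked only because it roughly reproduces the "$15 over 2 years" figure at 13 cents/kWh and 2 h/day. The function name `extra_energy_cost` is hypothetical.

```python
def extra_energy_cost(watts_delta: float, hours_per_day: float,
                      days: int, price_per_kwh: float) -> float:
    """Cost of drawing `watts_delta` extra watts for `hours_per_day` over `days`."""
    # Convert watt-hours to kilowatt-hours, then multiply by the electricity rate.
    kwh = watts_delta * hours_per_day * days / 1000.0
    return kwh * price_per_kwh


# Hypothetical inputs: an ASSUMED 80 W load-power gap between the cards,
# the ~13 cents/kWh US average quoted above, and two years of use.
ASSUMED_WATTS_DELTA = 80
US_RATE = 0.13
TWO_YEARS_DAYS = 730

for hours in (2, 6):
    cost = extra_energy_cost(ASSUMED_WATTS_DELTA, hours, TWO_YEARS_DAYS, US_RATE)
    print(f"{hours} h/day over 2 years: ${cost:.2f}")
```

With these assumed inputs the script prints about $15.18 for 2 h/day and $45.55 for 6 h/day, in line with the "$15" and "around $50" estimates above. Swapping in a measured wattage delta and a local electricity rate gives a figure for a specific setup.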