The NVIDIA GeForce GTX 1650 Review, Feat. Zotac: Fighting Brute Force With Power Efficiency

by Ryan Smith & Nate Oh on May 3, 2019 10:15 AM EST

Ashes of the Singularity: Escalation (DX12)

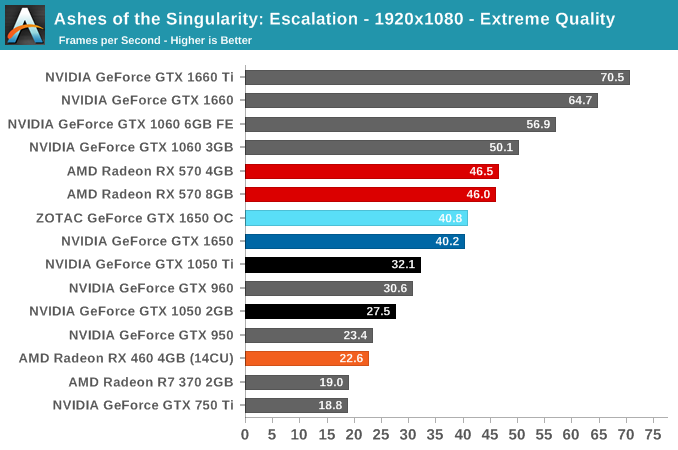

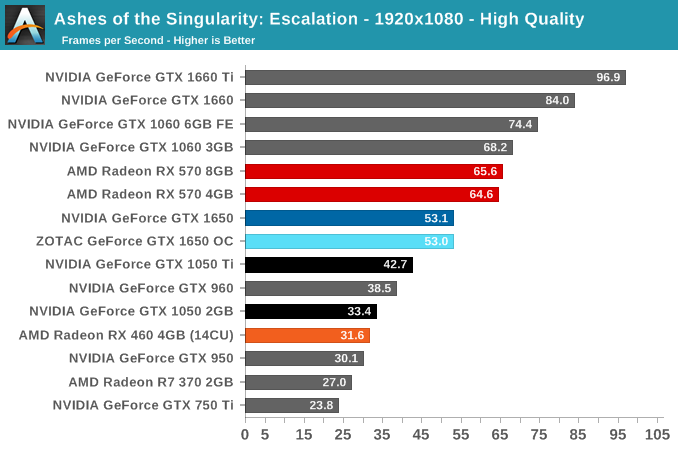

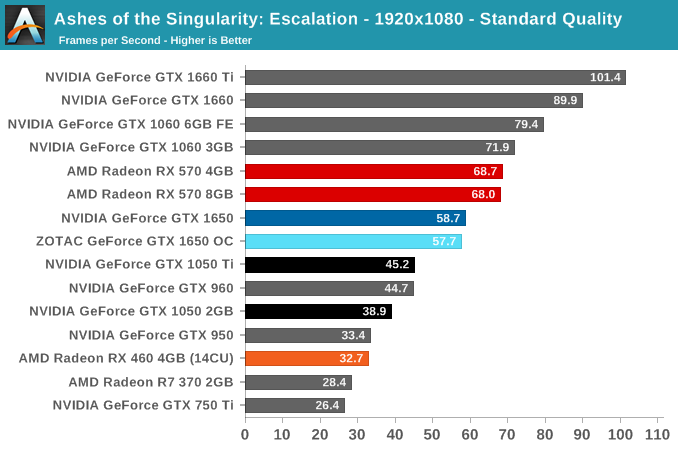

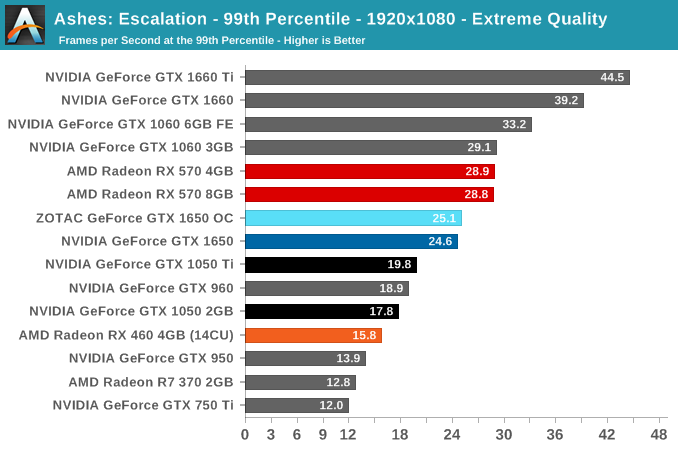

A veteran from both our 2016 and 2017 game lists, Ashes of the Singularity: Escalation remains the DirectX 12 trailblazer, with developer Oxide Games tailoring and designing the Nitrous Engine around such low-level APIs. The game makes the most of DX12's key features, from asynchronous compute to multi-threaded work submission and high batch counts. And with full Vulkan support, Ashes provides a good common ground between the forward-looking APIs of today. Its built-in benchmark tool is still one of the most versatile ways of measuring in-game workloads in terms of output data, automation, and analysis; by offering such a tool publicly and as part-and-parcel of the game, it's an example that other developers should take note of.

Settings and methodology remain identical to their usage in the 2016 GPU suite. To note, we are using the vanilla Ashes Classic Extreme graphical preset, which differs from the current Extreme preset in dialing MSAA down from x4 to x2 and in adjusting Texture Rank (MipsToRemove in settings.ini). For today, we are also using the vanilla High and Standard presets.

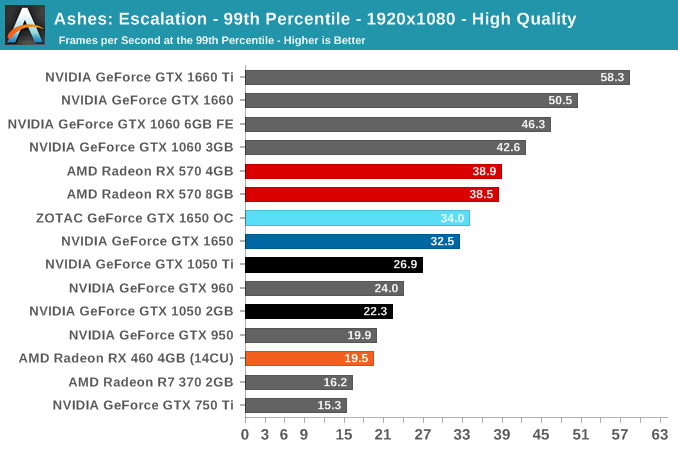

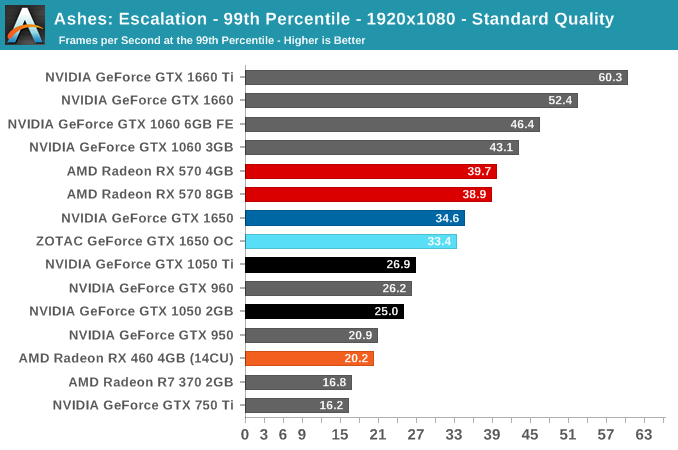

With Ashes, the GTX 1650 continues on trend, solidly slower than the RX 570 yet clearly a step up from predecessor 2GB cards.

126 Comments

PeachNCream - Tuesday, May 7, 2019 - link

Agreed with nevc on this one. When you start discussing higher-end and higher-cost components, consideration for power consumption comes off the proverbial table to a great extent, because priority is naturally assigned more to performance than to purchase price, electrical consumption, or TCO.

eek2121 - Friday, May 3, 2019 - link

Disclaimer: not done reading the article yet, but I saw your comment. Some people look for low-wattage cards that don't require a power connector. These types of cards are particularly suited for Mini-ITX systems that may sit under the TV. The 750 Ti was super popular because of this. Having Turing's HEVC video encode/decode is really handy. You can put together a nice small Mini-ITX system with something like the Node 202 and it will handle media duties much better than other solutions.

CptnPenguin - Friday, May 3, 2019 - link

That would be great if it actually had the Turing HEVC encoder - it does not; it retains the Volta encoder for cost saving or some other Nvidia-Alone-Knows reason. (source: Hardware Unboxed and Gamers Nexus). Anyone buying a 1650 and expecting to get the Turing video encoding hardware is in for a nasty surprise.

Oxford Guy - Saturday, May 4, 2019 - link

"That would be great if it actually had the Turing HEVC encoder - it does not; it retains the Volta encoder"

Yeah, lack of B support stinks.

JoeyJoJo123 - Friday, May 3, 2019 - link

Or if you're going with a Mini-ITX low-wattage system, you can cut out the 75W GPU and just go with a 65W AMD Ryzen 2400G, since the integrated Vega GPU is perfectly suitable for an HTPC-type system. It'll save you way more money with that logic.

0ldman79 - Sunday, May 19, 2019 - link

What they are going to do, though, is look at the fast GPU + PSU vs the slower GPU alone. People with OEM boxes are going to buy one part at a time. Trust me on this, it's frustrating, but it's consistent.

Gich - Friday, May 3, 2019 - link

$25 a year? So 7 cents a day? 7 cents is more than 1 kWh where I live.

Yojimbo - Friday, May 3, 2019 - link

The US average is a bit over 13 cents per kilowatt-hour. But I made an error in the calculation and was way off. It's more like $15 over 2 years and not $50. Sorry.

DanNeely - Friday, May 3, 2019 - link

That's for an average of 2h/day gaming. Bump it up to a hardcore 6h/day and you get around $50/2 years. Or 2h/day but somewhere with obnoxiously expensive electricity like Hawaii or Germany.

rhysiam - Saturday, May 4, 2019 - link

I'd just like to point out that if you've gamed for an average of 6h per day over 2 years with a 570 instead of a 1650, then you've also been enjoying 10% or so extra performance. That's more than 4000 hours of higher detail settings and/or frame rates. If people are trying to calculate the true "value" of a card, then I would argue that this extra performance over time should count too. Let's not forget the performance benefits!
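
The running-cost arithmetic traded back and forth in the comments above can be sanity-checked with a short sketch. The electricity price (13 cents per kWh), the daily gaming hours (2 and 6), and the 2-year window come from the thread; the 80 W power gap between the two cards is an assumption chosen only for illustration, and the function name is hypothetical.

```python
def extra_energy_cost(power_delta_watts, hours_per_day, years, price_per_kwh):
    """Extra electricity cost of running the hungrier card over the period."""
    total_hours = hours_per_day * 365 * years
    extra_kwh = power_delta_watts / 1000 * total_hours
    return extra_kwh * price_per_kwh


# ASSUMPTION: an 80 W load-power gap between the cards (not stated in the thread).
ASSUMED_DELTA_WATTS = 80
US_AVG_PRICE = 0.13  # dollars per kWh, the figure quoted in the thread

for hours in (2, 6):
    cost = extra_energy_cost(ASSUMED_DELTA_WATTS, hours, 2, US_AVG_PRICE)
    print(f"{hours} h/day over 2 years: ${cost:.2f}")
```

Under that assumed gap, the result lands near the thread's figures: about $15 over two years at 2h/day and a little under $50 at 6h/day. The 6h/day case also works out to 4,380 gaming hours, in line with the "more than 4000 hours" mentioned above.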