Upgrading from an Intel Core i7-2600K: Testing Sandy Bridge in 2019

by Ian Cutress on May 10, 2019 10:30 AM EST - Posted in

- CPUs

- Intel

- Sandy Bridge

- Overclocking

- 7700K

- Coffee Lake

- i7-2600K

- 9700K

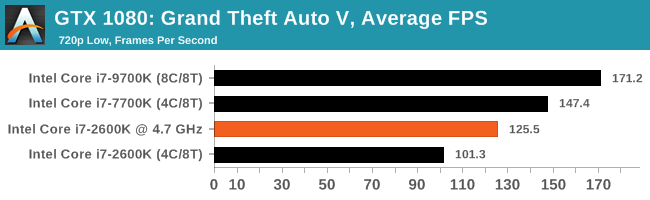

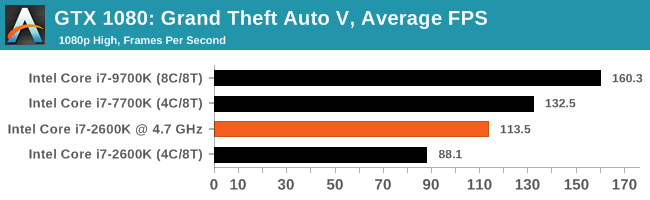

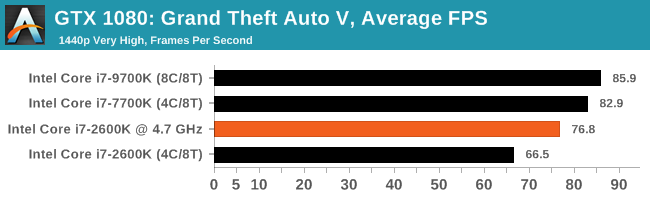

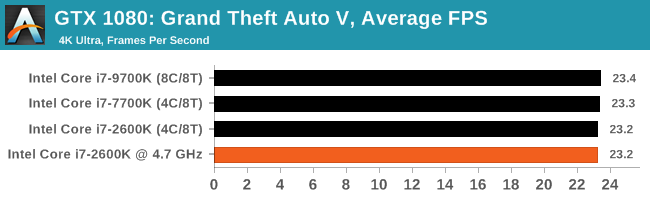

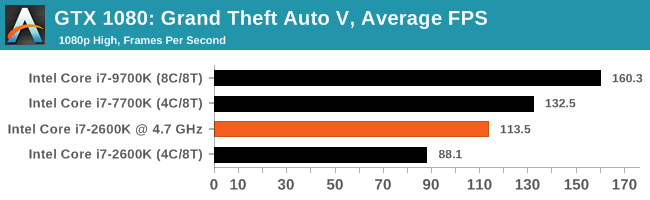

Gaming: Grand Theft Auto V

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and extends the boundaries by pushing even the most powerful systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, the game at maximum settings produces stunning visuals but also makes hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet, an inner-city drive-by through several intersections, and the ramming of a tanker that explodes and causes other cars to explode as well. This mixes distance rendering with a detailed near-rendering action sequence, and the title thankfully outputs frame time data.
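The text doesn't spell out how that frame time data becomes the Average FPS and 95th Percentile figures in the results. As a minimal sketch, assuming frame times are logged in milliseconds and using a nearest-rank percentile (one common convention, not necessarily the one AnandTech's scripts use), the two metrics could be derived as follows. The function names and sample data are hypothetical.

```python
from typing import Sequence


def average_fps(frame_times_ms: Sequence[float]) -> float:
    """Average FPS over the whole run: frames rendered divided by seconds elapsed.

    Assumes frame times are in milliseconds (an assumption, not from the source).
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_95_fps(frame_times_ms: Sequence[float]) -> float:
    """FPS equivalent of the 95th-percentile frame time (nearest-rank method).

    95% of frames took at most this long; the slowest 5% took longer.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    ordered = sorted(frame_times_ms)  # fastest frames first
    n = len(ordered)
    rank = -(-95 * n // 100)  # ceil(0.95 * n) using integer arithmetic, 1-based
    slow_frame_ms = ordered[rank - 1]
    return 1000.0 / slow_frame_ms


if __name__ == "__main__":
    # Hypothetical frame times: 90 frames at 10 ms, 10 stutters at 20 ms.
    sample = [10.0] * 90 + [20.0] * 10
    print(f"Average FPS: {average_fps(sample):.1f}")  # Average FPS: 90.9
    print(f"95th percentile FPS: {percentile_95_fps(sample):.1f}")  # 95th percentile FPS: 50.0
```

Dividing the frame count by total elapsed time, rather than averaging per-frame FPS values, keeps slow frames from being under-weighted; the percentile figure then exposes stutter that an average alone can hide.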

There are no presets for the graphics options in GTA. Some options, such as population density and distance scaling, are adjusted on sliders, while others, such as texture/shadow/shader/water quality, range from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy indicator at the top which shows how much video memory the chosen options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication if you have a low-end GPU with lots of GPU memory, like an R7 240 4GB).

AnandTech CPU Gaming 2019 Game List

| Game | Genre | Release Date | API | IGP | Low | Med | High |
|---|---|---|---|---|---|---|---|
| Grand Theft Auto V | Open World | Apr 2015 | DX11 | 720p Low | 1080p High | 1440p Very High | 4K Ultra |

All of our benchmark results can also be found in our benchmark engine, Bench.

| AnandTech | IGP | Low | Medium | High |

| Average FPS |  |

|

|

|

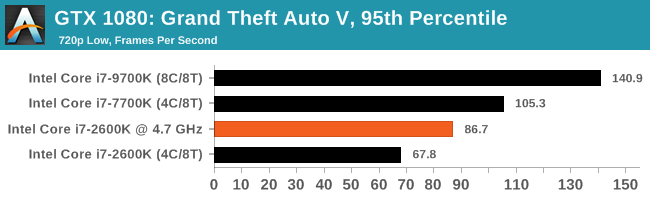

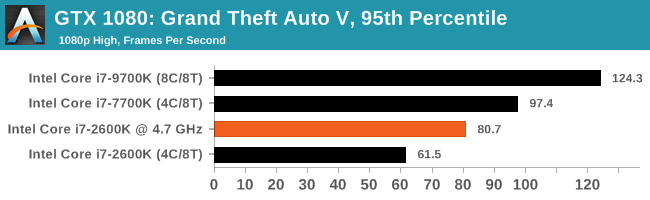

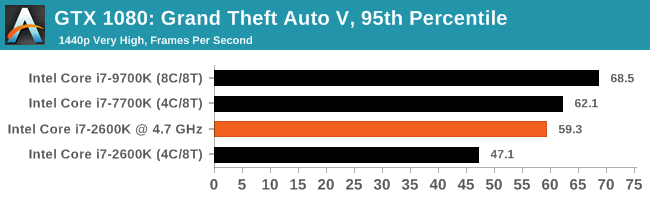

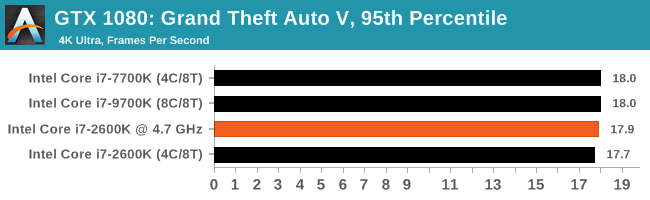

| 95th Percentile |  |

|

|

|

We see performance parity between the chips at 4K, but at all other resolutions and settings the overclocked 2600K again can't reach the level of the 7700K, often sitting midway between the stock 7700K and the stock 2600K.

213 Comments


Death666Angel - Sunday, May 12, 2019

I've written some horrendous posts when using my phone to comment somewhere. That's mostly because my phone is trained to my German texting habits and not my English commenting habits. Trying to mix them leads to subpar results in both areas, so I mostly stick to using my phone for texting and my PC and laptop for commenting. But sometimes I have to write something on my phone, and it makes a beautiful mess if I'm not careful.

Death666Angel - Sunday, May 12, 2019

Well, laptops and desktops (with monitors) are in a different category anyway, at least that's how I see it. :-) I work with a 13.3" laptop with a 1440p resolution and 150% scaling. It's not fun, but it does the job. The advantage of the larger screen real estate of a 15" or 17" laptop is outweighed by the size and weight increase. I've also done work on 1024x768 monitors, and it does the job in a pinch. But I've tried to upgrade as soon as a new technology was established, cheap, and good enough to make it worthwhile, without having to pay the early adopter fee or fiddle around to get it working. Even before Win7 made it a breeze to arrange multiple windows in an orderly grid, I took full advantage of a multi-window, multi-program workflow for research, paper/presentation writing, editing and media consumption. So it is a bit surprising to see someone like Ian, a tech enthusiast with a university doctorate, be so late to great tech that can really make life easier. :D

Showtime - Saturday, May 11, 2019

Great article. I was hoping to see all the CPUs tested (including my 4770K), but I think it shows enough. This isn't the first article showing that lesser CPUs can run close to the best CPUs when it comes to 4K gaming. Does that look likely to change any time soon? I was thinking I should upgrade this year, but would like to know if I should be shooting for an 8-core, or if a 6-core will be a decent enough upgrade.

Consoles run slower 8-core processors that are utilized more efficiently. At some point won't PC games do the same?

Targon - Tuesday, May 14, 2019

There is always the question of what you do on your computer, but I wouldn't go lower than 8 cores (since 4 cores has become the baseline on the desktop, and even laptops should never be sold with only 2 cores, IMO). If you look at the history, when AMD wasn't competitive and Intel stopped trying to actually innovate, quad-core was all you saw on the desktop, so game developers didn't see a reason to support more threads (even though it would have made sense). Now that Ryzen has come out with 8 cores and Intel has finally responded, you have to expect that every game developer will design with the potential that players will have 8+ core processors, so why not design with that in mind?

Remember, a program that is properly multi-threaded in design will work on processors with fewer cores, but will scale up well when processors with more cores are being used. So going forward, quad-core would work, but 8 or more threads WILL feel a lot better, even for overall use.

CaedenV - Saturday, May 11, 2019

This was a fascinating article! And what I am seeing in the real world seems to reflect this.

For the most part, IPC for general use has improved, but not by a whole lot. But if you are doing anything that hits the on-chip GPU, or that requires any kind of encryption/decryption, then the dedicated hardware in newer chips really makes a big difference.

But at the end of the day, in real-world scenarios, the CPU is simply not the bottleneck for most people. I do a lot of video ripping (all legally purchased, and only for personal use), and the bottleneck is squarely on the Blu-ray drive. I recently upgraded from a 4x to a 10x drive, and the performance bump was exactly what was expected. Getting a faster CPU or GPU will not help there.

I do a bit of video editing, and the bottleneck there is still almost always storage: the 1 Gbps connection to the NAS, and the 1 GBps connection to my RAID0 of SSDs.

I do a bit of gaming at 4K, and again the bottleneck there is squarely on the GPU (GTX 1080), and as your tests show, at lower resolutions my chip will be slower than a new chip... but still fast enough to exceed the 60-120Hz refresh rate of the monitor.

The real reason for an upgrade simply isn't the CPU for most people. The upgrade is the chipset. Faster/more RAM, M.2 SSDs, more available throughput for expansion cards, faster USB/USB-C ports, and soon(ish) 10gig Ethernet. These are the things that make life better for the enthusiast and the normal user; and the newer CPUs are simply more capable of taking advantage of all the extra throughput, where Sandy Bridge would perhaps choke when dealing with these newer and faster interfaces that are not available to it.

All that said; I am still not convinced to upgrade. Every previous computer was simply broken, or could not do something after 2-3 years, so an upgrade was literally necessary. But now... my computer is some 8 years old now, and I am amazed at the fact that it still does it all, and does it relatively quickly. Without it being 'broken' it is hard to justify dropping $1000+ into a new build. I mean... I want to upgrade. But I also want to do some house projects, and replace a car, and do stuff with the kids... *sigh* priorities. Part of me wishes that it would break to give me proper motivation to replace it.

webdoctors - Saturday, May 11, 2019

Great timing. I've been using the same chip for 7 or 8 years now and never felt the need to upgrade until this year, but I will upgrade at the end of this year. DDR4 has finally dropped in price, and I think my GTX 1070 Ti is getting throttled when the CPU ain't overclocked.

atomicWAR - Saturday, May 11, 2019

Gaming at 4K with an i7-3930K @ 4.2GHz (4.6GHz capable when needed) with 2 GTX 1080s... I was planning a new build this year, but after reading this I may hold off even longer.

wrkingclass_hero - Sunday, May 12, 2019

I've got a 3930K as well. I was planning on upgrading to Threadripper 3 when that comes out, but if it gets delayed I may wait a bit longer for a 5nm Threadripper.

mofongo7481 - Saturday, May 11, 2019

I'm still using a Sandy Bridge i5-2400 overclocked to 3.6GHz. Still playing modern stuff @ 1080p, and it's pretty enjoyable.

Danvelopment - Sunday, May 12, 2019

I think the conclusion is slightly off for gaming. From what I could see, it's not that the newer processors were only better at lower resolutions; it's that the newer systems were better able to keep the GPU fed with data, resulting in a higher maximum frame rate.

So at lower resolutions/quality settings, when the GPUs could let loose, they could achieve much higher FPS.

My conclusion from the results wouldn't be to keep it for higher res gaming, but to keep it for gaming if you're still using a 60Hz display (which I am). I bet if you tuned quality settings for all of the GPUs to run at 60 FPS your results would sit pretty close at any resolution.

I'm currently running an E5-2670 for my gaming machine with quad channel DDR3 (4x8GB) and a 1070. That's the budget upgrade path I'd probably recommend at 60Hz.