Upgrading from an Intel Core i7-2600K: Testing Sandy Bridge in 2019

by Ian Cutress on May 10, 2019 10:30 AM EST

Posted in: CPUs, Intel, Sandy Bridge, Overclocking, 7700K, Coffee Lake, i7-2600K, 9700K

Power Consumption

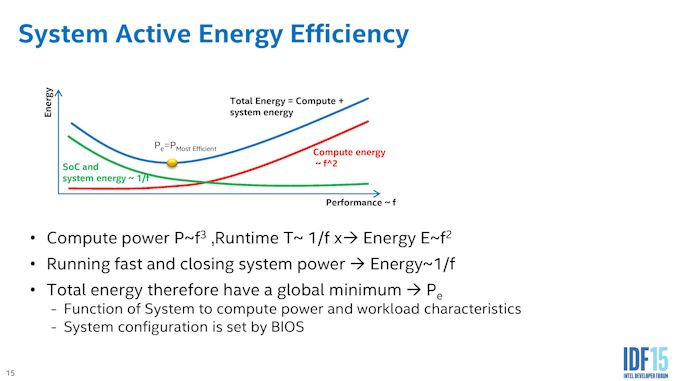

One of the risk factors in overclocking is driving the processor beyond its ideal point of power and performance. Processors are typically manufactured with a particular sweet spot in mind: the peak efficiency of a processor will be at a particular voltage and particular frequency combination, and any deviation from that mark will result in expending extra energy (usually for better performance).

When Intel first introduced the Skylake family, this efficiency point was a key element of its product portfolio. Some CPUs would test and detect the best efficiency point on POST, making sure that when the system was idle, the least power was drawn. When the CPU is actually running code, however, the system raises the frequency and voltage in order to offer performance away from that peak efficiency point. If a user pushes that frequency a lot higher, voltage needs to increase and power consumption rises.
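The link between frequency, voltage, and power can be sketched with the common first-order approximation for CMOS dynamic power, P ≈ C·V²·f. This model is not taken from our testing and it ignores leakage. The function name and the example ratios below are purely illustrative assumptions, not measurements of any of the CPUs in this review.

```python
def relative_dynamic_power(freq_ratio: float, voltage_ratio: float) -> float:
    """Estimate how dynamic power scales versus a baseline.

    Uses the first-order CMOS approximation P ~ C * V^2 * f, with the
    switched capacitance C assumed constant. Leakage power is ignored.
    """
    return freq_ratio * voltage_ratio ** 2


# Hypothetical example: 10% higher frequency that needs 5% more voltage.
scale = relative_dynamic_power(freq_ratio=1.10, voltage_ratio=1.05)
print(f"Estimated dynamic power: {scale:.2f}x baseline")
```

Because voltage enters the estimate squared, a frequency bump that needs extra voltage costs more power than the frequency increase alone would suggest.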

So when overclocking a processor, whether one of the newer ones or an older one, the user ends up expending more energy for the same workload, albeit while getting the workload finished faster. For our power testing, we took the peak power consumption values during an all-thread version of POV-Ray, using the CPU internal metrics to record full SoC power.

The Core i7-2600K was built on Intel’s 32nm process, while the i7-7700K and i7-9700K were built on variants of Intel’s 14nm process family. These latter two, as shown in the benchmarks in this review, have considerable performance advantages due to microarchitectural and platform improvements, as well as the frequency improvements that the more efficient process node offers. They also have AVX2, which draws a lot of power in our power test.

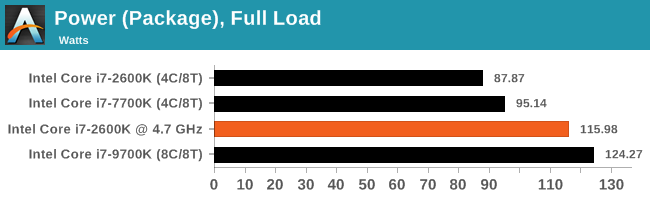

In our peak power results graph, we see the Core i7-2600K at stock (3.5 GHz all-core) hitting only 88 W, while the Core i7-7700K at stock (4.3 GHz all-core) comes in at 95 W. These results are both respectable; however, adding the overclock to the 2600K to hit 4.7 GHz all-core shows how much extra power is needed. At 116 W, the 34% overclock is consuming 31% more power (for 24% more performance) compared to the 2600K at stock.
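The percentages above can be checked with a few lines of arithmetic. The sketch below uses only the rounded figures quoted in the text, and the variable and function names are illustrative. With these rounded inputs the power increase works out to just under 32% rather than the quoted 31%, presumably because the graph values are rounded. The energy estimate additionally assumes that runtime scales inversely with the quoted performance gain.

```python
# Rounded figures quoted in the text for the Core i7-2600K.
STOCK_GHZ = 3.5
OC_GHZ = 4.7
STOCK_WATTS = 88.0
OC_WATTS = 116.0
PERF_GAIN = 0.24  # "24% more performance"


def pct_increase(old: float, new: float) -> float:
    """Percentage increase going from old to new."""
    return (new / old - 1.0) * 100.0


freq_gain = pct_increase(STOCK_GHZ, OC_GHZ)
power_gain = pct_increase(STOCK_WATTS, OC_WATTS)

# Performance per watt of the overclocked chip relative to stock.
perf_per_watt_ratio = (1.0 + PERF_GAIN) / (OC_WATTS / STOCK_WATTS)

# Energy for a fixed workload = power * time, assuming time ~ 1 / performance.
energy_ratio = (OC_WATTS / STOCK_WATTS) / (1.0 + PERF_GAIN)

print(f"Frequency increase: {freq_gain:.1f}%")
print(f"Power increase:     {power_gain:.1f}%")
print(f"Perf per watt:      {perf_per_watt_ratio:.2f}x stock")
print(f"Energy per job:     {energy_ratio:.2f}x stock")
```

Under these assumptions the overclocked 2600K delivers roughly 6% less performance per watt and uses roughly 6% more energy to finish the same job, which is the trade-off described above: the work gets done faster, but at a higher total energy cost.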

The Core i7-9700K, with eight full cores, goes above and beyond this, drawing 124 W at stock. While Intel’s power policy didn’t change between the generations, the way it ended up being interpreted did, as explained in our article here:

Why Intel Processors Draw More Power Than Expected: TDP and Turbo Explained

You can also learn about power control on Intel’s latest CPUs in our original Skylake review:

The Intel Skylake Mobile and Desktop Launch, with Architecture Analysis

213 Comments

StrangerGuy - Saturday, May 11, 2019 - link

One thing I want to point out is that modern games are far less demanding relative to the CPU versus games in the 90s. If anyone thinks their 8 year old Sandy Bridge quad is having it sort of rough today, they probably weren't around to remember that running Half-Life comfortably above 60 FPS needed a CPU that was released at least 2 years later.

versesuvius - Saturday, May 11, 2019 - link

There is a point in every Windows OS user's computer endeavors at which they start playing fewer and fewer games, and at about the same time start forgoing upgrades to their CPU. They keep adding RAM and hard disk space and maybe a new graphics card after a couple of years. The only reason that such a person, who by now has completely stopped playing games, may upgrade to a new CPU and motherboard is the maximum amount of RAM that can be installed on their motherboard. And with that really comes the final PC that such a person may have in a long, long time. Kids get the latest CPU and will soon realize the law of diminishing returns, which by now is gradually approaching "no return", much faster than their parents. So, in perhaps ten years there will be no more "Tic", or "Toc" or Cadence or Moore's law. There will be computers, barring the possibility that dumb terminals have replaced PCs, that everybody knows what they can expect from. No serendipity there for certain.

Targon - Tuesday, May 14, 2019 - link

The fact that you don't see really interesting games showing up all that often is why many people stopped playing games in the first place. Many people enjoyed the old adventure games with puzzles, and while action appeals to younger players, being more strategic and needing to come up with different approaches in how you play has largely died. Interplay is gone, Bullfrog, Lionhead.... On occasion something will come out, but few and far between.

Games for adults (and not just adult age children who want to play soldier on the computer) are not all that common. I blame EA for much of the decline in the industry.

skirmash - Saturday, May 11, 2019 - link

I still have an i7-2600 in an old Dell based upon an H67 chipset. I was thinking about using it as a server and updating the board to get updated connectivity. updating the board and using it as a server. Z77 chipset would seem to be the way to go although getting a new board with this chipset seems expensive unless I go used. Anyone any thoughts on this - whether its worthwhile etc or a cost effective way to do it?

skirmash - Saturday, May 11, 2019 - link

Sorry for the typos but I hope you get the sentiment.

Tunnah - Saturday, May 11, 2019 - link

Oh wow this is insane timing, I'm actually upgrading from one of these and have had a hard time figuring out what sort of performance upgrade I'd be getting. Much appreciated!

Tunnah - Saturday, May 11, 2019 - link

I feel like I can chip in a perspective re: gaming. While your benchmarks show solid average FPS and all that, they don't show the quality of life that you lose by having an underpowered CPU. I game at 4K, 2700k (4.6ghz for heat&noise reasons), 1080Ti, and regularly can't get 60fps no matter the settings, or have constant frame blips and dips. This is in comparison to a friend who has the same card but a Ryzen 1700X.

Newer games like Division 2, Assassin's Creed Odyssey, and as shown here, Shadow of the Tomb Raider, all severely limit your performance if you have an older CPU, to the point where getting a constant 60fps is a real struggle, and benchmarks aside, that's the only benchmark the average user is aiming for.

I also have 1333mhz RAM, which is just a whole other pain! As more and more games move into giant open world games and texture streaming and loading is happening in game rather than on loading screens, having slow RAM really affects your enjoyment.

I'm incredibly grateful for this piece btw, I'm actually moving to Zen2 when it comes out, and I gotta say, I've not been this excited since..well, Sandy Bridge.

Death666Angel - Saturday, May 11, 2019 - link

"I don’t think I purchased a monitor bigger than 1080p until 2012."Wow, really? So you were a CRT guy before that? How could you work on those low res screens all the time?! :D I got myself a 1200p 24" monitor once they became affordable in early 2008 (W2408hH). Had a 1280x1024 19" before that and it was night and day, sooo much better.

PeachNCream - Sunday, May 12, 2019 - link

Still running 1366x768 on my two non-Windows laptops (HP Steam 11 and Dell Latitude e6320) and it okay. My latest, far less uses Windows gaming system has a 14 inch panel running 1600x900. Its a slight improvement, but I could live without it. The old Latitude does all my video production work so though I could use a few more pixels, it isn't the end of the world as is. The laptop my office issued is a HP Probook 640 G3 so it has a 14 inch 1080p panel which to have to scale at 125% to actually use so the resolution is pretty much pointless.

PeachNCream - Sunday, May 12, 2019 - link

Ugh, phone auto correct...I really need to look over anything I type on a phone more closely. I feel like I'm reading comment by a non-native English speaker, but its me. How depressing.