The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

Power, Temperature, and Noise

As always, we'll take a look at power, temperature, and noise of the GTX 1660 Ti, though as a pure custom launch we aren't expecting anything out of the ordinary. As mentioned earlier, the XC Black board has already revealed itself in its RTX 2060 guise.

As this is a new GPU, we will quickly review the GeForce GTX 1660 Ti's stock voltages and clockspeeds as well.

NVIDIA GeForce Video Card Voltages

| Model | Boost | Idle |
| --- | --- | --- |
| GeForce GTX 1660 Ti | 1.037v | 0.656v |
| GeForce RTX 2060 | 1.025v | 0.725v |
| GeForce GTX 1060 6GB | 1.043v | 0.625v |

The voltages are naturally similar to those of the 16nm GTX 1060. Compared to pre-FinFET generations, they are considerably lower because of the FinFET process used, something we went over in detail in our GTX 1080 and 1070 Founders Edition review. As we said then, the 16nm FinFET process requires these low voltages, unlike previous planar nodes, and this can be limiting in scenarios where a lot of power and voltage are needed, i.e. high clockspeeds and overclocking. For Turing (along with Volta, Xavier, and NVSwitch), NVIDIA moved to 12nm "FFN" rather than 16nm, capping the voltage at 1.063v.

GeForce Video Card Average Clockspeeds

| Game | GTX 1660 Ti | EVGA GTX 1660 Ti XC | RTX 2060 | GTX 1060 6GB |
| --- | --- | --- | --- | --- |
| Max Boost Clock | 2160MHz | 2160MHz | 2160MHz | 1898MHz |
| Boost Clock | 1770MHz | 1770MHz | 1680MHz | 1708MHz |
| Battlefield 1 | 1888MHz | 1901MHz | 1877MHz | 1855MHz |
| Far Cry 5 | 1903MHz | 1912MHz | 1878MHz | 1855MHz |
| Ashes: Escalation | 1871MHz | 1880MHz | 1848MHz | 1837MHz |
| Wolfenstein II | 1825MHz | 1861MHz | 1796MHz | 1835MHz |
| Final Fantasy XV | 1855MHz | 1882MHz | 1843MHz | 1850MHz |
| GTA V | 1901MHz | 1903MHz | 1898MHz | 1872MHz |
| Shadow of War | 1860MHz | 1880MHz | 1832MHz | 1861MHz |
| F1 2018 | 1877MHz | 1884MHz | 1866MHz | 1865MHz |
| Total War: Warhammer II | 1908MHz | 1911MHz | 1879MHz | 1875MHz |
| FurMark | 1594MHz | 1655MHz | 1565MHz | 1626MHz |

Looking at clockspeeds, a few things are clear. The obvious point is that the very similar results of the reference-clocked GTX 1660 Ti and EVGA GTX 1660 Ti XC are reflected in their virtually identical clockspeeds. The GeForce cards boost higher than the advertised boost clock, as is typically the case in our testing. All told, NVIDIA's formal estimates still run a bit low, especially in our properly ventilated testing chassis, so we won't complain about the extra performance.

But on that note, it's interesting to see that while the GTX 1660 Ti should have a roughly 60MHz average boost advantage over the GTX 1060 6GB when going by the official specs, in practice the cards end up within half that span. This hints that NVIDIA's official average boost clock is a little more correctly grounded here than with the GTX 1060.
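As a rough check on that comparison, the short sketch below averages the in-game clockspeeds from the table above and compares the observed gap with the gap in the official boost clocks. Leaving FurMark out of the average is our own assumption for this illustration (it is a pathological power test rather than a game), and the variable names are purely illustrative.

```python
# In-game average clockspeeds (MHz) copied from the table above.
# FurMark is left out on purpose: assumption made for this sketch only.
observed = {
    "GTX 1660 Ti": [1888, 1903, 1871, 1825, 1855, 1901, 1860, 1877, 1908],
    "GTX 1060 6GB": [1855, 1855, 1837, 1835, 1850, 1872, 1861, 1865, 1875],
}

# Official boost clocks (MHz) from the "Boost Clock" row.
official_boost = {"GTX 1660 Ti": 1770, "GTX 1060 6GB": 1708}


def mean(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


averages = {card: mean(clocks) for card, clocks in observed.items()}

# Gap on paper versus gap measured across the nine games.
official_gap = official_boost["GTX 1660 Ti"] - official_boost["GTX 1060 6GB"]
observed_gap = averages["GTX 1660 Ti"] - averages["GTX 1060 6GB"]

for card, avg in averages.items():
    headroom = avg - official_boost[card]
    print(f"{card}: {avg:.0f}MHz average, {headroom:+.0f}MHz vs. official boost")

print(f"Official gap: {official_gap}MHz, observed gap: {observed_gap:.1f}MHz")
```

Under these assumptions the observed gap works out to well under half of the gap implied by the official specs, in line with the reading above.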

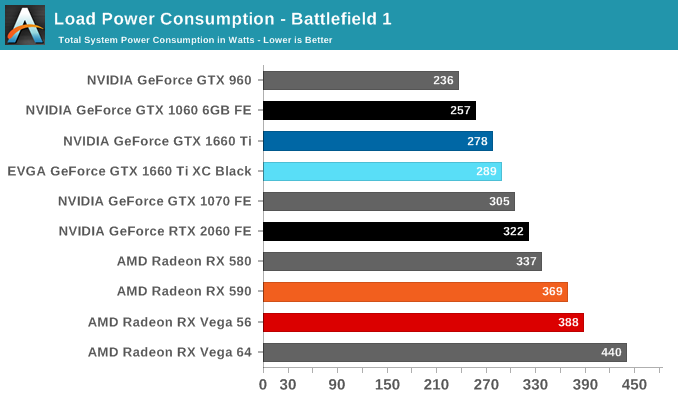

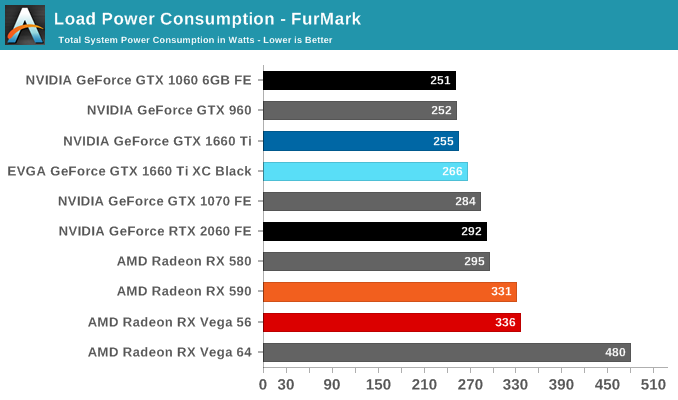

Power Consumption

Even though NVIDIA's video card prices for the xx60 cards have drifted up over the years, the same cannot be said for their power consumption. NVIDIA has set the reference specs for the card at 120W, and relative to their other cards this is exactly what we see. Looking at FurMark, our favorite pathological workload that's guaranteed to bring a video card to its maximum TDP, the GTX 960, GTX 1060, and GTX 1660 Ti are all within 4 Watts of each other, exactly what we'd expect to see from the trio of 120W cards. It's only in Battlefield 1 that these cards pull apart in terms of total system load, and this is due to the greater CPU workload from the higher framerates afforded by the GTX 1660 Ti, rather than a difference at the card level itself.

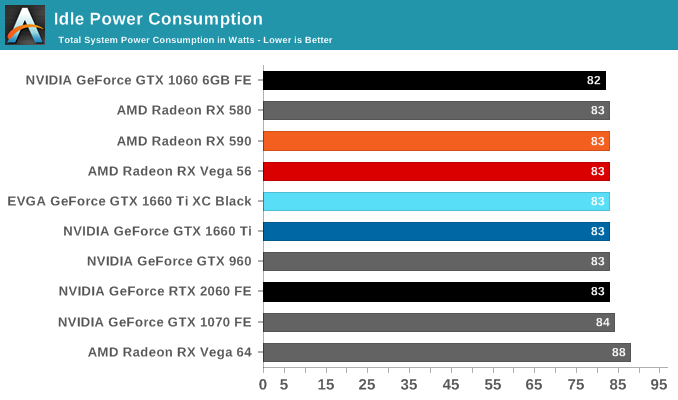

Meanwhile when it comes to idle power consumption, the GTX 1660 Ti falls in line with everything else at 83W. With contemporary desktop cards, idle power has reached the point where nothing short of low-level testing can expose what these cards are drawing.

As for the EVGA card in its natural state, we see it draw almost exactly 10W more. I'm actually a bit surprised to see this under Battlefield 1 as well since the framerate difference between it and the reference-clocked card is barely 1%, but as higher clockspeeds get increasingly expensive in terms of power consumption, it's not far-fetched to see a small power difference translate into an even smaller performance difference.

All told, NVIDIA has very good and very consistent power control here, and it remains one of their key advantages over AMD and a key strength in keeping their OEM customers happy.

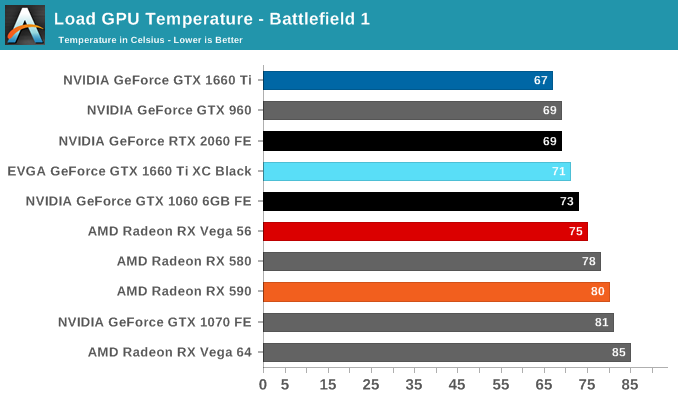

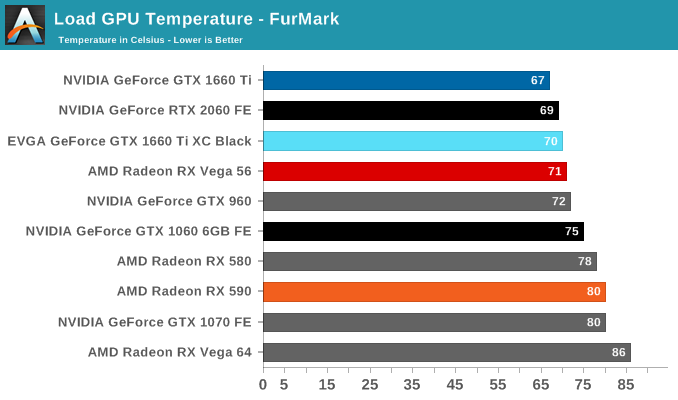

Temperature

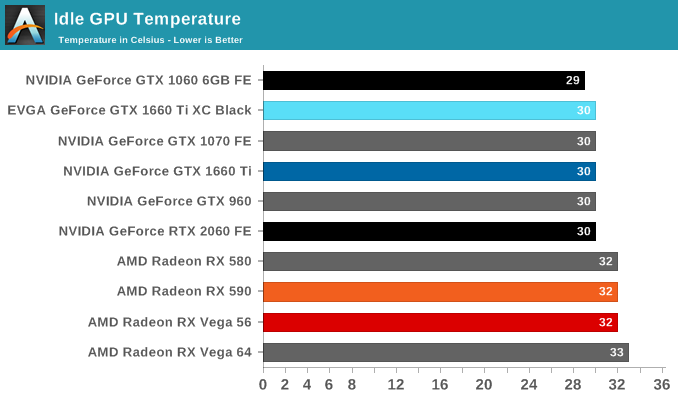

Looking at temperatures, there are no big surprises here. EVGA seems to have tuned their card for high performance cooling, and as a result the large, 2.75-slot card reports some of the lowest numbers in our charts, including a 67C under FurMark when the card is capped at the reference spec GTX 1660 Ti's 120W limit.

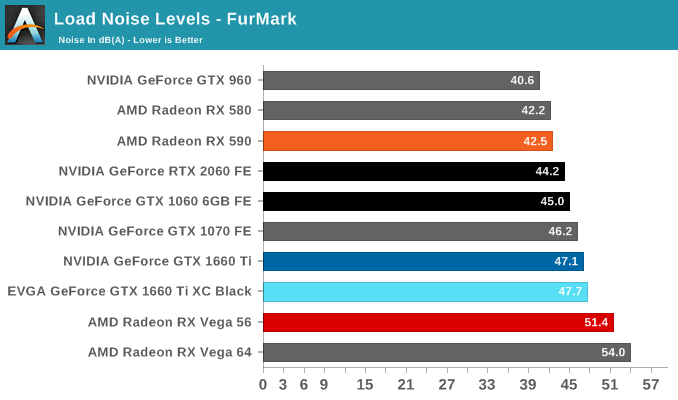

Noise

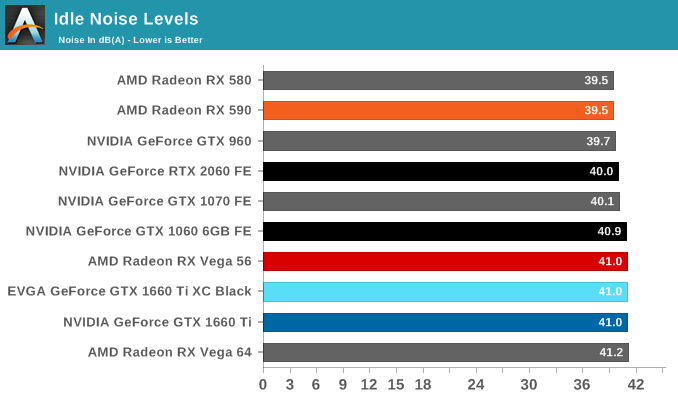

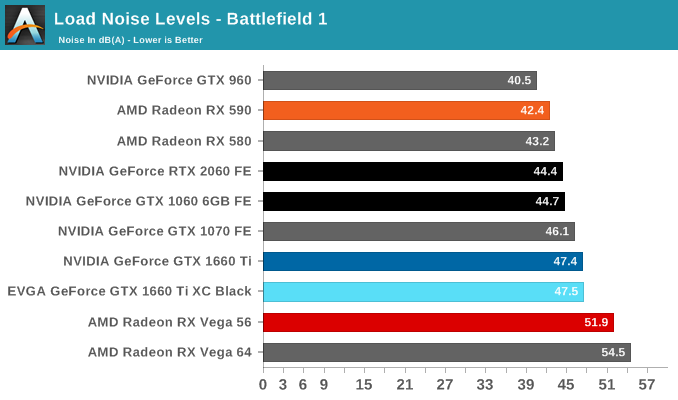

Turning again to EVGA's card, despite being a custom open air design, the GTX 1660 Ti XC Black doesn't come with 0dB idle capabilities and features a single smaller but higher-RPM fan. The default fan curve puts the minimum at 33%, which indicates that EVGA has tuned the card for cooling over acoustics. That's not an unreasonable tradeoff to make, but it's something I'd consider more appropriate for a factory overclocked card. For their reference-clocked XC card, EVGA could very well have gone with a less aggressive fan curve and still easily maintained sub-80C temperatures while reducing their noise levels as well.

157 Comments


wintermute000 - Friday, February 22, 2019 - link

There is no way your 970 runs 1440p maxed in modern AAA games. Unless your definition of maxed includes frames well below 60 and settings well below ultra.

I have a 1060 and it needs medium to medium-high to reliably hold 60FPS @ 1440p.

eddman - Friday, February 22, 2019 - link

$280 for ~40% on average better performance and still 6GB of memory? I already have a 6GB 1060. I suppose I have to wait for navi or 30 series before actually upgrading.

Fallen Kell - Friday, February 22, 2019 - link

I guess you missed the part where their memory compression technology has increased performance another 20-33% over previous generation 10xx cards, negating the need to higher memory bandwidth and more space within the card. So, 6GB on this card is essentially like 8-9GB on the previous generation. That is what compression can do (as long as you can compress and decompress fast enough, which doesn't seem to be a problem for this hardware).

eddman - Friday, February 22, 2019 - link

No, I didn't. Compression is not a replacement for physical memory, no matter what nvidia claims.

eddman - Friday, February 22, 2019 - link

I'm not an expert on this topic, but they state compression is used as a mean to improve bandwidth, not memory space consumption.

Someone more knowledgeable can clear this up, but to my understanding textures are compressed when moving from vram to gpu, and not when loading from hdd/ssd or system memory into vram.

Ryan Smith - Friday, February 22, 2019 - link

"I'm not an expert on this topic, but they state compression is used as a mean to improve bandwidth, not memory space consumption."

You are correct.

atiradeonag - Friday, February 22, 2019 - link

Laughing at those who think they can get a $279 Vega56 right now: where's your card? where's the link?

atiradeonag - Friday, February 22, 2019 - link

Posting a random "sale" being instantly OOS is the usual failed stunt that fanboys from a certain faction to argue for the price/perf

Oxford Guy - Saturday, February 23, 2019 - link

It's also Newegg par for the course.

CiccioB - Friday, February 22, 2019 - link

At those all rejoicing that Vega56 is selling for a slice of bread.. that's the end that failing architecture do when they are a generation behind.

Yes, nvidia cards are pricey, but that's because AMD solutions can stand up the competition with them even with expensive components like HBM and tons more of W to suck.

So stop laughing about how poor is this new card price/performance ratio, after few weeks it will have the ratio that the market is going to give it. What we have seen so far is that Vega appeal has gone under the ground level, and as for any new nvidia launch AMD can answer only with a price cut, close followed by a rebrand of something that is OCed (and pumped with even more W).

GCN was dead at its launch time. Let's really hope Navi is something new or we will have nvidia monopoly on the market for another 2 year period.