The AMD Radeon VII Review: An Unexpected Shot At The High-End

by Nate Oh on February 7, 2019 9:00 AM EST

Radeon VII & Radeon RX Vega 64 Clock-for-Clock Performance

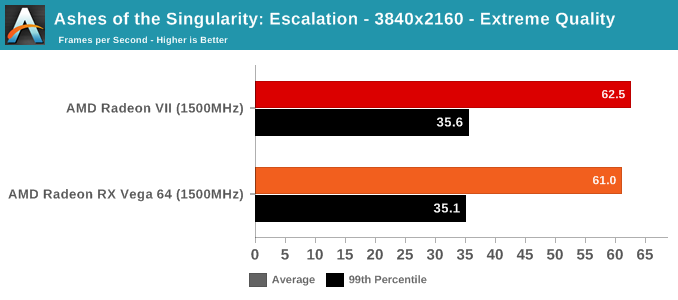

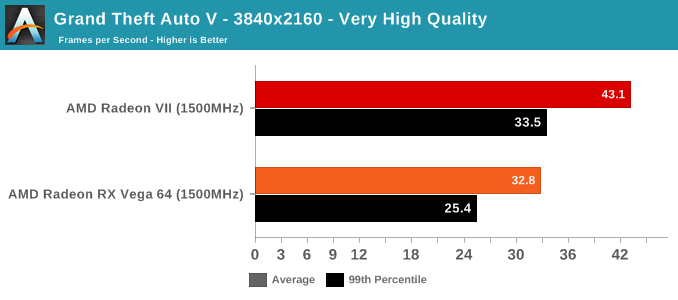

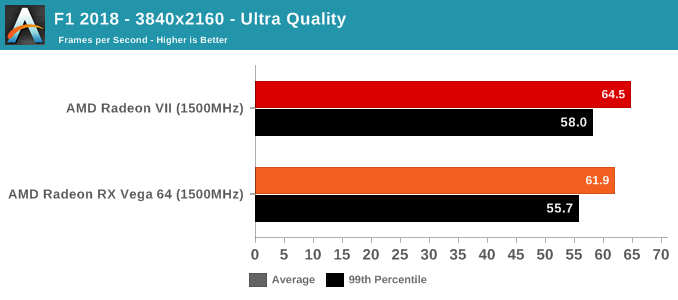

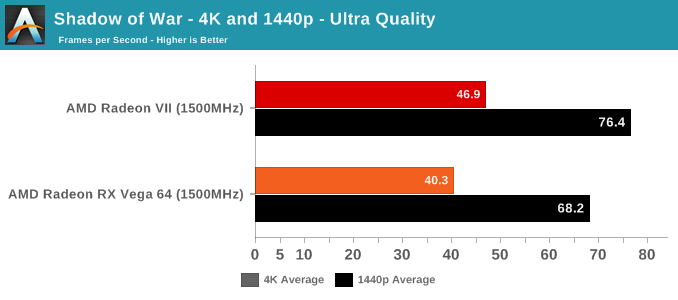

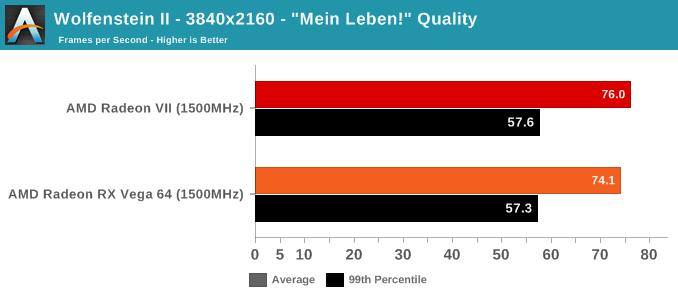

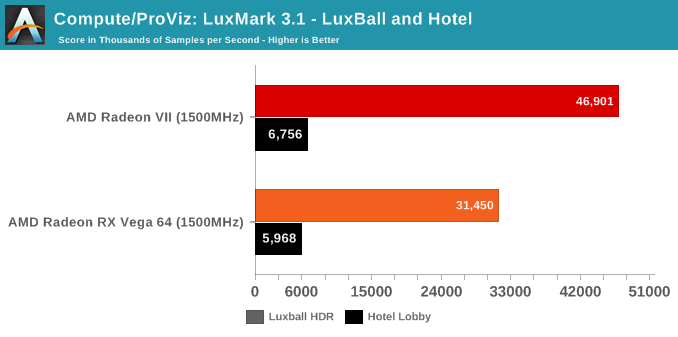

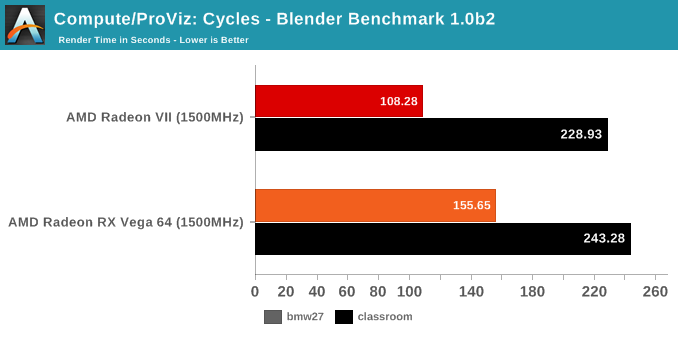

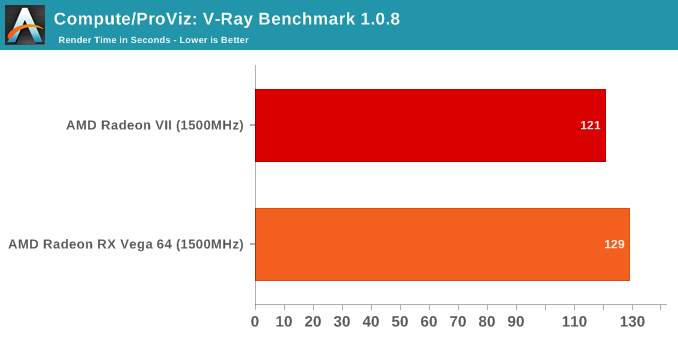

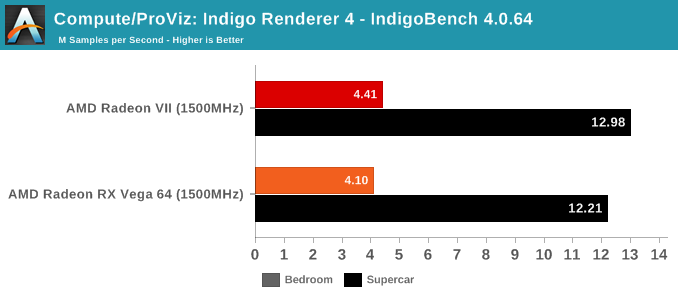

With the variety of changes from the Vega 10-powered RX Vega 64 to the new Radeon VII and its Vega 20 GPU, we wanted to look at graphics and compute performance while controlling for clockspeeds. This lets us check for any substantial improvements or differences in pseudo-IPC. There are a couple of caveats here. First, because the RX Vega 64 has 64 CUs while the Radeon VII has only 60, the comparison is already not exact. Second, "IPC" is not exactly the metric measured here; what we are really measuring is how much graphics/compute work is done per clock cycle and how that might translate to performance. Isoclock GPU comparisons tend to be less useful across generations and architectures because, as with Vega, designers often add pipeline stages to enable higher clockspeeds, at the cost of reducing the work done per cycle and usually also increasing latency.
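To put the CU caveat in numbers, here is a back-of-the-envelope sketch of theoretical FP32 throughput for both cards at the 1500MHz test clock. It assumes the standard GCN/Vega layout of 64 stream processors per CU, each retiring one fused multiply-add (counted as two FLOPs) per clock; the function name is ours, for illustration only.

```python
# Theoretical peak FP32 throughput for a GCN/Vega-style GPU at a fixed clock.
# Assumption: 64 stream processors per CU, one FMA (= 2 FLOPs) per SP per clock.
SHADERS_PER_CU = 64
FLOPS_PER_SHADER_PER_CLOCK = 2


def peak_fp32_tflops(compute_units: int, clock_mhz: float) -> float:
    """Hypothetical helper: peak FP32 TFLOPS = CUs x SPs/CU x 2 x clock."""
    flops = compute_units * SHADERS_PER_CU * FLOPS_PER_SHADER_PER_CLOCK * clock_mhz * 1e6
    return flops / 1e12


if __name__ == "__main__":
    vega64 = peak_fp32_tflops(64, 1500)   # RX Vega 64 set to 1500MHz
    radeon7 = peak_fp32_tflops(60, 1500)  # Radeon VII set to 1500MHz
    print(f"RX Vega 64: {vega64:.3f} TFLOPS")
    print(f"Radeon VII: {radeon7:.3f} TFLOPS")
    # At equal clocks the ratio reduces to the CU ratio, 60/64.
    print(f"Radeon VII / RX Vega 64: {radeon7 / vega64:.4f}")
```

At equal clocks the clock term cancels, so on paper the Radeon VII starts about 6% behind purely on CU count, before any architectural or memory differences come into play.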

For our purposes, the incremental nature of second-generation Vega allays some of those concerns. Unfortunately, Wattman was unable to downclock memory at this time, so we couldn't collect a set of datapoints with both cards configured for comparable memory bandwidth. While Vega's GPU boost mechanics mean there's no static pinned clockspeed, both cards were set to 1500MHz, and both fluctuated between 1490 and 1500MHz depending on the workload. Taken together, these results should be treated as approximations that lack granularity, but they are useful for spotting significant increases or decreases. This also makes the results trickier to interpret, but at a high level, if the Radeon VII outperforms the RX Vega 64 in a given non-memory-bound workload, then we can assume meaningful 'work per cycle' enhancements relatively decoupled from CU count.
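The interpretation rule above can be sketched as a small classifier. This is a hypothetical helper, not part of our test tooling: the function name, the labels, and the 2% noise margin (meant to absorb run-to-run variance and the 1490-1500MHz clock jitter) are all assumptions for illustration. It also reports a per-CU ratio that credits the Radeon VII for its four missing CUs.

```python
# Hypothetical helper: reads a same-clock Radeon VII vs RX Vega 64 result
# following the rule in the text. Names and the noise margin are illustrative.
from typing import Tuple

VII_CUS = 60     # Radeon VII compute units
VEGA64_CUS = 64  # RX Vega 64 compute units


def interpret(vii_score: float, v64_score: float, memory_bound: bool,
              noise: float = 0.02) -> Tuple[str, float, float]:
    """Classify a same-clock result from higher-is-better scores (e.g. average fps).

    noise is an assumed margin, not a value derived from our testing.
    Returns (label, raw ratio, per-CU ratio).
    """
    raw = vii_score / v64_score
    # Scale up by 64/60 to credit Radeon VII for the CUs it lacks.
    per_cu = raw * VEGA64_CUS / VII_CUS
    if memory_bound:
        # Memory bandwidth could not be equalized, so a lead here proves little.
        label = "inconclusive: memory-bound"
    elif raw > 1 + noise:
        # Faster with fewer CUs at the same clock: a work-per-cycle gain.
        label = "work-per-cycle gain"
    elif raw < 1 - noise:
        label = "slower at same clock"
    else:
        label = "roughly equal"
    return label, raw, per_cu


if __name__ == "__main__":
    # Synthetic inputs for demonstration only, not measured results.
    for vii, v64, mem in [(105.0, 100.0, False), (100.0, 100.0, False), (110.0, 100.0, True)]:
        label, raw, per_cu = interpret(vii, v64, mem)
        print(f"{label:28s} raw={raw:.3f} per-CU={per_cu:.3f}")
```

Checking the memory-bound flag first mirrors the caveat that only non-memory-bound workloads can say anything about work per cycle.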

As mentioned above, we were not able to control for the doubled memory bandwidth. In terms of gaming, though, the only unexpected result is GTA V. As an outlier, it's less likely to indicate increased gaming 'work per cycle' and more likely to be related to driver optimizations and the increased memory bandwidth. GTA V has historically been a title where AMD hardware doesn't reach the expected level of performance, so there has been room for driver improvement regardless.
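The "doubled memory bandwidth" follows directly from the reference memory configurations, which we assume here: the RX Vega 64 pairs two HBM2 stacks (2048-bit bus) at 945MHz, while the Radeon VII uses four stacks (4096-bit bus) at 1000MHz, both double data rate. A minimal sketch of the arithmetic, with a helper name of our own choosing:

```python
# Peak memory bandwidth = bus width (bits) x per-pin data rate (Gb/s) / 8 bits per byte.
def hbm2_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Hypothetical helper: theoretical peak bandwidth in GB/s."""
    return bus_width_bits * data_rate_gbps / 8


if __name__ == "__main__":
    # Assumed reference configurations; each HBM2 stack has a 1024-bit interface.
    vega64 = hbm2_bandwidth_gbs(2048, 1.89)  # 945MHz, DDR -> 1.89 Gb/s per pin
    radeon7 = hbm2_bandwidth_gbs(4096, 2.0)  # 1000MHz, DDR -> 2.0 Gb/s per pin
    print(f"RX Vega 64: {vega64:.1f} GB/s")
    print(f"Radeon VII: {radeon7:.1f} GB/s")
    print(f"Ratio: {radeon7 / vega64:.2f}x")
```

This works out to roughly 484GB/s versus 1TB/s, a little over 2x. Because memory clocks could not be lowered in Wattman, that gap stays in every isoclock result.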

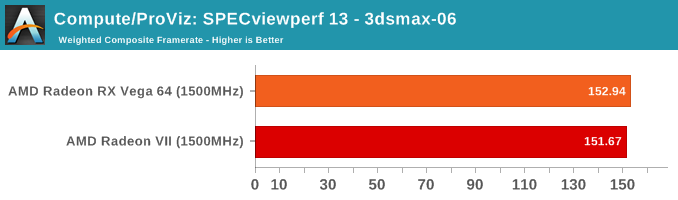

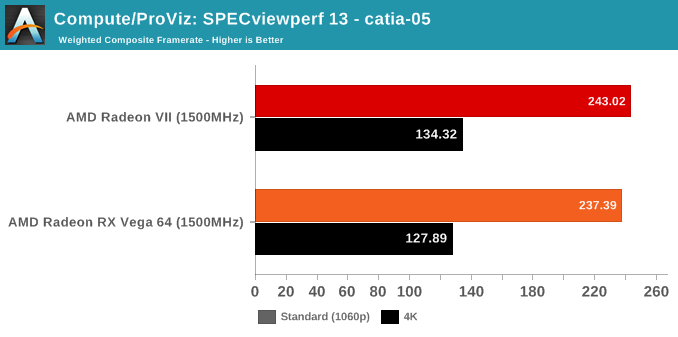

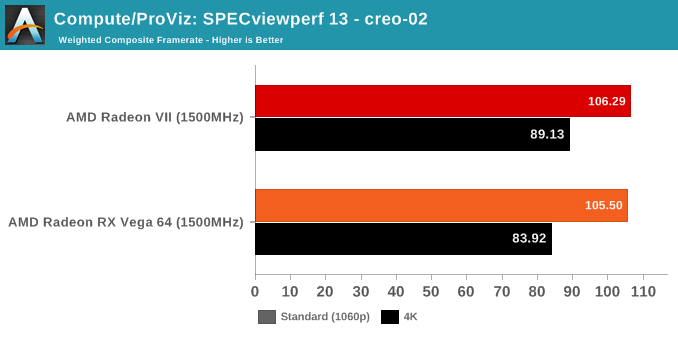

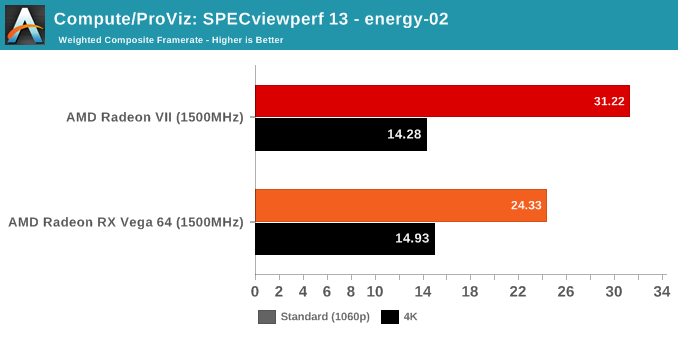

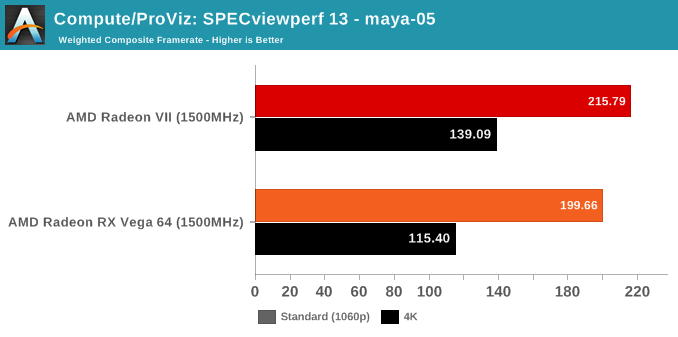

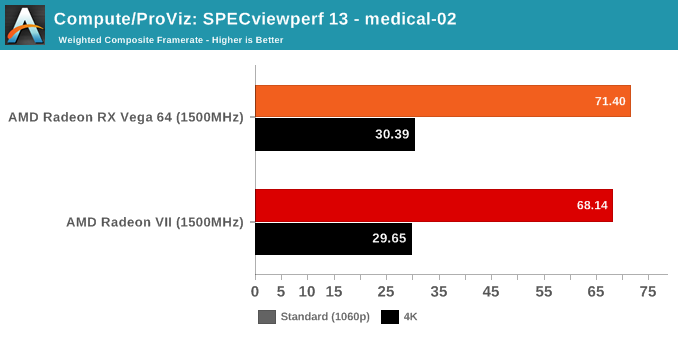

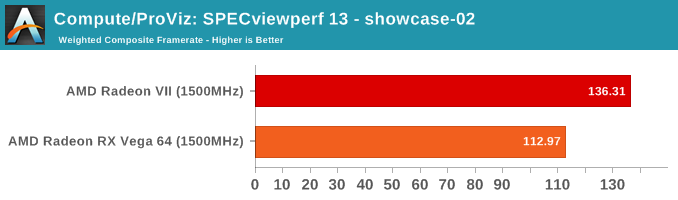

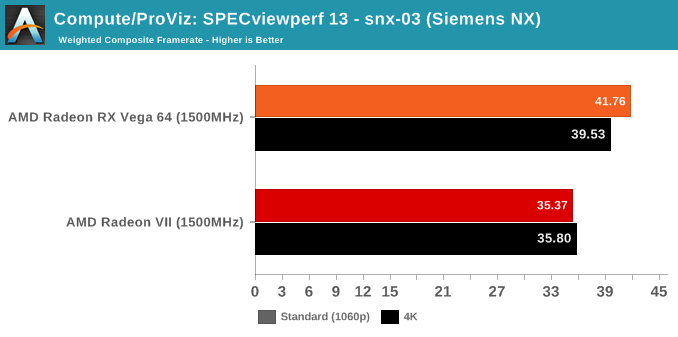

SPECviewperf is a slightly different story, though.

289 Comments

View All Comments

KateH - Friday, February 8, 2019

thirded on still enjoying SupCom! I have, however, long ago given up on attempting to find the ultimate system to run it. i7 920 @ 4.2GHz, nope. FX-8150 @ 4.5GHz, nope. The engine still demands more CPU for late-game AI swarms! (And I like playing on 81x81 maps, which makes it much worse.)

Korguz - Friday, February 8, 2019

Holliday75 and KateH, I've run SupCom on an i7 930 OC'd to 4.2 on a 7970: slow as molasses late in the game vs. the AI. On my current i7 5930K and Strix 1060... same thing, very slow late in the game. The later patches supposedly helped the game use more than 1 or 2 cores; I think Gas Powered Games called it "multi-core aware".

makes me wonder how it would run on something newer like a Threadripper, a top-end Ryzen or top-end i7, or an i9 with a 1080+ video card, compared to my current comp....

eva02langley - Friday, February 8, 2019

Metal Gear Solid V, Street Fighter 5, Soulcalibur 6, Tekken 7, Senua's Sacrifice... Basically, nothing from EA, Ubisoft, Activision or Epic.

ballsystemlord - Thursday, February 7, 2019

Oh oh! Would you be willing to post some FLOSS benchmarks? Xonotic, 0 A.D., OpenClonk and SuperTuxKart?

Manch - Friday, February 8, 2019

I would like to see a mixture of games that are dedicated to a single API and ones that support all three, or at least two, of them. I think that would make for a good spread.

Manch - Thursday, February 7, 2019

Not sure that I expected more. The clock-for-clock comparison against the V64 is telling. @$400 for the V64 vs $700 for the VII, ummm.... If you need a compute card as well, sure; otherwise, Nvidia's got the juice you want at better temps for the same price. Not a bad card, but it's not a great card either. I think a full 64 CUs may have improved things a bit more and even put it over the top.

Could you do a clock-for-clock comparison against the 56, since they have the same CU count?? I'd be curious to see this and extrapolate what a VII with 64 CUs would perform like, just for shits and giggles.

mapesdhs - Friday, February 8, 2019

Are you really suggesting that, given two products which are basically the same, you automatically default to NVIDIA because of temperatures?? This really is the NPC mindset at work. At least AMD isn't ripping you off with the price; Radeon VII is expensive to make, whereas NVIDIA's margin is enormous. Remember, the 2080 is what should actually have been the 2070. The entire stack is a level higher than it should be, confirmed by the die code numbers and the ludicrous fact that the 2070 doesn't support SLI.

Otoh, Radeon VII is generally too expensive anyway; I get why AMD have done it, but really it's not the right way to tackle this market. They need to hit the midrange first and spread outwards. Stay out of it for a while, then come back with a real hammer blow like they did with CPUs.

Manch - Friday, February 8, 2019

Well, they're not basically the same. Who's the NPC LOL? I have a V64 in my gaming rig. It's loud, but I do like it for the price. The 2080 is a bit faster than the VII for the same price, and it runs cooler and quieter. For some, that is more important. If gaming is all you care about, get it. If you need compute, live with the noise and get the VII.

I don't care how expensive it is to make. If AMD could put out a card at this level of performance, they would, and they would sell it at this price.

Barely anyone uses SLI/Crossfire. It's not worth it. I previously had two 290X 8GB cards in Crossfire. I needed a better card for VR, and the V64 was the answer. It's louder, but it was far cheaper than the competitors, and the game bundle helped. Before that, I had bought a 1070 for the wife's computer. It was a good deal at the time. Some of y'all get too attached to your brands and get all frenzied at any criticism. I buy what suits my needs at the best price/perf.

AdhesiveTeflon - Friday, February 8, 2019

Not our fault AMD decided to make a video card with more expensive components and not beat the competition.