The NVIDIA GeForce RTX 2060 6GB Founders Edition Review: Not Quite Mainstream

by Nate Oh on January 7, 2019 9:00 AM EST

Battlefield 1 (DX11)

Battlefield 1 returns from the 2017 benchmark suite with a bang, as DICE brought gamers the long-awaited AAA World War 1 shooter a little over a year ago. With detailed maps, environmental effects, and pacy combat, Battlefield 1 provides a generally well-optimized yet demanding graphics workload. The next Battlefield game from DICE, Battlefield V, completes the nostalgia circuit with a return to World War 2. More importantly for us, it is one of the flagship titles for GeForce RTX real-time ray tracing, although at this time its ray tracing implementation isn't ready to be used as a generalizable benchmark.

The Ultra preset is used with no alterations. As these benchmarks are from single player mode, our rule of thumb with multiplayer performance still applies: multiplayer framerates generally dip to half our single player framerates. Battlefield 1 also supports HDR (HDR10, Dolby Vision).

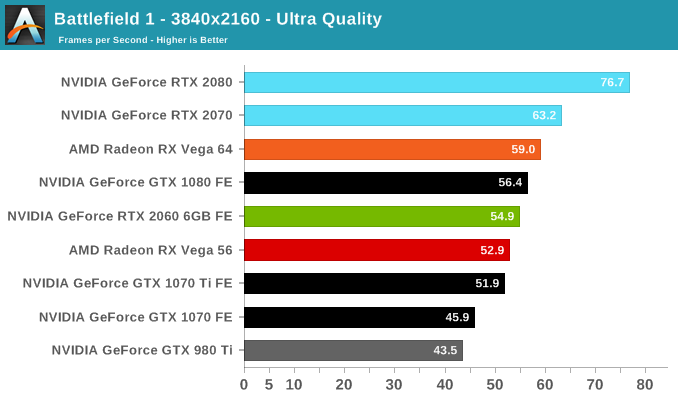

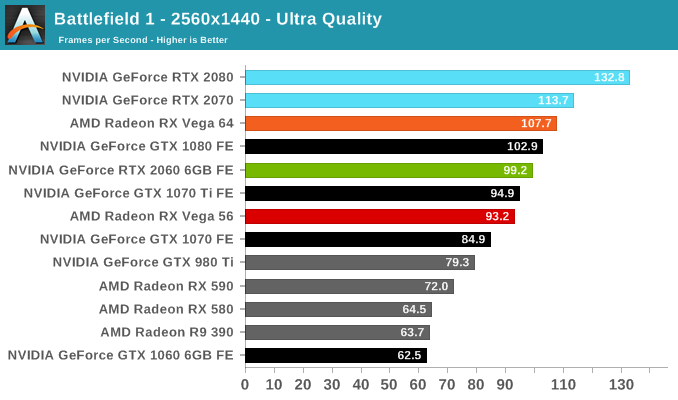

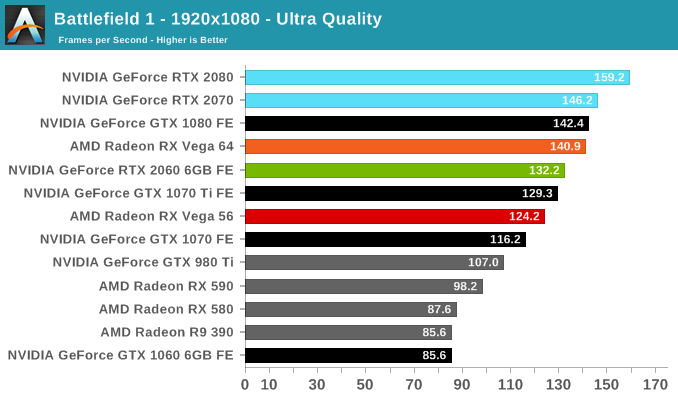

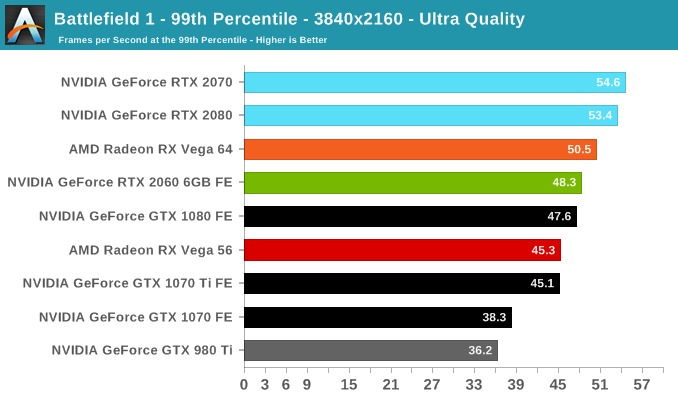

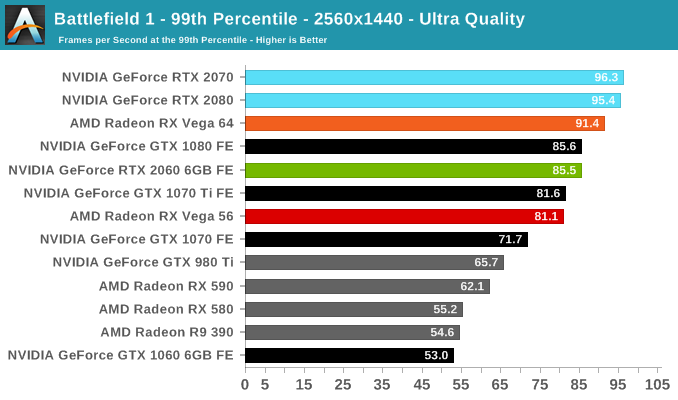

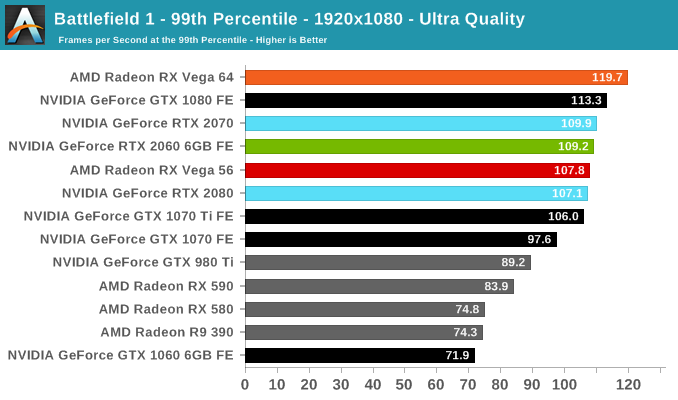

Battlefield 1 has made the rounds for some time, and after the optimizations over the years both manufacturers generally enjoy solid performance across the board. The RTX 2060 (6GB) is no exception and fares well, splitting the difference between the GTX 1070 Ti and GTX 1080. This also means it opens a lead on the RX Vega 56.

134 Comments

sing_electric - Monday, January 7, 2019 - link

It's likely that Nvidia has actually done something to restrict the 2060s to 6GB - either through its agreements with board makers or by physically disabling some of the RAM channels on the chip (or both). I agree, it'd be interesting to see how it performs, since I'd suspect it'd be at a decent price/perf point compared to the 2070, but that's also exactly why we're not likely to see it happen.

CiccioB - Monday, January 7, 2019 - link

You can't add memory at will. You need to take into consideration the available bus, and as this is a 192-bit bus, you can install 3, 6 or 12 GB of memory unless you cope with a hybrid configuration through heavily optimized drivers (as nvidia did with 970).

nevcairiel - Monday, January 7, 2019 - link

Even if they wanted to increase it, just adding 2GB more is hard to impossible. The chip has a certain memory interface, in this case 192-bit. That's six 32-bit memory controllers, for six 1GB chips. You cannot just add 2 more without getting into trouble - like the 970, which had unbalanced memory speeds, which was terrible.

mkaibear - Tuesday, January 8, 2019 - link

"terrible" in this case defined as "unnoticeable to anyone not obsessed with benchmark scores"

Retycint - Tuesday, January 8, 2019 - link

It was unnoticeable back then, because even the most intensive game/benchmark rarely utilized more than 3.5GB of RAM. The issue, however, comes when newer games inevitably start to consume more and more VRAM - at which point the "terrible" 0.5GB of VRAM will become painfully apparent.

mkaibear - Wednesday, January 9, 2019 - link

So, you agree with my original comment which was that it was not terrible at the time? Four years from launch and it's not yet "painfully apparent"? That's not a bad lifespan for a graphics card. Or if you disagree can you tell me which games, now, have noticeable performance issues from using a 970?

FWIW my 970 has been great at 1440p for me for the last 4 years. No performance issues at all.
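
The bus-width arithmetic in the comments above can be sketched in a few lines of Python. This is only an illustration of the reasoning given by the commenters (one memory chip per 32-bit controller, every chip the same size). The function names and the per-chip capacities of 0.5, 1 and 2 GB are assumptions chosen to reproduce the 3, 6 and 12 GB figures quoted above, not a statement about which memory parts are actually available.

```python
def balanced_capacities_gb(bus_width_bits, chip_sizes_gb=(0.5, 1, 2), channel_bits=32):
    """Return the total capacities possible with one identical chip per channel.

    chip_sizes_gb is a hypothetical list of per-chip capacities, used only
    to illustrate the arithmetic from the comment thread.
    """
    if bus_width_bits % channel_bits != 0:
        raise ValueError("bus width must be a multiple of the channel width")
    chips = bus_width_bits // channel_bits  # 192 / 32 = 6 controllers, so 6 chips
    return [chips * size for size in chip_sizes_gb]


def is_balanced(total_gb, bus_width_bits):
    """True if total_gb can be reached with identical chips on every channel."""
    return total_gb in balanced_capacities_gb(bus_width_bits)


print(balanced_capacities_gb(192))  # capacities for a 192-bit bus
print(is_balanced(8, 192))          # 8 GB does not divide evenly across 6 chips
```

Under these assumptions, 8 GB on a 192-bit bus is not a balanced configuration, which is the point made above about simply adding 2 GB more.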

atragorn - Monday, January 7, 2019 - link

I am more interested in that comment "yesterday’s announcement of game bundles for RTX cards, as well as ‘G-Sync Compatibility’, where NVIDIA cards will support VESA Adaptive Sync. That driver is due on the same day of the RTX 2060 (6GB) launch, and it could mean the eventual negation of AMD’s FreeSync ecosystem advantage." will ALL nvidia cards support Freesync/Freesync2 or only the RTX series?

A5 - Monday, January 7, 2019 - link

Important to remember that VESA ASync and FreeSync aren't exactly the same. I don't *think* it will be instant compatibility with the whole FreeSync range, but it would be nice. The G-sync hardware is too expensive for its marginal benefits - this capitulation has been a loooooong time coming.

Devo2007 - Monday, January 7, 2019 - link

AnandTech's article about this last night mentioned support will be limited to Pascal & Turing cards.

Ryan Smith - Monday, January 7, 2019 - link

https://www.anandtech.com/show/13797/nvidia-to-sup...