The NVIDIA GeForce RTX 2060 6GB Founders Edition Review: Not Quite Mainstream

by Nate Oh on January 7, 2019 9:00 AM EST

Final Fantasy XV (DX11)

Upon arriving on PC in early 2018, Final Fantasy XV: Windows Edition was given a graphical overhaul as it was ported over from console, the fruit of Square Enix's successful partnership with NVIDIA, with hardly any hint of the troubles during Final Fantasy XV's original production and development.

In preparation for the launch, Square Enix opted to release a standalone benchmark that they have since updated. Using the Final Fantasy XV standalone benchmark gives us a lengthy standardized sequence to capture with OCAT. Upon release, the standalone benchmark received criticism for performance issues and general bugginess, as well as confusing graphical presets and performance measurement by 'score'. In its original iteration, the graphical settings could not be adjusted, leaving the user to the presets that were tied to resolution and hidden settings such as GameWorks features.

Since then, Square Enix has patched the benchmark with custom graphics settings and bugfixes for better accuracy in profiling in-game performance and graphical options, though leaving the 'score' measurement. For our testing, we enable or adjust settings to the highest except for NVIDIA-specific features and 'Model LOD', the latter of which is left at standard. Final Fantasy XV also supports HDR, and it will support DLSS at some later date.
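As a rough illustration of how a frame-time capture from a run like this can be reduced to headline numbers, here is a minimal Python sketch. The function name, the input format (a plain list of per-frame times in milliseconds), and the choice of metrics (average frame rate and a nearest-rank 99th-percentile frame time) are assumptions made for the example; they are not a description of OCAT's output format or of the exact method behind the charts below.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average FPS, 99th-percentile frame time in ms) for one run.

    frame_times_ms is a hypothetical input: one entry per rendered frame,
    each the time in milliseconds that frame took.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    ordered = sorted(frame_times_ms)
    n = len(ordered)
    # Average FPS is total frames over total time, not the mean of per-frame FPS.
    avg_fps = 1000.0 * n / sum(ordered)
    # Nearest-rank 99th percentile: ceil(0.99 * n), done in integer arithmetic.
    rank = (99 * n + 99) // 100
    p99_ms = ordered[rank - 1]
    return avg_fps, p99_ms


# Example: 99 smooth frames and a single 50 ms hitch.
avg_fps, p99_ms = summarize_frame_times([10.0] * 99 + [50.0])
print(f"average: {avg_fps:.1f} fps, 99th percentile frame time: {p99_ms:.1f} ms")
```

Summing the frame times before dividing keeps a handful of very fast frames from inflating the average, which is why the sketch does not average per-frame FPS values directly.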

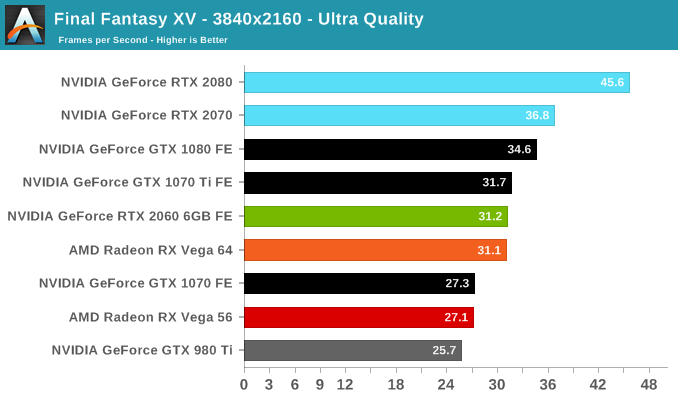

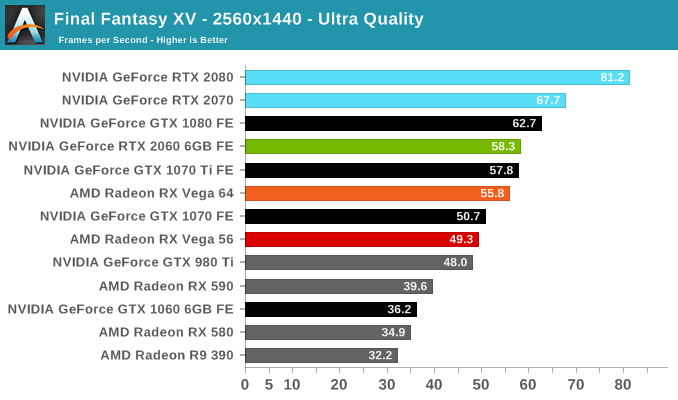

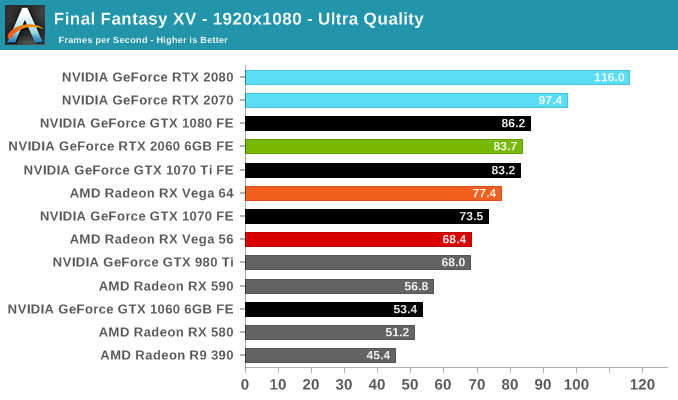

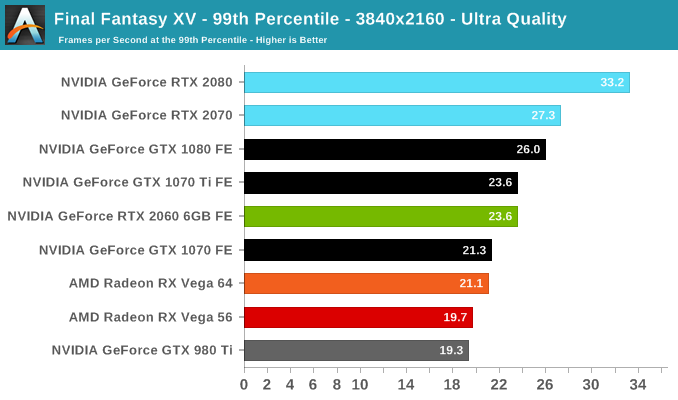

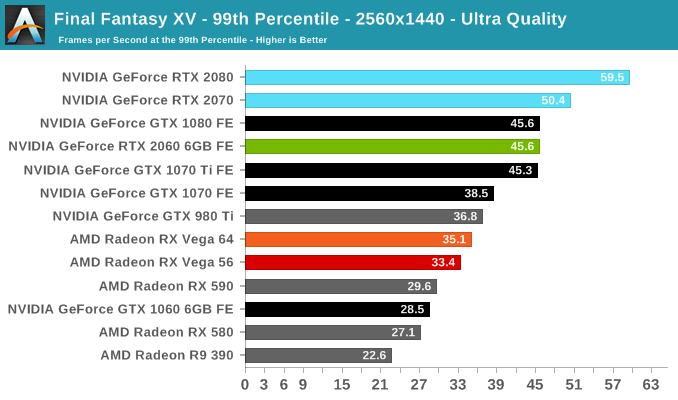

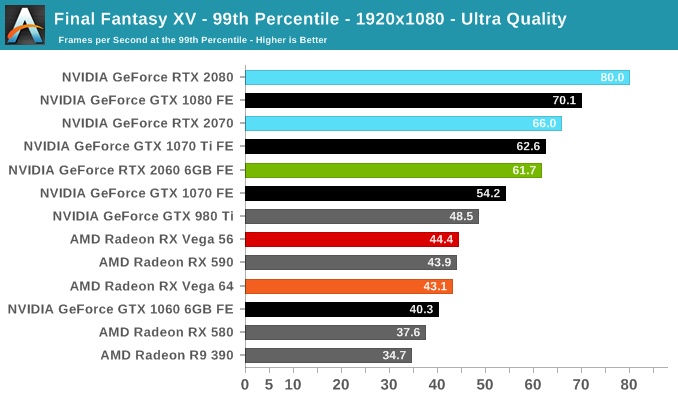

At 1080p and 1440p, the RTX 2060 (6GB) returns to its place between the GTX 1080 and GTX 1070 Ti. Final Fantasy is less favorable to the Vega cards so the RTX 2060 (6GB) is already faster than the RX Vega 64. With the relative drop in 4K performance, there are more hints of 6GB being potentially insufficient.

134 Comments

Bluescreendeath - Monday, January 7, 2019 - link

And take a look at the article's 4k benchmarks. The 2060 6gb performs equal to or better than the vega 64 or gtx1070 gpus with 8GB of vram even on 4k resolution. You are overly hung up on paper specs and completely missing what is actually important - real world performance. The 2060 clearly has sufficient VRAM as it performs better than its closest priced competitors and spanks the rx580 8gb by 50-60%.

ryrynz - Tuesday, January 8, 2019 - link

This guy gets it.

TheJian - Tuesday, January 8, 2019 - link

ROFL. AMD is aiming first 7nm cards at GTX 1080/1070ti perf. This is not going to kill NV's cash cows, which 80% of NET INCOME comes from ABOVE $300. IE, 2060 on up and workstation/server cards at 80% of NV's INCOME (currently at ~3B a year, AMD <350mil).

NV is one of the companies listed as having already made LARGE orders at TSMC, along with Apple, AMD etc. The only reason AMD is first (July, MAYBE, So NV clear sailing for 6 more months on 2060+ pricing and NET INCOME), is Nvidia is waiting for price to come down so they can put out a LARGER die (you know brute force you mentioned), AND perhaps more importantly their 12nm 2080ti will beat the snot out of AMD 7nm for over a year as AMD is going small with 7nm because it's NEW. NV asked TSMC to make 12nm special for them...LOL, as you can do that with 3B net INCOME. It worked as it smacked around AMD 14nm chips and looks like they'll be fine vs. 7nm that is SMALL die sizes. If you are NOT competing above $300 you'll remain broke vs. Intel/NV.

https://www.extremetech.com/computing/283241-nvidi...

As he says, not likely NV will allow more than 6-12 months on a new node without a response from NV, though if you’re not battling above $300, no point for an NV response as not much of their money is under $250.

“If Navi is a midrange play that tops out at $200-$300, Nvidia may not care enough to respond with a new top-to-bottom architectural refresh. If Navi does prove to be competitive against the RTX 2070 at a lower price point, Nvidia could simply respond by cutting Turing prices while holding a next-generation architecture in reserve precisely so it can re-establish the higher prices it so obviously finds preferable. This is particularly true if Navi lacks GPU ray tracing and Nvidia can convince consumers that ray tracing is a technology worth investing in.”

AGREED. No response needed if you are NOT chasing my cash cows. Again, just a price cut needed even if NAVI is really good (still missing RT+DLSS) and then right back to higher prices with 7nm next gen Q1 2020? This is how AMD should operate, but management doesn’t seem to get chasing RICH people instead of poor.

BRUTE FORCE=LARGER than your enemy, and beating them to death with it (NV does this, reticle limits hit regularly). NV 12nm is 445mm^2, AMD 7nm looks like 1/2 that. The process doesn't make up that much, so no win for AMD vs. top end NV stuff obviously and they are not even claiming that with 1080 GTX perf...LOL. 10-15% better than Vega64 or 1080 (must prove this at $249)? Whatever you're smoking, please pass it. ;)

Raytracing+DLSS on RTX 2060 is 88fps. Without both 90fps. I call that USEFUL, not useless. I call it FREE raytracing.

https://wccftech.com/amd-rx-3080-3070-3060-navi-gp...

Not impressed for no RT/DLSS tech likely for another year as AMD said they won't bother for now. This card doesn't compete vs ANY RTX card, as they come with RT+DLSS as NV shows releasing new 10 series cards, probably to justify the RTX pricing too (showing you AMD is in a different rung by rehashing 10 series). Which as you see below DLSS+RT massively boosts perf so it's free. It's clear using tensor cores to boost RT is pretty great.

https://wccftech.com/nvidia-geforce-rtx-2060-offic...

Now that is impressive. Beats my 1070ti handily, looks better while doing it, and shaves off 20-30w from 180. That will be 120w with 7nm and likely faster and BEFORE xmas if AMD 7nm is anything worth talking about on gpu (cpu great, gpu aimed too low, like xbox1/ps4 needing another rev or two...LOL). 5Grays/s (none for AMD, this year) which is plenty for 1080p as shown. It's almost like getting a monitor upgrade for free (1080p DLSS+RT is 1440p or better looking with no perf loss). What do you think happens with games DESIGNED for RT/DLSS instead of patched in like BF5? LOL. These games will be faster than BF5 unless designed poorly right? It only took 3 weeks to up perf 50% in a game NOT made for the tech (due to a bug in the hardware EA found I guess as noted in their vid - NV fixed, and bam, perf up 50%). 6.5TF, which even without RT+DLSS is looking tough for AMD 7nm currently based on announcements above. AMD will be 150w for 3080 it seems, vs. NV 150-160w 12nm 2060 etc. Good luck, no wiping away NV here.

"If you’re having a hard time believing this, don’t worry, you’re not alone because it does sound unbelievable."

Yep. I agree, but we'll see. Not too hard to understand why AMD would have to price down, as it has no RT+DLSS. You get more from NV, so it's likely higher if rumor pricing on AMD is correct.

Calling 60% barely faster is just WRONG. Categorically WRONG. Maybe you could say that at 10%, heck I'll give 15% to you too. But at 60% faster, you're not even on the same playing field now. RTX cards (2060+ all models total) will sell MILLIONS in the next year and likely have already sold a million (millions? All of xmas sales) with 2080/2080ti/2070 out for a while. It only takes 10mil for a dev to start making games for consoles, and I’m guessing it’s the same roughly for a PC technology (at 7nm they’ll ALL be RTX probably to push the tech). The first run of Titans was 100k and sold out in an F5 olympics session...LOL. Heck the 2nd run did the same IIRC and NV said they couldn’t make them as fast as they sold them. I'm pretty sure volume on a 2060 card is MUCH higher than Titan runs (500k? A million run for midrange?). I think we’re done here, as you have no data to back up your points ;) Heck we can’t even tell what NV is doing on 7nm yet, as it’s all just RUMOR as the extremetech article shows. Also, I HIGHLY doubt AMD will decimate the new 590 with prices like WCCFTECH rumored. The 3070 price would pretty much KILL all 590 sales if $199. Again, I don’t believe these prices, but we’ll see if AMD is this dumb. NONE of these “rumors” look like they are AMD slides etc. Just words on a page. I think they’ll all be $50 or more higher than rumored.

Bp_968 - Tuesday, January 8, 2019 - link

I think you're confused about DLSS. DLSS is fancy upscaling. It won't make a 1080p image look like 1440p, it will make a 720p image "sorta" look like a 1080p image (does it even work in 1080p now? It was originally only available on 1440p and 4k, for obvious reasons.).

The 2060 is the best value 20 series card so far but when compared to historical values it's absolute garbage. In the $350 price bracket you had the 4GB 970, then the 8GB 1070/1070ti, and now the *6GB* 2060. The 1070 and 1070ti were a huge improvement over the equivalent priced 970 and the 2060 (the same price bracket) is a couple of percent better (if that) and at a 2GB memory deficit!

Nvidia shoveled an enterprise design onto gamers to try and recover some R&D costs from gamers itching for a new generation card. There are already rumors of a 2020 release of an entirely new design based on 7nm. This seems quite plausible considering they are going to be facing new 7nm cards from AMD and *something* from Intel in 2020. And underestimating Intel has always been a very very dangerous thing to do.

D. Lister - Tuesday, January 8, 2019 - link

"DLSS is fancy upscaling. It won't make a 1080p image look like 1440p, it will make a 720p image "sorta" look like a 1080p image (does it even work in 1080p now? It was originally only available on 1440p and 4k, for obvious reasons.)."

Em no, it's not upscaling, but rather the opposite.

https://www.digitaltrends.com/computing/everything...

"DLSS also leverages some form of super-sampling to arrive at its eventual image. That involves rendering content at a higher resolution than it was originally intended for and using that information to create a better-looking image. But super-sampling typically results in a big performance hit because you’re forcing your graphics card to do a lot more work. DLSS however, appears to actually improve performance."

That is where the tensor AI comes in. It is trained in-house by Nvidia with the game, with higher res images (up to 64X resolution), which the AI then applies to change the image you see by effectively applying supersampling (aka DOWNscaling).

Example:

If object A looks like A1 at 1080p, which looks like A2 if downsampled from resolution(n*1080p)

If object B looks like B1 at 1080p, which looks like B2 if downsampled from resolution(n*1080p)

If object C looks like C1 at 1080p, which looks like C2 if downsampled from resolution(n*1080p)

Then a scene at 1080p which has objects A, B and C which, without DLSS, would look like A1B1C1, would look like A2B2C2 with DLSS.
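To make the "downsampled from resolution(n*1080p)" step concrete, here is a minimal Python sketch of plain supersampling: render at n times the target resolution, then average each n-by-n block into one output pixel. This is only the classic box-filter technique under simplifying assumptions (grayscale values, a tiny hand-written image, a hypothetical `downsample` helper). It is not how DLSS itself is implemented and says nothing about what the trained network does.

```python
def downsample(image, n):
    """Box-filter a grayscale image (list of rows) down by an integer factor n."""
    height, width = len(image), len(image[0])
    if height % n or width % n:
        raise ValueError("image dimensions must be multiples of n")
    out = []
    for y in range(0, height, n):
        row = []
        for x in range(0, width, n):
            # Collect the n*n high-res samples that map onto this output pixel.
            block = [image[y + dy][x + dx] for dy in range(n) for dx in range(n)]
            row.append(sum(block) / (n * n))
        out.append(row)
    return out


# A hard diagonal edge "rendered" at 4x4, then shown at 2x2.
hi_res = [
    [0, 0, 0, 255],
    [0, 0, 255, 255],
    [0, 255, 255, 255],
    [255, 255, 255, 255],
]
low_res = downsample(hi_res, 2)
print(low_res)
```

The two output pixels that straddle the edge come out as an intermediate grey rather than pure black or white, which is the smoothing that makes a supersampled "A2" look better than a natively rendered "A1".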

Gastec - Saturday, January 19, 2019 - link

Intel will release low end cards, from a gaming point of view, considering 1440p as the new standard. Nvidia will still have the high end and they will raise the prices of their high performance cards even further. I see nothing good in the near future for our pockets. The wealthy trolls will be even more aggressive.

ryrynz - Tuesday, January 8, 2019 - link

What? Pointless? You don't use this card for 4K...

CiccioB - Wednesday, January 9, 2019 - link

Why not?

If DLSS allows for the right performance, I can't see why 4K is excluded from the possible uses of this card.

Or do you think that 4K is only 8+GB of RAM, 3000+ shaders and 64+ROPS with 500+GB/s bandwidth for any particular reason?

They are all there for *incrementing performances*. If you can do that with another kind of work (like using DLSS), then what's the problem?

The fact that AMD has to create a card with the above resources to do (maybe) the same work as they have not invested a single dollar in AI up to now and are at least 4 years behind the competition on this particular feature?

DominionSeraph - Monday, January 7, 2019 - link

No, the 580 has a significantly worse price/performance ratio. All of you here are making the common mistake of calculating price/performance as though a card works without a PC to put it in, instead of improving the performance of said PC. If we calculated price/performance by the cost of the individual component then every discrete card would be infinitely worse than integrated graphics, which costs $0. We can intuitively understand that this is flat out false.

If you've just dumped $3000 into a high end machine and its peripherals, with a RX 580 the cost goes to $3200. With a RTX 2060 it's $3350. The RTX 2060 is 4.7% more expensive for 50% more speed.

This price/performance holds true until we hit a $100 PC. If you pulled a $100 Sandy Bridge rig off ebay, then now at $450 with a RTX 2060 it is 50% more expensive than at $300 with a RX 580.

As long as your rig is worth more than $100 the RTX 2060 stomps the RX 580 in price/performance.
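The arithmetic in this comment can be checked with a short Python sketch. The GPU prices ($200 and $350) are the ones implied by the $3200 and $3350 totals above, and the 50% speedup is the comment's own figure; the function names are hypothetical and exist only for this illustration.

```python
def relative_cost_increase(base_system, cheaper_gpu, pricier_gpu):
    """Fractional increase in total system cost from picking the pricier GPU."""
    return (base_system + pricier_gpu) / (base_system + cheaper_gpu) - 1


def break_even_base(cheaper_gpu, pricier_gpu, speedup):
    """Base system price at which the extra cost fraction equals the speedup.

    Solves (base + pricier) / (base + cheaper) = 1 + speedup for base.
    """
    return (pricier_gpu - (1 + speedup) * cheaper_gpu) / speedup


RX_580, RTX_2060 = 200, 350  # prices implied by the totals in the comment
SPEEDUP = 0.50               # the comment's "50% more speed" (an assumption here)

for base in (3000, 100):
    extra = relative_cost_increase(base, RX_580, RTX_2060)
    print(f"${base} base system: {extra:.1%} more cost for {SPEEDUP:.0%} more speed")

print(f"break-even base system price: ${break_even_base(RX_580, RTX_2060, SPEEDUP):.0f}")
```

With these inputs the extra cost is about 4.7% on a $3000 system and exactly 50% on a $100 system, so $100 is the base price at which the two cards tie on whole-system price/performance under the comment's assumptions.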

AshlayW - Monday, January 7, 2019 - link

You're ignoring the point that not everyone wants to spend $350 on a GPU. 1080p gaming is perfectly acceptable on cards costing less than $300 and at the moment AMD offers the best value here. Especially with RX 570. Turing is just overpriced.