The $60 CPU Question: AMD Athlon 200GE or Intel Pentium Gold G5400? A Review

by Ian Cutress on January 14, 2019 8:00 AM EST

CPU Performance: System Tests

Our System Test section focuses on real-world testing and user experience, with a slight nod to throughput. In this section we cover application loading time, image processing, simple scientific physics, emulation, neural simulation, optimized compute, and 3D model development, with a combination of readily available and custom software. The bigger suites such as PCMark do cover some of these tests (we publish those values in our office section), although multiple perspectives are always beneficial. In all our tests we will explain in depth what is being tested and how we are testing.

All of our benchmark results can also be found in our benchmark engine, Bench.

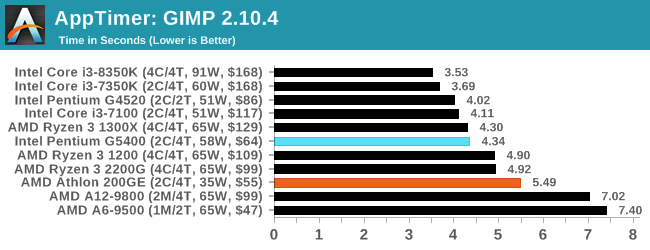

Application Load: GIMP 2.10.4

One of the most important aspects of user experience and workflow is how fast a system responds. A good test of this is to see how long it takes for an application to load. Most applications these days, when on an SSD, load almost instantly, although some office tools require asset pre-loading before being available. Most operating systems employ caching as well, so when certain software is loaded repeatedly (web browser, office tools), it can be initialized much quicker.

In our last suite, we tested how long it took to load a large PDF in Adobe Acrobat. Unfortunately this test was a nightmare to program for, and didn’t transfer over to Win10 RS3 easily. In the meantime we discovered an application that can automate this test, and we put it up against GIMP, a popular free open-source photo editing tool, and the major alternative to Adobe Photoshop. We set it to load a large 50MB design template, and perform the load 10 times with 10 seconds in between each. Because of caching, the first 3-5 results are often slower than the rest, and the time to cache can be inconsistent, so we take the average of the last five results to show CPU processing on cached loading.
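The averaging step can be sketched in a few lines of Python. This is only an illustration of the "average of the last five of ten runs" rule described above; the function name and the sample timings are hypothetical and are not measured results.

```python
from statistics import mean


def cached_load_time(load_times_s, keep_last=5):
    """Average the final `keep_last` runs, ignoring the earlier cache-warming runs."""
    if len(load_times_s) < keep_last:
        raise ValueError("need at least keep_last measurements")
    return mean(load_times_s[-keep_last:])


# Hypothetical timings (seconds) for ten consecutive loads; not real data.
example_runs = [9.0, 7.5, 6.0, 5.0, 5.0, 4.0, 4.0, 4.0, 4.0, 4.0]
print(cached_load_time(example_runs))
```

Discarding the first five runs trades a little data for a result that reflects CPU work rather than storage or cache warm-up.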

As a single threaded test, application loading is a key part of the user experience. Unfortunately the AMD 200GE is 21% slower in this case.

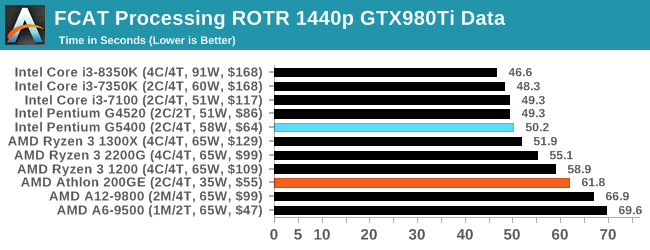

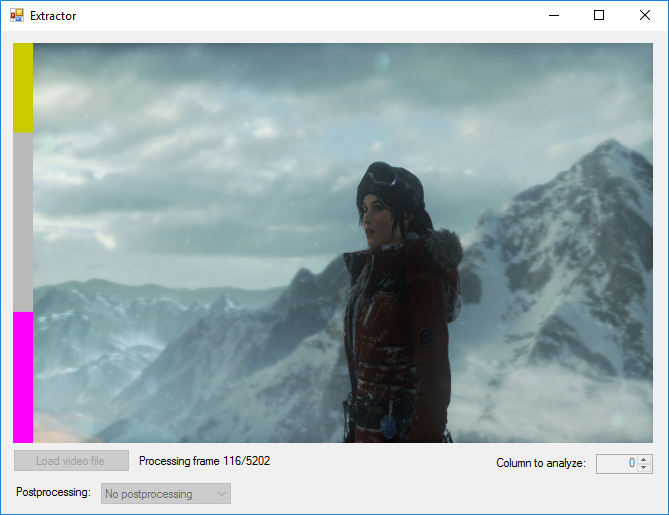

FCAT: Image Processing

The FCAT software was developed to help detect microstuttering, dropped frames, and runt frames in graphics benchmarks when two accelerators were paired together to render a scene. Due to game engines and graphics drivers, not all GPU combinations performed ideally, which led to this software assigning a color to each rendered frame and dynamically recording the raw output using a video capture device.

The FCAT software takes that recorded video, which in our case is 90 seconds of a 1440p run of Rise of the Tomb Raider, and processes that color data into frame time data so the system can plot an ‘observed’ frame rate, and correlate that to the power consumption of the accelerators. This test, by virtue of how quickly it was put together, is single threaded. We run the process and report the time to completion.
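As a rough illustration of turning color data into frame times, the sketch below assumes that each rendered frame's color occupies a known number of scanlines in the captured video. This is not FCAT's actual implementation: the function names, the 60 Hz capture rate, and the sample scanline counts are all assumptions made for the example.

```python
def frame_times_ms(scanline_runs, lines_per_capture=1440, capture_hz=60.0):
    """Convert the number of scanlines each colored frame occupied into milliseconds.

    Assumes the capture device records `lines_per_capture` scanlines per
    captured frame at `capture_hz` captured frames per second.
    """
    ms_per_line = 1000.0 / (capture_hz * lines_per_capture)
    return [count * ms_per_line for count in scanline_runs]


def observed_fps(times_ms):
    """Average observed frame rate over a list of frame times in milliseconds."""
    return 1000.0 * len(times_ms) / sum(times_ms)


# Hypothetical scanline counts for four rendered frames; not real capture data.
runs = [1440, 720, 720, 2880]
times = frame_times_ms(runs)
print(times)
print(observed_fps(times))
```

A frame that covers only a handful of scanlines contributes almost nothing to what the user sees, which is why counting scanlines per color is useful for spotting runt frames.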

Similarly, the single threaded nature of this test exposes the AMD processor, which is 18.6% slower than the Intel competition.

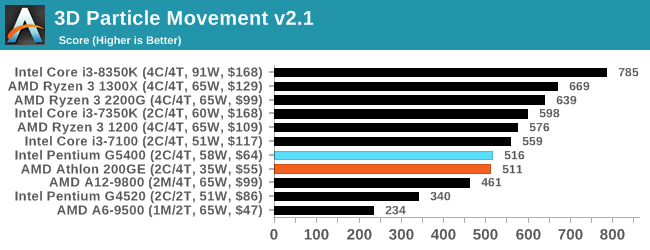

3D Particle Movement v2.1: Brownian Motion

Our 3DPM test is a custom built benchmark designed to simulate six different particle movement algorithms of points in a 3D space. The algorithms were developed as part of my PhD, and while they ultimately perform best on a GPU, they provide a good idea of how instruction streams are interpreted by different microarchitectures.

A key part of the algorithms is the random number generation – we use relatively fast generation which ends up implementing dependency chains in the code. The upgrade over the naïve first version of this code solved for false sharing in the caches, a major bottleneck. We are also looking at AVX2 and AVX512 versions of this benchmark for future reviews.

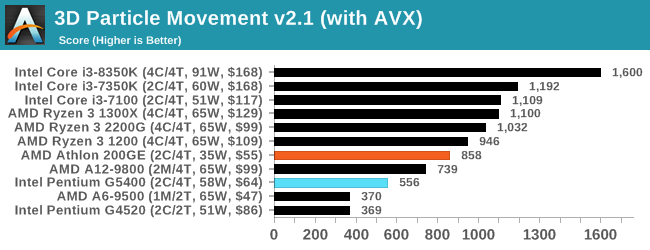

For this test, we run a stock particle set over the six algorithms for 20 seconds apiece, with 10 second pauses, and report the total rate of particle movement, in millions of operations (movements) per second. We have a non-AVX version and an AVX version, with the latter implementing AVX512 and AVX2 where possible.
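To make the reported metric concrete, here is a minimal random-walk sketch that moves particles in 3D and reports millions of movements per second. It is not one of the six 3DPM algorithms, and it runs a fixed number of steps rather than a fixed 20 seconds; the function names, particle counts, and seed are assumptions for illustration only.

```python
import math
import random
import time


def random_unit_step(rng):
    """Return a uniformly distributed direction on the unit sphere."""
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(1.0 - z * z)
    return r * math.cos(phi), r * math.sin(phi), z


def run_particles(num_particles, steps, seed=0):
    """Move every particle one unit step per iteration and time the whole run.

    Returns (total movements, millions of movements per second).
    """
    rng = random.Random(seed)
    positions = [[0.0, 0.0, 0.0] for _ in range(num_particles)]
    start = time.perf_counter()
    for _ in range(steps):
        for p in positions:
            dx, dy, dz = random_unit_step(rng)
            p[0] += dx
            p[1] += dy
            p[2] += dz
    elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero interval
    movements = num_particles * steps
    return movements, movements / elapsed / 1e6


movements, mops = run_particles(num_particles=1000, steps=20)
print(f"{movements} movements at {mops:.2f} million movements per second")
```

Each step depends on fresh random numbers, so the generator sits on the critical path, which mirrors the dependency-chain behavior mentioned above.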

3DPM v2.1 can be downloaded from our server: 3DPMv2.1.rar (13.0 MB)

For pure unoptimized throughput, both processors are similar in the 3DPM test.

But if we switch to the tuned AVX code, the AMD 200GE scores a big win. On the Pentium, the different code path had very little effect, with less than a 10% increase in performance, but the 200GE went up by a good 60% by comparison.

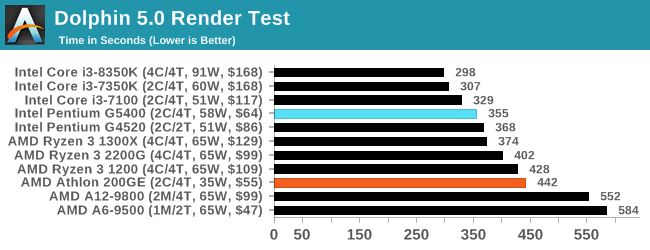

Dolphin 5.0: Console Emulation

One of the most frequently requested tests in our suite is console emulation. Being able to pick up a game from an older system and run it as expected depends on the overhead of the emulator: it takes a significantly more powerful x86 system to accurately emulate an older non-x86 console, especially if code for that console was made to abuse certain physical bugs in the hardware.

For our test, we use the popular Dolphin emulation software, and run a compute project through it to determine how close to a standard console system our processors can emulate. In this test, a Nintendo Wii would take around 1050 seconds.

The latest version of Dolphin can be downloaded from https://dolphin-emu.org/

Our emulation test has always been a strong performer for Intel CPUs.

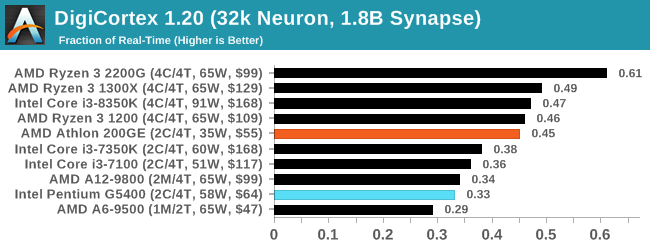

DigiCortex 1.20: Sea Slug Brain Simulation

This benchmark was originally designed for simulation and visualization of neuron and synapse activity, as is commonly found in the brain. The software comes with a variety of benchmark modes, and we take the small benchmark which runs a 32k neuron / 1.8B synapse simulation, equivalent to a Sea Slug.

We report the results as the ability to simulate the data as a fraction of real-time, so anything above a ‘one’ is suitable for real-time work. Out of the two modes, a ‘non-firing’ mode which is DRAM heavy and a ‘firing’ mode which has CPU work, we choose the latter. Despite this, the benchmark is still affected by DRAM speed a fair amount.
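The real-time fraction is simply simulated time divided by wall-clock time. The short sketch below shows the arithmetic; the function names and the sample numbers are hypothetical and do not come from DigiCortex itself.

```python
def real_time_factor(simulated_seconds, wall_clock_seconds):
    """Fraction of real time achieved: values above 1.0 run faster than real time."""
    if wall_clock_seconds <= 0:
        raise ValueError("wall_clock_seconds must be positive")
    return simulated_seconds / wall_clock_seconds


def is_real_time_capable(factor):
    """True when the simulation keeps ahead of real time."""
    return factor > 1.0


# Hypothetical run: 10 s of neural activity simulated in 8 s of wall-clock time.
factor = real_time_factor(10.0, 8.0)
print(factor, is_real_time_capable(factor))
```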

DigiCortex can be downloaded from http://www.digicortex.net/

On this more memory-limited test, the officially supported memory frequency of the 200GE comes into play, and it scores 37% higher than the Intel chip.

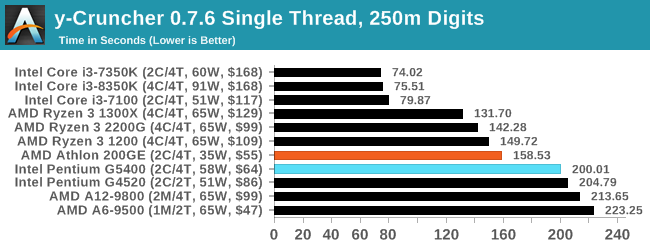

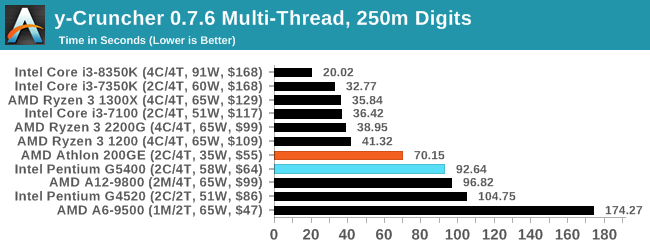

y-Cruncher v0.7.6: Microarchitecture Optimized Compute

I’ve known about y-cruncher for a while as a tool to help compute various mathematical constants. It wasn’t until I began talking with its developer, Alex Yee, a researcher from NWU and now software optimization developer, that I realized he has optimized the software like crazy to get the best performance. Naturally, any simulation that can take 20+ days can benefit from a 1% performance increase! Alex started y-cruncher as a high-school project, but it is now at a state where Alex keeps it up to date to take advantage of the latest instruction sets before they are even made available in hardware.

For our test we run y-cruncher v0.7.6 through all the different optimized variants of the binary, including the AVX-512 optimized binaries. The test is to calculate 250 million digits of Pi, and we run both the single threaded and multi-threaded versions.
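For readers curious what "calculating digits of Pi" looks like in code, here is a toy example using Machin's formula with Python's arbitrary-precision integers. It is emphatically not how y-cruncher works: y-cruncher's algorithms and instruction-level optimizations are far more sophisticated, and this sketch would be hopelessly slow at 250 million digits. The function names and the guard-digit count are choices made for this illustration.

```python
def arctan_inv(x, scale):
    """Return arctan(1/x) * scale using the Taylor series in integer arithmetic."""
    term = scale // x
    total = term
    x_squared = x * x
    n = 3
    sign = -1
    while term:
        term //= x_squared          # next odd power of 1/x, scaled
        total += sign * (term // n)
        sign = -sign
        n += 2
    return total


def pi_digits(digits, guard=10):
    """Return Pi as a digit string: '3' followed by `digits` decimal places.

    Uses Machin's formula: pi = 4 * (4*arctan(1/5) - arctan(1/239)).
    Extra guard digits absorb truncation error from the integer divisions.
    """
    scale = 10 ** (digits + guard)
    pi_scaled = 4 * (4 * arctan_inv(5, scale) - arctan_inv(239, scale))
    return str(pi_scaled // 10 ** guard)


print(pi_digits(20))
```

Even this simple version is dominated by big-integer multiplication and division, which hints at why memory bandwidth and wide vector instructions matter so much for the real tool.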

Users can download y-cruncher from Alex’s website: http://www.numberworld.org/y-cruncher/

For our second AVX optimized test, AMD again scores a win. It would appear that Intel’s Pentium chips are not implementing the performance uplifts we see with the Core models.

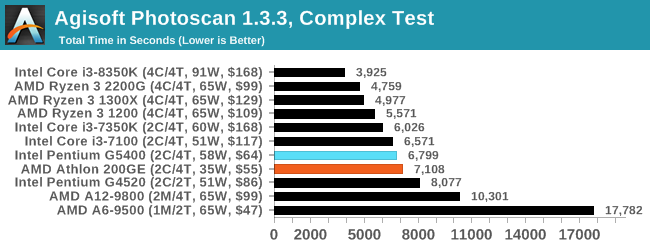

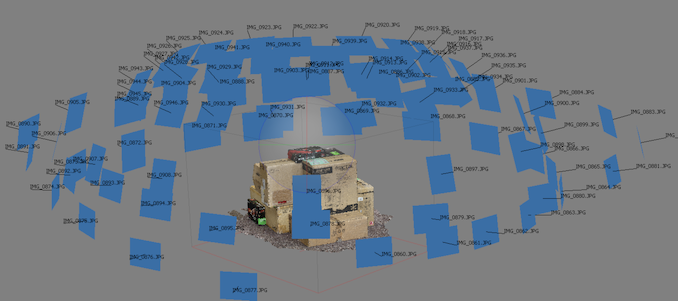

Agisoft Photoscan 1.3.3: 2D Image to 3D Model Conversion

One of the ISVs that we have worked with for a number of years is Agisoft, who develop software called PhotoScan that transforms a number of 2D images into a 3D model. This is an important tool in model development and archiving, and relies on a number of single threaded and multi-threaded algorithms to go from one side of the computation to the other.

In our test, we take v1.3.3 of the software with a good sized data set of 84 x 18 megapixel photos and push it through a reasonably fast variant of the algorithms, which is still more stringent than our 2017 test. We report the total time to complete the process.

Agisoft’s Photoscan website can be found here: http://www.agisoft.com/

Photoscan is more of a mixed workload, with multithreaded and singlethreaded steps, which gives a good sense of overall performance. The Intel processor slips in a win here, a few percent faster than the AMD.

95 Comments

kkilobyte - Monday, January 14, 2019

s/i3/Pentium. Obviously :)

freedom4556 - Monday, January 14, 2019

I think you messed up your charts for Civ 6's IGP testing. That or why are you testing the IGP at 1080p Ultra when all the other IGP tests are at 720p Low?

freedom4556 - Monday, January 14, 2019

Also, the 8k and 16k tests are pointless wastes of time. Especially in this review, but also in the others. Your low/med/high/ultra should be 720p/1080p/1440p/4k if you want to actually represent the displays people are purchasing.

nevcairiel - Monday, January 14, 2019

The Civ6 tests are like that because that's when it really starts to scale like the other games. Look at its IGP vs Low, which is 1080p vs 4K. The values are almost identical (and still pretty solid). Only if you move to 8K and then 16K you see the usual performance degradation you would see with other games.

AnnoyedGrunt - Tuesday, January 15, 2019

I second this motion. Please have settings to cover the various common monitor choices. 1080P is an obvious choice, but 1440P should be there too, along with 4K. I don't think you need to run two 4K versions, or two 1080P versions, or whatever. I have a 1440P monitor so it would be nice to see where I become GPU limited as opposed to CPU limited. Maybe Civ6 could use some extra high resolutions in the name of science, but to be useful, you should at least include the 1440P on all games.

Thanks.

-AG

eddieobscurant - Monday, January 14, 2019

Another pro intel article from Ian, who hopes that someday intel will hire him

PeachNCream - Monday, January 14, 2019

The numbers in the chart speak for themselves. You don't have to acknowledge the conclusion text. It's only a recommendation anyway. Even though I'd personally purchase a 200GE if I were in the market, I don't think there is any sort of individual bias coming into play. Where the 200GE is relevant, gaming on the IGP, Ian recommended it. In other cases the G5400 did come out ahead by enough of a margin to make it worth consideration. The only flaw I could tease out of this is the fact that the recommendation is based on MSRP and as others have noted, the G5400 is significantly above MSRP right now. It may have been good to acknowledge that in the intro and conclusion in a stronger manner, but that means the article may not stand up as well to the test of time for someone browsing this content six months later after searching for advice on the relevant CPUs via Google.

kkilobyte - Monday, January 14, 2019

Acknowledge "in a stronger manner"? Well, it is actually not acknowledged in the conclusion at all!

The title of the article is: "The $60 CPU question". One of those CPUs is clearly not being sold at $60 on average, but is priced significantly higher. I think the article should have compared CPUs that are really available at (around) $60.

So maybe there is no personal bias - but there is clearly ignorance of the market state. And that's surprising, since the G5400 price was above its MSRP for several months already; how could a professional journalist in the field ignore that?

I guess it could be objected that "MSRP always was used in the past as the reference price". Granted - but it made sense while the MSRP was close to the real market price. It doesn't anymore once the gap gets big, which is the case for the G5400. Nobody gives a damn about the theoretical price if it is applied nowhere on the market.

And the 'numbers of chart' don't 'speak for themselves' - they are basically comparing CPUs whose retail price, depending on where you get them, show a 20-40% price gap. What's the point? Why isn't there a price/performance graph, as there were in past reviews? The graphs could just as well include high-end CPUs, and would be just as useless.

If I want to invest ~$60 in a CPU, I'm not interested to know how a ~$90 one performs!

sonny73n - Tuesday, January 15, 2019

+1

I couldn’t have said it better myself.

cheshirster - Wednesday, January 23, 2019

Yes, 5400 is priced nowhere near 60$ and reviewer definitely knows it, but fails to mention this in conclusion.