The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST- Posted in

- GPUs

- Raytrace

- GeForce

- NVIDIA

- DirectX Raytracing

- Turing

- GeForce RTX

Meet The New Future of Gaming: Different Than The Old One

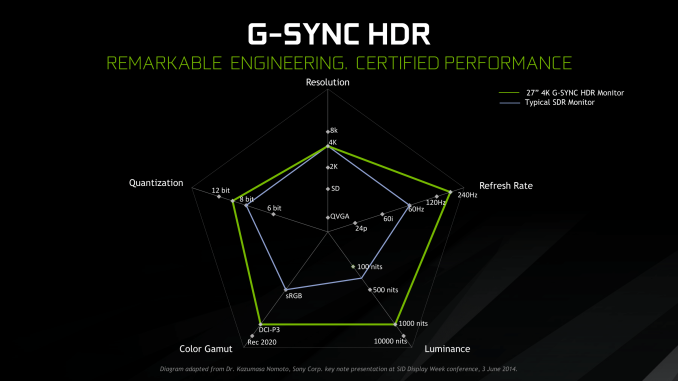

Up until last month, NVIDIA had been pushing a different, more conventional future for gaming and video cards, perhaps best exemplified by their recent launch of 27-inch 4K G-Sync HDR monitors, courtesy of Asus and Acer. The specifications represented, and still represent, the aspirational capabilities of PC gaming graphics: 4K resolution, a 144 Hz refresh rate with G-Sync variable refresh, and high-quality HDR. The future was maxing out graphics settings on a game with high visual fidelity, enabling HDR, and rendering at 4K with triple-digit average framerates on a large screen. That target was not achievable with current performance, and certainly not with single-GPU cards. In the past, multi-GPU configurations were a stronger option provided that stuttering was not an issue, but recent years have seen both AMD and NVIDIA take a step back from CrossFireX and SLI, respectively.

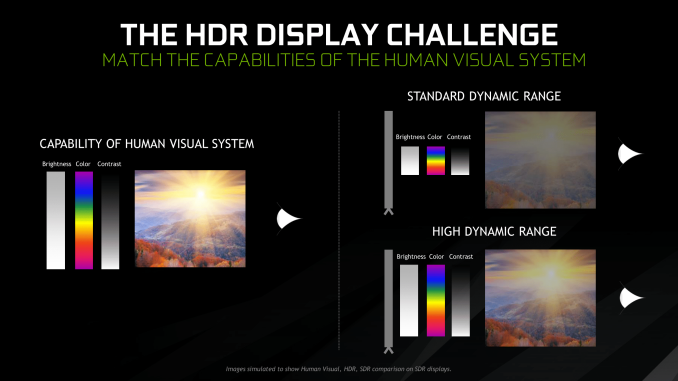

With HDR in particular, NVIDIA was pitching a qualitative rather than quantitative enhancement to the gaming experience. Faster framerates and higher resolutions were better-known quantities, easily demoed and with more intuitive benefits: higher resolution means more room for detail, and higher, more even framerates mean smoother gameplay and video. Even so, there was once the perception of 30fps as cinematic, and 1080p remains stubbornly popular today. Variable refresh rate technology soon followed, resolving the screen-tearing/V-Sync input lag dilemma, though again it took time to reach where it is now: nigh mandatory for a higher-end gaming monitor.

For gaming displays, HDR was substantively different from adding graphical details or allowing smoother gameplay and playback, because it brought a new dimension of ‘more possible colors’ and ‘brighter whites and darker blacks’ to gaming. Because HDR capability required support from the entire graphical chain, as well as a high-quality HDR monitor and HDR content to take full advantage, it was harder to showcase. Added to the other aspects of high-end gaming graphics, and pending the further development of VR, this was the future on the horizon for GPUs.

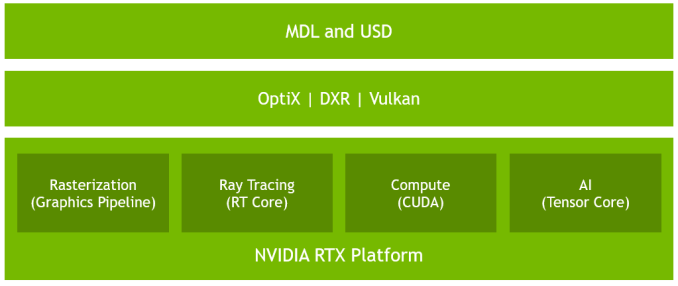

But today NVIDIA is switching gears, going after the fundamental way computer graphics are modelled in games. In the more realistic rendering processes, light is emulated as rays emitted from their respective sources, but computing even a subset of those rays and their interactions (reflection, refraction, etc.) in a bounded space is so intensive that real time rendering was impossible. So to get the performance needed to render in real time, rasterization essentially boils 3D objects down to 2D representations to simplify the computations, significantly faking the behavior of light.
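To make the contrast concrete, here is a minimal sketch in Python of the two ideas described above: intersecting a single ray with a sphere (the basic building block of ray tracing) and projecting a 3D point to 2D (the core simplification behind rasterization). The function names are hypothetical and chosen only for illustration. This is not NVIDIA's implementation or any real API, and it assumes the ray direction is a unit vector.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Return the distance along a ray to the nearest sphere hit, or None.

    Assumes `direction` is unit length (an assumption of this sketch).
    """
    # Solve |origin + t * direction - center|^2 = radius^2 for t.
    oc = [o - c for o, c in zip(origin, center)]
    b = 2.0 * sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0.0:
        return None  # the ray misses the sphere entirely
    sqrt_disc = math.sqrt(disc)
    # Try the nearer root first; only hits in front of the origin count.
    for t in ((-b - sqrt_disc) / 2.0, (-b + sqrt_disc) / 2.0):
        if t > 0.0:
            return t
    return None


def project_to_screen(point, focal_length=1.0):
    """Rasterization-style perspective projection of a 3D point to 2D."""
    x, y, z = point
    return (focal_length * x / z, focal_length * y / z)


def rays_per_second(width, height, fps, rays_per_pixel):
    """Count of primary rays needed per second, ignoring all bounces."""
    return width * height * fps * rays_per_pixel
```

As a rough sense of scale, `rays_per_second(3840, 2160, 60, 1)` comes to 497,664,000: about half a billion intersection tests per second at 4K and 60fps for a single ray per pixel, before any reflection or refraction rays are spawned and before testing against more than one object. The projection, by comparison, is two divisions per vertex.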

It’s on real time ray tracing that NVIDIA is staking its claim with GeForce RTX and Turing’s RT Cores. Covered in more depth in our architecture article, NVIDIA’s real time ray tracing implementation takes all the shortcuts it can get, incorporating select real time ray tracing effects with significant denoising but keeping rasterization for everything else. Unfortunately, this hybrid rendering isn’t orthogonal to the previous goals: it draws on the same GPU performance budget. Now, the ultimate experience would be hybrid rendered 4K with HDR support at high, steady, and variable framerates, even though GPUs didn’t have enough performance to get to that point under traditional rasterization alone.

There’s still a performance cost incurred with real time ray tracing effects, but right now only NVIDIA and developers have a clear idea of what it is. What we can say is that utilizing real time ray tracing effects in games may require sacrificing some or all of high resolution, ultra high framerates, and HDR. HDR is limited by game support more than anything else. But the first two arguably have minimum performance standards when it comes to modern high-end gaming on PC: anything under 1080p is completely unpalatable, and anything under 30fps, or more realistically 45 to 60fps, hurts playability. Variable refresh rate can mitigate the latter and framedrops are temporary, but low resolution is forever.

Ultimately, the real time ray tracing support needs to be implemented by developers via a supporting API like DXR – and many have been working hard on doing so – but currently there is no public timeline of application support for real time ray tracing, Tensor Core accelerated AI features, and Turing advanced shading. The list of games with support for Turing features - collectively called the RTX platform - will be available and updated on NVIDIA's site.

337 Comments

V900 - Thursday, September 20, 2018 - link

That’s plain false.

Tomb Raider is a title out now with RTX enabled in the game.

Battlefield 5 is out in a month or two (though you can play it right now) and will also utilize RTX.

Sorry to destroy your narrative with the fact that one of the biggest titles this year is supporting RTX.

And that’s of course just one out of a handful of titles that will do so, just in the next few months.

Developer support seems to be the last thing that RTX2080 owners need to worry about, considering that there are dozens of titles, many of them big AAA games, scheduled for release just in the first half of 2019.

Skiddywinks - Friday, September 21, 2018 - link

Unless I'm mistaken, TR does not support RTX yet. Obviously, otherwise it would be showing up in reviews everywhere. There is a reason every single reviewer is only benchmarking traditional games; that's all there is right now.

Writer's Block - Monday, October 1, 2018 - link

Exactly.

Is supporting or enabled.

However - neither actually has it now to see, to experience.

eva02langley - Thursday, September 20, 2018 - link

These cards are nothing more than a cheap magic trick show. Nvidia knew about the performance being lackluster, and based their marketing on a gimmick to square the competition by affirming that these will be the future of gaming and you will be missing out without it.

Literally, they basically tried to create a need... and if you are defending Nvidia over this, you are just drinking the Kool-Aid at this point.

Quote me on this, this will be the next GameWorks feature that devs will not bother touching. Why? Because devs are developing games on consoles and porting them to PC. The extra time in development doesn't bring back any additional profit.

Skiddywinks - Friday, September 21, 2018 - link

Here's the thing though, I don't think the performance is that lacklustre, the issue is we have this huge die and half of it does not do what most people want: give us more frames. If they had made the same size die with nothing but traditional CUDA cores, the 2080 Ti would be an absolute beast. And I'd imagine it would be a lot cheaper as well.

But nVidia (maybe not mistakenly) have decided to push the raytracing path, and those of us who just want maximum performance for the price (me) and were waiting for the next 1080 Ti are basically left thinking "... oh well, skip".

eva02langley - Friday, September 21, 2018 - link

Don't get me wrong, these cards are a normal upgrade performance jump, however it is not the second coming that Nvidia is marketing.

The problem here is Nvidia wants to corner AMD, and the tactic they chose is RTX. However, RTX is nothing more than a FEATURE. The gamble could cost them a lot.

If AMD's gaming and 7nm strategy pays off, devs will develop on AMD hardware and port to PC architecture, leaving them no incentive to put in the extra work for a FEATURE.

The extra cost of the bigger die should have gone toward gaming performance, but Nvidia's strategy is to disrupt the competition and further their standing as a monopoly as much as they can.

PhysX didn't work, HairWorks didn't work and this will not work. As cool as it is, this should have been a feature for pro cards only, not consumers.

mapesdhs - Thursday, September 27, 2018 - link

That's the thing though, they aren't a "normal" upgrade performance jump, because the prices make no sense.

AnnoyedGrunt - Thursday, September 20, 2018 - link

This reminds me quite a bit of the original GeForce 256 launch. Not sure how many of you were following Anandtech back then, but it was my go-to site then just as it is now. Here are links to some of the original reviews:

GeForce256 SDR: https://www.anandtech.com/show/391

GeForce256 DDR: https://www.anandtech.com/show/429

Similar to the 20XX series, the GeForce256 was Nvidia's attempt to change the graphics card paradigm, adding hardware transformation and lighting to the graphics card (and relieving the CPU of those tasks). The card was faster than the contemporary cards, but also much more expensive, making the value questionable for many.

At the time I was a young mechanical engineer, and I remember feeling that Nvidia was brilliant for creating this card. It let me run Pro/E R18 on my $1000 home computer, about as fast as I could on my $20,000 HP workstation. That card basically destroyed the market of workstation-centric companies like SGI and Sun, as people could now run CAD packages on a Windows PC.

The 20XX series gives me a similar feeling, but with less obvious benefit to the user. The cards are as fast as or faster than the previous generation, but are also much more expensive. The usefulness is likely there for developers and some professionals like industrial designers who would love to have an almost-real-time, high quality, rendered image. For gamers, the value seems to be a stretch.

While I was extremely excited about the launch of the original GeForce256, I am a bit "meh" about the 20XX series. I am looking to build a new computer and replace my GTX 680/i5-3570K, but this release has not changed the value equation at all.

If I look at Wolfenstein, then a strong argument could be made for the 2080 being more future proof, but pretty much all other games are a wash. The high price of the 20XX series means that the 1080 prices aren't dropping, and I doubt the 2070 will change things much since it looks like it would be competing with the vanilla 1080, but costing $100 more.

Looks like I will wait a bit more to see how that price/performance ends up, but I don't see the ray-tracing capabilities bringing immediate value to the general public, so paying extra for it doesn't seem to make a lot of sense. Maybe driver updates will improve performance in today's games, making the 20XX series look better than it does now, but I think like many, I was hoping for a bit more than an actual reduction in the performance/price ratio.

-AG

eddman - Thursday, September 20, 2018 - link

How much was a 256 at launch? I couldn't find any concrete pricing info but let's go with $500 to be safe. That's just $750 in today's dollars for something that is arguably the most revolutionary nvidia video card.

Ananke - Thursday, September 20, 2018 - link

Yep, and it was also not selling well among "gamers": it was a novelty that became popular after falling under $100 a pop years later. Same here, financial analysts say the expected revenue from gaming products will drop in the near future, and Wall Street already dropped NVidia. Product is good, but expensive, it is not going to sell in volume, their revenue will drop in the imminent quarters.

Apple's XS phone was the same, but Apple started a buy-one-get-one campaign on the very next day, plus upfront discount and solid buyback of iPhones. Yet, it is not clear whether they will achieve volume and revenue growth within the priced-in expectations.

These are public companies - they make money from Wall Street, and they /NVidia/ can lose much more and much faster on the capital markets, versus what they would gain in profitability from lesser volume high end boutique products. This was relatively sh**y launch - NVidia actually didn't want to launch anything, they want to sell their glut of GTX inventory first, but they have silicon ordered and made already at TSMC, and couldn't just sit on it waiting...