The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST

Posted in: GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

While it was roughly 2 years from Maxwell 2 to Pascal, the journey to Turing has felt much longer despite a similar 2-year gap. There’s some truth to the feeling: looking at the past couple of years, there’s been basically every other possible development in the GPU space except next-generation gaming video cards, like Intel’s planned return to discrete graphics, NVIDIA’s Volta, and cryptomining-specific cards. Finally, at Gamescom 2018, NVIDIA announced the GeForce RTX 20 series, built on TSMC’s 12nm “FFN” process and powered by the Turing GPU architecture. Launching today with full general availability is just the GeForce RTX 2080, as the GeForce RTX 2080 Ti was delayed a week to the 27th, while the GeForce RTX 2070 is due in October. So up for review today are the GeForce RTX 2080 Ti and GeForce RTX 2080.

But a standard new generation of gaming GPUs this is not. The “GeForce RTX” brand, ousting the long-lived “GeForce GTX” moniker in favor of their announced “RTX technology” for real time ray tracing, aptly underlines NVIDIA’s new vision for the video card future. Like we saw last Friday, Turing and the GeForce RTX 20 series are designed around a set of specialized low-level hardware features and an intertwined ecosystem of supporting software currently in development. The central goal is a long-held dream of computer graphics researchers and engineers alike – real time ray tracing – and NVIDIA is aiming to bring that to gamers with their new cards, and willing to break some traditions on the way.

NVIDIA GeForce Specification Comparison

| | RTX 2080 Ti | RTX 2080 | RTX 2070 | GTX 1080 |
|---|---|---|---|---|
| CUDA Cores | 4352 | 2944 | 2304 | 2560 |
| Core Clock | 1350MHz | 1515MHz | 1410MHz | 1607MHz |
| Boost Clock | 1545MHz (FE: 1635MHz) | 1710MHz (FE: 1800MHz) | 1620MHz (FE: 1710MHz) | 1733MHz |
| Memory Clock | 14Gbps GDDR6 | 14Gbps GDDR6 | 14Gbps GDDR6 | 10Gbps GDDR5X |
| Memory Bus Width | 352-bit | 256-bit | 256-bit | 256-bit |
| VRAM | 11GB | 8GB | 8GB | 8GB |
| Single Precision Perf. | 13.4 TFLOPs | 10.1 TFLOPs | 7.5 TFLOPs | 8.9 TFLOPs |
| Tensor Perf. (INT4) | 430TOPs | 322TOPs | 238TOPs | N/A |
| Ray Perf. | 10 GRays/s | 8 GRays/s | 6 GRays/s | N/A |
| "RTX-OPS" | 78T | 60T | 45T | N/A |
| TDP | 250W (FE: 260W) | 215W (FE: 225W) | 175W (FE: 185W) | 180W |
| GPU | TU102 | TU104 | TU106 | GP104 |
| Transistor Count | 18.6B | 13.6B | 10.8B | 7.2B |
| Architecture | Turing | Turing | Turing | Pascal |
| Manufacturing Process | TSMC 12nm "FFN" | TSMC 12nm "FFN" | TSMC 12nm "FFN" | TSMC 16nm |
| Launch Date | 09/27/2018 | 09/20/2018 | 10/2018 | 05/27/2016 |
| Launch Price | MSRP: $999, Founders: $1199 | MSRP: $699, Founders: $799 | MSRP: $499, Founders: $599 | MSRP: $599, Founders: $699 |

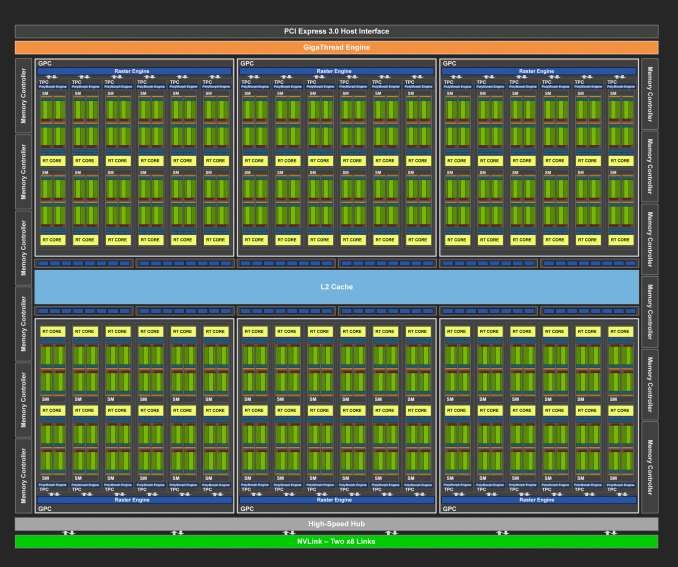

As we discussed at the announcement, one of the major breaks is that NVIDIA is introducing GeForce RTX as a full upper-tier x80 Ti/x80/x70 stack at launch, where it has previously tended towards releasing the x80/x70 products first and the x80 Ti as a mid-cycle refresh or competitive response. More intriguingly, each GeForce card has its own distinct GPU (TU102, TU104, and TU106), with direct Quadro and now Tesla variants of TU102 and TU104. While we covered the Turing architecture in the preceding article, the takeaway is that each chip is proportionally cut down, including the specialized RT Cores and Tensor Cores. With clockspeeds roughly the same as Pascal, architectural changes and efficiency enhancements will be largely responsible for performance gains, along with the greater bandwidth of 14Gbps GDDR6.
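To put numbers on the clockspeed and bandwidth points, the table's single precision and memory figures can be approximated from the other rows. The following is a rough sketch rather than NVIDIA's own methodology: it assumes each CUDA core retires one fused multiply-add (2 FLOPs) per clock at the reference boost clock, and that peak bandwidth is simply bus width times per-pin data rate. The function and dictionary names are illustrative only.

```python
# Figures copied from the specification table (reference boost clocks, not FE).
CARDS = {
    "RTX 2080 Ti": {"cuda_cores": 4352, "boost_mhz": 1545, "bus_bits": 352, "mem_gbps": 14},
    "RTX 2080": {"cuda_cores": 2944, "boost_mhz": 1710, "bus_bits": 256, "mem_gbps": 14},
    "RTX 2070": {"cuda_cores": 2304, "boost_mhz": 1620, "bus_bits": 256, "mem_gbps": 14},
    "GTX 1080": {"cuda_cores": 2560, "boost_mhz": 1733, "bus_bits": 256, "mem_gbps": 10},
}


def fp32_tflops(cuda_cores, boost_mhz):
    """Peak FP32 rate in TFLOPs, assuming 2 FLOPs (one FMA) per core per clock."""
    return 2 * cuda_cores * boost_mhz / 1e6


def bandwidth_gb_per_s(bus_bits, mem_gbps):
    """Peak memory bandwidth in GB/s: bus width in bytes times per-pin data rate."""
    return bus_bits / 8 * mem_gbps


for name, spec in CARDS.items():
    tflops = fp32_tflops(spec["cuda_cores"], spec["boost_mhz"])
    bandwidth = bandwidth_gb_per_s(spec["bus_bits"], spec["mem_gbps"])
    print(f"{name}: {tflops:.1f} TFLOPs FP32, {bandwidth:.0f} GB/s")
```

Under these assumptions the computed FP32 figures round to the values listed in the table, and the RTX 2080 works out to 448 GB/s against the GTX 1080's 320 GB/s, a 40% increase on the same 256-bit bus.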

And as far as we know, Turing technically did not trickle down from a bigger compute chip a la GP100, though at the architectural level it is strikingly similar to Volta/GV100. Die size brings more color to the story, because with TU106 at 445mm², the smallest of the bunch is frankly humongous for a FinFET die nominally dedicated to an x70 GeForce product, and comparable in size to the 471mm² GP102 inside the GTX 1080 Ti and Pascal Titans. Even excluding the cost and size of enabled RT Cores and Tensor Cores, a slab of FinFET silicon that large is unlikely to be packaged and priced like the popular $330 GTX 970 and still provide the margins NVIDIA is pursuing.

These observations are not meant to be pedantic, but rather to sketch out GeForce Turing’s positioning in relation to Pascal. Having separate GPUs for each model is the most expensive approach in terms of research and development, testing, validation, extra needed fab tooling/capacity – the list goes on. And it raises interesting questions on the matter of binning, yields, and salvage parts. Though NVIDIA certainly has the spare funds to go this route, there’s surely a better explanation than Turing being primarily designed for a premium-priced consumer product that cannot command the margins of professional parts. These all point to the known Turing GPUs being oriented toward lower-volume products, and NVIDIA’s quarterly financial reports indicate that GeForce product volume is a significant factor, not just average selling price (ASP).

And on that note, the ‘reference’ Founders Edition models are no longer reference; the GeForce RTX 2080 Ti, 2080, and 2070 Founders Editions feature 90MHz factory overclocks and 10W higher TDP, and NVIDIA does not plan to productize a reference card themselves. But arguably the biggest change is the move from blower-style coolers with a radial fan to an open air cooler with dual axial fans. The switch in design improves cooling capacity and lowers noise, but with the drawback that the card can no longer guarantee that it can cool itself. Because the open air design re-circulates the hot air back into the chassis, it is ultimately up to the chassis to properly exhaust the heat. In contrast, a blower pushes all the hot air through the back of the card and directly out of the case, regardless of the chassis airflow or case fans.

All in all, NVIDIA is keeping the Founders Edition premium, which is now $100 to $200 over the baseline ‘reference’ MSRP depending on the model. Though AIB partner cards are also launching today, in practice the Founders Edition pricing is effectively the retail price until the launch rush has subsided.
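As a quick worked check of the Founders Edition premium, the sketch below takes the MSRP and Founders Edition prices from the specification table and computes the premium in dollars and as a percentage of MSRP. The helper name `founders_premium` is made up for this illustration.

```python
# (MSRP, Founders Edition price) in USD, copied from the specification table.
PRICES = {
    "RTX 2080 Ti": (999, 1199),
    "RTX 2080": (699, 799),
    "RTX 2070": (499, 599),
    "GTX 1080": (599, 699),
}


def founders_premium(msrp, founders):
    """Return the Founders Edition premium as (dollars, percent of MSRP)."""
    delta = founders - msrp
    return delta, 100 * delta / msrp


for name, (msrp, founders) in PRICES.items():
    dollars, percent = founders_premium(msrp, founders)
    print(f"{name}: +${dollars} ({percent:.1f}% over MSRP)")
```

By this arithmetic only the RTX 2080 Ti carries a $200 premium; the RTX 2080 and RTX 2070 sit $100 above MSRP, the same dollar premium the GTX 1080 Founders Edition carried.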

The GeForce RTX 20 Series Competition: The GeForce GTX 10 Series

In the end, the preceding GeForce GTX 10 series ended up occupying an odd spot in the competitive landscape. After its arrival in mid-2016, only the lower end of the stack had direct competition, due to AMD’s solely mainstream/entry Polaris-based Radeon RX 400 series. AMD’s RX 500 series refresh in April 2017 didn’t fundamentally change that, and it was not until August 2017 that the higher-end Pascal parts had direct competition from their generational equal in RX Vega. But by that time, the GTX 1080 Ti (not to mention the Pascal Titans) was unchallenged. And all the while, an Ethereum-led resurgence of mining cryptocurrency on video cards was wreaking havoc on GPU pricing and inventory, first on Polaris products, then general mainstream parts, and finally affecting any and all GPUs.

Not that NVIDIA rested on their laurels with Vega, releasing the GTX 1070 Ti anyhow. But what was constant was how the pricing models evolved with the Founders Editions schema, the $1200 Titan X (Pascal), and then the $700 GTX 1080 Ti and $1200 Titan Xp. Even the $3000 Titan V maintained gaming cred despite diverging greatly from previous Titan cards as firmly on the professional side of prosumer, basically allowing the product to capture both prosumers and price-no-object enthusiasts. Ultimately, these instances coincided with the rampant cryptomining price inflation and were mostly subsumed by it.

So the higher end of gaming video cards has been Pascal competing with itself and moving up the price brackets. For Turing, the GTX 1080 Ti has become the closest competitor. RX Vega performance hasn’t fundamentally changed, and the fallout appears to have snuffed out any Vega 10 parts, as well as Vega 14nm+ (i.e. 12nm) refreshes. As a competitive response, AMD doesn’t have many cards up their sleeves except the ones already played – game bundles (such as the current “Raise the Game” promotion), FreeSync/FreeSync 2, and other hardware (CPU, APU, motherboard) bundles. Other than that, there’s a DXR driver in the works and a machine learning 7nm Vega on the horizon, but not much else is known about other products, such as mobile discrete Vega. For AMD graphics cards on shelves right now, RX Vega is still hampered by high prices and low inventory/selection, remnants of cryptomining.

For the GeForce RTX 2080 Ti and 2080, NVIDIA would like to sell you the RTX cards as your next upgrade regardless of what card you may have now, essentially because no other card can do what Turing’s features enable: real time ray tracing effects (and applied deep learning) in games. And because real time ray tracing offers graphical realism beyond what rasterization can muster, it’s not comparable to an older but still performant card. Unfortunately, no games have support for Turing’s features today, and they may not for some time. Of course, NVIDIA maintains that the cards will provide expected top-tier performance in traditional gaming. Either way, while Founders Editions are fixed at their premium MSRP, custom cards are unsurprisingly listed at those same Founders Edition price points or higher.

Fall 2018 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| | $1199 | GeForce RTX 2080 Ti |
| | $799 | GeForce RTX 2080 |
| | $709 | GeForce GTX 1080 Ti |
| Radeon RX Vega 64 | $569 | |
| Radeon RX Vega 56 | $489 | GeForce GTX 1080 |
| | $449 | GeForce GTX 1070 Ti |
| | $399 | GeForce GTX 1070 |
| Radeon RX 580 (8GB) | $269/$279 | GeForce GTX 1060 6GB (1280 cores) |

337 Comments


nevcairiel - Thursday, September 20, 2018

Technically AMD would have to hit a 70-80% advancement at least once over their own cards at least if they ever want to offer high-end cards again.

Arbie - Thursday, September 20, 2018

Why gratuitously characterize all those complaining of price as "AMD fanboys"? Obviously, most are those who intended to buy the new NVidia boards but are dismayed to find them so costly! Hardly AMD disciples.

Your repeated, needless digs mark you as the fanboy. And do nothing to promote your otherwise reasonable statements.

eddman - Thursday, September 20, 2018

I'm such an AMD fanboy that I have a 1060 and have never owned an AMD product. You, on the other hand, reek of pro nvidia bias.

A 40% higher launch MSRP is not "how it always works out".

No one is complaining about the performance itself but the horrible price/performance increase, or should I say decrease, compared to pascal.

Moore's law? 980 Ti was made on the same 28nm process as 780 Ti and yet offered considerably higher performance and still launched at a LOWER MSRP, $650 vs. $700.

Inteli - Saturday, September 22, 2018

Got enough straw for that strawman you're building? The last 3 GPUs I've bought have been Nvidia (970, 1060, 1070 Ti). I would have considered a Vega 56, but the price wasn't low enough that I was willing to buy one.

News flash: in current games, the 2080 is tied with the 1080 Ti for the same MSRP (and the 1080 Ti will be cheaper with the new generation launches). Sure, if you compare the 1080 to the 2080, the 2080 is significantly faster, but those only occupy the same position in Nvidia's product stack, not in the market. No consumer is going to compare a $500 card to a $700-800 card.

The issues people take with the Turing launch have absolutely nothing to do with either "We only got a 40% perf increase" or "Nvidia raised the prices" in isolation. Every single complaint I've seen is "Turing doesn't perform any better in current games than an equivalently priced Pascal card".

Lots of consumers are pragmatists, and will buy the best performing card they can afford. These are the people complaining about (or more accurately disappointed by) Turing's launch: Turing doesn't do anything for them. Sure, Nvidia increased the performance of the -80 and -80 Ti cards compared to last generation, but they increased the price as well, so price/performance is either the same or worse compared to Pascal. Many people were holding off on buying new cards until this launch, and in return for their patience they got...the same performance as before.

mapesdhs - Wednesday, September 26, 2018

Where I live (UK), the 2080 is 100 UKP more expensive than a new 1080 Ti from normal retail sources, while a used 1080 Ti is even less. The 2080 is not worth it, especially with less RAM. It wouldn't have been quite so bad if the 2080 was the same speed for less cost, but being more expensive just makes it look silly. Likewise, if the 2080 Ti had had 16GB that would at least have been something to distinguish it from the 1080 Ti, but as it stands, the extra performance is meh, especially for the enormous cost (literally 100% more where I am).

escksu - Thursday, September 20, 2018

Lol.....most important??

If you think that ray tracing on 2080ti is damn cool and game changer.....You should see what AMD has done. Just that no one really cared about it back then.....

https://forums.geforce.com/default/topic/437987/th...

Even more incredible is that this was done 10yrs ago on 4870x2....REAL TIME......Yes, I repeat, REAL TIME.......

nevcairiel - Thursday, September 20, 2018

It was just another "rasterization cheat" though, may have looked nice but ultimately didn't have the longevity that Ray Tracing may have. No 3D developer or 3D artist is going to ever argue that Ray Tracing is not the future, the question is just how to get there.

V900 - Thursday, September 20, 2018

Meh... The image itself is captured by cameras, it’s the manipulation of it that’s done in real time.

Which is of course a neat little trick; and while it looks good, it’s hardly as impressive and computationally demanding as creating a whole image through raytracing.

escksu - Thursday, September 20, 2018

https://www.cinemablend.com/games/DirectX-11-Ray-T...

AMD did that again with 5870.... REAL TIME......but nobody cares........because the world only bothers about Nvidia.........

Chawitsch - Thursday, September 20, 2018

The demos you linked to are beautiful indeed, however both the ATi Ruby demo and Rigid Gems demo use standard DirectX features from those times, no ray tracing at all or any vendor specific features. Due to the latter it is worth pointing out that the 2008 Ruby demo (called Double Cross IIRC) was perfectly happy to run on nVidia cards of the time.

If these demos show anything, it is that there were and are extremely talented artists out there who can do amazing things to work around the limitations of rasterization. This way however we can always merely approximate how a scene should look, with increasingly high costs, so going back to proper ray tracing was only a question of when its costs will approach that of rasterization. We seem to have arrived at the balancing point, hence hybrid rendering. I also think if AMD could have pushed nVidia more with high end GPUs, nVidia may not have made this step at this time, at least it certainly could have been a more risky proposition otherwise.