The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

Unpacking 'RTX', 'NGX', and Game Support

One of the more complicated aspects of GeForce RTX and Turing is not only the 'RTX' branding, but also how all of Turing's features are collectively called the NVIDIA RTX platform. To recap, here is a quick list of the separate but similarly named groupings:

- NVIDIA RTX Platform - general platform encompassing all Turing features, including advanced shaders

- NVIDIA RTX Raytracing technology - name for ray tracing technology under RTX platform

- GameWorks Raytracing - raytracing denoiser module for GameWorks SDK

- GeForce RTX - the brand connected with games using NVIDIA RTX real time ray tracing

- GeForce RTX - the brand for graphics cards

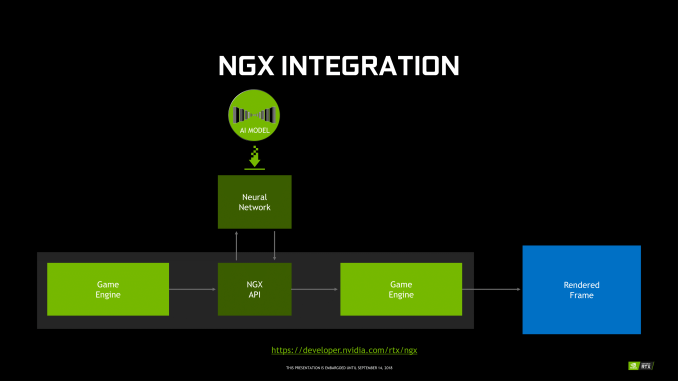

NGX technically falls under the RTX platform and includes Deep Learning Super Sampling (DLSS). Using a deep neural network (DNN) specific to the game and trained on very high quality 64x supersampled images, or 'ground truth' images, DLSS uses tensor cores to infer high quality antialiased results. In the standard mode, DLSS renders at a lower input sample count, typically 2x lower though this may depend on the game, and then infers a result that, at the target resolution, is similar in quality to a TAA result. A DLSS 2X mode also exists, in which the input is rendered at the final target resolution and then combined with a larger DLSS network.
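To put the sample counts above in perspective, here is a minimal arithmetic sketch. It assumes a hypothetical 4K target resolution and takes "typically 2x lower" to mean half as many input samples per frame; the function name and the 0.5 factor are illustrative assumptions, not NVIDIA's actual DLSS parameters, which may vary by game.

```python
# Illustrative arithmetic only: the resolution and the 0.5 factor are assumptions
# based on the article's "typically 2x lower" wording, not NVIDIA-published values.

def samples_per_frame(width: int, height: int, samples_per_pixel: float = 1.0) -> int:
    """Return the number of rendered samples for one frame."""
    return int(width * height * samples_per_pixel)

TARGET = (3840, 2160)  # hypothetical 4K target resolution

dlss_2x_input = samples_per_frame(*TARGET)         # DLSS 2X: input at full target resolution
dlss_standard_input = samples_per_frame(*TARGET, 0.5)  # standard DLSS: about half the samples
ground_truth = samples_per_frame(*TARGET, 64)      # 64x supersampled training reference

print(f"DLSS 2X input samples:       {dlss_2x_input:,}")
print(f"Standard DLSS input samples: {dlss_standard_input:,}")
print(f"64x ground truth samples:    {ground_truth:,}")
```

Under these assumptions, the ground truth image carries 128 times as many samples as the standard DLSS input, which gives a rough sense of how much detail the network is asked to infer.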

Fortunately, GeForce Experience (GFE) is not required for NGX features to work, and all the necessary NGX files will be available via the standard Game Ready drivers, though it's not clear how often DNNs for particular games would be updated.

As for RTX-OPS, it describes a workload for a frame in which both RT and Tensor Cores are utilized; currently, the classic scenario would be a game with real time ray tracing and DLSS. So by definition, it only accurately measures that type of workload. However, as DXR has not yet been released, the metric does not yet describe performance in any publicly available game.

In sum, the upcoming game support aligns with the following table.

| Planned NVIDIA Turing Feature Support for Games | |||||

| Game | Real Time Raytracing | Deep Learning Supersampling (DLSS) | Turing Advanced Shading | ||

| Ark: Survival Evolved | Yes | ||||

| Assetto Corsa Competizione | Yes | ||||

| Atomic Heart | Yes | Yes | |||

| Battlefield V | Yes | ||||

| Control | Yes | ||||

| Dauntless | Yes | ||||

| Darksiders III | Yes | ||||

| Deliver Us The Moon: Fortuna | Yes | ||||

| Enlisted | Yes | ||||

| Fear The Wolves | Yes | ||||

| Final Fantasy XV | Yes | ||||

| Fractured Lands | Yes | ||||

| Hellblade: Senua's Sacrifice | Yes | ||||

| Hitman 2 | Yes | ||||

| In Death | Yes | ||||

| Islands of Nyne | Yes | ||||

| Justice | Yes | Yes | |||

| JX3 | Yes | Yes | |||

| KINETIK | Yes | ||||

| MechWarrior 5: Mercenaries | Yes | Yes | |||

| Metro Exodus | Yes | ||||

| Outpost Zero | Yes | ||||

| Overkill's The Walking Dead | Yes | ||||

| PlayerUnknown Battlegrounds | Yes | ||||

| ProjectDH | Yes | ||||

| Remnant: From the Ashes | Yes | ||||

| SCUM | Yes | ||||

| Serious Sam 4: Planet Badass | Yes | ||||

| Shadow of the Tomb Raider | Yes | ||||

| Stormdivers | Yes | ||||

| The Forge Arena | Yes | ||||

| We Happy Few | Yes | ||||

| Wolfenstein II | Yes | ||||

111 Comments

Tamz_msc - Saturday, September 15, 2018 - link

"Besides, what you said isn't true even limiting the discussion to what was covered in this article. The Turing Tensor cores allow for a greater range of precisions."

You mean lower precision, right? INT8 and INT4 are lower range. From a higher-level view Volta is very similar to Turing, just like the OP described.

Yojimbo - Saturday, September 15, 2018 - link

"greater range of precisions"

INT8, INT4, FP16, etc., are precisions. The range of precisions an architecture can handle is the set of all precisions it can handle. Turing Tensor Cores can handle INT4, INT8, and FP16, whereas Volta Tensor Cores can handle FP16. So Turing can handle a greater range of precisions.

Bulat Ziganshin - Friday, September 14, 2018 - link

I would pray for 2060 w/o all this RT/FP16 stuff

Spunjji - Monday, September 17, 2018 - link

Seems likely given how nutso these die sizes are. I expect we won't see it until after Pascal inventory is cleared, though.

Da W - Friday, September 14, 2018 - link

Well still playing on my 3-screen Haswell + GTX780 rig, and being pretty satisfied of it, i'll probably just get a cheap GTX 1070 or 1080 for my new Ryzen rig and wait if ray tracing really gets adopted in 1 or 2 years. Seems to me lots of transistors invested for not many games. If history told us anything, it's not because a technology is great that it will get adopted, especially if it asks LOADS more developper time for the game companies.

Not sure AMD won't come up with something either down the line. They've been given for dead for over 2 decades, guess where they are now!

Holliday75 - Monday, September 17, 2018 - link

I am waiting as well. This is the first attempt to change the game. Next gen or two is where it will be fined tuned and worth purchasing. This feels like a 4k TV purchase. Waste of money.

abufrejoval - Friday, September 14, 2018 - link

I wonder how much Turing is about staking out territorial claims vs. dark silicon also coming to GPUs...

Obviously Nvidia wants to protect its CUDA machine learning and HPC empire against custom ASIC competitors which finally also include Intel with their Configurable Spatial Accellerator, as well as Cambricon, Google's TPU ASICs and far too many others for comfort.

But while many seem to bemoan that tensor core or rasterizing real-estate is a waste for gaming and just about raising the purchase prices with overhyped features nobody needs, I wonder if apart from the partial truth in that the other motivating driver is simply that the inability to translate additional transistors into additional performance as additional bandwidth requires step changes in GDDR6 lanes (with unshrinkable pad areas and amplifiers) and hits foundry reticle sizes.

So they had transistors left over (wonder where those came from without a die shrink: I/O voltage reduction, layout optimizations, really bigger chips?), that could not be turned into direct DX1x performance gains due to bandwidth and TDP constraints and going to a richer functional base with Tensor Cores and raytrace assists would eat alternate bandwidth or TDP budgets, not additional ones.

Any truth in those assumptions?

abufrejoval - Friday, September 14, 2018 - link

ok, much bigger chips...

And no rip-off: They are worth what they are charging if only for the inference accelleration.

Yojimbo - Saturday, September 15, 2018 - link

I am not convinced the Tensor Cores take up a lot of real estate. And they are tightly integrated into NVIDIA's SMs. Designing two SMs, one with Tensor Cores and one without Tensor Cores would be a lot more expensive than leaving them in. Plus, NVIDIA sees deep learning as important for gaming.

Your argument about FLOPS per bandwidth does have validity. It's just that neither Tensor Cores nor RT cores were just thrown in there because they had transistors left over. Look at the die sizes of these new GPUs compared to Pascal GPUs. If they built a smaller chip that performed the same in legacy games then they could sell them more cheaply, and so sell more of them, while making the same profit on each one. That would mean higher margins and greater profits.

The RTX and Tensor Cores are a strategic initiative. I think in making the decision to include them NVIDIA judged that those two technologies would have a positive impact on the future of gaming. The reason they made that judgment may include the dwindling FLOPS/memory bandwidth trend.

bernstein - Friday, September 14, 2018 - link

really interesting time in gpu's right now... remember a decade ago when intel teased a x86-gpu that promised to do real-time raytracing?

yet turing may turn out to provide an abysmal price/perf ratio.

- about half the transistors will only be used in a few upcoming games, they could be used to possibly double performance in rasterization-only games (7nm amd navi anyone?)

- but if (hybrid-)raytracing takes off quickly, turing will be crushed by 7nm gpu's dedicating way more transistors to the task, as it's performance is still skewed heavily towards rasterization

- ai inferencing seems like a safe bet, again i'd wager that DLSS will only ever work with the vast minority of games released each day on steam, so it's usefulness will depends on whether developers make other use of the available silicon... (better AI opponents anyone?)