The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

Turing Tensor Cores: Leveraging Deep Learning Inference for Gaming

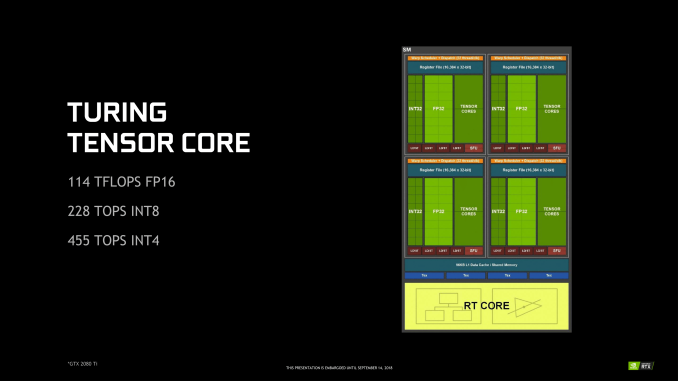

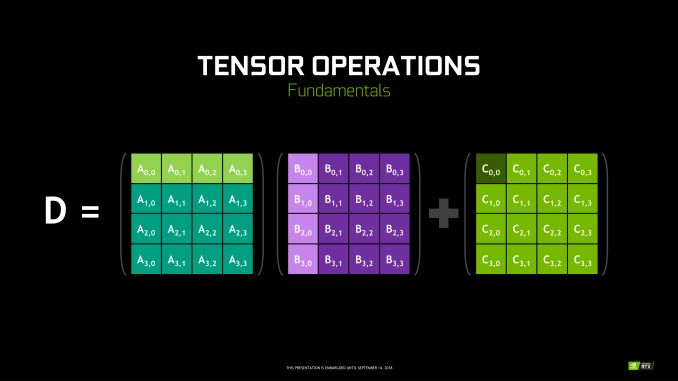

Though RT Cores are Turing’s poster child feature, the tensor cores were very much Volta’s. In Turing, they’ve been updated to reflect their positioning as a gaming/consumer feature via inferencing. The main changes for the 2nd generation tensor cores are INT8 and INT4 precision modes for inferencing, enabled by new hardware data paths, which perform dot products and accumulate the results into an INT32 value. INT8 mode operates at double the FP16 rate, or 2048 integer operations per clock. INT4 mode operates at quadruple the FP16 rate, or 4096 integer ops per clock.
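As a rough illustration of the paragraph above, the following Python sketch models an INT8 dot product that accumulates into an INT32-sized result, plus the rate arithmetic implied by the quoted figures. It is a software model only, not how the hardware or any NVIDIA API is implemented; the function names `int8_dot_int32` and `relative_rates` are hypothetical, and the FP16 figure is simply derived from the statement that INT8 runs at double the FP16 rate.

```python
# Software model of an INT8 dot product with INT32 accumulation.
# Assumption: names and structure are illustrative, not an NVIDIA API.

INT8_MIN, INT8_MAX = -128, 127
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def int8_dot_int32(a, b):
    """Multiply INT8 pairs and sum them in an accumulator checked against INT32."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    acc = 0
    for x, y in zip(a, b):
        if not (INT8_MIN <= x <= INT8_MAX and INT8_MIN <= y <= INT8_MAX):
            raise ValueError("inputs must fit in INT8")
        acc += x * y  # a single product can already exceed the INT8 range
        if not (INT32_MIN <= acc <= INT32_MAX):
            raise OverflowError("accumulator left the INT32 range")
    return acc


def relative_rates(int8_ops_per_clock=2048, int4_ops_per_clock=4096):
    """Derive the FP16 rate from the article's figures (INT8 = 2x FP16)."""
    fp16_ops_per_clock = int8_ops_per_clock // 2
    return {
        "fp16": fp16_ops_per_clock,
        "int8": int8_ops_per_clock,
        "int4": int4_ops_per_clock,
    }


if __name__ == "__main__":
    print(int8_dot_int32([127, 127], [127, 127]))
    print(relative_rates())
```

The accumulator is wider than the inputs because even two maximal INT8 products sum to a value far outside the 8-bit range, which is why the result is kept as INT32.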

Naturally, only some networks tolerate these lower precisions and the necessary quantization, meaning the storage and calculation of data in a compacted format. INT4 is firmly in the research area, whereas INT8’s practical applicability is much more developed. Regardless, the 2nd generation tensor cores still have an FP16 mode, and they now also support a pure FP16 mode without an FP32 accumulator. While CUDA 10 is not yet out, its enhanced WMMA operations should shed light on any other differences, such as additional accepted matrix sizes for operands.
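To make the idea of quantization concrete, here is a minimal Python sketch of one common scheme, symmetric per-tensor INT8 quantization. This scheme is an assumption chosen for illustration; the article does not say which quantization method any particular network or NVIDIA tool uses, and the helper names `quantize_int8` and `dequantize` are hypothetical.

```python
# Symmetric per-tensor INT8 quantization, as an illustrative sketch only.
# Assumption: the scale is chosen so the largest magnitude maps to 127.


def quantize_int8(values):
    """Map floats to integers in [-127, 127] and return them with the scale."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the quantized integers."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.1, -1.0, 0.3]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    for original, approx in zip(weights, restored):
        print(f"{original:+.4f} -> {approx:+.4f}")
```

The round trip loses at most about half a quantization step per value, which is the precision cost that only some networks tolerate.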

Inasmuch as deep learning is involved, NVIDIA is pushing what was a purely compute/professional feature into consumer territory, and we will go over the full picture in a later section. For Turing, the tensor cores can accelerate the features under the NGX umbrella, which includes DLSS. They can also accelerate certain AI-based denoisers that clean up and correct real-time raytraced rendering, though most developers seem to be opting for non-tensor core accelerated denoisers at the moment.

111 Comments

Alistair - Sunday, September 16, 2018 - link

Except for the GTX 780 was the worse nVidia release ever, at a terrible price. Nice try ignoring every other card in the last 10 years.

markiz - Monday, September 17, 2018 - link

How can it be the same segment of the market, if the prices are, as you claim, double+? I mean, that claim makes no sense. It's not same segment. it's higher tier.

I mean, who is to say what kind of an advancement in GPU and games have people supposed to be getting?

Buy a 500$ card and max settings as far as they go and call it a day.

If you are

Ej24 - Monday, September 17, 2018 - link

The R&D for smaller manufacturing nodes hasn't scaled linearly. It's been almost exponential in terms of $/Sq.mm to develop each new node. That's why we need die shrinks to cram more transistors per square mm, and why some nodes were skipped because the economics didn't work out, like 20/22nm gpu's never existed. You're assuming that manufacturers have fixed costs that have never changed. The cost of a semiconductor fab, and R&D for new nodes has ballooned much much faster than inflation. That's why we've seen the number of fabs plummet with every new node. There used to be dozens of fabs in the 90nm days and before. Now it's looking like only 3 or 4 will be producing 7nm and below. It's just gotten too expensive for anyone to compete.

milkod2001 - Tuesday, September 18, 2018 - link

All those ridiculous prices started when AMD have announced 7970 at $550 plus. NV had mid range card to compete with it: GTX 680 at the same price. And then NV Titan high end cards were introduced at $1000 plus. Since then we pay past high end prices for mid range cards.

futrtrubl - Wednesday, September 19, 2018 - link

Just a bit on your math. You say $1 accounting for inflation of 2.7% over 18 years is now just less than $1.50. Maybe you are doing it as $1 * 18 * 1.027 to get that which is incorrect for inflation. It compounds, so it should be $1 * ( 1.027^18) which comes to ~$1.62. Likewise at 5% over 18 years it becomes $2.41.

Da W - Sunday, September 16, 2018 - link

Since when does inflation work in the semiconductor industry?

Holliday75 - Monday, September 17, 2018 - link

I was wondering the same thing. Smaller, faster, cheaper. For some reason here its the opposite....for 2 out of 3.

Yojimbo - Saturday, September 15, 2018 - link

"You must literally live under a rock while also being absurdly naive. It's never been this way in the 20 years that i've been following GPUs. These new RTX GPUs are ridiculously expensive, way more than ever, and the prices will not be changing much at all when there's literally zero competition. The GPU space right now is worse than it's ever been before in history."

No, if you go back and look at historical GPU prices, adjusted for inflation, there have been other times that newly released graphics cards were either as expensive or more expensive. The 700 series is the most recent example of cards that were as expensive as the 20 series is.

eddman - Saturday, September 15, 2018 - link

No. https://i.imgur.com/ZZnTS5V.png

This chart was made last year based on 2017 dollar value, but it still applies. 20 series cards have the highest launch prices in the past 18 years by a large margin.

eddman - Saturday, September 15, 2018 - link

There is one card that surpasses that, 8800 Ultra. It was nothing more than a slightly OCed 8800 GTX. Nvidia simply released it to extract as much money as possible, and that was made possible because of lack of proper competition from ATI/AMD in that time period.