The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

Turing RT Cores: Hybrid Rendering and Real Time Raytracing

As implemented in Turing, real-time raytracing doesn’t completely replace traditional rasterization-based rendering; instead, it exists as part of Turing’s ‘hybrid rendering’ model. In other words, rasterization is used for most rendering, while raytracing techniques are used for select graphical effects. Meanwhile, the ‘real-time’ performance is generally achieved with a very small number of rays (e.g. 1 or 2) per pixel, and a very large amount of denoising.
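To make the link between low ray counts and heavy denoising concrete, here is a minimal sketch. It assumes each ray is a binary shadow test against a light with a known true visibility, and it uses a plain box filter as a stand-in denoiser. The function names are illustrative, and nothing here reflects NVIDIA's actual denoisers.

```python
import math
import random

def standard_error(visibility: float, rays: int) -> float:
    """Std. deviation of a per-pixel estimate built from `rays` binary shadow rays."""
    return math.sqrt(visibility * (1.0 - visibility) / rays)

def render_row(visibility, rays, width, rng):
    """Estimate the same true visibility at `width` pixels, `rays` rays per pixel."""
    return [sum(rng.random() < visibility for _ in range(rays)) / rays
            for _ in range(width)]

def box_denoise(row, radius):
    """Average each pixel with its neighbours (window clamped at the row edges)."""
    out = []
    for i in range(len(row)):
        window = row[max(0, i - radius): i + radius + 1]
        out.append(sum(window) / len(window))
    return out

def rms_error(row, truth):
    """Root-mean-square deviation of the row from the true value."""
    return math.sqrt(sum((v - truth) ** 2 for v in row) / len(row))

if __name__ == "__main__":
    rng = random.Random(0)          # fixed seed so the run is repeatable
    noisy = render_row(0.5, 1, 256, rng)
    smooth = box_denoise(noisy, 4)
    print("1 ray/pixel RMS error:", rms_error(noisy, 0.5))
    print("after box filter     :", rms_error(smooth, 0.5))
```

With one ray per pixel, every pixel is either fully lit or fully shadowed, so the per-pixel error is as large as it can be. The error only falls with the square root of the ray count, which is why borrowing information from neighbouring pixels is so much cheaper than casting more rays.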

The specific implementation is ultimately in the hands of developers, and NVIDIA naturally has their raytracing development ecosystem, which we’ll go over in a later section. But because of the computational intensity, it simply isn’t possible to use real-time raytracing for the complete rendering workload. And higher resolutions, more complex scenes, and numerous graphical effects also compound the difficulty. So for performance reasons, developers will be utilizing raytracing in a deliberate and targeted manner for specific effects, such as global illumination, ambient occlusion, realistic shadows, reflections, and refractions. Likewise, raytracing may be limited to specific objects in a scene, and rasterization and z-buffering may replace primary ray casting while only secondary rays are raytraced. Thus, the goal of developers is to use raytracing for the most noticeable and realistic effects that rasterization cannot accomplish.

Essentially, this style of ‘hybrid rendering’ involves a lot less raytracing than one might imagine from the marketing material. Perhaps a blunt way to generalize might be: real time raytracing in Turing typically means only certain objects are being rendered with certain raytraced graphical effects, using a minimal number of rays per pixel and/or only raytracing secondary rays, and using a lot of denoising filtering; anything more would affect performance too much. Interestingly, explaining all the caveats this way both undersells and oversells the technology, and therein lies the paradox. Even in this very circumscribed way, GPU performance is significantly affected, but image quality is enhanced with a realism that cannot be provided by a higher resolution or better anti-aliasing. At the same time, ‘real time’ interactivity in gaming essentially means a minimum of 30 to 45 fps, and lowering the render resolution to achieve those framerates hurts image quality. What complicates this is that real time raytracing is indeed considered the ‘holy grail’ of computer graphics, and so managing the feat at all is a big deal, but there are equally valid professional and consumer perspectives on how that translates into a compelling product.

On that note, then, NVIDIA accomplished what the industry was not expecting to be possible for at least a few more years, and certainly not at this scale or with this kind of development ecosystem. Real time raytracing is the culmination of a decade or so of work, and the Turing RT Cores are the lynchpin. But in building up to it, NVIDIA summarizes the achievement as a result of:

- Hybrid rendering pipeline

- Efficient denoising algorithms

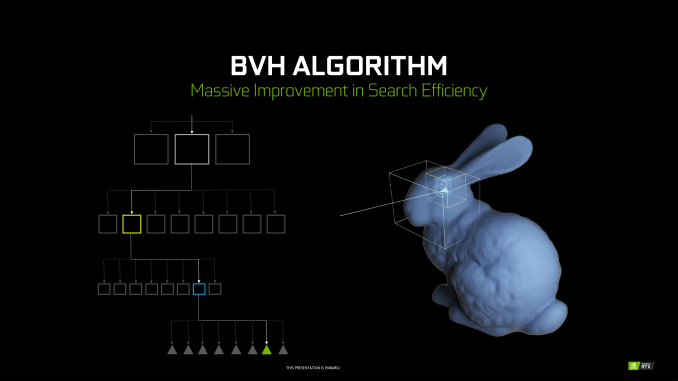

- Efficient BVH algorithms

By themselves, these developments were unable to improve raytracing efficiency, but they set the stage for RT Cores. By virtue of raytracing’s importance in the world of computer graphics, NVIDIA Research has been looking into various BVH implementations for quite some time, as well as exploring architectural concerns for raytracing acceleration, something easily noted from their patents and publications. The same goes for denoising, though the latest trend has veered towards using AI and, by extension, Tensor Cores. When BVH became a standard of sorts, NVIDIA was able to design a corresponding fixed function hardware accelerator.

Because the RT Cores are so crucial to their achievement, NVIDIA is not disclosing many details about them or their BVH implementation. Of the details given, much is somewhat generic. To reiterate, BVH is a rather general category, and modern raytracing acceleration structures are typically BVH or kd-tree based.

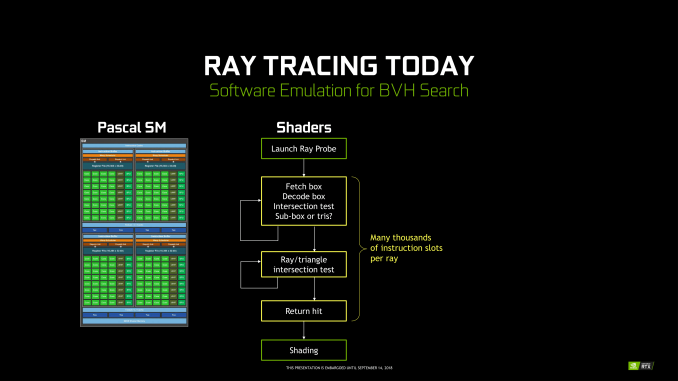

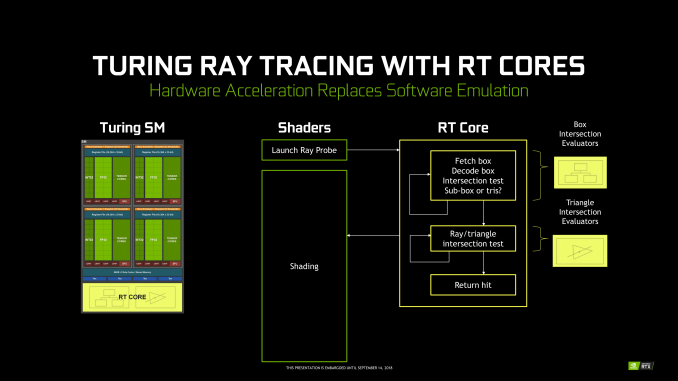

Unlike Tensor Cores, which are better seen as an FMA array alongside the FP and INT cores, the RT Cores are more like a classic offloading IP block. The sub-cores treat them much like texture units: instructions bound for the RT Cores are routed out of the sub-core, which is later notified on completion. Upon receiving a ray probe from the SM, the RT Core proceeds to autonomously traverse the BVH and perform ray-intersection tests. This type of ‘traversal and intersection’ fixed function raytracing accelerator is a well-known concept and has had quite a few implementations over the years, as traversal and intersection testing are two of the most computationally intensive tasks involved. In comparison, traversing the BVH in shaders would require thousands of instruction slots per ray cast, all for testing against bounding box intersections in the BVH.
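For a sense of what ‘traversal and intersection’ means in software, here is a minimal sketch of a stack-based BVH walk using the standard slab test for ray versus axis-aligned box. It is a generic textbook formulation, not NVIDIA's undisclosed implementation; the `Node` layout, the helper names and the four-leaf demo tree are all assumptions made for illustration, and leaves stand in for primitives (a real tracer would run a ray-triangle test at that point).

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]

@dataclass
class Node:
    lo: Vec3                          # minimum corner of the bounding box
    hi: Vec3                          # maximum corner of the bounding box
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    prim: Optional[int] = None        # primitive id, set only on leaves

def hit_aabb(origin: Vec3, direction: Vec3, lo: Vec3, hi: Vec3) -> bool:
    """Slab test: does the ray (t >= 0) overlap the box on all three axes?"""
    t_near, t_far = 0.0, math.inf
    for o, d, l, h in zip(origin, direction, lo, hi):
        if d == 0.0:
            # Ray is parallel to this slab: it must start inside it.
            if o < l or o > h:
                return False
            continue
        t0, t1 = (l - o) / d, (h - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
        if t_near > t_far:
            return False
    return True

def traverse(root: Node, origin: Vec3, direction: Vec3):
    """Return (ids of leaves whose boxes the ray hits, number of box tests)."""
    hits, tests, stack = [], 0, [root]
    while stack:
        node = stack.pop()
        tests += 1
        if not hit_aabb(origin, direction, node.lo, node.hi):
            continue                  # prune this whole subtree
        if node.prim is not None:
            hits.append(node.prim)    # a real tracer would test the primitive here
        else:
            stack.extend((node.right, node.left))
    return hits, tests

def build_demo_tree() -> Node:
    """Hand-built tree over four unit boxes spaced along the x axis."""
    leaves = [Node((2.0 * i, 0.0, 0.0), (2.0 * i + 1.0, 1.0, 1.0), prim=i)
              for i in range(4)]
    left = Node((0.0, 0.0, 0.0), (3.0, 1.0, 1.0), leaves[0], leaves[1])
    right = Node((4.0, 0.0, 0.0), (7.0, 1.0, 1.0), leaves[2], leaves[3])
    return Node((0.0, 0.0, 0.0), (7.0, 1.0, 1.0), left, right)

if __name__ == "__main__":
    print(traverse(build_demo_tree(), (4.5, 0.5, -1.0), (0.0, 0.0, 1.0)))
```

Even on this toy tree, a single ray performs several box tests, each a handful of divides, compares and branches, before it reaches a leaf. Scaled up to scenes with millions of triangles and millions of rays per frame, this loop is the part that a fixed function unit takes off the shaders.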

Returning to the RT Core, it will then return any hits and let the shaders implement the result. The RT Core also handles some grouping and scheduling of memory operations for maximizing memory throughput across multiple rays. And given the workload, there is presumably some amount of memory and/or ray buffer within the SIP block as well. Like in many other workloads, memory bandwidth is a common bottleneck in raytracing, and has been the focus of several NVIDIA Research papers. And in general, raytracing workloads result in very irregular and random memory accesses, mainly due to incoherent rays, that prove especially problematic for how GPUs typically utilize their memory.
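One generic way to tame incoherent rays in software is to group them so that rays heading in similar directions are processed together and tend to touch the same BVH nodes. The sketch below bins rays by the sign octant of their direction. It is an illustrative technique only; how the RT Core actually groups or schedules its memory operations has not been disclosed, and the function names here are hypothetical.

```python
from collections import defaultdict

def octant(direction):
    """3-bit key built from the signs of the x, y and z direction components."""
    dx, dy, dz = direction
    return int(dx < 0) | (int(dy < 0) << 1) | (int(dz < 0) << 2)

def bin_rays(rays):
    """Group ray indices by direction octant; `rays` is a list of (origin, direction)."""
    bins = defaultdict(list)
    for ray_id, (_origin, direction) in enumerate(rays):
        bins[octant(direction)].append(ray_id)
    return dict(bins)

if __name__ == "__main__":
    rays = [
        ((0.0, 0.0, 0.0), (1.0, 1.0, 1.0)),
        ((0.0, 0.0, 0.0), (-1.0, 1.0, 1.0)),
        ((0.0, 0.0, 0.0), (1.0, 2.0, 3.0)),
        ((0.0, 0.0, 0.0), (-1.0, -1.0, -1.0)),
    ]
    print(bin_rays(rays))
```

Rays 0 and 2 land in the same bin because they travel in broadly the same direction, so tracing them back to back is more likely to reuse recently fetched nodes than tracing them in arrival order.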

But otherwise, everything else is at a high level governed by the API (i.e. DXR) and the application; construction and update of the BVH is done on CUDA cores, governed by the particular IHV – in this case, NVIDIA – in their DXR implementation.

All-in-all, there’s clearly more involved, and we’ll be looking to run some microbenchmarks in the future. NVIDIA’s custom BVH algorithms are clearly in play, but right now we can’t say what the optimizations might be, such as compression, wide BVHs, or node subdivision into treelets. The way the RT Cores are integrated into the SM and into the architecture is likely crucial to how well they operate. Internally, the RT Core might just be a basic traversal and intersection unit, but it might also have other bits inside; one of NVIDIA’s recent patents provides a representation, albeit dated, of what else might be present. I, for one, would not be surprised to see it closely tied with the MIO blocks, and perhaps doing more with coherency gathering by manipulating memory traffic for higher efficiency. It would need to coordinate well with the other workloads in the SMs without strangling memory access with unmitigated incoherent rays.

Nevertheless, details like performance impact are as yet unspecified.

111 Comments

Alistair - Sunday, September 16, 2018 - link

Except the GTX 780 was the worst nVidia release ever, at a terrible price. Nice try ignoring every other card in the last 10 years.

markiz - Monday, September 17, 2018 - link

How can it be the same segment of the market, if the prices are, as you claim, double+? I mean, that claim makes no sense. It's not the same segment. It's a higher tier.

I mean, who is to say what kind of advancement in GPUs and games people are supposed to be getting?

Buy a 500$ card and max settings as far as they go and call it a day.

If you are

Ej24 - Monday, September 17, 2018 - link

The R&D for smaller manufacturing nodes hasn't scaled linearly. It's been almost exponential in terms of $/Sq.mm to develop each new node. That's why we need die shrinks to cram more transistors per square mm, and why some nodes were skipped because the economics didn't work out, like 20/22nm GPUs never existed. You're assuming that manufacturers have fixed costs that have never changed. The cost of a semiconductor fab, and R&D for new nodes has ballooned much much faster than inflation. That's why we've seen the number of fabs plummet with every new node. There used to be dozens of fabs in the 90nm days and before. Now it's looking like only 3 or 4 will be producing 7nm and below. It's just gotten too expensive for anyone to compete.

milkod2001 - Tuesday, September 18, 2018 - link

All those ridiculous prices started when AMD announced the 7970 at $550 plus. NV had a mid range card to compete with it: the GTX 680 at the same price. And then NV Titan high end cards were introduced at $1000 plus. Since then we pay past high end prices for mid range cards.

futrtrubl - Wednesday, September 19, 2018 - link

Just a bit on your math. You say $1 accounting for inflation of 2.7% over 18 years is now just less than $1.50. Maybe you are doing it as $1 * 18 * 1.027 to get that which is incorrect for inflation. It compounds, so it should be $1 * ( 1.027^18) which comes to ~$1.62. Likewise at 5% over 18 years it becomes $2.41.

Da W - Sunday, September 16, 2018 - link

Since when does inflation work in the semiconductor industry?

Holliday75 - Monday, September 17, 2018 - link

I was wondering the same thing. Smaller, faster, cheaper. For some reason here it's the opposite....for 2 out of 3.

Yojimbo - Saturday, September 15, 2018 - link

"You must literally live under a rock while also being absurdly naive.It's never been this way in the 20 years that i've been following GPUs. These new RTX GPUs are ridiculously expensive, way more than ever, and the prices will not be changing much at all when there's literally zero competition. The GPU space right now is worse than it's ever been before in history."

No, if you go back and look at historical GPU prices, adjusted for inflation, there have been other times that newly released graphics cards were either as expensive or more expensive. The 700 series is the most recent example of cards that were as expensive as the 20 series is.

eddman - Saturday, September 15, 2018 - link

No.

https://i.imgur.com/ZZnTS5V.png

This chart was made last year based on 2017 dollar value, but it still applies. 20 series cards have the highest launch prices in the past 18 years by a large margin.

eddman - Saturday, September 15, 2018 - link

There is one card that surpasses that, 8800 Ultra. It was nothing more than a slightly OCed 8800 GTX. Nvidia simply released it to extract as much money as possible, and that was made possible because of lack of proper competition from ATI/AMD in that time period.