The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

Turing Tensor Cores: Leveraging Deep Learning Inference for Gaming

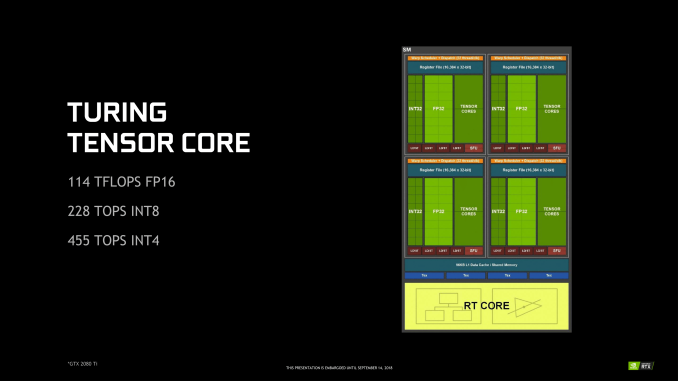

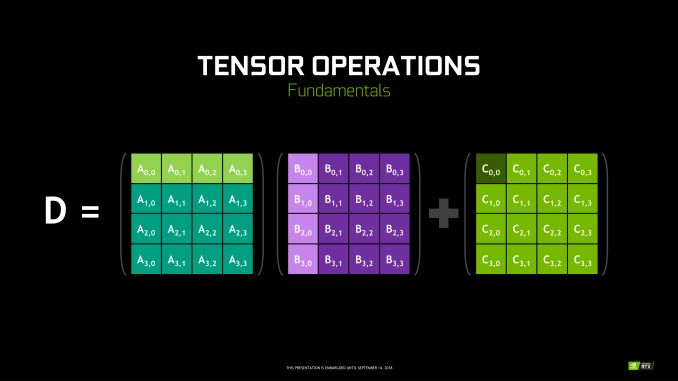

Though RT Cores are Turing’s poster child feature, tensor cores were very much Volta’s. In Turing, they have been updated to reflect their positioning as a gaming/consumer feature via inferencing. The main changes for the 2nd generation tensor cores are INT8 and INT4 precision modes for inferencing, enabled by new hardware data paths that perform dot products and accumulate the results in INT32. INT8 mode operates at double the FP16 rate, or 2048 integer operations per clock. INT4 mode operates at quadruple the FP16 rate, or 4096 integer operations per clock.

Naturally, only some networks tolerate these lower precisions and the quantization they require, that is, storing and computing on data in a compacted format. INT4 is firmly in the research area, whereas INT8’s practical applicability is much more developed. Regardless, the 2nd generation tensor cores still support FP16, now including a pure FP16 mode without an FP32 accumulator. While CUDA 10 is not yet out, its enhanced WMMA operations should shed light on any other differences, such as additional accepted matrix sizes for operands.
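To make the INT8 path more concrete, here is a minimal scalar sketch of the arithmetic involved: floating-point values are quantized to 8-bit integers, multiplied pairwise, and accumulated in a 32-bit integer. It only illustrates the number formats. It is not NVIDIA's hardware implementation or the WMMA API, the function names are hypothetical, and the symmetric per-tensor scale is an assumed quantization scheme that the article does not specify.

```python
def symmetric_scale(values):
    """Assumed scheme: pick a scale so the largest magnitude maps to 127."""
    peak = max(abs(v) for v in values)
    return peak / 127 if peak else 1.0


def quantize_int8(values, scale):
    """Round each value to the nearest step and clamp to the signed 8-bit range."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def int8_dot_int32(a_q, b_q):
    """Multiply INT8 operands pairwise and accumulate the products as INT32."""
    acc = 0
    for a, b in zip(a_q, b_q):
        acc += a * b
    # Python integers are unbounded, so wrap to the signed 32-bit range
    # to mimic an INT32 accumulator.
    return (acc + 2**31) % 2**32 - 2**31


# Illustrative data only; these numbers do not come from the article.
weights = [0.5, -1.0, 0.25, 0.75]
activations = [1.0, 2.0, -0.5, 0.0]

w_scale = symmetric_scale(weights)
a_scale = symmetric_scale(activations)

acc = int8_dot_int32(quantize_int8(weights, w_scale),
                     quantize_int8(activations, a_scale))

# Rescale the integer accumulator back to a floating-point estimate.
approx = acc * w_scale * a_scale
exact = sum(w * a for w, a in zip(weights, activations))
print(f"exact={exact:.4f} approx={approx:.4f}")
```

The gap between `exact` and `approx` is the quantization error, which is why only some networks tolerate these lower precisions.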

Inasmuch as deep learning is involved, NVIDIA is pushing what was a purely compute/professional feature into consumer territory, and we will go over the full picture in a later section. For Turing, the tensor cores can accelerate the features under the NGX umbrella, which includes DLSS. They can also accelerate certain AI-based denoisers that clean up and correct real-time raytraced rendering, though most developers seem to be opting for denoisers that are not tensor core accelerated at the moment.

111 Comments


Spunjji - Monday, September 17, 2018 - link

There's no such thing as a bad product, just bad pricing. AMD aren't out of the game but they are playing in an entirely different league.

siberian3 - Friday, September 14, 2018 - link

Good architectural leap for nvidia but it is sad very few of gamers can afford the new cards. And AMD is not doing anything for 2018 and probably navi will be mid range on 7nm

V900 - Friday, September 14, 2018 - link

Meh, it’s always been that way with the newest, fastest GPUs. Wait 6 months to a year, and prices will be where people with more modest budgets can play along.

B3an - Friday, September 14, 2018 - link

You must literally live under a rock while also being absurdly naive. It's never been this way in the 20 years that i've been following GPUs. These new RTX GPUs are ridiculously expensive, way more than ever, and the prices will not be changing much at all when there's literally zero competition. The GPU space right now is worse than it's ever been before in history.

Amandtec - Friday, September 14, 2018 - link

I read somewhere that 8800GTX + inflation = 2080ti price

Without factoring in inflation the prices seem unprecedented.

Yojimbo - Saturday, September 15, 2018 - link

And you must factor in inflation, otherwise you are just pushing numbers around.

Yojimbo - Saturday, September 15, 2018 - link

And comparing the 2080 Ti to previous flagship launch cards is not really proper. The 2080 Ti is a different tier of card. The die size is so much larger than any previous launch GPU. It's just a demonstration of the increase in the amount of resources people are willing to devote to their GPUs, not an indication of an inflation of GPU prices.

eddman - Saturday, September 15, 2018 - link

2006 $600 at 2018 dollar value = $750

Samus - Saturday, September 15, 2018 - link

What inflation, exactly are you talking about. The dollar hasn't had a substantial change in valuation for 20 years (compared to other first-world currency.)

The USD inflation rate has averaged around 2.7%/year since 2000. That means one dollar in 2000 is now worth slightly less than $1.50 today. That means the top-of-the-line GPU released in 2000, I'd take a guess it was the Geforce2 GTS and/or the 3Dfx Voodoo5 5500, both cost $300.

For those who want to throw in cards like the Geforce 2 Ultra and the Voodoo5 6000, the former a card for nVidia to 'probe' the market for how much they could milk it going forward (and creating the situation we have today) and the other a card that never actually "launched"...we can include them for fun. The Ultra launched at $500 (even though it was slower than the Geforce 3 that launched 3 months later) and the Voodoo5 6000 had an MSRP set by 3Dfx at $500.

These were the most expensive gaming-focused GPU's ever made up until that date. Even SLI setups didn't cost $500 (the most expensive Voodoo2 card in the 90's was from Creative Labs @$229/ea - you needed two cards of course - so $460.)

Ok, so you have the absolute cream-of-the-crop cards in 2000 at $500, one was a marketing stunt, and the other never launched because nobody would have bought it. Realistically the most expensive cards were $300. But we will go with $500.

The most expensive high-end gaming focused cards now are $1000+

That would assume an inflation rate of over 5% annually, or the value of the dollar DOUBLING over 2 decades. Which it didn't come close to doing.

Stop using inflation as an excuse. It's bullshit. These companies are fucking greedy. Especially nVidia. They are effectively charging FOUR TIMES more than they used to for the same market segment card. 20 years ago you would have bought a TNT2 Ultra for $230 bucks and had the ultimate card available. Most people purchased entirely capable mainstream cards for $100-$150 like the TNT2 Pro or the Geforce2 MX400 that ran the most demanding games of the day like Counter Strike and Half-Life at 1024x768 in maximum detail.

http://www.in2013dollars.com/2000-dollars-in-2018?...

Yojimbo - Saturday, September 15, 2018 - link

"What inflation, exactly are you talking about."

CPI. Consumer Price Index. Even though inflation has been low for quite a while, $649 in 2013 is $697 today. That's almost $50 more, and it's enough to make up the difference between the 2013 launch price of the GTX 780 and the 2018 launch price of the RTX 2080.

I'm not sure why you are talking about cards from 20+ years ago. It's not relevant to my reply. In any case, those cards were completely different. The die sizes were much smaller and the cards were much less capable. They did a lot less of the work, as much of it was done on the CPU. The CPU was much more important to the game performance than today, as was the RAM and other components that were worth spending money on to significantly improve the gaming performance/experience.

"Stop using inflation as an excuse."

I'm not using inflation as an excuse. I'm using inflation as a tool to accurately compare the prices of cards from different years. And doing so clearly shows that the claim that the OP made is wrong. My reply had nothing to do with whether cards were in general cheaper 20 years ago or not. It was in response to "These new RTX GPUs are ridiculously expensive, way more than ever". That's provably untrue. Why are you replying to me and arguing about some entirely different point I wasn't ever talking about?