The Asus ROG Swift PG27UQ G-SYNC HDR Monitor Review: Gaming With All The Bells and Whistles

by Nate Oh on October 2, 2018 10:00 AM EST

Posted in: Monitors, Displays, Asus, NVIDIA, G-Sync, PG27UQ, ROG Swift PG27UQ, G-Sync HDR

SDR Color Modes: sRGB and Wide Gamut

Pre-calibration and calibration of the monitor are done with SpectraCal’s CalMAN 5 suite. For contrast and brightness, the X-Rite i1DisplayPro colorimeter is used, but for the actual color accuracy readings we use the X-Rite i1Pro spectrophotometer. Pre-calibration measurements were done at 200 nits for sRGB and Wide Gamut with Gamma set to 2.2.

The PG27UQ comes with two color modes for SDR input: 'sRGB' and 'Wide Gamut.' Advertised as DCI-P3 coverage, the actual 'Wide Gamut' sits somewhere between DCI-P3 and BT.2020, which is right in line with the minimum coverages required by DisplayHDR 1000 and UHD Premium. Consequently, unlike the sRGB mode, the setting isn't calibrated to a specific color gamut.

Out-of-the-box, the monitor defaults to 8 bits per color, which can be changed in NVIDIA Control Panel. Either way, sRGB accuracy is very good, as the monitor comes factory-calibrated. Note that 10bpc on the PG27UQ is achieved with dithering (8bpc+FRC).
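To illustrate what 8bpc+FRC means in principle, here is a minimal sketch of temporal dithering: a 10-bit level is approximated by alternating between two adjacent 8-bit levels over a short frame cycle. The function name `frc_frames` and the fixed 4-frame cycle are assumptions for illustration only; the PG27UQ's actual dithering pattern is not documented here, and real panels typically vary the pattern spatially as well.

```python
def frc_frames(level_10bit, n_frames=4):
    """Return the 8-bit levels shown over n_frames to approximate a 10-bit level.

    Hypothetical helper: assumes a simple 4-frame temporal cycle.
    """
    if not 0 <= level_10bit <= 1023:
        raise ValueError("10-bit level must be in 0..1023")

    # A 10-bit level is 4 * (8-bit base) + remainder, with remainder in 0..3.
    base, remainder = divmod(level_10bit, 4)

    frames = []
    for i in range(n_frames):
        # Show the next-higher 8-bit level on `remainder` out of every 4 frames.
        bump = 1 if (i % 4) < remainder else 0
        # Clamp at the 8-bit maximum; the top three 10-bit levels cannot be reached.
        frames.append(min(base + bump, 255))
    return frames


frames = frc_frames(514)
average_8bit = sum(frames) / len(frames)
print(frames, average_8bit)
```

Averaged over the cycle, the eye perceives a level between the two 8-bit steps, which is why a panel driven this way can accept a 10bpc signal without having 10 bits of native resolution.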

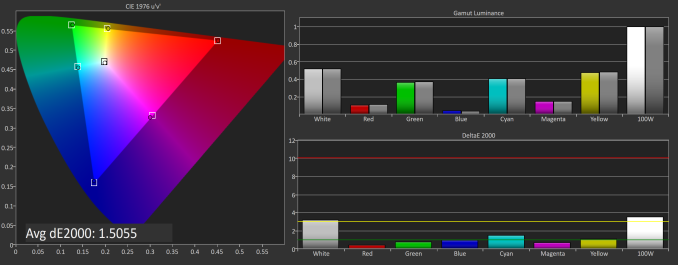

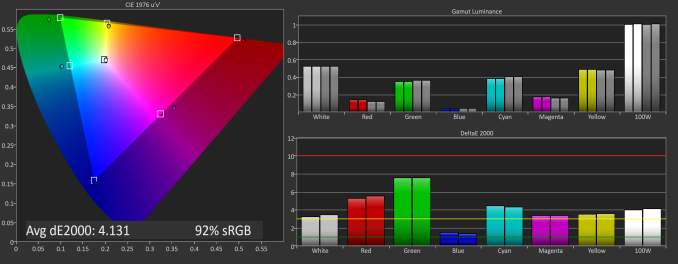

SpectraCal CalMAN sRGB color space for PG27UQ, with out-of-the-box default 8bpc (top) and default with 10bpc (bottom)

In 8bpc or 10bpc, average delta E is around 1.5, which corresponds with the included factory calibration result of 1.62; for reference, for color accuracy a dE below 1.0 is generally imperceptible and a dE below 3.0 is considered accurate.
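As a rough illustration of how a dE figure is derived and read against those thresholds, the sketch below uses the simple CIE76 formula (Euclidean distance in CIELAB). This is an assumption for clarity: the dE formula CalMAN was set to for these charts is not stated here and may be a more elaborate one, and the Lab values are made up rather than measured.

```python
import math


def delta_e_76(lab_target, lab_measured):
    """CIE76 color difference: Euclidean distance between two (L*, a*, b*) triples."""
    return math.dist(lab_target, lab_measured)


def rate(delta_e):
    """Classify a dE value using the thresholds quoted in the text."""
    if delta_e < 1.0:
        return "generally imperceptible"
    if delta_e < 3.0:
        return "accurate"
    return "visibly off"


# Hypothetical target and measured patch values, not taken from the review data.
target = (50.0, 20.0, -10.0)
measured = (51.0, 21.0, -10.5)

de = delta_e_76(target, measured)
print(f"dE = {de:.2f} ({rate(de)})")
```

An average near 1.5, as measured here, lands in the "accurate" band: errors may be detectable in side-by-side comparison but are small in normal use.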

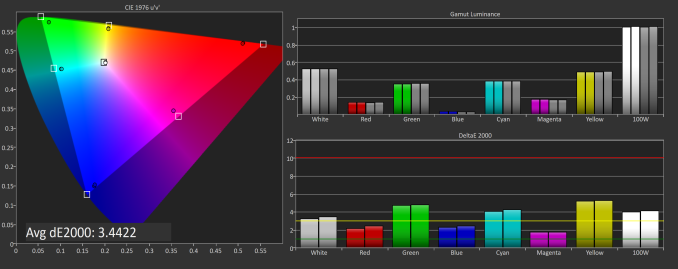

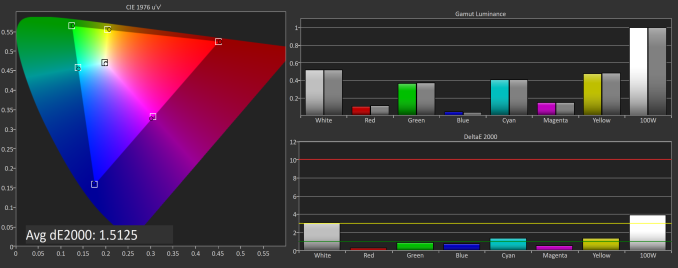

SpectraCal CalMAN DCI-P3 (above) and BT.2020 (below) color spaces for PG27UQ, on default settings with 10bpc and 'wide color gamut' enabled under SDR Input

The 'Wide Gamut' mode is not mapped to either DCI-P3 or BT.2020, sitting somewhere in between; then again, it doesn't need to be, as it would on a professional or prosumer monitor.
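For a sense of how far apart these gamuts are, the sketch below compares the triangle areas spanned by each standard's red, green, and blue primaries in CIE 1931 xy chromaticity coordinates. The primaries are the published values for each standard; the area ratio is only a crude proxy and is not the coverage metric CalMAN or the certification programs report.

```python
# Published CIE 1931 xy chromaticities of the R, G, B primaries.
PRIMARIES = {
    "sRGB":    [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)],
    "DCI-P3":  [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)],
    "BT.2020": [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)],
}


def triangle_area(points):
    """Area of a triangle from three (x, y) vertices (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2.0


areas = {name: triangle_area(pts) for name, pts in PRIMARIES.items()}

for name, area in areas.items():
    ratio = area / areas["sRGB"]
    print(f"{name:8s} area = {area:.4f}  ({ratio:.2f}x sRGB)")
```

By this measure a mode that lands between DCI-P3 and BT.2020 is considerably larger than sRGB, which is why sRGB content needs its own calibrated mode rather than being shown in the wide gamut setting.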

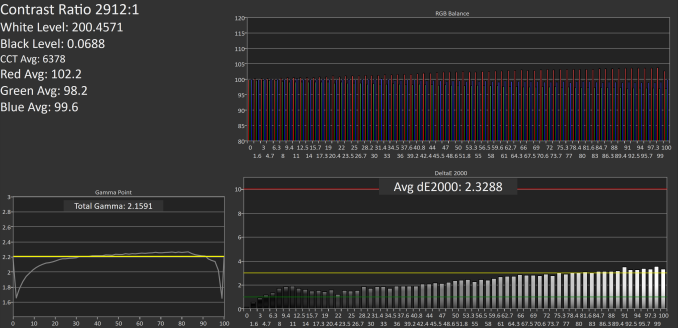

Grayscale and Saturation

Looking at color accuracy more thoroughly, we turn to grayscale and saturation readings with respect to the sRGB gamut. Gamma tracking isn't perfect, showing some dips, and the white points are a little on the warm side.

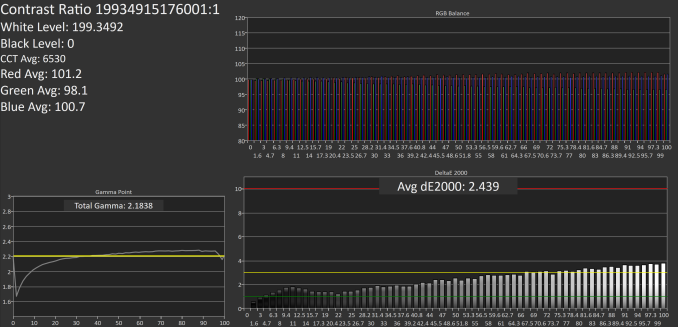

SpectraCal CalMAN sRGB color space grayscales with out-of-the-box default 8bpc (top) and default with 10bpc (bottom)

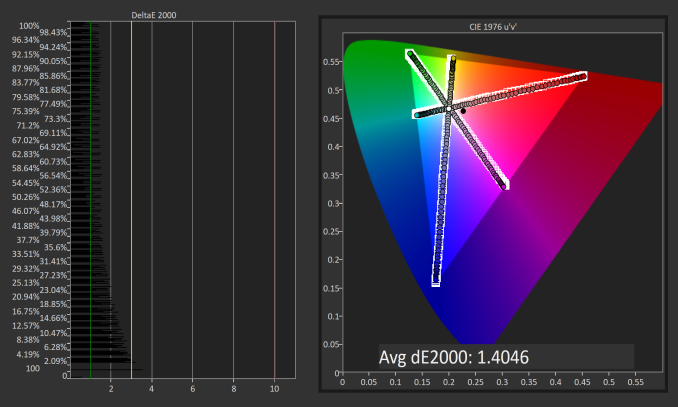

The saturation numbers are better, and in fact the dE is around 1.4 to 1.5, which is impressive for a gaming monitor.

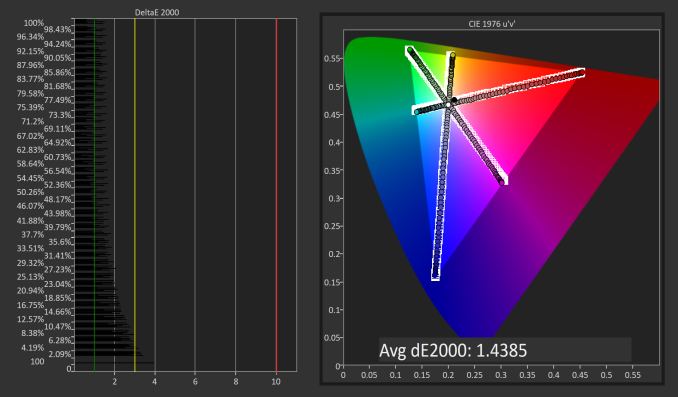

SpectraCal CalMAN sRGB color space saturation sweeps for PG27UQ, with out-of-the-box default 8bpc (top) and default with 10bpc (bottom)

Gretag Macbeth (GMB) and Color Comparator

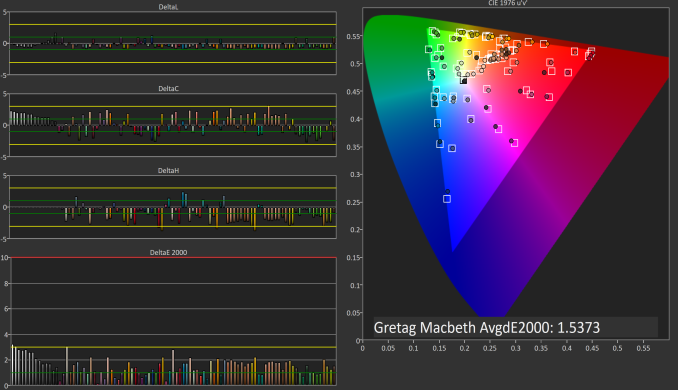

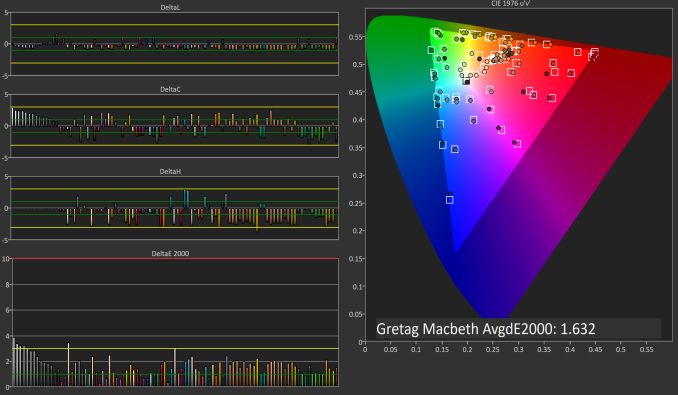

The last color accuracy test is the most thorough, and again the PG27UQ shines, with dE values of 1.53 and 1.63.

SpectraCal CalMAN sRGB color space GMB for PG27UQ, with out-of-the-box default 8bpc (top) and default with 10bpc (bottom)

Considering that this monitor was not designed for professional use, it's very well calibrated out-of-the-box for gamers, and there's no strong need for further calibration. If anything, users should just be sure to select 10bpc in the NVIDIA Control Panel, but even then most games use 8bpc anyhow.

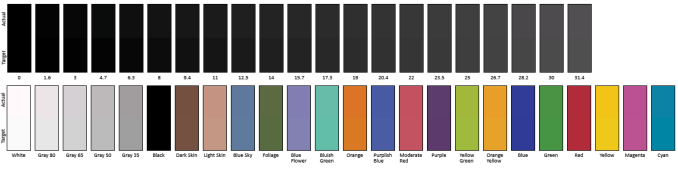

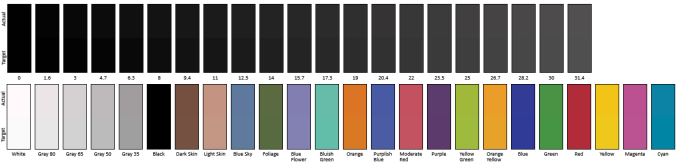

SpectraCal CalMAN sRGB relative color comparator graphs for PG27UQ, with out-of-the-box default 8bpc (top) and default with 10bpc (bottom). Each color column is split into halves; the top half is the PG27UQ's reproduction and the bottom half is the correct value

91 Comments

Ryan Smith - Wednesday, October 3, 2018 - link

Aye. The FALD array puts out plenty of heat, but it's distributed, so it can be dissipated over a large area. The FPGA for controlling G-Sync HDR generates much less heat, but it's concentrated. So passive cooling would seem to be non-viable here.

a5cent - Wednesday, October 3, 2018 - link

Yeah, nVidia's DP1.4 VRR solution is bafflingly poor/non-competitive, not just due to the requirement for active cooling.

nVidia's DP1.4 g-sync module is speculated to contribute a lot to the monitor's price (FPGA alone is estimated to be ~ $500). If true, I just don't see how g-sync isn't on a path towards extinction. That simply isn't a price premium over FreeSync that the consumer market will accept.

If g-sync isn't at least somewhat widespread and (via customer lock in) helping nVidia sell more g-sync enabled GPUs, then g-sync also isn't serving any role for nVidia. They might as well drop it and go with VESA's VRR standard.

So, although I'm actually thinking of shelling out $2000 for a monitor, I don't want to invest in technology it seems has priced itself out of the market and is bound to become irrelevant.

Maybe you could shed some light on where nVidia is going with their latest g-sync solution? At least for now it doesn't seem viable.

Impulses - Wednesday, October 3, 2018 - link

How would anyone outside of NV know where they're going with this tho? I imagine it does help sell more hardware to one extent or another (be it GPUs, FPGAs to display makers, or a combination of profits thru the side deals) AND they'll stay the course as long as AMD isn't competitive at the high end...

Just the sad reality. I just bought a G-Sync display but it wasn't one of these or even $1K, and it's still a nice display regardless of whether it has G-Sync or not. I don't intend to pay this kinda premium without a clear path forward either but I guess plenty of people are or both Acer and Asus wouldn't be selling this and plenty of other G-Sync displays with a premium over the Freesync ones.

a5cent - Wednesday, October 3, 2018 - link

"How would anyone outside of NV know where they're going with this tho?"

Anandtech could talk with their contacts at nVidia, discuss the situation with monitor OEMs, or take any one of a dozen other approaches. Anandtech does a lot of good market research and analysis. There is no reason they can't do that here too. If Anandtech confronted nVidia with the concern of DP1.4 g-sync being priced into irrelevancy, they would surely get some response.

"I don't intend to pay this kinda premium without a clear path forward either but I guess plenty of people are or both Acer and Asus wouldn't be selling this and plenty of other G-Sync displays with a premium over the Freesync ones."

You're mistakenly assuming the DP1.2 g-sync is in any way comparable to DP1.4 g-sync. It's not.

First, nobody sells plenty of g-sync monitors. The $200 price premium over FreeSync has made g-sync monitors (comparatively) low volume niche products. For DP1.4 that premium goes up to over $500. There is no way that will fly in a market where the entire product typically sells for less than $500. This is made worse by the fact that ONLY DP1.4 supports HDR. That means even a measly DisplayHDR 400 monitor, which will soon retail for around $400, will cost at least $900 if you want it with g-sync.

Almost nobody, for whom price is even a little bit of an issue, will pay that.

While DP1.2 g-sync monitors were niche products, DP1.4 g-sync monitors will be irrelevant products (in terms of market penetration). Acer's and Asus' $2000 monitors aren't and will not sell in significant numbers. Nothing using nVidia's DP1.4 g-sync module will.

To be clear, this isn't a rant about price. It's a rant about strategy. The whole point of g-sync is customer lock-in. Nobody, not even nVidia, earns anything selling g-sync hardware. For nVidia, the potential of g-sync is only realized when a person with a g-sync monitor upgrades to a new nVidia card who would otherwise have bought an AMD card. If DP1.4 g-sync isn't adopted in at least somewhat meaningful numbers, g-sync loses its purpose. That is when I'd expect nVidia to either trash g-sync and start supporting FreeSync, OR build a better g-sync module without the insanely expensive FPGA.

Neither of those two scenarios motivates me to buy a $2000 g-sync monitor today. That's the problem.

a5cent - Wednesday, October 3, 2018 - link

To clarify the above...

If I'm spending $2000 on a g-sync monitor today, I'd like some reassurance that g-sync will still be relevant and supported three years from now.

For the reasons mentioned, from where I stand, g-sync looks like "dead technology walking". With DP1.4 it's priced itself out of the market. I'm sure many would appreciate some background on where nVidia is going with this...

lilkwarrior - Monday, October 8, 2018 - link

Nvidia's solution is objectively better besides not being open. Similarly NVLINK is better than any other multi-GPU hardware wise.

With HDMI 2.1, Nvidia will likely support it unless it's simply underwhelming.

Once standards catch up, Nvidia hasn't been afraid to deprecate their own previous effort somewhat besides continuing to support it for wide-spread support / loyalty or a balanced approach (i.e. NVLINK for Geforce cards but delegate memory pooling to DX12 & Vulkan)

Impulses - Tuesday, October 2, 2018 - link

If NVidia started supporting standard adaptive sync at the same time that would be great... Pipe dream I know. Things like G-Sync vs Freesync, fans inside displays, and dubious HDR support don't inspire much confidence in these new displays. I'd gladly drop the two grand if I *knew* this was the way forward and would easily last me 5+ years, but I dunno if that would really pan out.

DanNeely - Tuesday, October 2, 2018 - link

Thank you for including the explanation on why DSC hasn't shown up in any products to date.

Heavenly71 - Tuesday, October 2, 2018 - link

I'm pretty disappointed that a gaming monitor with this price still has only 8 bits of native color resolution (plus FRC, I know).

Compare this to the ASUS PA32UC which – while not mainly targeted at gamers – has 10 bits, no fan noise, is 5 inches bigger (32" total) and many more inputs (including USB-C DP). For about the same price.

milkod2001 - Tuesday, October 2, 2018 - link

Wonder if they make native 10bit monitors. Would you be able to output 10bit colours from gaming GPU or only professional GPU?