The Asus ROG Swift PG27UQ G-SYNC HDR Monitor Review: Gaming With All The Bells and Whistles

by Nate Oh on October 2, 2018 10:00 AM EST

Posted in: Monitors, Displays, Asus, NVIDIA, G-Sync, PG27UQ, ROG Swift PG27UQ, G-Sync HDR

HDR Gaming Impressions

In the end, a monitor like the PG27UQ is really destined for one purpose: gaming. And not just any gaming, but the type of quality experience that does not compromise between resolution and refresh rate, let alone HDR and VRR.

That being said, not many games support HDR, which for G-Sync HDR means HDR10 support. Even for games that do support an HDR standard of some kind, the quality of the implementation naturally varies from developer to developer. And because of console HDR support, some games only feature HDR in their console incarnations.

The other issue is that the HDR gaming experience is hard to communicate objectively. In-game screenshots won't replicate how the HDR content is delivered on the monitor with its brightness, backlighting, and wider color gamut, while photographs are limited by the capturing device. And any HDR content will, of course, be limited by the viewer's display. On our side, this makes it all too easy to gush in general terms about glorious HDR vibrance and brightness, especially as on-the-fly blind A/B testing is not so simple (duplicated SDR and HDR output is not currently possible).

As for today, we are looking at Far Cry 5 (HDR10), F1 2017 (scRGB HDR), Battlefield 1 (HDR10), and Middle-earth: Shadow of War (HDR10), which covers a good mix of genres and graphics intensity. Thanks to in-game benchmarks for three of them, they also provide a static point of reference; in the same vein, Battlefield 1's presence in the GPU game suite means I've seen and benchmarked the same sequence enough times to dream about it.

For subjective-but-necessary impressions like these, we'll keep ourselves grounded by sticking to a few broad questions:

- What differences are noticeable from 4K with non-HDR G-Sync?

- What differences are noticeable from 4:4:4 to 4:2:2 chroma subsampling at 98Hz?

- What about lowering resolution to 1440p HDR or lowering details with HDR on, for higher refresh rates? Do I prefer HDR over high refresh rates?

- Are there any HDR artifacts? e.g. halo effects, washed out or garish colors, blooming due to local dimming

The 4K G-Sync HDR Experience

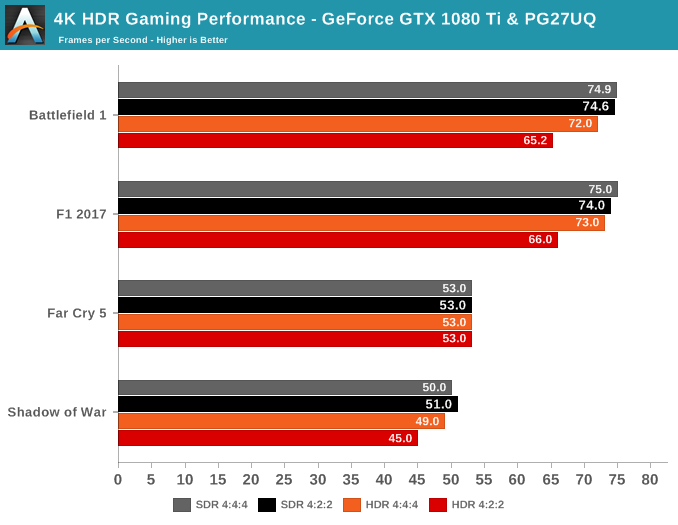

From the beginning, we expected that targeting 144fps at 4K was not really plausible for graphically intense games, and that still holds true. On a reference GeForce GTX 1080 Ti, none of the games averaged past 75fps, and even the brand-new RTX 2080 Ti won't come close to doubling that.

Ubiquitous black loading and intro screens make the local dimming bloom easily noticeable, though this is a commonly known phenomenon and somewhat unavoidable. The majority of the time, it is fairly unintrusive. Because the local backlighting zones can only get so small on LCD displays (in the case of this monitor, each zone is roughly 5.2 cm² in area), anything smaller than a zone will still light up the whole zone. For example, a logo or loading throbber on a black background will have a visible glow around it. The issue is not specific to the PG27UQ; its higher maximum brightness simply makes it a little more obvious. One of the answers to this is OLED, where subpixels are self-emitting and thus lighting can be controlled on an individual subpixel basis, but because of burn-in it's not suitable for PCs.

Loading throbbers for Shadow of War (left) and Far Cry 5 (right) with the FALD haloing effect
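The zone size quoted above can be sanity-checked with some quick arithmetic. The following is a minimal sketch, assuming a 27-inch 16:9 panel and a 384-zone backlight; the zone count comes from the monitor's published specifications rather than from this page, and the function names are purely illustrative.

```python
import math

def panel_dimensions_cm(diagonal_in, aspect_w=16, aspect_h=9):
    """Return (width, height) in cm for a flat panel of the given diagonal."""
    # Length of the diagonal measured in "aspect units" (16:9 -> sqrt(337)).
    diag_units = math.hypot(aspect_w, aspect_h)
    # Centimetres per aspect unit (1 inch = 2.54 cm).
    scale_cm = diagonal_in * 2.54 / diag_units
    return aspect_w * scale_cm, aspect_h * scale_cm

def zone_area_cm2(diagonal_in, zones):
    """Average area covered by one backlight zone, assuming equal-sized zones."""
    width_cm, height_cm = panel_dimensions_cm(diagonal_in)
    return width_cm * height_cm / zones

# Assumption: 384 zones, per the monitor's published spec (not stated on this page).
ZONES = 384
area = zone_area_cm2(27.0, ZONES)
print(f"Approximate area per zone: {area:.2f} cm^2")
```

Under those assumptions the result lands at roughly 5.2 cm² per zone, which is why a small throbber or logo ends up lighting an area far larger than itself.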

Much has been said about describing the sheer brightness range, but the closest analogy that comes to mind is dialing up smartphone brightness to maximum after a day of nursing a low battery on 10% brightness. It's still up to the game to take full advantage of it with HDR10 or scRGB. Some games will also offer to set gamma, maximum brightness, and/or reference white levels, thereby allowing you to adjust the HDR settings to the brightness capability of the HDR monitor.

The most immediate takeaway is the additional brightness and how fast it can ramp up. The former has a tendency to make things clearer and more colorful - the Hunt effect in play, essentially. The latter is very noticeable in transitions, such as sudden sunlight, looking up to the sky, and changes in lighting. Of course, the extra color vividness works hand-in-hand with the better contrast ratios, but again this can be game- and scene-dependent; Far Cry 5 seemed to fare the best in that respect, though Shadow of War, Battlefield 1, and F1 2017 still looked better than in SDR.

In-game, I couldn't perceive any quality differences going from 4:4:4 to 4:2:2 chroma subsampling, though the games couldn't reach past 98Hz at 4K anyway. So at 50 to 70fps averages, the result felt more like a 'cinematic' experience, because HDR made the scenes look more realistic and brighter while the gameplay was the typical pseudo 60fps VRR experience. With that in mind, it would probably be better for exploration-heavy games where you would 'stop-and-look' a lot - and unfortunately, we don't have Final Fantasy XV at the moment to try out. NVIDIA themselves say that increased luminance actually increases the perception of judder at low refresh rates, but luckily the presence of VRR mitigates judder in the first place.
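For context on why 98Hz is the cutoff between 4:4:4 and 4:2:2, a rough bandwidth estimate helps. This is only a sketch under stated assumptions: that the monitor is driven over a four-lane DisplayPort 1.4 (HBR3) link, that HDR uses 10 bits per channel, and that blanking overhead is ignored, so real-world limits are somewhat tighter than these numbers suggest. The helper names are illustrative only.

```python
# Assumption: DisplayPort 1.4 HBR3, 4 lanes at 8.1 Gbps each, 8b/10b line encoding.
HBR3_LANE_GBPS = 8.1
LANES = 4
ENCODING_EFFICIENCY = 8 / 10

# Average samples per pixel for each chroma subsampling mode.
SAMPLES_PER_PIXEL = {"4:4:4": 3.0, "4:2:2": 2.0, "4:2:0": 1.5}

def link_payload_gbps():
    """Usable link bandwidth after 8b/10b encoding overhead."""
    return HBR3_LANE_GBPS * LANES * ENCODING_EFFICIENCY

def required_gbps(width, height, refresh_hz, bits_per_channel, chroma):
    """Bandwidth for active pixels only (blanking intervals are ignored)."""
    bits_per_pixel = bits_per_channel * SAMPLES_PER_PIXEL[chroma]
    return width * height * refresh_hz * bits_per_pixel / 1e9

def fits(width, height, refresh_hz, bits_per_channel, chroma):
    """True if the mode fits within the link's usable bandwidth."""
    needed = required_gbps(width, height, refresh_hz, bits_per_channel, chroma)
    return needed <= link_payload_gbps()

if __name__ == "__main__":
    modes = [(98, "4:4:4"), (120, "4:4:4"), (144, "4:4:4"), (144, "4:2:2")]
    for hz, chroma in modes:
        need = required_gbps(3840, 2160, hz, 10, chroma)
        verdict = "fits" if fits(3840, 2160, hz, 10, chroma) else "exceeds link"
        print(f"4K {hz}Hz 10-bit {chroma}: {need:.2f} Gbps ({verdict})")
```

With these assumptions, 10-bit 4:4:4 at 98Hz squeezes under the link's usable bandwidth while 120Hz and 144Hz do not, and dropping to 4:2:2 brings 144Hz back within budget.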

What was interesting to observe was a performance impact with HDR enabled (and with G-Sync off) on the GeForce GTX 1080 Ti, which seems to corroborate last month's findings by ComputerBase.de. For the GTX 1080 Ti, Far Cry 5 was generally unaffected, but Battlefield 1 and F1 2017 took clear performance hits, appearing to stem from 4:2:2 chroma subsampling on HDR (YCbCr422). Shadow of War also seemed to fare worse. Our early results also indicate that even HDR with 4:4:4 chroma subsampling (RGB444) may result in a slight performance hit in affected games.

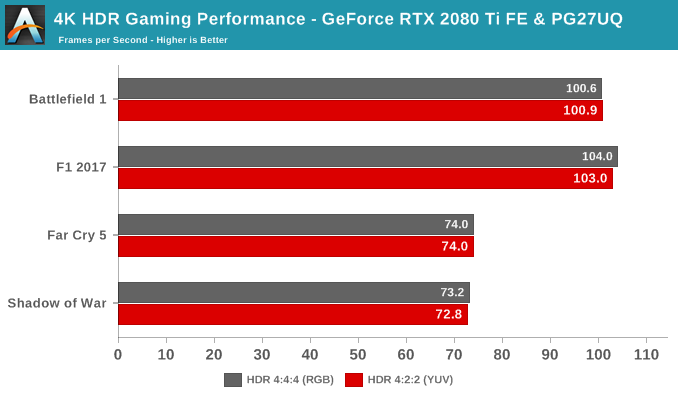

It's not clear what the root cause is, and we'll be digging deeper as we revisit the GeForce RTX 20-series. Taking a glance at the RTX 2080 Ti Founders Edition, the performance hit of 4:2:2 subsampling is reduced to negligible margins in these four games.

On Asus' side, the monitor does everything that is asked of it: it comfortably reaches 1000 nits, and as long as FALD backlighting is enabled, the IPS backlight bleed is pretty much non-existent. There was no observed ghosting or other artifacts of the like.

The other aspect of the HDR gaming 'playflow' is that enabling HDR can be slightly different per game and Alt-Tabbing is hit-or-miss - that is on Microsoft/Windows 10, not on Asus - but it's certainly much better than before. For example, Shadow of War had no in-game HDR toggle and relied on the Windows 10 toggle. And with G-Sync and HDR now in the mix, adjusting resolution and refresh rate (and for 144Hz, the monitor needs to be put in OC mode) to get the exact desired configuration can be a bit of a headache. In particular, lowering the in-game resolution to 1440p while keeping G-Sync HDR and 144Hz OC mode was very finicky.

At the end of the day, not all HDR is made equal, which goes for the game-world and scene construction in addition to HDR support. So although the PG27UQ is up to the task, you may not see the full range of color and brightness translated into a given game, depending on its HDR implementation. I would strongly recommend visiting a brick-and-mortar outlet that offers an HDR demo, or looking into the specific HDR content that you would want to play or watch.

91 Comments

lilkwarrior - Monday, October 8, 2018

OLED isn't covered by VESA HDR standards; it has far superior picture quality & contrast.

QLED cannot compete with OLED at all in such things. I would much rather get a Dolby Vision OLED monitor than an LED monitor with a HDR 1000 rating.

Lolimaster - Tuesday, October 2, 2018

You can't even call HDR with a pathetic low contrast IPS.

resiroth - Monday, October 8, 2018

Peak luminance levels are overblown because they’re easily quantifiable. In reality, if you’ve ever seen a recent LG TV which can hit about 900 nits peak that is too much. https://www.rtings.com/tv/reviews/lg/c8

It’s actually almost painful.

That said I agree oled is the way to go. I wasn’t impressed by any LCD (FALD or not) personally. It doesn’t matter how bright the display gets if it can’t highlight stars on a night sky etc. without significant blooming.

Even 1000 nits is too much for me. The idea of 4000 is absurd. Yes, sunlight is way brighter, but we don’t frequently change scenes from night time to day like television shows do. It’s extremely jarring. Unless you like the feeling of being woken up repeatedly in the middle of the night by a flood light. It’s a hard pass.

Hxx - Saturday, October 6, 2018

the only competition is Acer which costs the same. If you want Gsync you have to pony up otherwise yeah there are much cheaper alternatives.

Hixbot - Tuesday, October 2, 2018

Careful with this one, the "whistles" in the article title is referring to the built-in fan whine. Seriously, look at the newegg reviews.

JoeyJoJo123 - Tuesday, October 2, 2018

"because I know"

I wouldn't be so sure. Not for Gsync, at least. AU Optronics is the only panel producer for monitor sized displays that even gives a flip about pushing lots of high refresh rate options on the market. A 2560x1440 144hz monitor from 3 years ago still costs just as much today (if not more, due to upcoming China-to-US import tariffs, starting with 10% on October 1st 2018, and another 15% (total 25%) on January 1st 2019).

High refresh rate GSync isn't set to come down anytime soon, not as long as Nvidia has a stranglehold on GPU market and not as long as AU Optronics is the only panel manufacturer that cares about high refresh rate PC monitor displays.

lilkwarrior - Monday, October 8, 2018

Japan Display plans to change that in 2019. IIRC Asus is planning to use their displays for a portable Professional OLED monitor.

I would not be surprised if they or LG created OLED gaming monitors from Japan Display panels; that's a win-win for gamers, Japan Display, & monitor manufacturers in 2020.

Alternatively they surprise us with MLED monitors that Japan Display also invested in + Samsung & LG.

That's way better to me than any Nano-IPS/QLED monitor. They simply cannot compete.

Impulses - Tuesday, October 2, 2018

I would GLADLY pay the premium over the $600-1,000 alternatives IF I thought I was really going to take advantage of what the display offers in the next 2 or even 4 years... But that's the issue. I'm trying to move away from SLI/CF (2x R9 290 atm, about to purchase some sort of 2080), not force myself back into it.

You're gonna need SLI RTX 2080s (Ti or not) to really eke out frame rates fast enough for the refresh rate to matter at 4K, chances are it'll be the same with the next gen of cards unless AMD pulls a rabbit out of a hat and quickly gets a lot more competitive. That's 2-3 years easy where SLI would be a requirement.

HDR support seems to be just as much of a mess... I'll probably just end up with a 32" 4K display (because I'm yearning for something larger than my single 16:10 24" and that approaches the 3x 24" setup I've used at times)... But if I wanted to try a fast refresh rate display I'd just plop down a 27" 1440p 165Hz next to it.

Nate's conclusion is exactly the mental calculus I've been doing, those two displays are still less money than one of these and probably more useful in the long run as secondary displays or hand me down options... As awesome as these G-Sync HDR displays may be, the vendor lock in around G-Sync and active cooling makes em seem like poor investments.

Good displays should last 5+ years easy IMO, I'm not sure these would still be the best solution in 3 years.

Icehawk - Wednesday, October 3, 2018

Grab yourself an inexpensive 32" 4k display, decent ones are ~$400 these days. I have an LG and it's great all around (I'm a gamer btw), it's not quite high end but it's not a low end display either - it compares pretty favorably to my Dell 27" 2k monitor. I just couldn't see bothering with HDR or any of that other $$$ BS at this point, plus I'm not particularly bothered by screen tearing and I don't demand 100+ FPS from games. Not sure why people are all in a tizzy about super high FPS, as long as the game runs smoothly I am happy.

WasHopingForAnHonestReview - Saturday, October 6, 2018

You don't belong here, plebeian.